In systematic trading, the speed and reliability with which a team can iterate on strategies are paramount. Manually managing data, running backtests, and deploying code quickly becomes a bottleneck, introducing errors and slowing the research-to-production cycle. Designing an efficient automation pipeline for systematic trading strategy development is not just about convenience; it’s a critical component of maintaining a competitive edge. It involves integrating data infrastructure, research tools, deployment mechanisms, and monitoring so that they work together across the entire lifecycle of an algorithmic trading strategy. A well-engineered pipeline reduces operational overhead, improves consistency, and ultimately lets quantitative teams focus on generating alpha rather than managing infrastructure.

Defining the Automated Pipeline for Strategy Development

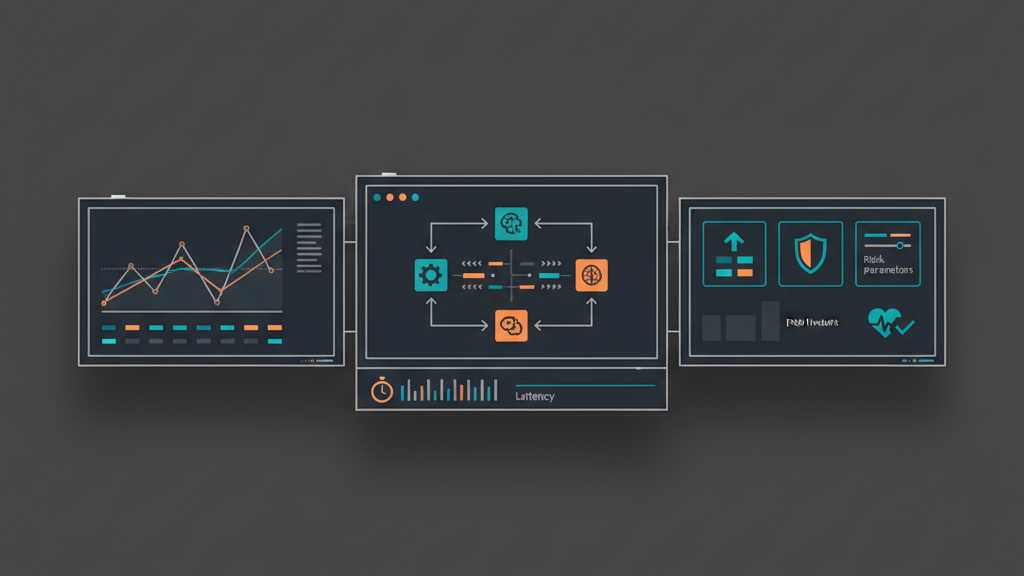

An effective automation pipeline for systematic trading strategy development is the backbone of a quantitative trading operation. It covers the entire journey of a strategy, from initial concept validation through live deployment and continuous monitoring. The primary goal is to minimize manual intervention at every stage, thereby reducing errors, accelerating the development cycle, and ensuring repeatability. This isn’t just about scripting a few tasks; it’s about building an integrated system where data flows reliably between stages, strategies are rigorously tested, and deployments are executed with confidence. A well-designed pipeline treats strategies as software products, subject to version control, automated testing, and continuous integration/continuous deployment (CI/CD) principles. Ignoring this architectural discipline often leads to fragile systems, significant operational risk, and missed trading opportunities due to slow iteration.

Robust Data Infrastructure: The Bedrock of Automation

The foundation of any systematic trading pipeline is a robust and reliable data infrastructure. Without high-quality, normalized, and easily accessible data, the results of even the most sophisticated strategy cannot be trusted. This involves not only ingesting large volumes of historical tick and fundamental data but also maintaining real-time data feeds with minimal latency. Data integrity checks are non-negotiable; corrupted or missing data can lead to misleading backtest results and costly live trading errors. Storage must be fast enough to handle the frequent queries issued by backtesting and analysis, which often means specialized databases like kdb+ or optimized file formats like HDF5 on distributed storage. Cleaning, normalizing, and time-aligning data from disparate sources (e.g., different exchanges and vendors) is complex but critical for consistent strategy performance.

- Automated ingestion and storage of historical and real-time market data.

- Rigorous data quality checks, including gap detection, outlier identification, and checksum validation.

- Normalization and time-alignment across various data sources to ensure consistency.

- High-performance data retrieval mechanisms for efficient backtesting and live processing.

- Robust data warehousing solutions, considering scalability, redundancy, and access latency.
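
The data quality checks listed above can be sketched with the Python standard library alone. This is a minimal illustration, not a production validator: the function names (`find_gaps`, `find_outliers`, `verify_checksum`), the median-absolute-deviation outlier rule, and the default threshold are all assumptions made for the example.

```python
import hashlib
from datetime import datetime, timedelta
from statistics import median


def find_gaps(timestamps, expected_interval, tolerance=timedelta(0)):
    """Return (previous, current) pairs whose spacing exceeds the expected bar interval."""
    gaps = []
    for prev, cur in zip(timestamps, timestamps[1:]):
        if cur - prev > expected_interval + tolerance:
            gaps.append((prev, cur))
    return gaps


def find_outliers(prices, threshold=5.0):
    """Return indices of prices further than `threshold` MADs from the median.

    The MAD rule and the threshold are illustrative choices, not a standard.
    """
    med = median(prices)
    mad = median(abs(p - med) for p in prices)
    if mad == 0:
        return []  # no dispersion, so nothing can be flagged by this rule
    return [i for i, p in enumerate(prices) if abs(p - med) / mad > threshold]


def sha256_of(payload: bytes) -> str:
    """Hex digest used to detect corruption of a stored or transferred file."""
    return hashlib.sha256(payload).hexdigest()


def verify_checksum(payload: bytes, expected_hex: str) -> bool:
    """Compare a payload against the checksum recorded at ingestion time."""
    return sha256_of(payload) == expected_hex


if __name__ == "__main__":
    start = datetime(2024, 1, 2, 9, 30)
    bars = [start + timedelta(minutes=m) for m in (0, 1, 2, 5, 6)]
    print(find_gaps(bars, timedelta(minutes=1)))  # one gap, between minute 2 and minute 5
    print(find_outliers([100.0, 100.5, 99.5, 100.2, 250.0, 99.8]))  # flags index 4
```

In a real pipeline these checks would typically run as part of ingestion, with failures blocking the dataset from being published to backtests.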

Accelerating Strategy Backtesting and Optimization

Once the data infrastructure is in place, the next step is to automate the strategy research and backtesting workflow. Manual backtesting is slow, prone to setup errors, and makes rigorous optimization nearly impossible. An automated backtesting framework allows quants to iterate quickly on strategy ideas, run large-scale parameter optimizations, and evaluate performance across various market conditions without constant manual oversight. This involves versioning strategy code (e.g., with Git), triggering backtests automatically on code commits, and standardizing the calculation of performance metrics (e.g., Sharpe ratio, maximum drawdown, Calmar ratio). Crucially, the backtesting engine must model real-world constraints such as slippage, transaction costs, order book depth, and latency, rather than relying on idealized assumptions. Failing to account for these can produce strategies that perform exceptionally in backtests but fail in live trading.
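
Standardizing metrics means every backtest calls the same functions. The sketch below computes the three metrics named above from a series of periodic returns, plus a simple proportional cost adjustment. It assumes daily returns and a 252-day annualization factor, and the linear cost model (`cost_bps` applied to turnover) is a deliberately crude stand-in for a real slippage model.

```python
import math
from statistics import mean, stdev

TRADING_DAYS = 252  # assumed annualization factor for daily returns


def net_returns(gross_returns, turnover, cost_bps):
    """Subtract a proportional cost (in basis points of traded notional) from each return."""
    return [g - t * cost_bps / 10_000 for g, t in zip(gross_returns, turnover)]


def sharpe_ratio(returns, risk_free_daily=0.0):
    """Annualized Sharpe ratio; needs at least two observations."""
    excess = [r - risk_free_daily for r in returns]
    sd = stdev(excess)
    if sd == 0:
        return float("nan")  # undefined when returns have no dispersion
    return mean(excess) / sd * math.sqrt(TRADING_DAYS)


def max_drawdown(returns):
    """Largest peak-to-trough decline of the compounded equity curve, as a fraction."""
    equity = peak = 1.0
    worst = 0.0
    for r in returns:
        equity *= 1.0 + r
        peak = max(peak, equity)
        worst = max(worst, (peak - equity) / peak)
    return worst


def calmar_ratio(returns):
    """Annualized compound return divided by maximum drawdown."""
    total = math.prod(1.0 + r for r in returns)
    annualized = total ** (TRADING_DAYS / len(returns)) - 1.0
    mdd = max_drawdown(returns)
    return annualized / mdd if mdd > 0 else float("inf")
```

For example, `max_drawdown([0.10, -0.50, 0.20])` is approximately 0.5, because the equity curve peaks at 1.10 and falls to 0.55 before recovering. Running the metrics on `net_returns(...)` rather than gross returns is one way to keep cost assumptions explicit in every report.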

Pre-Live Validation and Simulation Environment

Before deploying any strategy to a live exchange, a comprehensive pre-live validation and simulation phase is essential. This stage bridges the gap between theoretical backtest performance and actual live execution. It involves running strategies in a controlled environment that mirrors production as closely as possible, using real-time market data but without placing actual orders. This could be a paper trading account, a synthetic exchange simulator, or a shadow trading system that logs potential orders without executing them. The goal is to stress-test the entire stack: data feed reliability, strategy logic under live conditions, order management system (OMS) integration, and network latency. Observing how the strategy interacts with real-time market dynamics also surfaces unforeseen issues, such as API rate limits, message parsing errors, or unexpected market microstructure effects. This step is critical for catching edge cases that backtests miss because of their historical nature or simplified execution models.

- Running strategies against live market data in a paper trading or synthetic environment.

- Simulating realistic execution latency, market impact, and order fill scenarios.

- Validating integration with broker APIs and order management systems.

- Comparing multiple strategy variations side by side (A/B testing), complemented by walk-forward analysis on out-of-sample data.

- Monitoring system stability and performance under sustained live data loads.
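
A shadow trading setup of the kind described above can be as simple as swapping the live order gateway for an object with the same interface that only records orders. The sketch below is illustrative: `Order`, `ShadowBroker`, and `would_fill` are hypothetical names, and the fill rule (a limit order fills only if it is marketable against the current quote) is a crude assumption that ignores queue position, size, and latency.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class Order:
    symbol: str
    side: str  # "BUY" or "SELL"
    quantity: int
    limit_price: float


@dataclass
class ShadowBroker:
    """Records orders instead of routing them to a venue."""

    recorded: List[Order] = field(default_factory=list)

    def submit(self, order: Order) -> str:
        # Nothing leaves the process; the order is only logged for later analysis.
        self.recorded.append(order)
        return f"shadow-{len(self.recorded)}"


def would_fill(order: Order, best_bid: float, best_ask: float) -> bool:
    """Crude fill model: only marketable limit orders are treated as filled."""
    if order.side == "BUY":
        return order.limit_price >= best_ask
    return order.limit_price <= best_bid


if __name__ == "__main__":
    broker = ShadowBroker()
    order = Order("XYZ", "BUY", 100, 10.05)
    print(broker.submit(order))            # shadow-1
    print(would_fill(order, 10.00, 10.04))  # True: the buy limit crosses the ask
```

Because the strategy code calls `submit` either way, the same build can be promoted from shadow mode to live trading by changing only which broker object is injected.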

Automated Deployment, Execution, and Monitoring

The culmination of the pipeline is the automated deployment and execution system, which turns a validated strategy into an algorithm trading on a live exchange. Applying CI/CD practices to strategy deployment means that new versions can be pushed to production quickly and reliably, often using blue/green deployments or canary releases to limit risk. The execution component requires robust OMS integration, sophisticated order routing logic, and comprehensive error handling for API failures, network outages, and partial fills. Beyond deployment, continuous monitoring is non-negotiable. Real-time metrics on strategy performance (P&L, exposure, order flow), system health (CPU, memory, network), and connectivity status are collected and visualized in dashboards. Automated alerting notifies operators immediately of deviations from expected behavior, potential infrastructure failures, or unexpected trading activity, enabling a rapid response to limit losses or address operational issues.
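
The alerting described above usually reduces to evaluating a set of rules against the latest metrics snapshot. The following is a minimal sketch under stated assumptions: the metric names (`daily_pnl`, `cpu_pct`, `heartbeat_age_s`), the thresholds, and the `AlertRule` structure are all hypothetical, and a real system would send alerts to a paging or chat service rather than return strings.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class AlertRule:
    name: str
    metric: str
    breached: Callable[[float], bool]  # returns True when the value should alert


def evaluate(rules: List[AlertRule], snapshot: Dict[str, float]) -> List[str]:
    """Return one message per breached rule; a missing metric also raises an alert."""
    alerts = []
    for rule in rules:
        value = snapshot.get(rule.metric)
        if value is None:
            alerts.append(f"{rule.name}: metric '{rule.metric}' missing")
        elif rule.breached(value):
            alerts.append(f"{rule.name}: {rule.metric}={value}")
    return alerts


# Illustrative thresholds only.
RULES = [
    AlertRule("daily_loss", "daily_pnl", lambda v: v < -10_000),
    AlertRule("cpu_high", "cpu_pct", lambda v: v > 90),
    AlertRule("feed_stale", "heartbeat_age_s", lambda v: v > 5),
]

if __name__ == "__main__":
    for message in evaluate(RULES, {"daily_pnl": -12_000.0, "cpu_pct": 40.0}):
        print(message)
```

Treating a missing metric as an alert is a deliberate choice here: a silent monitoring gap is often the first symptom of a connectivity or process failure.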

Integrated Risk Management and Post-Trade Analytics

An automated trading pipeline is incomplete and dangerous without integrated, real-time risk management. This isn’t just a separate module; it must be deeply embedded within the execution engine and monitoring systems. Pre-trade risk checks ensure that orders conform to predefined limits (e.g., max position size, max daily loss, fat-finger checks). In-trade risk controls include dynamic stop-losses, circuit breakers to halt trading under extreme volatility, and exposure limits across various instruments or strategies. Furthermore, an efficient pipeline must include robust post-trade analytics for continuous improvement. This involves detailed transaction cost analysis (TCA) to quantify slippage and impact, profit and loss (P&L) attribution to understand performance drivers, and in-depth analysis of fills and rejections. These insights feed back into the development cycle, informing strategy adjustments, optimization of execution algorithms, and refinement of risk parameters, ensuring the system continually learns and adapts to market conditions and operational realities.

- Implementing pre-trade risk checks: max position size, daily loss limits, order value limits.

- Real-time monitoring of strategy P&L, exposure, and drawdown against predefined thresholds.

- Automated circuit breakers and kill switches for emergency shutdown of strategies or entire systems.

- Post-trade analysis for slippage, market impact, and transaction cost attribution.

- Continuous feedback loop from risk and performance analytics to strategy development and optimization.
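
The pre-trade checks in the first bullet can be expressed as a pure function that the execution engine calls before every order. This is a sketch, not a complete risk engine: `RiskLimits`, `check_order`, and the limit values are hypothetical, and the daily loss check doubles as a simple kill switch by rejecting all orders once the limit is reached.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RiskLimits:
    max_position: int       # absolute position limit, in units
    max_order_value: float  # notional cap per order (fat-finger guard)
    max_daily_loss: float   # positive number; trading halts once the loss reaches it


def check_order(
    limits: RiskLimits,
    current_position: int,
    signed_quantity: int,  # positive to buy, negative to sell
    price: float,
    daily_pnl: float,
) -> List[str]:
    """Return the list of violated limits; an empty list means the order may be sent."""
    violations = []
    if daily_pnl <= -limits.max_daily_loss:
        violations.append("daily loss limit breached: trading halted")
    if abs(current_position + signed_quantity) > limits.max_position:
        violations.append("position limit exceeded")
    if abs(signed_quantity) * price > limits.max_order_value:
        violations.append("order value limit exceeded")
    return violations


if __name__ == "__main__":
    limits = RiskLimits(max_position=1_000, max_order_value=50_000.0, max_daily_loss=10_000.0)
    print(check_order(limits, 900, 50, 20.0, -500.0))   # []
    print(check_order(limits, 900, 200, 20.0, -500.0))  # ['position limit exceeded']
```

Returning every violation rather than stopping at the first makes rejections easier to audit in post-trade analysis.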