Low-latency execution across multiple venues is a core capability for quantitative trading firms in today’s fragmented markets. Fast connections alone are not enough: hardware, software, and network protocols all have to be tuned together to remove microseconds between order placement and execution acknowledgment. The goal is to route each order to the best venue, weighing price, liquidity, and fees, with minimal delay, which improves fill rates and reduces slippage. That requires a holistic approach, from physical infrastructure down to the details of the order management system and smart order router logic. A misstep at any stage, from network configuration to data normalization, can add enough latency to undermine even a sophisticated trading strategy. This deep dive focuses on practical architectural choices and operational considerations for building robust, high-performance systems that deliver consistently competitive execution.

The Latency Landscape: Identifying Bottlenecks in Multi-Venue Execution

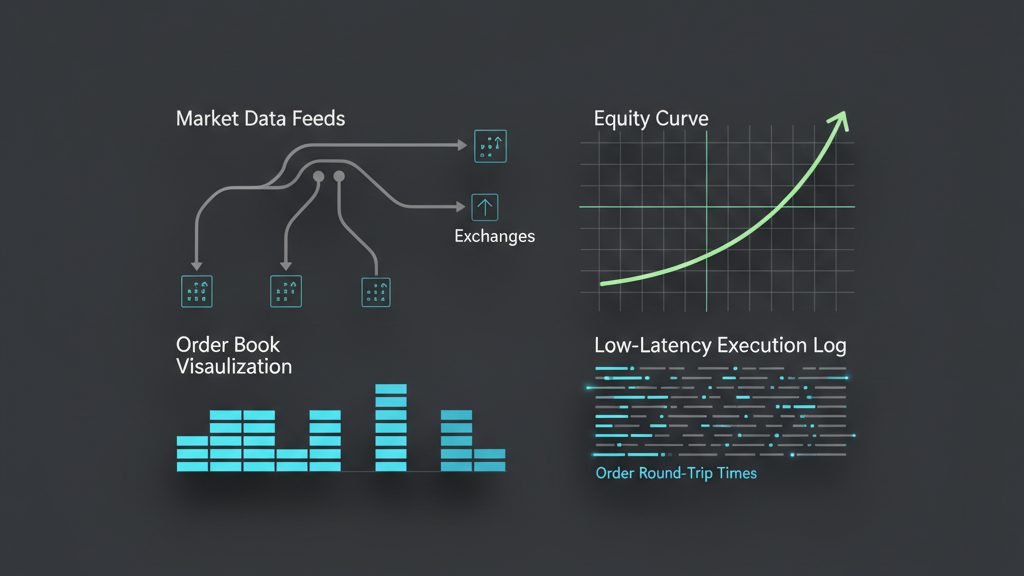

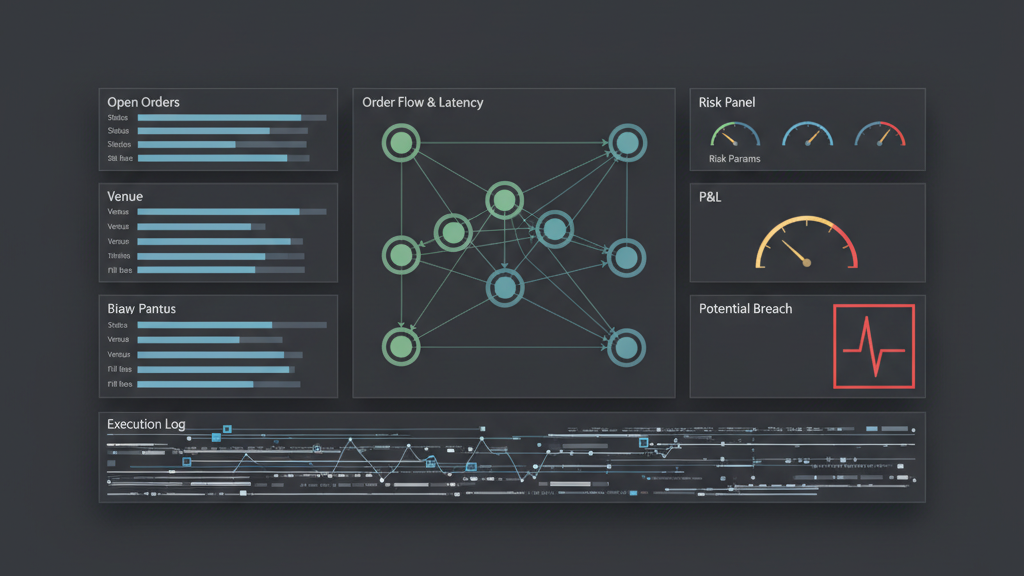

Understanding where latency manifests is the critical first step in optimizing a low latency execution workflow design for multi-venue order routing. In a typical setup, delays accumulate from several sources: the market data feed itself, the trading strategy’s decision-making process, the order management system’s internal processing, network transmission to the exchange, and the exchange’s internal matching engine. When routing orders across multiple venues, this complexity multiplies. Each exchange or dark pool introduces its own unique API, messaging protocol, and network path. Furthermore, market data normalization and aggregation across these disparate sources can introduce synthetic latency if not handled efficiently. The cumulative effect of these micro-delays can be substantial, leading to missed opportunities or adverse fills. Real-world scenarios often reveal unexpected bottlenecks, such as slow serialization/deserialization of messages, inefficient memory access patterns, or contention for critical resources within the application stack. Pinpointing these areas requires detailed profiling and systematic instrumentation of the entire order path, from strategy signal generation to exchange acknowledgment.
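As a rough illustration of instrumenting the order path, the sketch below records one monotonic timestamp per checkpoint and reports the time spent between consecutive checkpoints. The stage names and the `OrderTrace` class are hypothetical, chosen only for this example. Python is used for readability; a production system would take these timestamps in its own low-level code or in hardware.

```python
import time
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical checkpoints along the order path; a real system defines its own.
STAGES = ("md_received", "signal_generated", "order_routed", "order_sent", "ack_received")


@dataclass
class OrderTrace:
    """Collects one monotonic timestamp (in ns) per checkpoint for a single order."""
    cl_ord_id: str
    stamps: dict = field(default_factory=dict)

    def mark(self, stage: str, now_ns: Optional[int] = None) -> None:
        """Record a timestamp for a stage; now_ns can be injected for replay or tests."""
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        self.stamps[stage] = time.perf_counter_ns() if now_ns is None else now_ns

    def stage_latencies(self) -> dict:
        """Elapsed ns between each pair of consecutive recorded stages."""
        recorded = [s for s in STAGES if s in self.stamps]
        return {
            f"{a}->{b}": self.stamps[b] - self.stamps[a]
            for a, b in zip(recorded, recorded[1:])
        }


trace = OrderTrace("ORD-1")
trace.mark("md_received", 1_000)
trace.mark("signal_generated", 3_500)
trace.mark("order_sent", 9_000)
latencies = trace.stage_latencies()
```

Because `perf_counter_ns` is a per-host monotonic clock, these deltas are only meaningful between checkpoints taken on the same machine. Comparing timestamps across hosts depends on clock synchronization.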

Architecting for Speed: Co-location and Network Fabric

Low-latency multi-venue execution starts at the physical layer: co-location and network infrastructure. Placing your trading servers as close as possible to the exchange’s matching engines, ideally within the same data center, drastically reduces network transit times. This arrangement, usually called co-location or ‘co-lo’ (‘proximity hosting’ generally refers to a nearby facility rather than the exchange’s own), is non-negotiable for high-frequency strategies. Beyond physical location, the choice of network hardware matters: high-end switches and network interface cards (NICs) that support kernel bypass, such as Solarflare’s OpenOnload or Mellanox’s VMA, can shave off crucial microseconds. The network fabric should also minimize hops and eliminate unnecessary packet processing. Dedicated, unshared fiber links to exchange gateways offer the lowest and most predictable latency. Even within the co-location facility, internal routing has to be tuned continually to prevent congestion and unnecessary data replication. It’s a perpetual arms race in which even small changes to cable length or switch port configuration can yield a competitive edge.

- Utilize co-location services for critical execution infrastructure directly adjacent to target exchange matching engines.

- Deploy high-performance network interface cards (NICs) with kernel bypass capabilities to reduce OS-level latency.

- Design a flat network topology with minimal hops and dedicated direct market access (DMA) lines to exchanges.

- Implement PTP (Precision Time Protocol) for clock synchronization across all trading servers and network devices; NTP alone is usually too coarse for microsecond-level latency measurement.
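To make the clock synchronization point concrete, here is a minimal sketch of the standard PTP delay request-response arithmetic: four timestamps yield the slave clock’s offset from the master and the mean one-way path delay. The function name is made up for this example, and the calculation assumes a symmetric network path, which is also the assumption PTP itself makes.

```python
def ptp_offset_and_delay(t1: int, t2: int, t3: int, t4: int) -> tuple:
    """
    Compute (offset_from_master, mean_path_delay) from one PTP exchange.

    t1: master sends Sync           (master clock)
    t2: slave receives Sync         (slave clock)
    t3: slave sends Delay_Req       (slave clock)
    t4: master receives Delay_Req   (master clock)

    Assumes the forward and reverse path delays are equal.
    """
    master_to_slave = t2 - t1   # true delay + offset
    slave_to_master = t4 - t3   # true delay - offset
    offset = (master_to_slave - slave_to_master) / 2
    delay = (master_to_slave + slave_to_master) / 2
    return offset, delay


# Example in nanoseconds: slave clock runs 150 ns ahead, one-way delay is 400 ns.
offset_ns, delay_ns = ptp_offset_and_delay(t1=1000, t2=1550, t3=2000, t4=2250)
```

Any asymmetry between the two directions shows up directly as offset error, which is one reason hardware timestamping and PTP-aware switches matter in practice.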

Smart Order Routing (SOR) Logic and Implementation Challenges

The heart of any effective low latency execution workflow design for multi-venue order routing is the Smart Order Router (SOR). This component is responsible for intelligently deciding where to send an order based on a multitude of factors in real-time. These factors typically include current best bid/offer (BBO) across all accessible venues, available liquidity at those prices, exchange fees (taker/maker), and internal strategy-specific constraints such as maximum fill size per venue. The core challenge is making these decisions with minimal latency, often within single-digit microseconds, while ensuring correctness and resilience. Building an SOR requires robust market data aggregation that normalizes disparate data feeds and presents a unified view of the market. The routing algorithm itself must be highly optimized, often implemented in low-level languages like C++ to avoid garbage collection pauses or excessive CPU cycles. Furthermore, dynamic routing decisions based on prevailing market conditions, like high volatility or thin order books on a particular venue, demand adaptive logic. Incorrect SOR logic can lead to ‘venue hopping’ (sending orders to multiple venues without proper aggregation or state management) or ‘information leakage’ to the market, both detrimental to execution quality.
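The routing decision described above can be sketched as a greedy split of a marketable buy order: rank venues by fee-adjusted ask price, then take displayed liquidity from each, subject to a per-venue cap. The `Quote` type, `route_buy` function, and the integer price units are assumptions made for illustration. A real SOR would also account for hidden liquidity, queue position, venue latency, and maker rebates, and would not be written in Python.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Quote:
    venue: str
    ask_px: int      # best offer, in integer price units (here 1/10000 of a dollar)
    ask_qty: int     # displayed size at the best offer
    taker_fee: int   # taker fee per share, in the same price units


def route_buy(quotes, qty, max_per_venue):
    """
    Greedy split of a buy order across venues by fee-adjusted price.
    Returns (plan, unrouted_qty) where plan is a list of (venue, price, qty).
    """
    plan = []
    remaining = qty
    # Cheapest all-in cost first; venue name breaks ties deterministically.
    for q in sorted(quotes, key=lambda q: (q.ask_px + q.taker_fee, q.venue)):
        if remaining <= 0:
            break
        take = min(remaining, q.ask_qty, max_per_venue)
        if take > 0:
            plan.append((q.venue, q.ask_px, take))
            remaining -= take
    return plan, remaining


quotes = [
    Quote("A", ask_px=1_000_100, ask_qty=300, taker_fee=30),
    Quote("B", ask_px=1_000_100, ask_qty=500, taker_fee=10),
    Quote("C", ask_px=1_000_000, ask_qty=200, taker_fee=30),
]
plan, unrouted = route_buy(quotes, qty=800, max_per_venue=400)
```

Integer price units avoid floating-point comparison surprises when ranking venues whose all-in costs differ by a fraction of a tick.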

Concurrency, State Management, and Idempotency in Multi-Venue Systems

Managing concurrent order flow and maintaining accurate state across multiple, asynchronously responding venues is a significant hurdle in low latency execution workflow design. Each order sent generates a lifecycle of states: submitted, pending, partially filled, fully filled, canceled, or rejected. When orders are fragmented across venues, tracking the aggregate state, total filled quantity, and remaining open quantity becomes complex. A common architectural pattern is to centralize order state management within a single, high-performance in-memory database or key-value store. This ensures a consistent view of all active orders and positions, crucial for risk checks and subsequent trading decisions. Idempotency is another critical concept; orders or cancellations sent multiple times due to network retries or application failures should not result in duplicate actions on the exchange. Implementing unique client order IDs (ClOrdIDs) and ensuring the exchange correctly handles duplicate submissions is vital. Furthermore, careful design of thread pools, lock-free data structures, and message queues is necessary to handle the high throughput of market data and order acknowledgments without introducing contention or blocking operations that would degrade performance.

- Centralize order state management in a high-performance, in-memory system to maintain a unified view across venues.

- Implement unique, immutable client order IDs (ClOrdIDs) for all order submissions to ensure idempotency at the exchange.

- Utilize lock-free data structures and non-blocking I/O patterns to maximize concurrency and minimize thread contention.

- Design robust message queues and asynchronous processing pipelines to handle high-volume market data and execution reports.
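A minimal sketch of the centralized state idea follows: one parent order tracks its child orders by ClOrdID and applies each fill exactly once, so a replayed execution report does not double-count. The `ParentOrder` class is hypothetical. Keying duplicates on the venue plus the execution ID is an assumption here, on the basis that execution IDs are typically unique only within a venue.

```python
from dataclasses import dataclass, field


@dataclass
class ParentOrder:
    """Aggregate state for one parent order split into child orders across venues."""
    total_qty: int
    filled_qty: int = 0
    children: dict = field(default_factory=dict)       # ClOrdID -> venue
    seen_exec_ids: set = field(default_factory=set)    # (venue, exec_id) already applied

    def add_child(self, cl_ord_id: str, venue: str) -> None:
        """Register a child order; ClOrdIDs must never be reused."""
        if cl_ord_id in self.children:
            raise ValueError(f"duplicate ClOrdID: {cl_ord_id}")
        self.children[cl_ord_id] = venue

    def on_fill(self, cl_ord_id: str, exec_id: str, qty: int) -> bool:
        """Apply a fill once. Returns False for unknown orders or replayed reports."""
        venue = self.children.get(cl_ord_id)
        if venue is None:
            return False
        key = (venue, exec_id)
        if key in self.seen_exec_ids:
            return False
        self.seen_exec_ids.add(key)
        self.filled_qty += qty
        return True

    @property
    def open_qty(self) -> int:
        return self.total_qty - self.filled_qty


parent = ParentOrder(total_qty=1000)
parent.add_child("C1", "VENUE_A")
parent.add_child("C2", "VENUE_B")
first = parent.on_fill("C1", "E1", 300)
replayed = parent.on_fill("C1", "E1", 300)   # same report delivered twice
other = parent.on_fill("C2", "E1", 200)      # same exec ID, different venue
```

This version is single-threaded. In a concurrent system the same logic would usually live behind a single-writer queue or a lock-free structure rather than being guarded by a mutex on the hot path.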

Mitigating Execution Risk: Slippage, Partial Fills, and API Failures

Even with an optimized low latency execution workflow design for multi-venue order routing, inherent risks remain. Slippage, where the execution price deviates from the expected price, is a constant threat, especially in volatile markets or when trading large block sizes. Implementing intelligent order types, such as pegged orders or dark liquidity sweeps, can help. Partial fills are also common, particularly when routing large orders to venues with limited depth. The system must be capable of tracking these partial fills and either re-routing the remaining quantity or adapting the strategy. Perhaps the most challenging risk involves API failures, exchange outages, or network disruptions. A resilient system must include comprehensive error handling, automated retry mechanisms with back-off strategies, and graceful degradation or failover to alternative venues. Circuit breakers, dynamic throttling, and real-time monitoring of API health are essential. Without these safeguards, a single point of failure can lead to significant unmanaged risk exposure or substantial losses due to orders being ‘stuck’ or not filled as expected. This also extends to post-trade reconciliation; matching internal fills against broker statements to catch any discrepancies is a non-trivial process that requires robust data logging and comparison tools.
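One way to picture the circuit breaker and failover behavior mentioned above is the sketch below. Each venue gets a breaker that opens after a configurable number of consecutive failures and allows a probe request once a cool-down has elapsed. The class, the thresholds, and the `pick_venue` helper are illustrative assumptions, not a reference design.

```python
import time


class CircuitBreaker:
    """Blocks traffic to a venue after repeated failures, then probes after a cool-down."""

    def __init__(self, max_failures=3, cooldown_s=5.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.cooldown_s = cooldown_s
        self.clock = clock          # injectable so tests can control time
        self.failures = 0
        self.opened_at = None       # None means the breaker is closed

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        # Half-open: once the cool-down has passed, let a probe request through.
        return self.clock() - self.opened_at >= self.cooldown_s

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()   # open, or re-open after a failed probe


def pick_venue(preferred, breakers):
    """First venue in preference order whose breaker allows traffic, else None."""
    for venue in preferred:
        if breakers[venue].allow():
            return venue
    return None


now = [0.0]
fake_clock = lambda: now[0]
breakers = {
    "A": CircuitBreaker(max_failures=2, cooldown_s=5.0, clock=fake_clock),
    "B": CircuitBreaker(max_failures=2, cooldown_s=5.0, clock=fake_clock),
}
breakers["A"].record_failure()
breakers["A"].record_failure()
during_outage = pick_venue(["A", "B"], breakers)   # fails over to the next venue
now[0] = 6.0
after_cooldown = pick_venue(["A", "B"], breakers)  # probes the preferred venue again
```

A failed probe re-opens the breaker and restarts the cool-down, while a successful one closes it and resets the failure count.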

Performance Monitoring and Iterative Optimization Cycles

Building a low-latency multi-venue execution workflow is not a one-time project; it’s an ongoing process of monitoring, analysis, and iterative optimization. Comprehensive instrumentation is non-negotiable, capturing timestamped metrics at every stage of the order lifecycle: strategy decision time, SOR routing time, network egress, exchange acknowledgment, and fill processing. Tools like high-resolution hardware timers, profilers (e.g., gperftools, formerly Google Performance Tools, and Intel VTune), and network sniffers are invaluable. Key performance indicators (KPIs) include end-to-end latency, round-trip time (RTT) to each venue, fill rates, slippage metrics, and effective execution price versus market best. Anomalies or performance regressions must be identified and investigated immediately. Regular A/B testing of routing logic or network configurations in a controlled environment, often against recorded market data (replay testing), allows safe experimentation before deploying to production. The feedback loop from live trading metrics back into development is crucial for continuous improvement, refining both the architectural choices and the algorithmic logic to maintain a competitive edge. Individual gains may be only tens of nanoseconds, but they add up along the order path, which justifies continuous investment in measurement and optimization.

- Implement granular, high-resolution logging and timestamping at every stage of the order lifecycle to measure true latency.

- Utilize specialized profiling tools (e.g., hardware counters, network analyzers) to pinpoint performance bottlenecks.

- Establish key performance indicators (KPIs) like end-to-end latency, slippage, and fill rates for ongoing evaluation.

- Conduct regular backtesting and replay testing with production-like loads and historical market data to validate changes.

- Automate performance regression testing within CI/CD pipelines to prevent new code from degrading execution speed.
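As a sketch of what an automated latency regression gate might look like, the example below compares a tail percentile of a candidate build’s latency samples against a baseline and flags a regression when it exceeds a tolerance. The function names, the nearest-rank percentile method, and the 5% tolerance are assumptions chosen for illustration; the samples would come from replay runs like those described above.

```python
def percentile(samples, pct: int):
    """Nearest-rank percentile (pct is an integer from 1 to 100) of latency samples."""
    if not samples:
        raise ValueError("no samples")
    if not 1 <= pct <= 100:
        raise ValueError("pct must be between 1 and 100")
    ordered = sorted(samples)
    # Integer ceiling of pct/100 * n, avoiding floating-point rounding.
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]


def regressed(baseline, candidate, pct: int = 99, tolerance: float = 0.05) -> bool:
    """True if the candidate's percentile latency exceeds the baseline's by more than tolerance."""
    return percentile(candidate, pct) > percentile(baseline, pct) * (1 + tolerance)


# Latencies in nanoseconds from two hypothetical replay runs.
baseline_ns = list(range(1, 101))
slower_ns = [x + 10 for x in baseline_ns]
similar_ns = [x + 2 for x in baseline_ns]

p99_baseline = percentile(baseline_ns, 99)
fail_build = regressed(baseline_ns, slower_ns)
pass_build = regressed(baseline_ns, similar_ns)
```

Gating on a tail percentile rather than the mean is a deliberate choice here, since the slowest orders are often the ones that miss fills.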