A robust signal logic framework is the core of multi-timeframe strategy design for quantitative traders who want to capture market dynamics at more than one horizon. The approach combines information from several time granularities, from tick data to daily bars, to generate higher-conviction trading signals. Integrating and synchronizing data across these timeframes, then turning the result into actionable, low-latency execution, presents significant technical and architectural challenges. This article covers the practical considerations for building such a framework, drawing on real-world experience in developing and deploying complex algorithmic trading systems. We’ll cover data pipeline design, signal aggregation, and risk management, all of which matter for consistent performance in live trading environments. Understanding these details is key to moving beyond single-timeframe strategies and opening up a broader range of trading opportunities.

Architecting Data Synchronization for Multi-Timeframe Inputs

The foundation of any multi-timeframe strategy is a reliable, high-fidelity data pipeline capable of delivering synchronized historical and real-time data across various granularities. This isn’t just about fetching different bar types; it’s about ensuring that when a signal is computed, all underlying data points—whether a 1-minute volume spike or a daily moving average—are aligned to the same conceptual ‘present.’ Implementing this requires a robust time-series database and a data aggregation service that can accurately resample raw tick or level-1 data into various bar sizes without look-ahead bias during backtesting or stale data during live execution. Common pitfalls include using different data providers for different timeframes, leading to subtle timestamp misalignments or volume discrepancies, or failing to account for market holidays and session breaks which can skew daily bar calculations. A well-designed data loader needs to manage data gaps, corporate actions, and even differing market open/close times for various assets, all while maintaining low latency for real-time processing.
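
As a rough illustration of resampling without exposing partially formed bars, the sketch below aggregates raw ticks into fixed-width OHLCV bars and returns only bars that have fully closed by a given `now`. The names `Bar`, `bucket_start`, and `resample_ticks` are hypothetical, and the code assumes timezone-aware UTC timestamps and fixed-width buckets counted from the Unix epoch. Session calendars, holidays, and corporate actions are deliberately left out, so daily bars would need additional handling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, Iterable, List, Tuple

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


@dataclass
class Bar:
    start: datetime  # inclusive open time (UTC)
    end: datetime    # exclusive close time (UTC)
    open: float
    high: float
    low: float
    close: float
    volume: float


def bucket_start(ts: datetime, width: timedelta) -> datetime:
    """Floor a timezone-aware timestamp to the start of its fixed-width bucket."""
    return EPOCH + ((ts - EPOCH) // width) * width


def resample_ticks(
    ticks: Iterable[Tuple[datetime, float, float]],
    width: timedelta,
    now: datetime,
) -> List[Bar]:
    """Aggregate (timestamp, price, size) ticks into OHLCV bars of `width`.

    Only bars that have fully closed by `now` are returned, so a caller
    cannot read a partially formed bar as if it were final.
    """
    bars: Dict[datetime, Bar] = {}
    for ts, price, size in sorted(ticks, key=lambda t: t[0]):
        start = bucket_start(ts, width)
        bar = bars.get(start)
        if bar is None:
            # First tick in this bucket sets open, high, low, and close.
            bars[start] = Bar(start, start + width, price, price, price, price, size)
        else:
            bar.high = max(bar.high, price)
            bar.low = min(bar.low, price)
            bar.close = price
            bar.volume += size
    # Ticks were processed in time order, so the dict is already chronological.
    return [bar for bar in bars.values() if bar.end <= now]
```

Deriving every bar size from the same tick stream with the same function is one way to sidestep the timestamp and volume mismatches that come from mixing data providers.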

Designing Independent Signal Components Across Timeframes

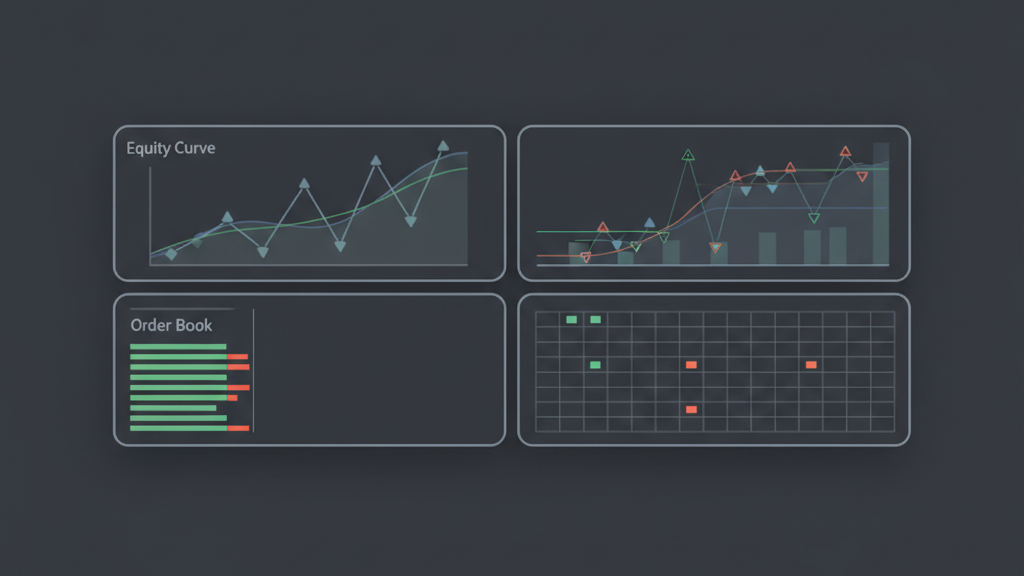

When constructing a signal logic framework, it’s often best to design individual signal components independently for each target timeframe before attempting to combine them. This modular approach allows for specialized indicators and patterns to be developed, backtested, and optimized for their native time horizon. For instance, a high-frequency component might analyze order book imbalances and micro-price action, generating signals valid for seconds or minutes. Concurrently, a medium-frequency component could focus on trend strength using hourly or 4-hour bars, while a low-frequency component identifies regime shifts or major support/resistance levels from daily or weekly charts. Each component will have its own parameter set, sensitivity, and typical signal duration. The challenge then becomes how to effectively combine these disparate ‘opinions’ without creating conflicting or redundant signals. This separation of concerns also simplifies debugging and performance attribution, letting developers isolate which timeframe’s logic is contributing most to overall strategy performance or, conversely, causing unexpected drawdowns.

- Develop distinct indicator sets and pattern recognition logic for each specific timeframe (e.g., 1-min, 5-min, 1-hr, daily).

- Optimize parameters for each component based on its native timeframe’s market behavior and signal characteristics.

- Ensure each component outputs a standardized ‘signal state’ (e.g., long, short, neutral, or a score) for subsequent aggregation.

- Implement robust unit tests for each independent signal component to verify its behavior in isolation.
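
One possible shape for the standardized ‘signal state’ and a per-timeframe component is sketched below. `Direction`, `SignalState`, `SignalComponent`, and `MomentumComponent` are illustrative names, not part of any particular library, and the momentum rule is a toy stand-in for whatever indicator logic a real component would use.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Protocol, Sequence


class Direction(Enum):
    SHORT = -1
    NEUTRAL = 0
    LONG = 1


@dataclass(frozen=True)
class SignalState:
    timeframe: str        # e.g. "1min", "1h", "1d"
    direction: Direction
    score: float          # confidence in [0, 1]

    def __post_init__(self) -> None:
        if not 0.0 <= self.score <= 1.0:
            raise ValueError("score must be in [0, 1]")


class SignalComponent(Protocol):
    """Interface every timeframe-specific component is assumed to satisfy."""

    timeframe: str

    def evaluate(self, closes: Sequence[float]) -> SignalState: ...


class MomentumComponent:
    """Toy component: direction from the return over `lookback` closed bars."""

    def __init__(self, timeframe: str, lookback: int, full_score_move: float = 0.01) -> None:
        self.timeframe = timeframe
        self.lookback = lookback
        # Absolute return at which confidence saturates at 1.0 (an assumed tuning knob).
        self.full_score_move = full_score_move

    def evaluate(self, closes: Sequence[float]) -> SignalState:
        if len(closes) <= self.lookback:
            # Not enough history yet: stay neutral rather than guess.
            return SignalState(self.timeframe, Direction.NEUTRAL, 0.0)
        change = closes[-1] / closes[-1 - self.lookback] - 1.0
        if change == 0.0:
            return SignalState(self.timeframe, Direction.NEUTRAL, 0.0)
        direction = Direction.LONG if change > 0 else Direction.SHORT
        score = min(abs(change) / self.full_score_move, 1.0)
        return SignalState(self.timeframe, direction, score)
```

Because each component takes plain closed-bar prices and returns an immutable `SignalState`, it can be unit tested in isolation with a handful of hand-built price lists.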

Aggregating and Confirming Signals for Higher Conviction Trades

The true power of a multi-timeframe strategy emerges in the aggregation and confirmation stage, where signals from different horizons are combined to form a higher-conviction trading decision. This is where the signal logic framework truly takes shape. Simple aggregation methods might involve voting schemes, where multiple timeframes must agree on a direction (e.g., 1-minute and 5-minute signals both ‘buy’ to initiate a long position). More sophisticated approaches could use weighted averages based on historical performance or confidence scores, or even machine learning models trained to predict optimal entry/exit points from a concatenated feature set of multi-timeframe indicators. A common strategy involves using longer-timeframe signals for trend direction or market regime filtering, while shorter-timeframe signals trigger entries and exits within that context. For example, a daily trend filter might dictate a ‘longs only’ bias, with 5-minute chart breakouts providing the actual entry signals. Effective aggregation needs to account for potential ‘signal lag’ where a slower timeframe signal might be late to confirm a faster one, requiring careful temporal synchronization and perhaps look-back windows for validation.
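
A minimal sketch of one such scheme, a weighted average gated by a longer-timeframe trend filter, might look like the following. The function name `aggregate`, the signed-score convention (values in [-1, 1]), the weights, and the 0.5 threshold are all assumptions chosen for illustration.

```python
from typing import Mapping


def aggregate(
    signals: Mapping[str, float],
    weights: Mapping[str, float],
    trend_timeframe: str,
    entry_threshold: float = 0.5,
) -> int:
    """Combine signed per-timeframe scores into +1 (long), -1 (short), or 0 (flat).

    `signals` maps timeframe -> score in [-1, 1]. The weighted average must
    clear `entry_threshold`, and the trend timeframe must agree in sign.
    """
    total_weight = sum(weights[tf] for tf in signals)
    if total_weight <= 0:
        return 0
    combined = sum(signals[tf] * weights[tf] for tf in signals) / total_weight
    trend = signals.get(trend_timeframe, 0.0)
    if combined >= entry_threshold and trend > 0:
        return 1
    if combined <= -entry_threshold and trend < 0:
        return -1
    return 0
```

With this rule, strong 5-minute and hourly long scores are still vetoed when the daily score is negative, which mirrors the ‘longs only’ style of bias described above.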

Backtesting Multi-Timeframe Logic with Fidelity

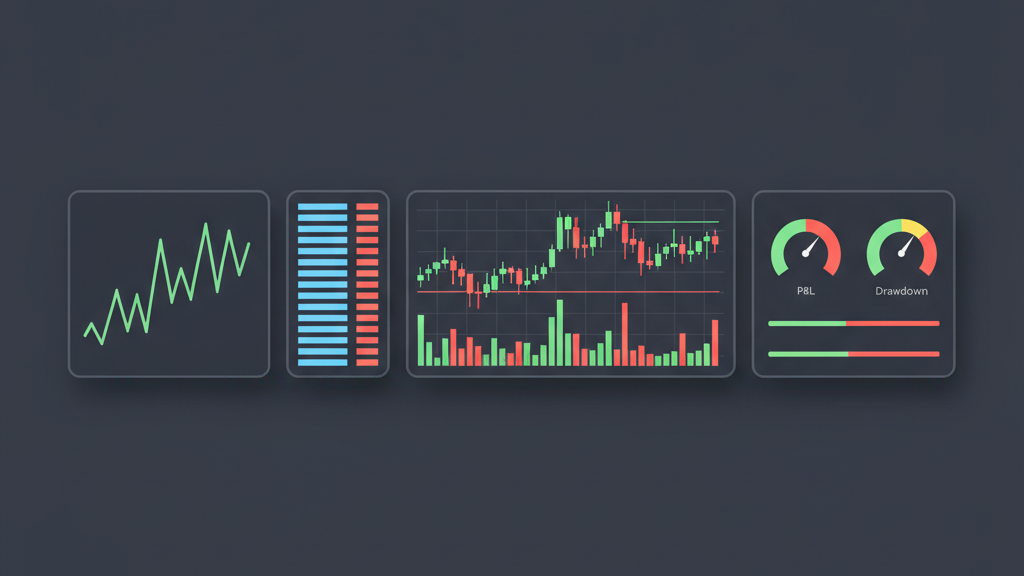

Backtesting a signal logic framework for multi-timeframe strategy design introduces specific complexities beyond single-timeframe simulations. The primary challenge is ensuring accurate data alignment and avoiding look-ahead bias across all synchronized timeframes. A common mistake is allowing a higher-timeframe bar to ‘close’ and update its indicators before all relevant lower-timeframe bars within its period have also closed. For instance, if a 4-hour bar’s signal is used to filter 15-minute entries, the 4-hour signal must only update *after* its corresponding 4 hours of 15-minute data have fully transpired and closed. This often necessitates event-driven backtesting engines that can process market events (trades, quotes, bar closes) sequentially, correctly updating all relevant indicators at their precise chronological point. Additionally, robust backtesting must simulate realistic execution conditions, including slippage and order book depth, which can significantly impact strategy performance, especially for high-frequency components that might generate many small trades. Ignoring these details can lead to wildly optimistic backtest results that fail catastrophically in live trading.
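
The alignment rule in the 4-hour example can be sketched as a lookup keyed on bar close times: at any decision time, only the most recent higher-timeframe value whose bar has already closed is visible. The helper name `latest_closed_value` is hypothetical, and the sketch assumes `close_times` is sorted ascending and holds the close (not open) timestamp of each higher-timeframe bar.

```python
from bisect import bisect_right
from datetime import datetime
from typing import Optional, Sequence, TypeVar

T = TypeVar("T")


def latest_closed_value(
    close_times: Sequence[datetime],
    values: Sequence[T],
    decision_time: datetime,
) -> Optional[T]:
    """Return the value of the last bar with close time <= decision_time.

    Returns None when no higher-timeframe bar has closed yet, so the caller
    has to handle warm-up explicitly instead of reading a future value.
    """
    idx = bisect_right(close_times, decision_time)
    return values[idx - 1] if idx > 0 else None
```

A frequent source of look-ahead bias is indexing higher-timeframe bars by their open time. A 4-hour bar stamped 04:00 at the open is not complete until 08:00, and a lookup keyed on close times makes that explicit.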

- Implement an event-driven backtesting engine to maintain strict chronological order of multi-timeframe data updates.

- Rigorously check for look-ahead bias, ensuring indicators only use data available at the exact moment of signal generation.

- Simulate realistic slippage and execution costs based on historical market depth and volatility for each timeframe’s typical order size.

- Test the strategy across diverse market conditions, including varying volatility regimes and liquidity environments, to assess robustness.

- Evaluate performance not just on total PnL, but also maximum drawdown, Sharpe ratio, and individual component attribution.
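
The evaluation metrics in the last item can be computed with a few small helpers. This is a sketch under simple assumptions: `equity` is a sequence of positive account values, `returns` are per-period simple returns with at least two observations, the Sharpe ratio is annualized by the square root of an assumed `periods_per_year`, and trades are tagged with the name of the component that triggered them.

```python
import math
from collections import defaultdict
from statistics import mean, stdev
from typing import Dict, Iterable, Sequence, Tuple


def max_drawdown(equity: Sequence[float]) -> float:
    """Largest peak-to-trough decline as a fraction of the running peak."""
    peak = equity[0]
    worst = 0.0
    for value in equity:
        peak = max(peak, value)
        worst = max(worst, (peak - value) / peak)
    return worst


def sharpe_ratio(
    returns: Sequence[float],
    periods_per_year: int = 252,
    risk_free_per_period: float = 0.0,
) -> float:
    """Annualized Sharpe ratio using the sample standard deviation."""
    excess = [r - risk_free_per_period for r in returns]
    spread = stdev(excess)
    if spread == 0:
        return 0.0  # no variation: report 0 instead of dividing by zero
    return mean(excess) / spread * math.sqrt(periods_per_year)


def attribute_pnl(trades: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Sum PnL per originating component from (component, pnl) pairs."""
    totals: Dict[str, float] = defaultdict(float)
    for component, pnl in trades:
        totals[component] += pnl
    return dict(totals)
```

Reporting PnL per component alongside the portfolio-level numbers makes it easier to see which timeframe's logic is driving results or drawdowns.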

Execution Layer Challenges and Optimization

Translating a multi-timeframe signal into a live trade introduces a new set of challenges at the execution layer. Latency becomes paramount; a high-frequency signal generated from 1-minute or even tick data needs near-instantaneous execution to capture fleeting opportunities, while a slower, daily signal might tolerate slightly higher latencies. The execution system must be intelligent enough to understand the intent of the aggregated signal—is it a quick scalp, a trend-following entry, or a position adjustment? This often dictates the choice of order types (market, limit, IOC, FOK) and algorithms (TWAP, VWAP, POV). Furthermore, dealing with API rate limits, connection failures, and partial fills across different brokers or venues adds another layer of complexity. Implementing robust retry logic, order state management, and position reconciliation is critical. Slippage is also a constant threat; a strategy might look profitable in backtests, but if the combined signals lead to execution during volatile periods, the real-world fill prices can significantly erode profit margins, especially for strategies with tight profit targets. Real-time monitoring of execution quality and performance metrics is essential to identify and mitigate these issues.
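
As one illustration of the retry logic mentioned above, the sketch below wraps a broker call in bounded exponential backoff. `TransientBrokerError` and `call_with_retry` are hypothetical names, and the wrapped `action` stands in for whatever client call a given broker API exposes. Retrying an order submission is only safe if the request is idempotent, for example when it carries a client-generated order ID that the venue deduplicates; that is an assumption the caller has to verify for their venue.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientBrokerError(Exception):
    """Hypothetical error type for retryable failures (timeouts, rate limits)."""


def call_with_retry(
    action: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.25,
    max_delay: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `action`, retrying transient failures with capped exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return action()
        except TransientBrokerError:
            if attempt == max_attempts:
                raise  # retries exhausted: surface the failure to the caller
            # Delay doubles each attempt: base, 2*base, 4*base, ... up to max_delay.
            sleep(min(base_delay * 2 ** (attempt - 1), max_delay))
    raise AssertionError("unreachable")
```

Passing `sleep` as a parameter keeps the function testable without real waiting. Non-transient errors, such as a rejected order, propagate immediately instead of being retried.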

Integrating Risk Management and Adaptive Signal Logic

A signal logic framework for multi-timeframe strategies is incomplete without robust, adaptive risk management. Risk controls should not sit in a static layer; they should interact dynamically with the signals themselves. A ‘buy’ signal, even one confirmed by multiple timeframes, might be suppressed or downsized if overall portfolio risk exposure is too high, or if a longer-term market regime signal indicates significant downside risk. Stop-loss and take-profit levels should also adapt to volatility and to the strength of the combined multi-timeframe signals. For instance, stronger signal confirmation might allow for a tighter stop-loss and a more aggressive take-profit target. Beyond fixed thresholds, consider dynamic position sizing based on available capital, market volatility (e.g., using ATR), and the confidence score of the aggregated signal. Monitoring metrics such as maximum adverse excursion (MAE) and maximum favorable excursion (MFE) in real time allows on-the-fly adjustments to signal generation or execution parameters, so the system can adapt to changing market conditions and protect capital.
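
A minimal sketch of ATR-based sizing scaled by signal confidence follows. The function names and parameters are illustrative. The ATR here is a simple mean of the last `period` true ranges, not Wilder's smoothed version, and the sizing rule assumes the stop sits at a fixed multiple of ATR from the entry price.

```python
from typing import Sequence, Tuple


def true_range(high: float, low: float, prev_close: float) -> float:
    """Largest of the bar's range and its gaps from the previous close."""
    return max(high - low, abs(high - prev_close), abs(low - prev_close))


def average_true_range(bars: Sequence[Tuple[float, float, float]], period: int = 14) -> float:
    """Simple-mean ATR over (high, low, close) bars ordered oldest first."""
    if len(bars) < period + 1:
        raise ValueError("need at least period + 1 bars")
    ranges = [
        true_range(bars[i][0], bars[i][1], bars[i - 1][2])
        for i in range(1, len(bars))
    ]
    return sum(ranges[-period:]) / period


def position_size(
    equity: float,
    risk_fraction: float,
    atr: float,
    stop_atr_multiple: float,
    confidence: float,
) -> int:
    """Whole units sized so a stop-out loses about equity * risk_fraction * confidence."""
    if atr <= 0 or stop_atr_multiple <= 0:
        raise ValueError("atr and stop_atr_multiple must be positive")
    confidence = min(max(confidence, 0.0), 1.0)  # clamp to [0, 1]
    risk_budget = equity * risk_fraction * confidence
    stop_distance = atr * stop_atr_multiple
    return int(risk_budget // stop_distance)
```

With these inputs, higher volatility widens the stop distance and shrinks the position, while a lower confidence score shrinks the risk budget itself.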

- Implement dynamic position sizing based on real-time portfolio risk, market volatility, and signal confidence scores.

- Integrate multi-level stop-loss and take-profit mechanisms that adapt to the strength and timeframe of the generating signals.

- Develop ‘kill switches’ or circuit breakers that can temporarily disable or scale down trading based on extreme market conditions or system health alerts.

- Use longer-timeframe signals to filter or reduce the aggressiveness of shorter-timeframe signals during high-risk market regimes.

- Continuously monitor live strategy performance, including slippage, fill rates, and PnL, to identify deviations from expected behavior.
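
The ‘kill switch’ item above could be prototyped as a small stateful guard that the signal and execution layers consult before sending any order. The class name `CircuitBreaker`, its two triggers (a daily loss limit and a run of consecutive system errors), and the manual reset are assumptions for illustration. This sketch only disables trading outright; scaling down exposure would need additional logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CircuitBreaker:
    max_daily_loss: float          # positive amount in account currency
    max_consecutive_errors: int    # e.g. order rejects or dropped connections
    daily_pnl: float = 0.0
    consecutive_errors: int = 0
    tripped_reason: Optional[str] = None

    def record_pnl(self, realized_pnl: float) -> None:
        self.daily_pnl += realized_pnl
        if self.daily_pnl <= -self.max_daily_loss:
            self._trip("daily loss limit reached")

    def record_error(self) -> None:
        self.consecutive_errors += 1
        if self.consecutive_errors >= self.max_consecutive_errors:
            self._trip("too many consecutive system errors")

    def record_success(self) -> None:
        # A healthy call resets the error streak but never un-trips the breaker.
        self.consecutive_errors = 0

    def _trip(self, reason: str) -> None:
        if self.tripped_reason is None:  # keep the first cause for diagnostics
            self.tripped_reason = reason

    @property
    def allows_trading(self) -> bool:
        return self.tripped_reason is None

    def manual_reset(self) -> None:
        """Re-arm the breaker, e.g. at the start of a session after review."""
        self.daily_pnl = 0.0
        self.consecutive_errors = 0
        self.tripped_reason = None
```

Keeping the breaker latched until an explicit reset is a deliberate choice in this sketch: it prevents the system from resuming on its own after a brief recovery in PnL or connectivity.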