Developing profitable algorithmic trading strategies hinges on reliable backtesting. A common and insidious pitfall is overfitting: creating a strategy that performs exceptionally well on historical data but fails in live markets. This occurs when a model learns the noise and idiosyncratic patterns of the training data rather than the underlying predictive relationships. Preventing backtest overfitting requires out-of-sample validation techniques that rigorously test a strategy’s robustness and adaptability to unseen market conditions. Without them, even the most promising backtest results can lead to significant capital losses once deployed live. We need to go beyond simple train-test splits and adopt approaches that respect the time-series nature of financial data.

The Insidious Nature of Backtesting Overfitting

Overfitting is a critical problem in quantitative finance, manifesting when a trading strategy’s parameters are excessively tuned to a specific historical dataset. This ‘curve fitting’ to past noise creates an illusion of profitability and robustness that crumbles when exposed to fresh market data. We often see strategies with near-perfect equity curves in backtests, only to discover they’ve merely memorized historical price movements or specific event sequences. This isn’t just about picking parameters; it can stem from implicitly optimizing strategy logic, entry/exit conditions, or even trade filters through repeated testing cycles on the same data. The danger here is significant: a strategy that appears highly profitable on paper due to overfitting will likely generate substantial losses in a live trading environment because its supposed edge is not generalizable. Recognizing that every decision made during strategy development can contribute to this bias is the first step toward building truly robust systems.

Forward-Chaining Cross-Validation for Time Series

Traditional K-Fold cross-validation, while powerful in other domains, needs careful adaptation for time-series data to avoid look-ahead bias. Shuffling data and splitting it randomly breaks the chronological dependency that is fundamental to financial markets. A more appropriate approach is forward-chaining (expanding-window) cross-validation, where each fold’s training data strictly precedes its validation data. It is related to, but distinct from, ‘purged’ cross-validation, which additionally drops training observations whose labels overlap in time with the validation window. Forward chaining means training on an initial segment, validating on the next, then extending the training set to include the previous validation data, and repeating the process. This ensures that the model only learns from past information, simulating a live trading scenario more accurately. It’s a computationally intensive process, but indispensable for assessing how consistently a strategy performs across different, sequential market regimes without ever ‘seeing’ future data during training. The training and validation window sizes are themselves hyperparameters, often requiring empirical tuning.

- Define an initial training period and a subsequent, non-overlapping validation period.

- Train the strategy on the initial period and evaluate performance on the validation period.

- Expand the training period to include the previous validation period, then define a new, subsequent validation period.

- Repeat the training and validation steps across several sequential folds to capture performance consistency over time.

- Aggregate performance metrics from all validation folds to get a robust estimate of out-of-sample performance.
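The steps above can be sketched as a small index generator. This is a minimal illustration, not a reference implementation: the function names `forward_chaining_splits` and `aggregate`, and the window sizes used in the example, are assumptions chosen for clarity.

```python
from typing import Iterator, List, Tuple


def forward_chaining_splits(
    n_samples: int, initial_train: int, val_size: int
) -> Iterator[Tuple[range, range]]:
    """Yield (train_idx, val_idx) pairs with an expanding training window."""
    if initial_train <= 0 or val_size <= 0:
        raise ValueError("initial_train and val_size must be positive")
    train_end = initial_train
    while train_end + val_size <= n_samples:
        # Training always starts at index 0 and ends right before validation begins.
        yield range(0, train_end), range(train_end, train_end + val_size)
        # Absorb the validation block into the next fold's training set.
        train_end += val_size


def aggregate(scores: List[float]) -> Tuple[float, float]:
    """Return the mean and the worst validation score across folds."""
    return sum(scores) / len(scores), min(scores)


# Example: 10 observations, 4 for the first training window, 2 per validation fold.
folds = list(forward_chaining_splits(n_samples=10, initial_train=4, val_size=2))
for train_idx, val_idx in folds:
    print(f"train {train_idx.start}..{train_idx.stop - 1} -> "
          f"validate {val_idx.start}..{val_idx.stop - 1}")
```

Reporting the worst fold alongside the mean is a design choice: a strategy that averages well but collapses in one fold deserves a closer look before it goes any further.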

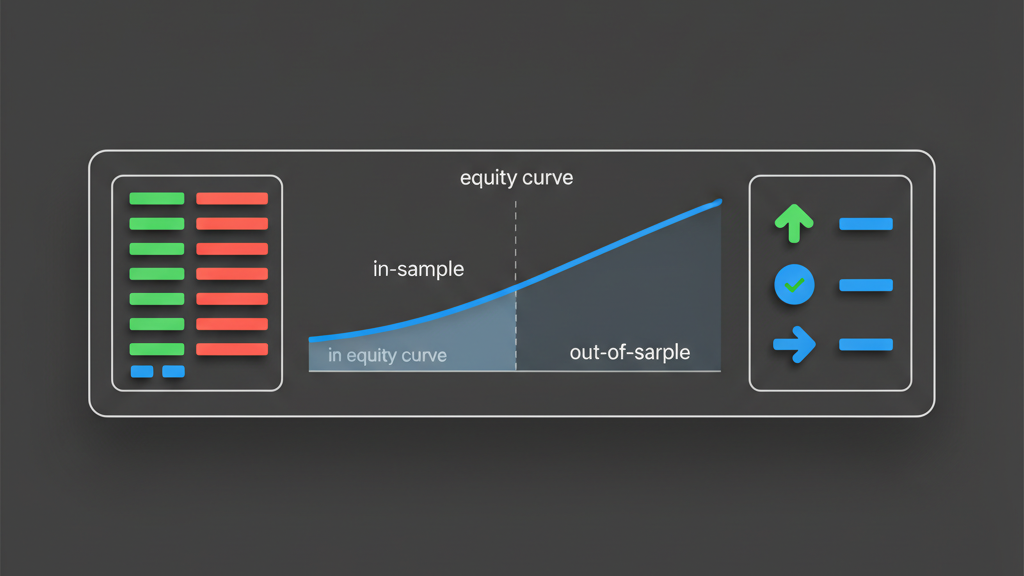

Implementing Walk-Forward Optimization

Walk-forward optimization (WFO) is a widely adopted technique for out-of-sample validation that simulates a strategy’s performance through sequential re-optimization. The process involves defining an ‘in-sample’ window for parameter optimization and a subsequent ‘out-of-sample’ window for evaluation, strictly separated in time. After each evaluation, both windows slide forward, typically by the length of the out-of-sample window, and the process repeats. This directly addresses the problem of parameter decay, where optimal parameters from one market regime may not hold in another. The challenge lies in choosing the re-optimization frequency and the sizes of the in-sample and out-of-sample windows. Re-optimizing too often can lead to parameter churn and higher transaction costs, while re-optimizing too rarely might miss critical market shifts. Furthermore, the backtesting engine must be able to handle repeated re-compilation and re-execution of strategies with new parameters, an implementation detail that often requires custom scripting within platforms like Algovantis rather than relying on standard backtesting features alone.
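To make the sliding-window loop concrete, here is a minimal sketch in plain Python. Everything in it is illustrative: `walk_forward`, `momentum_pnl`, the toy momentum rule, and the synthetic return series are assumptions, and none of it reflects the API of any particular platform. Transaction costs are ignored.

```python
from typing import Callable, List, Sequence, Tuple


def walk_forward(
    returns: Sequence[float],
    params: Sequence[int],
    score: Callable[[Sequence[float], int, int, int], float],
    in_sample: int,
    out_sample: int,
) -> List[Tuple[int, float]]:
    """Re-optimize on each in-sample window, then score the next out-of-sample window."""
    results = []
    start = 0
    while start + in_sample + out_sample <= len(returns):
        is_lo, is_hi = start, start + in_sample
        oos_hi = is_hi + out_sample
        # Pick the parameter with the best in-sample score only.
        best = max(params, key=lambda p: score(returns, p, is_lo, is_hi))
        # Evaluate that parameter on data the optimizer never saw.
        results.append((best, score(returns, best, is_hi, oos_hi)))
        # Slide both windows forward by one out-of-sample length.
        start += out_sample
    return results


def momentum_pnl(returns: Sequence[float], lookback: int, lo: int, hi: int) -> float:
    """Toy rule: hold long at t if the sum of the prior `lookback` returns is positive."""
    pnl = 0.0
    for t in range(max(lo, lookback), hi):
        if sum(returns[t - lookback:t]) > 0:  # the signal uses past data only
            pnl += returns[t]
    return pnl


# Synthetic series that alternates between up and down blocks of five periods.
returns = [0.01 if (i // 5) % 2 == 0 else -0.01 for i in range(60)]
for chosen, oos_pnl in walk_forward(returns, [2, 3, 5], momentum_pnl, 20, 10):
    print(f"chosen lookback={chosen}, out-of-sample pnl={oos_pnl:.4f}")
```

Only the out-of-sample scores should be stitched together into the reported equity curve; the in-sample scores exist solely to choose parameters.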

Dedicated Holdout Sets and Market Regime Testing

Beyond rolling validation, it’s crucial to reserve one or more completely unseen, dedicated holdout sets for final validation. These holdout sets should represent diverse market conditions that were not used in any phase of optimization or initial walk-forward testing. Think of periods covering distinct bull markets, bear markets, volatile phases, and calm periods, including significant historical events like the 2008 financial crisis or the COVID-19 crash. The idea is to subject the final, ‘tuned’ strategy to extreme stress tests against data it has never encountered. If a strategy fails spectacularly on a well-chosen holdout set, it’s a strong indicator of overfitting or lack of robustness. This isn’t just about overall performance; it’s about understanding drawdowns, recovery times, and consistency across varied regimes. A strategy might perform well on average, but a single catastrophic failure in a specific market condition, revealed by a well-selected holdout, is a critical risk signal.

- Select a truly independent, chronologically distinct data segment that was not part of training or iterative validation.

- Ensure the holdout period includes diverse market environments (e.g., high volatility, low volatility, recessions, booms).

- Test the strategy with its final, optimized parameters on this holdout set only once.

- Analyze key risk metrics (e.g., maximum drawdown, VaR) and performance metrics (e.g., Sharpe, Sortino) specific to the holdout.

- Use the holdout results as a final ‘go/no-go’ decision point before contemplating live deployment.
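As a rough sketch of the final check, the snippet below computes two of the metrics mentioned above on a holdout return series and applies a go/no-go rule. The thresholds, the zero risk-free rate, the 252-period annualization, and the function names are all assumptions for illustration; appropriate values depend on the strategy and its risk budget.

```python
import math
from statistics import mean, stdev
from typing import Sequence


def max_drawdown(returns: Sequence[float]) -> float:
    """Largest peak-to-trough decline of the compounded equity curve, as a positive fraction."""
    equity, peak, worst = 1.0, 1.0, 0.0
    for r in returns:
        equity *= 1.0 + r
        peak = max(peak, equity)
        worst = max(worst, 1.0 - equity / peak)
    return worst


def sharpe_ratio(returns: Sequence[float], periods_per_year: int = 252) -> float:
    """Annualized Sharpe ratio, assuming a zero risk-free rate."""
    sd = stdev(returns)
    return 0.0 if sd == 0 else mean(returns) / sd * math.sqrt(periods_per_year)


def go_no_go(
    returns: Sequence[float], min_sharpe: float = 0.5, max_dd: float = 0.20
) -> bool:
    """Hypothetical decision rule: both thresholds must hold on the holdout set."""
    return sharpe_ratio(returns) >= min_sharpe and max_drawdown(returns) <= max_dd


holdout_returns = [0.004, -0.002, 0.003, -0.010, 0.006, 0.002, -0.001, 0.005]
print("max drawdown:", round(max_drawdown(holdout_returns), 4))
print("deploy?", go_no_go(holdout_returns))
```

Fixing the thresholds before looking at the holdout results keeps the ‘only once’ rule honest; adjusting them afterward turns the holdout into another tuning set.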

Addressing Multiple Testing Bias and Statistical Significance

The process of developing and backtesting strategies often involves implicitly or explicitly testing numerous hypotheses. Each time we run a backtest and adjust parameters, or select one strategy from a pool of many, we introduce multiple testing bias. This inflates the probability of finding a ‘significant’ result purely by chance, even if no real edge exists. A strategy with a strong Sharpe ratio might just be the lucky outcome of hundreds of trials. To counter this, statistical methods like False Discovery Rate (FDR) control (e.g., Benjamini-Hochberg procedure) or family-wise error rate corrections (e.g., Bonferroni correction) should be considered, especially when evaluating multiple candidate strategies. Another approach involves using robust metrics and performance benchmarks, comparing the strategy’s equity curve against randomized or benchmark portfolios to ensure its performance isn’t merely an artifact of random market movements. True statistical significance often requires more than just a high Sharpe ratio on historical data.
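The two corrections named above are simple enough to sketch directly. The example below assumes each candidate strategy already has a p-value for the null hypothesis of no edge; how those p-values are obtained is outside this sketch, and the numbers used are made up for illustration.

```python
from typing import List, Sequence


def bonferroni(p_values: Sequence[float], alpha: float = 0.05) -> List[bool]:
    """Reject a hypothesis only if its p-value is at most alpha / m (controls FWER)."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]


def benjamini_hochberg(p_values: Sequence[float], alpha: float = 0.05) -> List[bool]:
    """Benjamini-Hochberg step-up procedure (controls the false discovery rate)."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k such that p_(k) <= (k / m) * alpha.
    cutoff = 0
    for rank, i in enumerate(order, start=1):
        if p_values[i] <= rank / m * alpha:
            cutoff = rank
    # Reject every hypothesis ranked at or below that cutoff.
    rejected = [False] * m
    for i in order[:cutoff]:
        rejected[i] = True
    return rejected


# Illustrative p-values for five candidate strategies.
p_vals = [0.001, 0.012, 0.025, 0.041, 0.20]
print("Bonferroni:        ", bonferroni(p_vals))
print("Benjamini-Hochberg:", benjamini_hochberg(p_vals))
```

Bonferroni is the stricter of the two, so it keeps fewer candidates here. In both cases the count `m` should include every strategy variant that was actually tried, not only the ones that survived to the final comparison.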

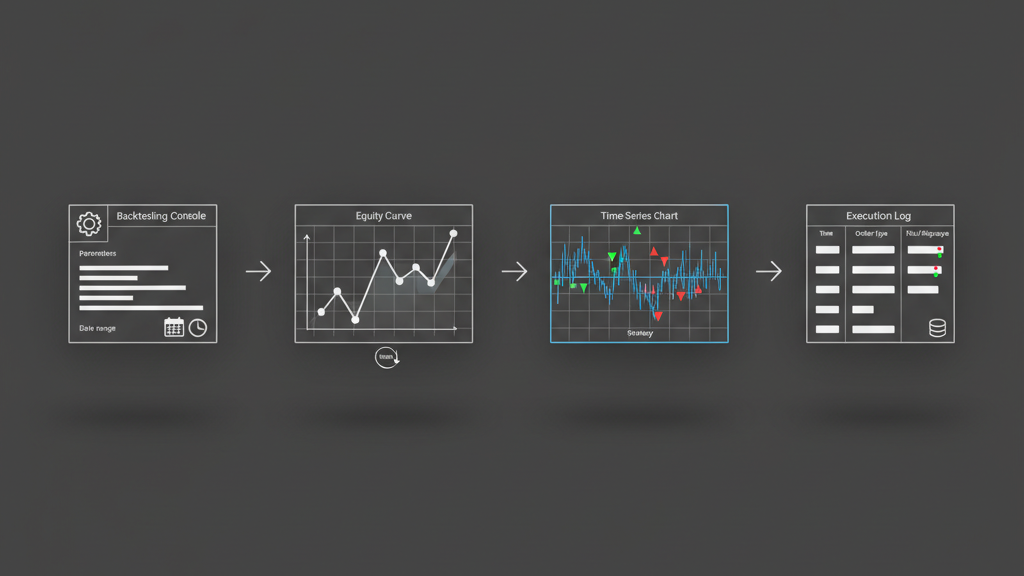

Operationalizing Validation: From Backtest to Live Monitoring

The rigor applied to out-of-sample validation during backtesting must extend into live trading. A common mistake is to treat validation as a one-time event before deployment. In reality, every day in live trading is a new out-of-sample test. Robust monitoring systems are needed to detect performance degradation or regime shifts that invalidate the strategy’s original assumptions. This means tracking key performance indicators (KPIs) like Sharpe ratio, maximum drawdown, and profit factor, as well as lower-level metrics like average trade duration or win rate, and comparing them against the ranges expected from the backtest and validation phases. Automated alerts should flag significant deviations, which may indicate changing market microstructure, increased slippage, data feed anomalies, or simply that the strategy’s edge has eroded. Integrating these monitoring tools into an execution platform allows for timely decisions, such as pausing, adjusting, or shutting down a strategy to prevent further losses, making the live environment a continuous validation loop.
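A monitoring check of this kind can start out very simple. The sketch below compares live KPIs against expected ranges and returns alert messages; the function name `check_kpis`, the KPI names, and the ranges are hypothetical placeholders, and a real system would derive the ranges from the validation results and route alerts to whatever notification channel is in use.

```python
from typing import Dict, List, Tuple


def check_kpis(
    live: Dict[str, float], expected: Dict[str, Tuple[float, float]]
) -> List[str]:
    """Return one alert per KPI that is missing or outside its expected range."""
    alerts = []
    for name, (low, high) in expected.items():
        value = live.get(name)
        if value is None:
            alerts.append(f"{name}: missing from live metrics")
        elif not low <= value <= high:
            alerts.append(f"{name}: {value} outside expected range [{low}, {high}]")
    return alerts


# Hypothetical ranges taken from the validation phase.
expected_ranges = {
    "sharpe": (0.8, 2.5),
    "max_drawdown": (0.0, 0.15),
    "win_rate": (0.45, 0.65),
}
live_metrics = {"sharpe": 1.1, "max_drawdown": 0.22}

for alert in check_kpis(live_metrics, expected_ranges):
    print("ALERT:", alert)
```

Treating a missing metric as an alert is deliberate: a silent data feed failure should not look the same as a healthy strategy.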