Developing robust trend following strategies in algorithmic trading requires more than picking common indicators. The core challenge is validating the underlying signal logic against real-world market dynamics and system constraints. This isn’t a theoretical exercise; it’s an engineering process that involves rigorous data handling, backtesting with realistic assumptions, and close attention to execution practicalities. Poorly validated signals lead to unexpected drawdowns and performance decay after deployment. Our focus here is the practical side of designing and validating trend following signal logic, moving beyond textbook examples to the problems encountered when building and deploying live trading systems.

Defining Actionable Trend Following Signals

Crafting effective trend following signals begins with a clear, unambiguous definition of what constitutes a trend and, more critically, when it’s actionable for your specific trading horizon. It’s not enough to say ‘the price is going up’; we need quantifiable thresholds, durations, and momentum criteria. For instance, a simple moving average crossover might be a starting point, but its efficacy depends heavily on the chosen periods and on how ‘confirmation’ is defined, for example how many consecutive bars the fast average must stay above the slow one before the signal counts. We often augment these rules with volume analysis, volatility filters, or higher-timeframe trend context to reduce whipsaws during ranging markets. The objective isn’t just to identify a trend, but to identify one with enough persistence and momentum to cover transaction costs and typical execution slippage and still deliver alpha. This iterative process of defining and refining signal generation rules is the foundation for everything that follows.
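
As a minimal sketch of what an unambiguous rule can look like, the snippet below implements a moving average crossover that only changes state after the fast average has stayed on one side of the slow average for a set number of bars. The function names, the default periods, and the `confirm_bars` parameter are illustrative assumptions, not a recommended configuration.

```python
from typing import List, Optional


def sma(values: List[float], period: int) -> List[Optional[float]]:
    """Simple moving average; None until `period` values are available."""
    out: List[Optional[float]] = []
    running = 0.0
    for i, v in enumerate(values):
        running += v
        if i >= period:
            running -= values[i - period]  # drop the value leaving the window
        out.append(running / period if i >= period - 1 else None)
    return out


def crossover_signal(closes: List[float], fast: int = 3, slow: int = 5,
                     confirm_bars: int = 2) -> List[int]:
    """Return +1 (long), -1 (short) or 0 (no position yet) for each bar.

    The state only changes once the fast SMA has been on the same side of
    the slow SMA for `confirm_bars` consecutive bars (assumed definition
    of 'confirmation' for this sketch).
    """
    fast_ma = sma(closes, fast)
    slow_ma = sma(closes, slow)
    signals: List[int] = []
    streak, last_side, state = 0, 0, 0
    for f, s in zip(fast_ma, slow_ma):
        if f is None or s is None:
            signals.append(0)  # not enough history for both averages
            continue
        side = 1 if f > s else -1 if f < s else 0
        if side != 0 and side == last_side:
            streak += 1
        else:
            streak = 1 if side != 0 else 0
        last_side = side
        if streak >= confirm_bars:
            state = side
        signals.append(state)
    return signals
```

Each signal value uses only bars up to and including its own index, so a backtest would act on it at the next bar at the earliest. Raising `confirm_bars` trades later entries for fewer whipsaws, which is exactly the kind of threshold that needs validating rather than assuming.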

Data Quality and Preprocessing for Signal Generation

The integrity of your trend following signals hinges on the quality and preprocessing of your market data. Raw, unfiltered data from exchange feeds often contains bad prints, price jumps from unadjusted corporate actions, or inconsistent ticks that can severely distort indicators. We routinely implement data cleaning pipelines that handle missing values, identify and correct outliers, and adjust for splits, dividends, and other corporate events. Selecting the appropriate sampling frequency (tick, 1-minute, 5-minute, or daily bars) is just as important. A signal designed for a daily trend will behave very differently, and usually badly, if applied to tick data without proper aggregation. Data latency matters too: a backtest that assumes a bar or tick was available the instant it was stamped, when live delivery lags, introduces look-ahead bias, especially for indicators that rely on real-time price updates. A pipeline that consistently delivers clean, synchronized data is a non-negotiable step before any serious signal validation begins.

- Implement robust outlier detection and correction mechanisms.

- Adjust historical data for corporate actions like splits and dividends.

- Ensure data synchronization across multiple assets or exchanges.

- Account for varying market hours and holidays when aggregating time-series data.
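
Two of the steps above can be sketched in a few lines: back-adjusting prices for a split, and flagging suspicious prints against a trailing median. The function names, the window length, and the thresholds are assumptions chosen for illustration; a production pipeline would also handle dividends, missing bars, and vendor-specific quirks.

```python
from statistics import median
from typing import List


def back_adjust_for_split(closes: List[float], split_index: int,
                          ratio: float) -> List[float]:
    """Divide every price before `split_index` by `ratio`.

    `ratio` is 2.0 for a 2-for-1 split; `split_index` is the first bar
    that already trades at the post-split price.
    """
    return [c / ratio if i < split_index else c for i, c in enumerate(closes)]


def flag_outliers(closes: List[float], window: int = 5,
                  threshold: float = 5.0,
                  rel_floor: float = 0.001) -> List[bool]:
    """Flag bars that sit far from the median of the previous `window` bars.

    Distance is measured in units of the median absolute deviation (MAD).
    Only past bars are used, so the check itself adds no look-ahead.
    """
    flags: List[bool] = []
    for i, c in enumerate(closes):
        history = closes[max(0, i - window):i]
        if len(history) < window:
            flags.append(False)  # not enough history to judge
            continue
        med = median(history)
        mad = median([abs(h - med) for h in history])
        # Floor the scale so a perfectly flat window does not flag every tick.
        scale = max(mad, rel_floor * abs(med))
        flags.append(abs(c - med) > threshold * scale)
    return flags
```

Flagging is kept separate from correcting on purpose: whether a flagged bar is dropped, clipped, or replaced is a policy decision that should be visible in the pipeline rather than buried in the detector.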

Backtesting Methodologies and Realistic Assumptions

Backtesting trend following signals demands a high degree of realism to avoid overfitting and to produce performance metrics that have a chance of holding up out of sample. Beyond standard historical data simulation, we incorporate slippage models that reflect actual market microstructure, varying with order size, liquidity, and market volatility. Transaction costs, including commissions and exchange fees, must be modeled precisely. Understanding the impact of survivorship bias and look-ahead bias is also critical: the asset universe must be the one that existed at the time the signal was generated, including names that were later delisted, and the code must never use future data points for current decisions. Walk-forward optimization, where parameters are re-optimized on an expanding window of data and then tested on the unseen data that follows, is often employed to assess parameter stability and adaptiveness. This disciplined approach to backtesting gives a more honest assessment of a strategy’s potential before deployment.
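
The walk-forward scheme described above comes down to how the index ranges are cut. The sketch below generates expanding training windows, each followed by a fixed-size test window; `walk_forward_splits` and its parameters are hypothetical names for this illustration.

```python
from typing import List, Tuple


def walk_forward_splits(n_bars: int, initial_train: int,
                        test_size: int) -> List[Tuple[range, range]]:
    """Return (train, test) index ranges for expanding-window walk-forward.

    Every training range starts at bar 0 and ends where its test range
    begins, so parameters are never fitted on data they are tested on.
    """
    if initial_train <= 0 or test_size <= 0:
        raise ValueError("initial_train and test_size must be positive")
    splits: List[Tuple[range, range]] = []
    train_end = initial_train
    while train_end + test_size <= n_bars:
        splits.append((range(0, train_end),
                       range(train_end, train_end + test_size)))
        train_end += test_size  # the tested bars join the next training set
    return splits


# Example: 10 bars, first fit on 4 bars, test 2 bars at a time.
for train, test in walk_forward_splits(10, initial_train=4, test_size=2):
    print(f"fit on bars {train.start}-{train.stop - 1}, "
          f"test on bars {test.start}-{test.stop - 1}")
```

In a real run, each training range would feed a parameter search and each test range would be scored with the chosen parameters only; the stitched-together test segments then form the out-of-sample equity curve.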

Execution Constraints and Their Impact on Trend Strategies

Even the most theoretically sound trend following signal can falter if execution constraints are not thoroughly considered. Market liquidity, especially for larger position sizes or less liquid assets, dictates how much price impact your orders will incur. A signal that is profitable on paper can become unprofitable in practice through the cumulative effect of slippage. The choice of order type (market, limit, or execution algorithms such as TWAP and VWAP) directly influences entry and exit prices. A trend often accelerates, making rapid execution essential, yet market orders can be punitive. Conversely, limit orders risk missing fills entirely. API latency and execution platform reliability also play a significant role; a signal generated by a fast algorithm loses much of its value if the order takes seconds to reach the exchange. Modeling these execution gaps and costs realistically during backtesting is vital for an accurate validation of the signal logic.

- Model slippage dynamically based on average daily volume and bid-ask spread.

- Simulate order execution delays and potential rejections from the broker API.

- Account for varying market conditions that impact order fill rates.

- Consider market impact when trading significant volumes.
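
One way to make slippage depend on liquidity is to charge half the bid-ask spread plus an impact term that grows with the order’s share of average daily volume. The sketch below uses a square-root impact term, which is a common stylized assumption and not something calibrated here; the `impact_coeff` default and all function names are hypothetical and would need fitting to your own fill data.

```python
import math


def estimated_slippage_bps(order_shares: float, adv_shares: float,
                           spread_bps: float, daily_vol_bps: float,
                           impact_coeff: float = 0.5) -> float:
    """Estimate one-way slippage in basis points.

    cost = half spread + impact_coeff * daily volatility * sqrt(participation)
    where participation = order size / average daily volume (ADV).
    """
    if adv_shares <= 0:
        raise ValueError("adv_shares must be positive")
    participation = order_shares / adv_shares
    half_spread = spread_bps / 2.0
    impact = impact_coeff * daily_vol_bps * math.sqrt(participation)
    return half_spread + impact


def fill_price(mid_price: float, side: int, slippage_bps: float) -> float:
    """Apply slippage against the trader: side is +1 for buys, -1 for sells."""
    return mid_price * (1 + side * slippage_bps / 10_000)


# Example: 10,000 shares in a name trading 1,000,000 shares a day,
# 4 bps quoted spread, 200 bps daily volatility.
cost = estimated_slippage_bps(10_000, 1_000_000, spread_bps=4.0,
                              daily_vol_bps=200.0)
print(cost, fill_price(100.0, +1, cost))
```

Because the cost rises with participation, the same signal can pass validation at small size and fail at the size you actually intend to trade, so the backtest should be run at realistic order sizes.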

Integrating Robust Risk Management Logic

Risk management for trend following strategies isn’t an afterthought; it must be an intrinsic part of the signal validation process. Each trend signal needs associated risk parameters: a clear stop-loss, a trailing stop, or a profit-taking mechanism. These aren’t just ‘good practices’; they are integral parts of defining the strategy’s P&L profile. For example, a signal might be validated as profitable, but if its maximum drawdown is unacceptable, it’s not a viable strategy. Position sizing algorithms, such as fixed fractional or volatility-adjusted sizing, should also be integrated into the backtesting framework to simulate realistic capital allocation. We often build in circuit breakers for individual positions, sectors, or the entire portfolio, triggering exits if specific downside thresholds are breached, regardless of the underlying trend signal. This multi-layered approach keeps the validated signals operating within predefined risk tolerances and limits losses during unexpected market reversals or black swan events.
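
To illustrate how sizing and stops tie together, the sketch below combines a volatility-based stop with fixed fractional sizing: the stop sits a multiple of the average true range (ATR) away from entry, and the position is sized so that hitting the stop loses a fixed fraction of equity. The 2x ATR multiple, the 1% risk fraction, and the function names are assumptions for this example only, and gaps through the stop are ignored.

```python
import math


def atr_stop(entry_price: float, atr: float, side: int,
             multiple: float = 2.0) -> float:
    """Stop level `multiple` ATRs away from entry, against the position.

    side is +1 for a long (stop below entry) and -1 for a short (stop above).
    """
    return entry_price - side * multiple * atr


def position_size(equity: float, risk_fraction: float,
                  entry_price: float, stop_price: float) -> int:
    """Whole units to trade so a stop-out loses about `risk_fraction` of equity."""
    risk_per_unit = abs(entry_price - stop_price)
    if risk_per_unit == 0:
        raise ValueError("stop_price must differ from entry_price")
    return math.floor(equity * risk_fraction / risk_per_unit)


# Example: long at 50.0 with ATR 1.5, risking 1% of 100,000.
stop = atr_stop(50.0, atr=1.5, side=+1)           # 47.0
units = position_size(100_000, 0.01, 50.0, stop)  # 333
print(stop, units)
```

Sizing this way means more volatile instruments automatically get smaller positions, which is why the sizing rule belongs inside the backtest rather than being applied afterwards.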

Performance Evaluation Beyond Simple Returns

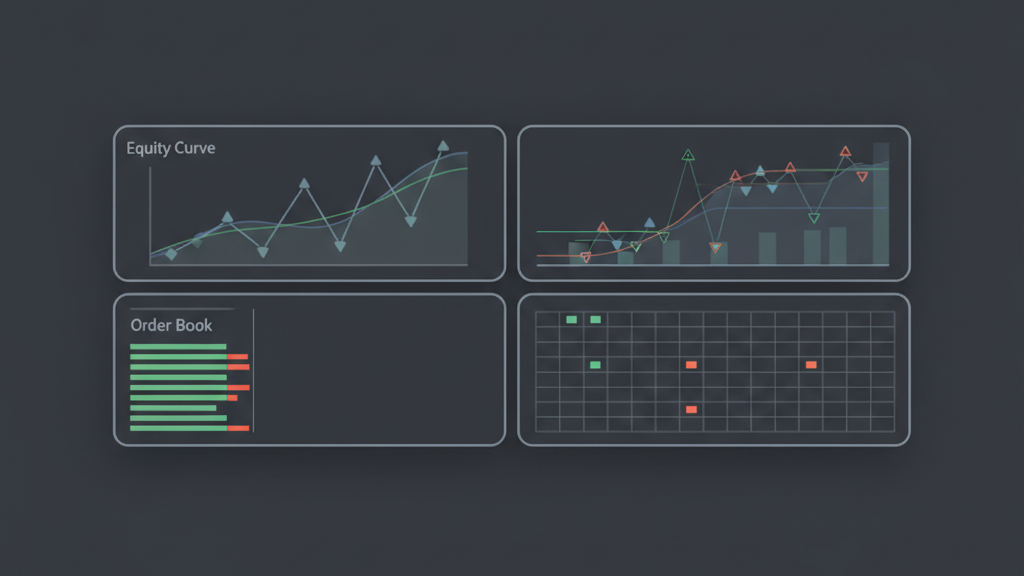

Evaluating the performance of a trend following strategy requires a nuanced approach that extends far beyond simple total returns. While net profit is important, metrics like maximum drawdown, Sharpe ratio, Sortino ratio, Calmar ratio, and win rate provide deeper insights into risk-adjusted performance and strategy robustness. Critically, we examine the consistency of returns across different market regimes (bull, bear, sideways) and analyze the distribution of trade outcomes to understand the ‘fatness’ of the tails. Did the strategy rely on a few big winners, or was it consistently profitable? We also conduct robustness checks, like Monte Carlo simulations, to understand how sensitive the strategy’s performance is to minor changes in parameters or market sequences. This comprehensive performance review is essential for truly validating trend following signal logic, ensuring the strategy holds up under various future scenarios and isn’t just a curve-fitted artifact of historical data.

- Analyze strategy performance across different market regimes (e.g., high volatility vs. low volatility).

- Conduct sensitivity analysis on key parameters to assess robustness.

- Evaluate the average holding period and its impact on transaction costs.

- Compare strategy performance against relevant benchmarks, including a buy-and-hold approach.
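
Several of the metrics discussed in this section are short enough to compute directly. The sketch below covers maximum drawdown, an annualized Sharpe ratio, win rate, and the share of gross profit contributed by the largest winners; the function names and the 252-period annualization are assumptions suited to daily data.

```python
import math
from statistics import mean, stdev
from typing import List


def max_drawdown(equity: List[float]) -> float:
    """Largest peak-to-trough decline, as a fraction of the running peak."""
    peak, worst = equity[0], 0.0
    for value in equity:
        peak = max(peak, value)
        worst = max(worst, (peak - value) / peak)
    return worst


def sharpe_ratio(returns: List[float], periods_per_year: int = 252,
                 risk_free_per_period: float = 0.0) -> float:
    """Annualized Sharpe ratio from per-period returns (NaN if no variance)."""
    excess = [r - risk_free_per_period for r in returns]
    sd = stdev(excess)
    if sd == 0:
        return float("nan")
    return mean(excess) / sd * math.sqrt(periods_per_year)


def win_rate(trade_pnls: List[float]) -> float:
    """Fraction of trades that closed with a positive P&L."""
    return sum(1 for p in trade_pnls if p > 0) / len(trade_pnls)


def top_winner_share(trade_pnls: List[float], n: int = 1) -> float:
    """Share of gross profit that came from the `n` largest winning trades."""
    winners = sorted((p for p in trade_pnls if p > 0), reverse=True)
    gross = sum(winners)
    return sum(winners[:n]) / gross if gross > 0 else 0.0
```

A low win rate combined with a high `top_winner_share` is typical of trend following, where a handful of long trends pay for many small losses; it also means results are sensitive to missing one or two of those trades, which the raw return number hides.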

Iterative Development and Continuous Monitoring

Validating trend following signal logic is not a one-time event; it’s an iterative development cycle supported by continuous monitoring. Even after successful backtesting and initial deployment, market dynamics evolve, and signal efficacy can decay. We implement live monitoring systems that track not just P&L, but also key performance indicators, slippage metrics, fill rates, and individual signal performance in real time. Deviations from expected behavior trigger alerts for immediate investigation. Post-deployment analysis might reveal new insights, leading to refinements in signal generation, risk management, or execution logic. This feedback loop between live performance and development is crucial. It lets the strategy adapt, so the trend following signals remain effective as markets change.
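
A simple form of the deviation check described above compares the average of recent live measurements with the value assumed in the backtest and raises an alert when the gap exceeds a relative tolerance. The metric names, the 25% tolerance, and the `drift_alerts` function are hypothetical; a real system would likely use rolling windows and statistical tests rather than a fixed threshold.

```python
from statistics import mean
from typing import Dict, List, Tuple


def drift_alerts(live_metrics: Dict[str, List[float]],
                 expected: Dict[str, float],
                 rel_tolerance: float = 0.25) -> List[Tuple[str, float, float]]:
    """Return (metric, live_mean, expected) for metrics that drifted too far.

    A metric is flagged when |live_mean - expected| / |expected| exceeds
    `rel_tolerance`. Metrics with no samples or no baseline are skipped.
    """
    alerts: List[Tuple[str, float, float]] = []
    for name, samples in live_metrics.items():
        if not samples or name not in expected:
            continue
        baseline = expected[name]
        if baseline == 0:
            continue  # a relative deviation is undefined for a zero baseline
        live = mean(samples)
        if abs(live - baseline) / abs(baseline) > rel_tolerance:
            alerts.append((name, live, baseline))
    return alerts


# Example: live slippage runs well above the backtest assumption.
live = {"slippage_bps": [12.0, 18.0, 15.0], "fill_rate": [0.95, 0.97]}
assumed = {"slippage_bps": 10.0, "fill_rate": 0.96}
print(drift_alerts(live, assumed))
```

Keeping the backtest assumptions in one place, as `assumed` does here, makes it explicit which numbers the live system is being held to and which ones need revisiting when an alert fires.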