Developing profitable algorithmic trading strategies requires more than identifying historical patterns; it demands rigorous validation to show that those patterns are genuinely predictive rather than artifacts of the past. Without proper testing, strategies often underperform or fail completely in live markets, the classic outcome of ‘curve-fitting’ to historical data. This article covers two essential methods of robust backtesting, out-of-sample testing and walk forward optimization, and explores how they go beyond simple historical simulation to give a more reliable assessment of a strategy’s viability and adaptability before it is deployed in a live trading environment. Understanding these concepts matters for any serious algo trader looking to bridge the gap between backtested results and real-world execution.

The Inherent Problem of Overfitting in Algorithmic Strategies

One of the most persistent and insidious challenges in quantitative trading is overfitting. This occurs when a strategy’s parameters are tuned too precisely to a specific historical dataset, capturing noise and random fluctuations rather than genuine, repeatable market dynamics. A standard backtest, run once over a single historical period, produces one equity curve that can look very promising while masking a lack of generalizability. The temptation to tweak rules, add filters, or adjust indicators until the equity curve is smooth and profitable is a powerful one, and every such iteration on the same data adds to what is often termed ‘data snooping bias’: the more variants you try, the more likely the best one looks good by chance alone. Such a strategy might yield impressive hypothetical returns on the tested data, but its performance typically deteriorates sharply when exposed to new, unseen market conditions, making it unsuitable for real-world deployment. Recognizing this fundamental limitation is the first step toward building genuinely robust trading systems.
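To make the data snooping effect concrete, here is a minimal sketch using only the Python standard library. It assumes a purely illustrative setup: 200 "strategies" whose daily returns are random noise with no edge at all. The function names (`sharpe`, `snooping_demo`) and all numbers are hypothetical and not taken from any particular backtesting framework.

```python
import random
import statistics


def sharpe(returns, periods_per_year=252):
    """Annualized Sharpe ratio, assuming a zero risk-free rate."""
    sd = statistics.stdev(returns)
    if sd == 0:
        return 0.0
    return statistics.mean(returns) / sd * periods_per_year ** 0.5


def snooping_demo(n_strategies=200, n_days=500, seed=7):
    """Select the best of many noise strategies in-sample, then score the
    same strategy on fresh noise it was not selected on."""
    rng = random.Random(seed)
    best_in, best_out = float("-inf"), None
    for _ in range(n_strategies):
        # Each "strategy" is pure noise: zero true edge by construction.
        in_sample = [rng.gauss(0.0, 0.01) for _ in range(n_days)]
        out_sample = [rng.gauss(0.0, 0.01) for _ in range(n_days)]
        s_in = sharpe(in_sample)
        if s_in > best_in:
            best_in, best_out = s_in, sharpe(out_sample)
    return best_in, best_out


best_in, best_out = snooping_demo()
print(f"best in-sample Sharpe: {best_in:.2f}")
print(f"same strategy on unseen data: {best_out:.2f}")
```

Because the winner is chosen from many candidates, its in-sample Sharpe ratio is inflated by selection alone, while its score on unseen data is just another draw from a distribution centered on zero.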

Foundational Principles: The Role of Out-of-Sample Testing

Out-of-sample testing forms the bedrock of any credible strategy validation. The core idea is simple yet profound: you develop and optimize your trading logic using one segment of historical data (the ‘in-sample’ period) and then test its performance on an entirely separate, previously unseen segment of data (the ‘out-of-sample’ period). This strict separation prevents your optimization process from inadvertently learning the quirks of the data it will later be tested on. If a strategy performs well in the in-sample period but then crumbles in the out-of-sample period, it’s a strong indicator of overfitting. The out-of-sample period acts as a proxy for future market conditions, offering a more realistic gauge of how the strategy might perform when deployed live. Critically, the out-of-sample data must remain untouched throughout the entire development and optimization cycle, acting as the ultimate, unbiased arbiter of performance.

- Strictly partition data chronologically into distinct in-sample (training) and out-of-sample (test) periods, with the out-of-sample period following the in-sample period.

- Optimize strategy parameters exclusively on the in-sample data.

- Run a single, final test on the untouched out-of-sample data.

- A significant performance degradation in out-of-sample data suggests overfitting.
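The steps above can be sketched as follows. This is a toy illustration under stated assumptions, not a production workflow: the price series is synthetic, the moving-average rule and the helper names (`strategy_returns`, `split_chronologically`, `optimize_in_sample`) are hypothetical, and total return stands in for whatever objective you actually optimize.

```python
import random


def strategy_returns(prices, lookback):
    """Daily returns of a toy rule: hold long on day t+1 if price[t] is above
    its `lookback`-day simple moving average, otherwise stay flat."""
    rets = []
    for t in range(lookback, len(prices) - 1):
        sma = sum(prices[t - lookback + 1 : t + 1]) / lookback
        position = 1.0 if prices[t] > sma else 0.0  # uses data up to t only
        rets.append(position * (prices[t + 1] / prices[t] - 1.0))
    return rets


def total_return(rets):
    """Compounded return of a sequence of periodic returns."""
    growth = 1.0
    for r in rets:
        growth *= 1.0 + r
    return growth - 1.0


def split_chronologically(prices, in_sample_fraction=0.7):
    """Earlier data becomes in-sample, later data out-of-sample; no shuffling."""
    cut = int(len(prices) * in_sample_fraction)
    return prices[:cut], prices[cut:]


def optimize_in_sample(in_sample, candidate_lookbacks):
    """Pick the lookback with the best total return on in-sample data only."""
    return max(
        candidate_lookbacks,
        key=lambda lb: total_return(strategy_returns(in_sample, lb)),
    )


# Synthetic random-walk prices, used purely for illustration.
rng = random.Random(42)
prices = [100.0]
for _ in range(999):
    prices.append(prices[-1] * (1.0 + rng.gauss(0.0002, 0.01)))

candidate_lookbacks = [5, 10, 20, 50, 100]
in_sample, out_of_sample = split_chronologically(prices)
best_lookback = optimize_in_sample(in_sample, candidate_lookbacks)

is_result = total_return(strategy_returns(in_sample, best_lookback))
# The out-of-sample data is touched exactly once, after optimization is done.
oos_result = total_return(strategy_returns(out_of_sample, best_lookback))
print(f"lookback={best_lookback} in-sample={is_result:.2%} out-of-sample={oos_result:.2%}")
```

Note that the out-of-sample segment computes its own indicator warm-up from out-of-sample prices, so nothing from the test period leaks back into the optimization.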

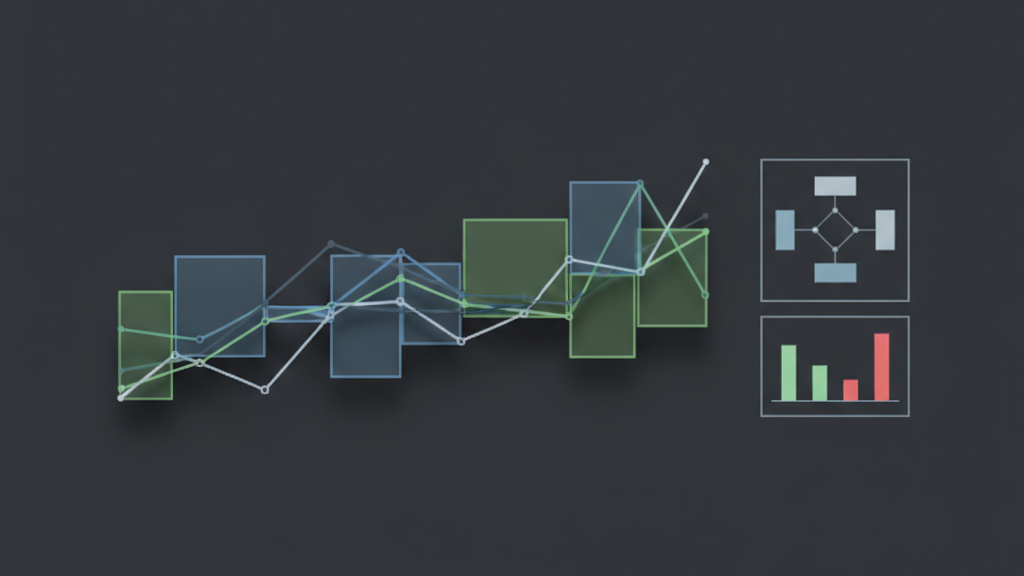

Advancing Validation with Walk Forward Optimization

While simple out-of-sample testing is crucial, walk forward optimization takes the concept further by simulating a dynamic trading environment. Instead of a single train/test split, walk forward iteratively optimizes parameters over a defined ‘in-sample’ window, then applies those optimized parameters to an immediately succeeding ‘out-of-sample’ window. After this validation step, both windows are advanced (or ‘walked forward’) in time and the process repeats: new parameters are found on the new in-sample window and tested on the new out-of-sample window. This methodology provides a much more robust assessment of a strategy’s long-term adaptability and parameter stability. It answers the critical question: how often do optimal parameters shift, and how well does the strategy perform when re-optimized regularly, mimicking a real-world maintenance schedule? Walk forward analysis often reveals that parameters which were optimal for one market regime might be suboptimal, or even detrimental, in another.

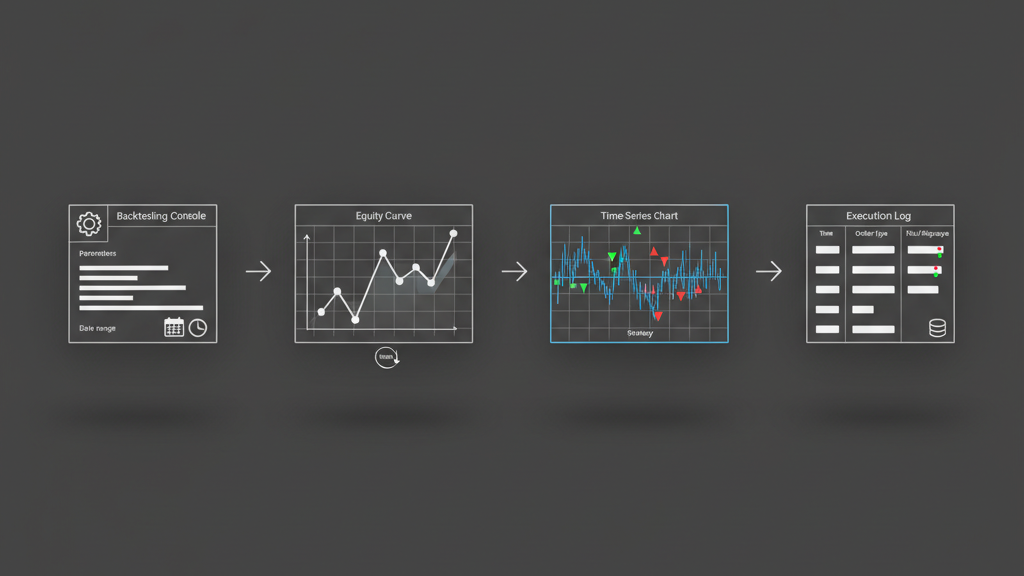
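The optimize, test, and advance cycle described in this section can be sketched as a short loop. This is a minimal illustration, assuming a toy moving-average objective (`momentum_pnl`), synthetic prices, and a step equal to the out-of-sample window size; all names and window sizes are hypothetical.

```python
import random


def momentum_pnl(prices, lookback, begin, end):
    """Sum of next-day returns earned within [begin, end) while price is above
    its `lookback`-day SMA. Indicator warm-up may read prices before `begin`;
    that is past data, so it introduces no look-ahead."""
    pnl = 0.0
    for t in range(max(begin, lookback - 1), end - 1):
        sma = sum(prices[t - lookback + 1 : t + 1]) / lookback
        if prices[t] > sma:
            pnl += prices[t + 1] / prices[t] - 1.0
    return pnl


def walk_forward(prices, candidates, in_sample_size, out_sample_size):
    """Re-optimize on each in-sample window, then score the chosen parameter
    on the window that immediately follows it."""
    results = []
    start = 0
    while start + in_sample_size + out_sample_size <= len(prices):
        split = start + in_sample_size
        oos_end = split + out_sample_size
        # Optimization sees prices[start:split] only.
        best = max(candidates, key=lambda lb: momentum_pnl(prices, lb, start, split))
        results.append({
            "in_sample": (start, split),
            "out_of_sample": (split, oos_end),
            "param": best,
            "oos_pnl": momentum_pnl(prices, best, split, oos_end),
        })
        start += out_sample_size  # walk both windows forward
    return results


# Synthetic random-walk prices, used purely for illustration.
rng = random.Random(1)
prices = [100.0]
for _ in range(999):
    prices.append(prices[-1] * (1.0 + rng.gauss(0.0002, 0.01)))

candidates = [5, 10, 20, 50]
results = walk_forward(prices, candidates, in_sample_size=500, out_sample_size=100)
for r in results:
    print(r["out_of_sample"], "lookback:", r["param"], f"oos pnl: {r['oos_pnl']:+.4f}")
```

Watching how `param` changes from one iteration to the next gives a first impression of parameter stability across regimes.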

Implementing Walk Forward: Practical Architecture and Configuration

Implementing walk forward optimization in a backtesting engine requires careful architectural design. You’ll need a mechanism to define sliding windows for both in-sample optimization and out-of-sample testing. Key parameters include the ‘in-sample window size’ (how much data is used for optimization), the ‘out-of-sample window size’ (how much data is used for testing), and the ‘walk forward step’ (how far the windows advance after each iteration). A common setup might involve a 2-year optimization window, followed by a 3-month test window, advancing by 1 month each step. Note that when the step is shorter than the test window, as in this example, consecutive out-of-sample segments overlap and cannot simply be concatenated into one equity curve; setting the step equal to the test window keeps them contiguous and non-overlapping. The iterative process demands significant computational resources, especially if the optimization involves a broad parameter space or complex objective functions. Efficient data loading, parallel processing for optimization runs, and robust logging of results for each segment are critical to manage the workload and derive meaningful insights. Errors in windowing or data management can easily invalidate the entire walk forward analysis, providing a false sense of security.

- Define ‘in-sample window size’ for parameter optimization.

- Specify ‘out-of-sample window size’ for testing those parameters.

- Set the ‘walk forward step’ (e.g., daily, weekly, monthly) for window advancement.

- Use efficient data handling and parallelize optimization runs to cope with the computational load.

- Log all optimization parameters and test results for each iteration.

- Be wary of look-ahead bias in data feeds or indicator calculations across windows.
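The configuration items above can be captured in a small, validated structure. The sketch below is one possible layout, not a reference design: `WalkForwardConfig`, `generate_windows`, and `oos_windows_overlap` are hypothetical names, and the bar counts assume roughly 252 trading days per year and 21 per month.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WalkForwardConfig:
    in_sample_size: int   # bars used for optimization
    out_sample_size: int  # bars used for testing
    step: int             # bars the windows advance per iteration

    def __post_init__(self):
        if min(self.in_sample_size, self.out_sample_size, self.step) <= 0:
            raise ValueError("window sizes and step must be positive")


def generate_windows(n_bars, cfg):
    """Yield (is_start, is_end, oos_start, oos_end) as half-open index ranges.
    The out-of-sample range always starts where the in-sample range ends, so
    optimization never sees the bars it is later tested on."""
    start = 0
    while start + cfg.in_sample_size + cfg.out_sample_size <= n_bars:
        is_end = start + cfg.in_sample_size
        yield (start, is_end, is_end, is_end + cfg.out_sample_size)
        start += cfg.step


def oos_windows_overlap(cfg):
    """True when consecutive out-of-sample windows share bars."""
    return cfg.step < cfg.out_sample_size


# About 5 years of daily bars; 2-year in-sample, 3-month out-of-sample.
monthly_step = WalkForwardConfig(in_sample_size=504, out_sample_size=63, step=21)
quarterly_step = WalkForwardConfig(in_sample_size=504, out_sample_size=63, step=63)

for name, cfg in [("1-month step", monthly_step), ("3-month step", quarterly_step)]:
    windows = list(generate_windows(1260, cfg))
    print(name, "->", len(windows), "iterations, overlapping OOS:", oos_windows_overlap(cfg))
```

Keeping window generation separate from the optimizer makes it easy to log each tuple alongside the parameters and results of that iteration, and to unit test the windowing on its own.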

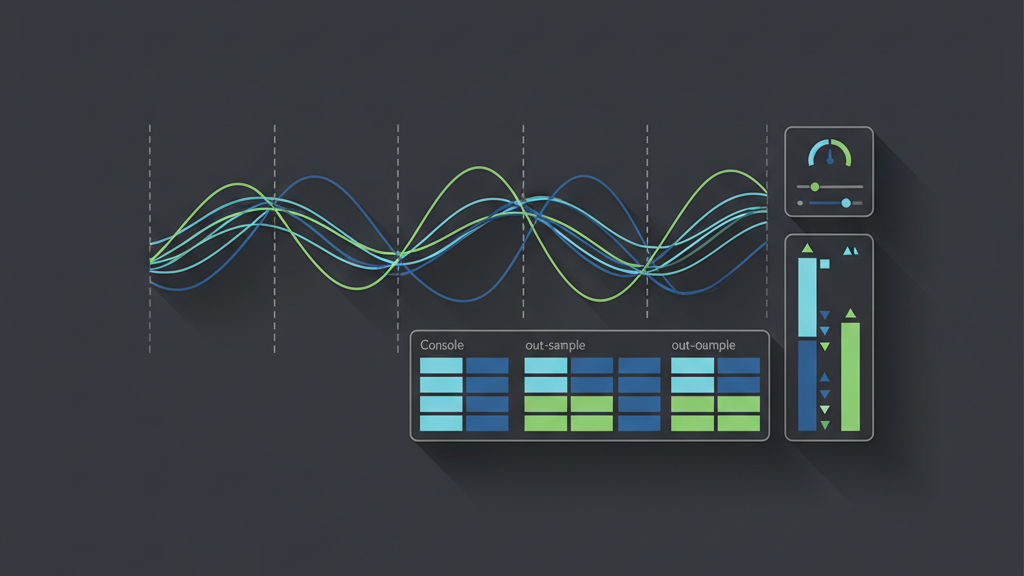

Interpreting Results: Beyond the Single Equity Curve

The output of a robust backtesting process, especially walk forward, extends far beyond a single, aggregated equity curve. You’ll generate a series of equity curves, one for each out-of-sample segment. The true ‘robustness’ of your strategy is not found in the highest Sharpe ratio, but in the consistency of performance metrics across these segments. Look for strategies that maintain acceptable, albeit not necessarily optimal, performance across different market regimes revealed by your walk forward steps. Key metrics to monitor across iterations include the consistency of daily returns, maximum drawdown, Sharpe ratio, profit factor, and win rate. Significant dips in these metrics during certain out-of-sample periods could signal a lack of adaptability or hidden dependencies on specific market conditions. A successful walk forward should show a reasonably smooth ‘equity curve of equity curves,’ demonstrating that the strategy’s edge persists even when its parameters are periodically re-tuned on fresh data.
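A per-segment report along these lines might look like the sketch below. It assumes each out-of-sample segment is available as a list of daily returns; the segment data is made up for illustration, the function names are hypothetical, and win rate and profit factor are computed per day rather than per trade for simplicity.

```python
import statistics


def sharpe_ratio(returns, periods_per_year=252):
    """Annualized Sharpe ratio, assuming a zero risk-free rate."""
    sd = statistics.stdev(returns)
    return 0.0 if sd == 0 else statistics.mean(returns) / sd * periods_per_year ** 0.5


def max_drawdown(returns):
    """Largest peak-to-trough decline of the compounded equity curve,
    expressed as a positive fraction."""
    equity, peak, worst = 1.0, 1.0, 0.0
    for r in returns:
        equity *= 1.0 + r
        peak = max(peak, equity)
        worst = max(worst, 1.0 - equity / peak)
    return worst


def profit_factor(returns):
    """Sum of gains divided by sum of losses (infinite if there are no losses)."""
    gains = sum(r for r in returns if r > 0)
    losses = -sum(r for r in returns if r < 0)
    return float("inf") if losses == 0 else gains / losses


def win_rate(returns):
    """Share of periods with a strictly positive return."""
    return sum(1 for r in returns if r > 0) / len(returns)


def segment_report(segments):
    """Per-segment metrics plus a summary focused on consistency."""
    rows = [
        {
            "sharpe": sharpe_ratio(seg),
            "max_drawdown": max_drawdown(seg),
            "profit_factor": profit_factor(seg),
            "win_rate": win_rate(seg),
        }
        for seg in segments
    ]
    sharpes = [row["sharpe"] for row in rows]
    summary = {
        "share_profitable": sum(1 for seg in segments if sum(seg) > 0) / len(segments),
        "worst_sharpe": min(sharpes),
        "sharpe_stdev": statistics.stdev(sharpes) if len(sharpes) > 1 else 0.0,
        "worst_drawdown": max(row["max_drawdown"] for row in rows),
    }
    return rows, summary


# Made-up daily returns for three out-of-sample segments.
segments = [
    [0.01, -0.005, 0.02, 0.0, 0.01],
    [-0.01, -0.02, 0.005, 0.01, -0.005],
    [0.0, 0.01, 0.01, -0.01, 0.005],
]
rows, summary = segment_report(segments)
print(summary)
```

The summary deliberately reports the worst segment and the dispersion of Sharpe ratios rather than the best result, since consistency is what is being judged.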

Transitioning from Robust Backtesting to Live Execution

Once a strategy has demonstrated robustness through extensive walk forward and out-of-sample testing, the transition to live execution requires a methodical approach. The parameters used for live trading should typically be those optimized during the most recent walk forward optimization window. However, backtested robustness does not guarantee future performance; market conditions can change in unforeseen ways. Therefore, a crucial step is to run the strategy in a simulated live environment, often called ‘paper trading’ or ‘shadow trading,’ to observe its behavior with real-time data and actual API latency, with execution slippage and brokerage fees modeled as realistically as possible, since simulated fills only approximate real ones. This phase helps validate the practical aspects of the execution engine and confirms that the strategy’s real-world behavior aligns with its backtested expectations. Continuous monitoring of live performance against the backtested benchmarks, coupled with regular re-optimization schedules based on the walk forward methodology, becomes indispensable for long-term viability.

- Deploy with parameters from the latest walk forward optimization window.

- Conduct extensive paper trading or shadow trading to validate execution flow and latency.

- Implement real-time performance monitoring against backtested expectations.

- Establish a schedule for regular re-optimization based on the walk forward methodology.

- Account for real-world execution costs like slippage and commissions during live testing.

- Integrate circuit breakers and risk management overrides for unexpected market behavior.
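As one illustration of the monitoring and circuit breaker items above, the sketch below halts trading when the live drawdown exceeds a multiple of the worst drawdown seen in backtesting. The class name, the 1.5x tolerance, and the cost figures are all hypothetical assumptions chosen for the example; real systems usually combine several such checks.

```python
from dataclasses import dataclass


def net_return(gross_return, slippage, commission):
    """Return after execution costs, all expressed as fractions of capital."""
    return gross_return - slippage - commission


@dataclass
class DrawdownCircuitBreaker:
    backtest_max_drawdown: float  # e.g. 0.10 for a 10% backtested drawdown
    tolerance: float = 1.5        # allowed multiple before halting (assumption)
    equity: float = 1.0
    peak: float = 1.0
    halted: bool = False

    def update(self, period_return):
        """Feed one live return net of costs. Returns True while trading may
        continue; once halted, it stays halted until reset by a human."""
        if self.halted:
            return False
        self.equity *= 1.0 + period_return
        self.peak = max(self.peak, self.equity)
        drawdown = 1.0 - self.equity / self.peak
        if drawdown > self.backtest_max_drawdown * self.tolerance:
            self.halted = True
        return not self.halted


breaker = DrawdownCircuitBreaker(backtest_max_drawdown=0.10)
live_returns = [0.02, -0.05, -0.05, -0.08, 0.03]  # made-up net returns
status = [breaker.update(r) for r in live_returns]
print(status)

# Costs reduce a gross return before it reaches the breaker.
print(net_return(0.004, slippage=0.0005, commission=0.0002))
```

Here the fourth return pushes the drawdown from the equity peak past 15%, so the breaker trips and ignores everything after it.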