In algorithmic trading, microseconds translate directly into profit or loss. An effective latency budgeting strategy is not merely an optimization exercise; it’s a fundamental requirement for competitive performance. This article covers the practical aspects of defining, measuring, and optimizing latency across the entire execution pipeline of an order management system (OMS). We’ll identify the components that contribute to end-to-end latency, explore techniques for precise measurement, and discuss how to allocate a time budget to each stage so that orders are executed with minimal delay. Understanding and controlling these latencies matters for any high-frequency, or even medium-frequency, strategy seeking an edge in dynamic markets.

The Imperative of Latency Budgeting in Algorithmic Trading

In high-frequency and even medium-frequency trading, the difference between a profitable trade and a missed opportunity often comes down to a few microseconds. Latency budgeting for execution pipelines isn’t a theoretical exercise; it’s an engineering discipline aimed at ensuring our order management systems act on signals faster and more reliably than competitors. Every component, from strategy logic to network hops and exchange matching engines, introduces delay. Without a defined latency budget, development teams easily lose sight of performance targets, and the result is an opaque system whose bottlenecks are discovered only during live trading, often at significant cost. A budget forces granular analysis: we quantify the acceptable delay for each step, set firm thresholds, and build systems to meet them. That lets us identify and mitigate, ahead of time, the places where processing time would impede the strategy, with direct consequences for fill rates and slippage.

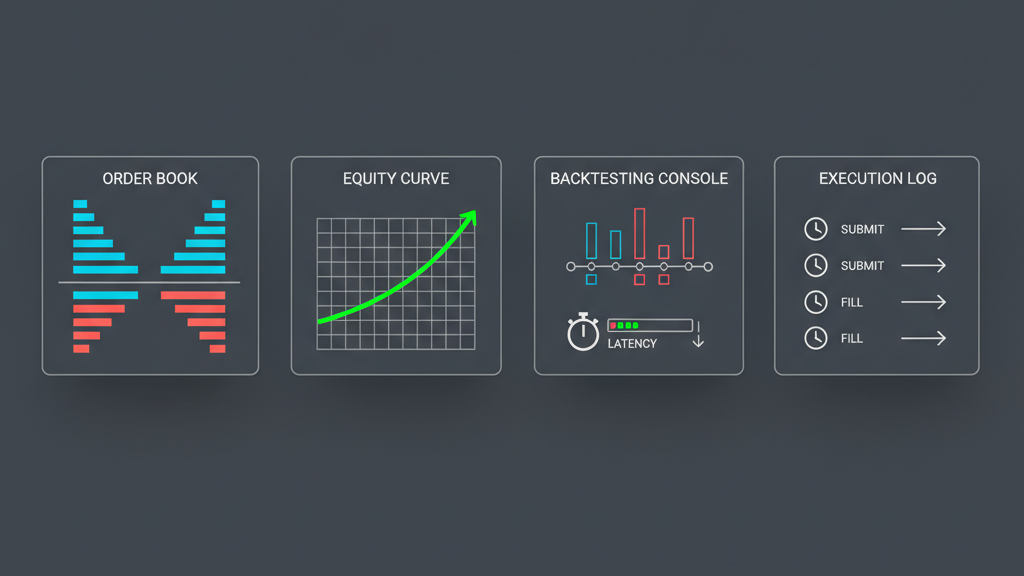

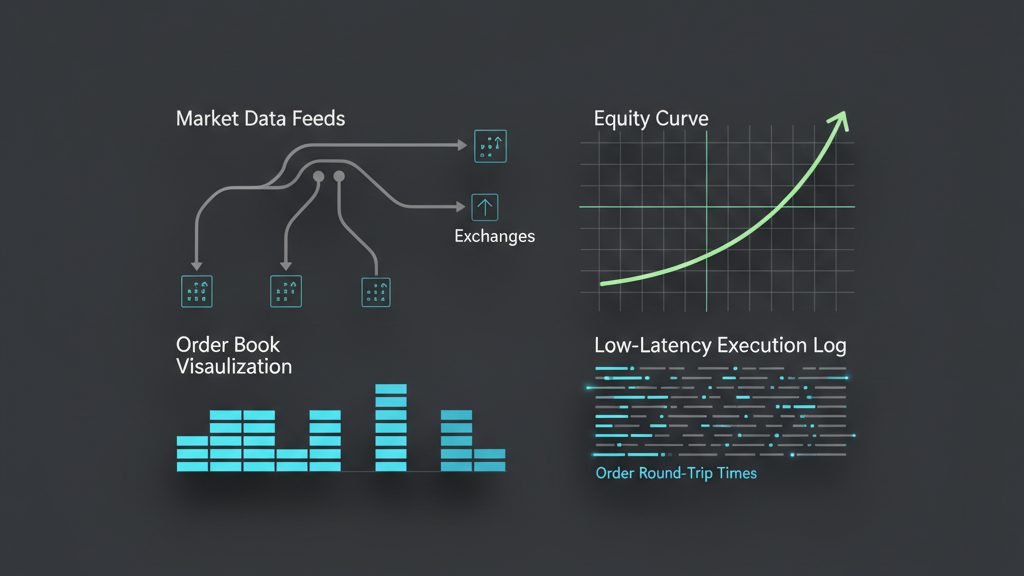

Deconstructing the Execution Pipeline for Latency Analysis

To implement latency budgeting for execution pipelines, we first need a clear, granular understanding of every stage an order traverses. The pipeline typically begins when a trading signal is generated and ends when a fill confirmation, or a rejection, is received from the exchange. Each stage is a potential source of delay, and these delays accumulate to form the total end-to-end latency. A typical order management system breaks down into several key components, each with its own processing overhead that must be accounted for. Mapping the critical path from signal generation to final acknowledgment tells us where to place timestamps and where to focus optimization.

- Signal Generation & Strategy Decisioning: Time taken to process market data and generate an order intent.

- Order Construction & Normalization: Building the exchange-specific order message from the internal order intent.

- Pre-Trade Risk & Compliance Checks: Verifying order parameters against defined limits (e.g., position limits, capital checks, fat-finger detection).

- Order Routing & Connectivity: Sending the order to the appropriate exchange gateway or broker via network infrastructure.

- Exchange Processing & Matching: The time taken by the exchange to receive, process, and potentially match the order.

- Fill Processing & Acknowledgment: Receiving and processing fill messages, updating internal state, and notifying the strategy.
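
As a minimal sketch of how per-stage delays accumulate into end-to-end latency, assume one timestamp is captured at the start of each of the six stages above, plus one more when the fill or rejection has been processed. The `Stage` names, the function names, and the idea of passing the boundaries as a flat list are illustrative assumptions, not part of any particular OMS.

```python
from enum import IntEnum


class Stage(IntEnum):
    """Pipeline stages, in the order an order traverses them."""
    SIGNAL_DECISION = 0
    ORDER_CONSTRUCTION = 1
    PRE_TRADE_RISK = 2
    ROUTING = 3
    EXCHANGE_PROCESSING = 4
    FILL_PROCESSING = 5


def stage_latencies_ns(boundaries_ns):
    """Turn stage-boundary timestamps into per-stage latencies.

    boundaries_ns[i] is when stage i started; the final element is when
    the last stage finished, so len(Stage) + 1 timestamps are required.
    """
    expected = len(Stage) + 1
    if len(boundaries_ns) != expected:
        raise ValueError(f"expected {expected} timestamps, got {len(boundaries_ns)}")
    latencies = {}
    for stage in Stage:
        delta = boundaries_ns[stage + 1] - boundaries_ns[stage]
        if delta < 0:
            # Usually a sign of unsynchronized clocks between components.
            raise ValueError(f"timestamps go backwards in stage {stage.name}")
        latencies[stage] = delta
    return latencies


def end_to_end_ns(latencies):
    """End-to-end latency is the sum of the per-stage latencies."""
    return sum(latencies.values())
```

Calling `stage_latencies_ns` on the seven timestamps of a single order gives a dictionary keyed by stage, and `end_to_end_ns` sums it. A negative delta is rejected rather than silently clamped, since it typically means the timestamps came from clocks that disagree.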

Practical Latency Measurement and Profiling Techniques

Merely understanding the pipeline stages isn’t enough; we need concrete data. Practical latency budgeting demands robust measurement and profiling tools. This means instrumenting every critical path with high-resolution timestamps, often at nanosecond resolution using hardware timestamping on NICs whose clocks are synchronized via PTP, especially for high-frequency setups. Software-based timing is easier to implement but is exposed to OS jitter and context-switching overhead, which makes it less precise for critical-path analysis. We commonly use custom logging frameworks that capture timestamps at the entry and exit points of functions, at inter-process communication boundaries, and around network I/O operations. Post-processing these logs yields the latency of each segment and, by summation, of the whole path. Tools like `perf` and `bcc`, along with custom profiling agents integrated into the codebase, help identify CPU hotspots, memory access patterns, and contention points that contribute to unexpected delays. Consistent clock synchronization across all components of a distributed trading system is non-negotiable; without it, end-to-end latency figures computed across hosts are meaningless.
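
The entry/exit timestamping pattern can be sketched in a few lines. This is software timing with Python’s `time.perf_counter_ns`, so it is subject to exactly the jitter described above and only illustrates the pattern, not a production-grade probe. The `LatencyRecorder` class, its `span` method, and the nearest-rank percentile are assumptions made for this example.

```python
import math
import time
from collections import defaultdict
from contextlib import contextmanager


class LatencyRecorder:
    """Collects elapsed-time samples (ns) for named code segments."""

    def __init__(self, clock=time.perf_counter_ns):
        # The clock is injectable so tests can supply deterministic values.
        self._clock = clock
        self.samples = defaultdict(list)

    @contextmanager
    def span(self, name):
        """Timestamp on entry and exit; record the difference under `name`."""
        start = self._clock()
        try:
            yield
        finally:
            self.samples[name].append(self._clock() - start)

    def percentile(self, name, pct):
        """Nearest-rank percentile of the recorded samples for `name`."""
        data = sorted(self.samples[name])
        if not data:
            raise ValueError(f"no samples recorded for {name!r}")
        rank = max(1, math.ceil(pct / 100 * len(data)))
        return data[rank - 1]


# Usage: wrap a segment of the critical path, then inspect the tail.
recorder = LatencyRecorder()
for _ in range(1000):
    with recorder.span("risk_check"):
        sum(range(50))  # stand-in for the real work
p99_ns = recorder.percentile("risk_check", 99)
```

Looking at a high percentile rather than the mean is a deliberate choice here: a budget that only the average case meets still fails on the slow orders.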

Budget Allocation and Optimization Strategies for Minimal Latency

Once we have a clear picture of measured latencies, the next step in latency budgeting for execution pipelines is to allocate a specific time budget to each segment and then optimize to meet those targets. This often involves making difficult trade-offs. For instance, dedicating more CPU cores to a specific risk check module might reduce its latency but could impact overall system throughput if not carefully managed. Optimization strategies are diverse, ranging from hardware-level tweaks to software architecture choices. It’s a continuous process of iterative improvement, benchmarking, and re-evaluation under various load conditions. The goal is to consistently operate within the defined budget, ensuring the system remains responsive even during peak market activity.
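
One way to make the allocation concrete is to hold the per-stage budgets as data and compare them against measured tail latencies. The sketch below assumes budgets and measurements are expressed in whole microseconds and that the measured figure per stage is a p99; `StageBudget`, `find_overruns`, and `headroom_us` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageBudget:
    name: str
    budget_us: int  # maximum acceptable latency for this stage


def find_overruns(budgets, measured_p99_us):
    """Return (stage, budget, measured, overrun) for each stage over budget.

    Results are sorted with the largest overrun first, so the worst
    offender is the first candidate for optimization.
    """
    overruns = []
    for b in budgets:
        measured = measured_p99_us[b.name]
        if measured > b.budget_us:
            overruns.append((b.name, b.budget_us, measured, measured - b.budget_us))
    return sorted(overruns, key=lambda row: row[3], reverse=True)


def headroom_us(budgets, end_to_end_target_us):
    """Slack left after allocation; negative means the budgets over-commit."""
    return end_to_end_target_us - sum(b.budget_us for b in budgets)
```

Per-stage percentiles do not add up to the end-to-end percentile, so the end-to-end target should still be checked against end-to-end measurements rather than inferred from this table alone.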

- Hardware Co-location: Placing servers physically close to exchange matching engines to minimize network propagation delays.

- Kernel Bypass Networking: Utilizing technologies like Solarflare’s Onload or Mellanox’s VMA to bypass the operating system kernel for network I/O, reducing packet processing latency.

- FPGA Acceleration: Offloading critical, latency-sensitive tasks (e.g., market data parsing, specific risk checks) to Field-Programmable Gate Arrays for hardware-level execution.

- Efficient Data Structures & Algorithms: Opting for lock-free data structures, pre-allocated memory pools, and highly optimized algorithms to reduce CPU cycles and memory contention.

- Thread Affinity & CPU Pinning: Explicitly assigning threads to specific CPU cores to minimize context switching and improve cache locality.

- Optimized Message Queues: Using low-latency inter-process communication (IPC) mechanisms, such as shared memory or purpose-built message queues, rather than general-purpose RPC.
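
Of the techniques above, CPU pinning is the easiest to show in a few lines. The sketch below uses `os.sched_setaffinity`, which Python exposes only on platforms that support it (Linux in particular); the helper name `pin_to_core` and its choice to return `False` instead of raising are assumptions for illustration.

```python
import os


def pin_to_core(core_id):
    """Try to restrict the caller to a single CPU core.

    Returns True if the affinity mask is now exactly {core_id}, and False
    if the platform lacks the call or the request is rejected.
    """
    if not hasattr(os, "sched_setaffinity"):
        return False  # not available on this platform (e.g. macOS, Windows)
    try:
        # pid 0 means the caller; on Linux the underlying syscall applies
        # to the calling thread.
        os.sched_setaffinity(0, {core_id})
    except (OSError, ValueError, OverflowError):
        return False  # core does not exist or is outside the allowed set
    return os.sched_getaffinity(0) == {core_id}
```

Pinning only helps if nothing else is scheduled on the chosen core, which is a matter of host configuration and is outside the scope of this snippet.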

Mitigating External Factors and Unforeseen Latency Spikes

Even with meticulous internal latency budgeting, external factors can introduce significant, unpredictable delays. Market data feed quality can fluctuate, causing spikes in data processing latency or even gaps that impair signal generation. Exchange API rate limits or unexpected throttles can add artificial delays to order submission. Network congestion, whether within the data center or across wide-area links, is another common culprit. Building resilience against these factors is crucial. This involves retry mechanisms with exponential back-off, dynamic routing logic to bypass congested paths, and intelligent queue management that can buffer or prioritize critical orders. Monitoring external system health matters as well: circuit breakers that temporarily halt or slow trading when external latencies exceed predefined thresholds are a pragmatic way to prevent adverse executions and unexpected slippage.
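
A latency-driven circuit breaker of the kind described can be sketched as follows. The design choices here are assumptions rather than a standard: the breaker opens when at least a given fraction of the most recent samples exceed the threshold, and it closes again after a fixed cooldown. Time is passed in explicitly so the behavior is deterministic and testable.

```python
from collections import deque


class LatencyCircuitBreaker:
    """Blocks order flow while external latency is persistently too high."""

    def __init__(self, threshold_us, window=20, max_breach_ratio=0.5, cooldown_s=5.0):
        self.threshold_us = threshold_us
        self.max_breach_ratio = max_breach_ratio
        self.cooldown_s = cooldown_s
        self._samples = deque(maxlen=window)  # rolling window of latencies
        self._opened_at = None  # None means the breaker is closed

    def record(self, latency_us, now_s):
        """Add a latency sample; open the breaker if the window is unhealthy."""
        self._samples.append(latency_us)
        window_full = len(self._samples) == self._samples.maxlen
        if self._opened_at is None and window_full:
            breaches = sum(1 for s in self._samples if s > self.threshold_us)
            if breaches / len(self._samples) >= self.max_breach_ratio:
                self._opened_at = now_s

    def allow_orders(self, now_s):
        """True if orders may be sent at time now_s."""
        if self._opened_at is None:
            return True
        if now_s - self._opened_at >= self.cooldown_s:
            # Cooldown elapsed: close the breaker and start a fresh window.
            self._opened_at = None
            self._samples.clear()
            return True
        return False
```

Requiring a full window before tripping avoids halting on a single outlier. Whether a fixed cooldown or an explicit health probe should close the breaker depends on the venue and the strategy.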

Integrating Latency Constraints into Algorithmic Risk Management

Latency budgeting isn’t solely about speed; it’s intrinsically linked to risk management. Exceeding a defined latency budget, especially for critical pre-trade risk checks or order cancellations, can have severe consequences. If an order takes too long to reach the exchange, the market price may have moved significantly, leading to higher slippage than anticipated. Stale orders also tend to be filled precisely when the market has moved against them, and this adverse selection can erode profitability or even cause losses. Consequently, our algorithmic risk management systems must be aware of latency performance. Mechanisms might include automatically pausing or disabling a strategy if its observed execution latency consistently breaches thresholds, or dynamically reducing order sizes to limit exposure when pipeline delays are detected. For instance, if an order cancellation request isn’t acknowledged within a defined window, the system should assume the order is still live and keep counting it toward exposure in its position tracking. Integrating latency metrics directly into the risk framework ensures that execution speed is treated not just as an optimization goal but as a critical component of overall system integrity and capital protection.
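
The two mechanisms mentioned above, pausing on repeated breaches and shrinking order size as latency rises, can be sketched together. The linear scaling between the budget and a hard limit, the consecutive-breach rule, and the name `LatencyRiskGuard` are all assumptions chosen for illustration; a real risk framework would set these policies itself.

```python
class LatencyRiskGuard:
    """Scales order size down with latency and pauses on repeated breaches."""

    def __init__(self, budget_us, hard_limit_us, max_consecutive_breaches=3):
        if not 0 < budget_us < hard_limit_us:
            raise ValueError("require 0 < budget_us < hard_limit_us")
        self.budget_us = budget_us
        self.hard_limit_us = hard_limit_us
        self.max_consecutive_breaches = max_consecutive_breaches
        self.consecutive_breaches = 0
        self.paused = False  # stays True until an operator resets it

    def observe(self, latency_us):
        """Record one observed execution latency."""
        if latency_us > self.budget_us:
            self.consecutive_breaches += 1
            if self.consecutive_breaches >= self.max_consecutive_breaches:
                self.paused = True
        else:
            self.consecutive_breaches = 0

    def allowed_quantity(self, base_qty, latency_us):
        """Quantity permitted given the current latency estimate."""
        if self.paused or latency_us >= self.hard_limit_us:
            return 0
        if latency_us <= self.budget_us:
            return base_qty
        # Linear reduction from full size at the budget to zero at the limit.
        span = self.hard_limit_us - self.budget_us
        fraction = (self.hard_limit_us - latency_us) / span
        return int(base_qty * fraction)
```

The guard deliberately does not unpause itself: once a strategy has been disabled for latency reasons, resuming it is treated here as a decision for a human or a separate recovery check.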