Developing and deploying profitable intraday trading strategies demands a rigorous backtesting methodology. Unlike swing or long-term strategies, intraday systems operate on granular data, making them acutely sensitive to microstructure effects, transaction costs, and data quality. A superficial backtest can easily lead to strategies that perform exceptionally well in simulation but fail spectacularly in live trading. This guide details a practical approach to building robust validation into your backtesting process, focusing on the specific challenges and considerations relevant to high-frequency intraday execution.

The Unique Challenges of Intraday Strategy Backtesting

Intraday strategies operate within an inherently noisy and volatile environment, demanding a backtesting methodology that accounts for phenomena largely ignored in longer-term analyses. The sheer volume of data, often tick-level, introduces significant storage and processing overhead, but it’s crucial for accurately simulating market interactions. Microstructure effects, such as bid-ask bounce, order book dynamics, and market maker behavior, play a dominant role in intraday profitability. Ignoring these can lead to unrealistic PnL projections. Furthermore, the speed and frequency of trades mean that even small amounts of slippage or commission can erode alpha entirely. This necessitates a forensic level of detail in data acquisition, trade simulation, and performance attribution, which often makes these systems more complex to validate than their lower-frequency counterparts.

Sourcing and Preparing High-Fidelity Intraday Data

The foundation of any robust backtest, especially for intraday strategies, is high-quality, granular data. This typically means tick-level data for order book events, or at least one-minute bars for simpler strategies, capturing bid, ask, last price, and volume. Data integrity is paramount; missing ticks, erroneous quotes, or incorrect timestamps can fatally compromise results. Practical implementation often involves subscribing to vendor or direct exchange feeds, storing data efficiently in time-series databases, and implementing robust cleaning procedures. Common issues include handling data gaps during market holidays or technical outages, reconstructing order books from raw tick streams, and applying corporate actions such as stock splits and dividend adjustments. The last of these is particularly tricky: back-adjusted historical prices embed information that wasn’t known at the time of the original trade, so applying them carelessly introduces look-ahead bias.

- Validate data timestamps and sequence to prevent look-ahead bias.

- Implement filters for outlier prices and volumes that might be data errors.

- Reconstruct bid/ask spreads accurately from tick data for realistic entry/exit points.

- Address survivorship bias by including delisted securities in the historical data set.
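The first three checks in this list can be sketched in a few lines. The example below is a minimal illustration, not a production cleaner: the `Tick` fields, the `clean_ticks` name, and the 5% deviation threshold are all assumptions chosen for the example. The outlier filter compares each quote with a trailing median of already-accepted quotes, so it never looks ahead in the stream.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class Tick:
    ts_ns: int   # exchange timestamp in nanoseconds (assumed field name)
    bid: float
    ask: float


def clean_ticks(ticks, max_dev=0.05, window=5):
    """Drop out-of-sequence, crossed, and outlier quotes.

    The outlier check compares each mid price with the median of the last
    `window` accepted mids, so it only uses information that was available
    at that point in the stream.
    """
    accepted = []
    for t in ticks:
        if accepted and t.ts_ns < accepted[-1].ts_ns:
            continue  # timestamp goes backwards: out of sequence
        if t.bid <= 0 or t.ask <= 0 or t.bid > t.ask:
            continue  # non-positive or crossed quote
        mid = (t.bid + t.ask) / 2
        recent = [(a.bid + a.ask) / 2 for a in accepted[-window:]]
        if recent:
            ref = median(recent)
            if abs(mid - ref) / ref > max_dev:
                continue  # too far from recent accepted quotes
        accepted.append(t)
    return accepted


raw = [
    Tick(1, 99.99, 100.01),
    Tick(2, 100.00, 100.02),
    Tick(1, 100.00, 100.02),   # out of sequence
    Tick(3, 100.05, 100.01),   # crossed quote
    Tick(4, 150.00, 150.02),   # likely a bad print
    Tick(5, 100.01, 100.03),
]
clean = clean_ticks(raw)
spreads = [t.ask - t.bid for t in clean]  # per-tick spread for entry/exit modeling
```

One limitation worth knowing: a genuine price move larger than `max_dev` would cause every later quote to be rejected, so a real pipeline would need a reset rule or a volatility-scaled threshold.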

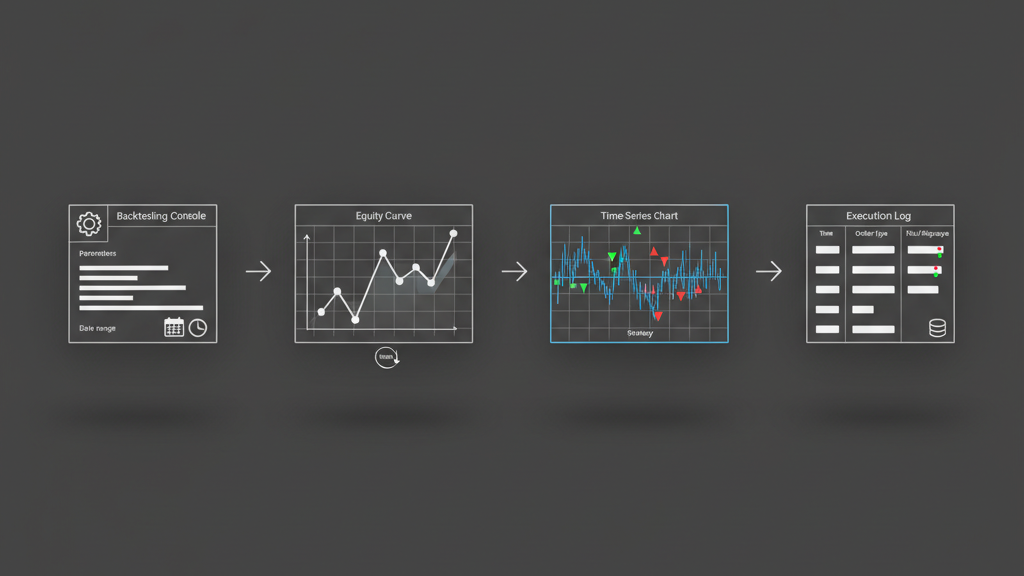

Realistic Execution Simulation and Transaction Cost Modeling

Simulating trade execution realistically is where many backtests fall short, turning paper profits into live losses. For intraday strategies, even a fraction of a cent of slippage per share can be devastating. A robust backtesting engine must incorporate slippage models that go beyond fixed percentages, for example by considering historical order book depth, volatility, and typical market impact for a given order size. Brokerage commissions, exchange fees, and regulatory fees must be factored into every single trade; ignoring them can inflate perceived profitability significantly. We often develop custom execution modules that mimic actual API behavior, including partial fills, rejections, and delays in order placement. This keeps the backtest aligned with real-world trading constraints, including the API failures and rate limits that tend to appear during periods of high market activity.
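As a rough illustration of a slippage model that depends on order size rather than a fixed percentage, the sketch below fills a marketable order at the touch and then moves the price against the trader in proportion to the order's share of displayed size. Everything here is an assumption made for the example: the `CostModel` fields, the linear impact rule, and the default rates are placeholders, not real broker or exchange figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostModel:
    commission_per_share: float = 0.005  # placeholder broker rate
    fee_bps: float = 0.3                 # placeholder exchange + regulatory fees, bps of notional
    impact_coeff: float = 0.1            # placeholder: fraction of spread per unit of participation


def simulate_market_order(side, qty, bid, ask, displayed_size, costs):
    """Return (fill_price, fees) for a marketable order.

    side is +1 for a buy and -1 for a sell. The order crosses the spread,
    then slips further in proportion to qty / displayed_size.
    """
    if side not in (1, -1) or qty <= 0 or displayed_size <= 0:
        raise ValueError("invalid order or book state")
    touch = ask if side == 1 else bid
    participation = qty / displayed_size
    slippage = costs.impact_coeff * (ask - bid) * participation
    fill_price = touch + side * slippage  # buys pay more, sells receive less
    fees = costs.commission_per_share * qty + fill_price * qty * costs.fee_bps / 10_000
    return fill_price, fees


costs = CostModel()
buy_px, buy_fees = simulate_market_order(+1, 100, 10.00, 10.02, 200, costs)
sell_px, sell_fees = simulate_market_order(-1, 100, 10.00, 10.02, 200, costs)
round_trip_cost = (buy_px - sell_px) * 100 + buy_fees + sell_fees
```

Even in this toy setup the round trip costs more than the quoted spread alone would suggest, which is the effect a fixed-percentage model tends to hide. Partial fills, rejections, and latency are not modeled here and would need their own logic.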

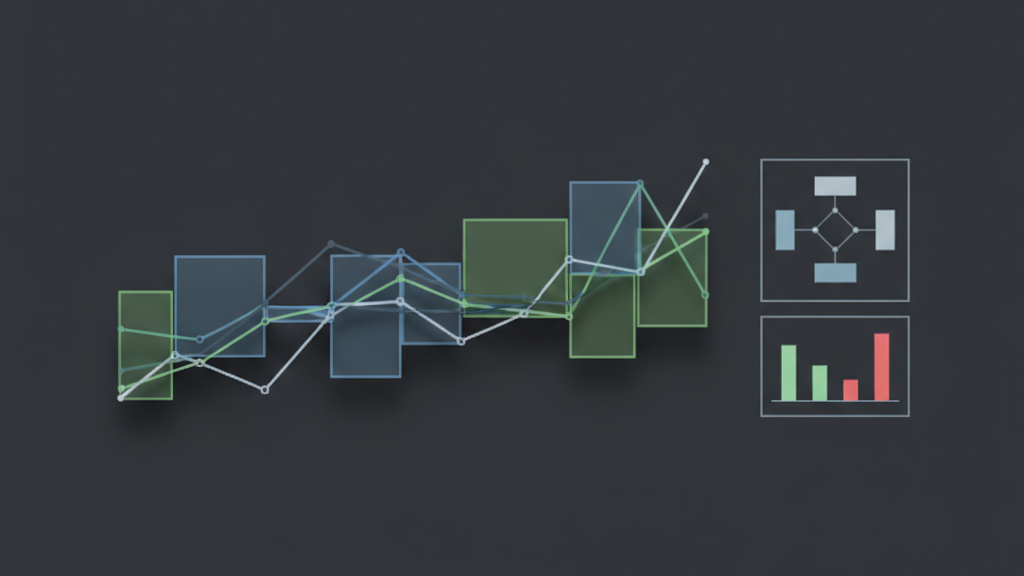

Advanced Validation Techniques for Robustness

Beyond a single, in-sample backtest, robust validation requires a multi-faceted approach to prove a strategy’s edge and resilience. Walk-forward optimization is critical, where the strategy is optimized on a rolling ‘in-sample’ window and then tested on an immediately subsequent ‘out-of-sample’ window. This process is repeated across the entire dataset, providing a more realistic gauge of how the strategy adapts to evolving market conditions. Monte Carlo simulations are invaluable for assessing parameter sensitivity and the distribution of potential outcomes, rather than relying on a single deterministic equity curve. Stress testing against historical crisis periods or artificially injected volatility helps identify breakpoints. Furthermore, testing the strategy across different asset classes or timeframes, if applicable, can highlight its generalizability or specificity to certain market structures.
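The rolling in-sample / out-of-sample scheme described above can be sketched as a window generator plus a small driver. This is a minimal sketch under stated assumptions: `walk_forward_windows` and `walk_forward` are names invented for the example, and `fit` and `score` are hypothetical callables standing in for your own optimizer and evaluation routine. Windows roll forward by one test period, so the out-of-sample segments never overlap.

```python
def walk_forward_windows(n_bars, train_size, test_size):
    """Yield (train, test) index ranges; each test range directly follows its train range."""
    if min(n_bars, train_size, test_size) <= 0:
        raise ValueError("sizes must be positive")
    start = 0
    while start + train_size + test_size <= n_bars:
        split = start + train_size
        yield range(start, split), range(split, split + test_size)
        start += test_size  # roll forward by one out-of-sample period


def walk_forward(data, train_size, test_size, fit, score):
    """Optimize on each in-sample window, then evaluate on the next unseen window."""
    results = []
    for train, test in walk_forward_windows(len(data), train_size, test_size):
        params = fit(data[train.start:train.stop])               # sees in-sample data only
        results.append(score(data[test.start:test.stop], params))  # out-of-sample evaluation
    return results


windows = list(walk_forward_windows(n_bars=10, train_size=4, test_size=2))
```

Stitching the out-of-sample results together gives a single equity curve in which every trade was produced by parameters chosen before that trade's data was seen.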

- Implement walk-forward analysis with clearly defined optimization and testing periods.

- Conduct Monte Carlo analysis on key strategy parameters to understand performance sensitivity.

- Perform robustness checks by varying transaction costs, latency, or data quality in simulations.

- Evaluate performance across multiple market regimes (bull, bear, volatile, calm) and economic cycles.
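To look at a distribution of outcomes rather than a single equity curve, one simple option is to resample the backtest's per-trade PnLs with replacement and recompute maximum drawdown on each resampled path. The sketch below does that with the standard library. It is a bootstrap, which is only one flavor of Monte Carlo analysis, and it assumes trades are independent, so any serial correlation in the original sequence is lost. The function names and the sample PnLs are illustrative.

```python
import random


def max_drawdown(pnls):
    """Largest peak-to-trough decline of the cumulative PnL curve."""
    equity = peak = worst = 0.0
    for p in pnls:
        equity += p
        peak = max(peak, equity)
        worst = max(worst, peak - equity)
    return worst


def bootstrap_drawdowns(trade_pnls, n_paths=1000, seed=0):
    """Resample trades with replacement; return sorted max drawdowns, one per path."""
    rng = random.Random(seed)  # fixed seed keeps the run reproducible
    n = len(trade_pnls)
    return sorted(max_drawdown(rng.choices(trade_pnls, k=n)) for _ in range(n_paths))


trades = [120.0, -80.0, 45.0, -60.0, 200.0, -150.0, 30.0, 75.0, -40.0, 90.0]
dds = bootstrap_drawdowns(trades, n_paths=500)
dd_95 = dds[int(0.95 * len(dds))]  # drawdown exceeded on roughly 5% of resampled paths
```

Comparing `dd_95` with the drawdown of the original sequence gives a feel for how much of the backtest's smoothness came from the particular order in which trades happened to occur.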

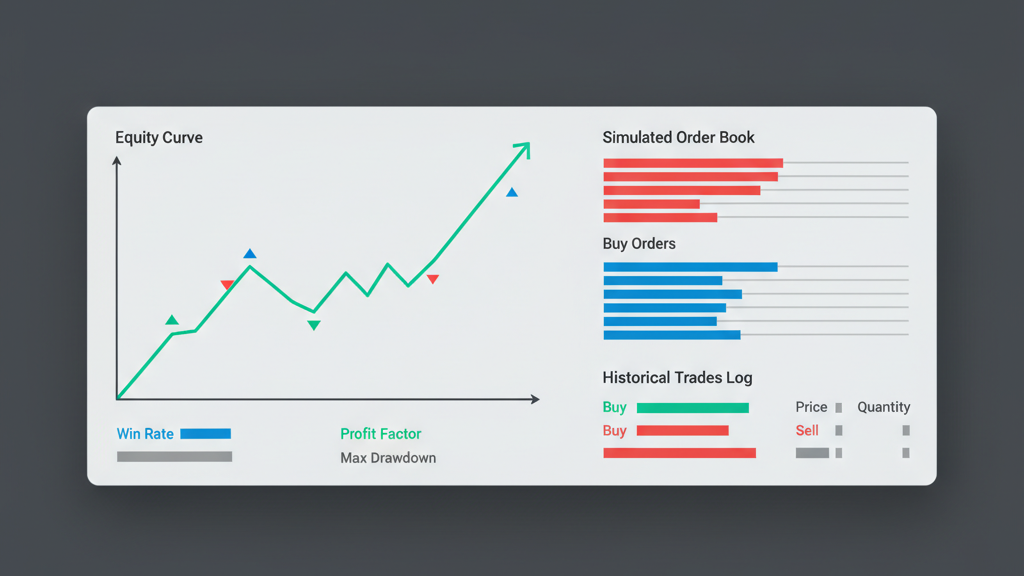

Integrating Performance Metrics and Risk Management Logic

A comprehensive backtest doesn’t just return PnL; it provides a detailed breakdown of performance and risk. Key metrics for intraday strategies include gross profit, net profit (after all costs), maximum drawdown, Sharpe ratio, Sortino ratio, win rate, average profit/loss per trade, and profit factor. It’s crucial to analyze these metrics not just in aggregate, but also trade by trade and across different market conditions. Robust backtesting also needs to accurately simulate internal risk management logic, such as trailing stops, time-based exits, maximum daily loss limits, or position sizing adjustments based on volatility. The backtester should trigger and adhere to these rules precisely as the live execution system would, exposing any flaws in the risk control mechanisms before capital is at stake. Risk parameters are tunable like any others, so fitting them too closely to history is itself a source of overfitting; sensitivity analysis on risk limits is also recommended.
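For reference, most of the metrics listed above reduce to a few lines of arithmetic. The sketch below uses common textbook definitions, with assumptions stated in the comments: PnLs are net of costs, Sharpe and Sortino are computed from daily returns with a zero risk-free rate and target, and annualization uses 252 trading days. The function names are illustrative.

```python
import math
from statistics import mean, stdev


def trade_metrics(net_pnls):
    """Summary statistics from per-trade PnL, net of all costs."""
    if not net_pnls:
        raise ValueError("no trades")
    gross_win = sum(p for p in net_pnls if p > 0)
    gross_loss = -sum(p for p in net_pnls if p < 0)
    equity = peak = max_dd = 0.0
    for p in net_pnls:
        equity += p
        peak = max(peak, equity)
        max_dd = max(max_dd, peak - equity)
    return {
        "net_profit": sum(net_pnls),
        "win_rate": sum(1 for p in net_pnls if p > 0) / len(net_pnls),
        "avg_trade": sum(net_pnls) / len(net_pnls),
        "profit_factor": gross_win / gross_loss if gross_loss else math.inf,
        "max_drawdown": max_dd,
    }


def sharpe(daily_returns, periods_per_year=252):
    """Annualized Sharpe ratio, assuming a zero risk-free rate."""
    return mean(daily_returns) / stdev(daily_returns) * math.sqrt(periods_per_year)


def sortino(daily_returns, periods_per_year=252):
    """Annualized Sortino ratio: like Sharpe, but penalizes only returns below zero."""
    downside = math.sqrt(mean(min(r, 0.0) ** 2 for r in daily_returns))
    if downside == 0:
        return math.inf
    return mean(daily_returns) / downside * math.sqrt(periods_per_year)


summary = trade_metrics([100.0, -50.0, 200.0, -50.0])
```

Running the same functions on subsets of trades (for example, grouped by volatility regime) is a straightforward way to get the per-condition breakdown.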

Mitigating Overfitting and Data Snooping Bias

Overfitting is the Achilles’ heel of quantitative trading, especially for intraday strategies where vast amounts of data and numerous parameters can lead to models that perfectly fit historical noise rather than underlying market dynamics. The more parameters you optimize or the more times you test variations of a strategy on the same historical data, the higher the risk of data snooping bias. To combat this, adhere to a strict research workflow: define hypotheses, test on distinct datasets, and avoid ‘cherry-picking’ the best-performing backtest out of hundreds of trials. Techniques like out-of-sample testing on completely unseen data are non-negotiable. If a strategy’s performance degrades significantly on out-of-sample data, it’s a strong indicator of overfitting. We often segment our data into distinct training, validation, and untouched test sets, ensuring the final performance evaluation is conducted on data the strategy has never ‘seen’ during its development or tuning phases.

- Strictly separate in-sample (training/validation) and out-of-sample (testing) datasets.

- Limit the number of parameters and parameter permutations during optimization.

- Utilize statistical significance tests to confirm that observed edge is not due to chance.

- Document every strategy iteration, including ‘failed’ tests. The number of variants tried determines how much data snooping bias to expect, and discarding the failures hides it.
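One simple way to check whether an observed edge could plausibly be chance is a sign-flip permutation test on per-trade PnL. The sketch below is one option among several, not a prescribed method. It assumes that under the null hypothesis each trade's PnL is equally likely to be positive or negative with the same magnitude, and the function name and parameters are illustrative.

```python
import random


def sign_flip_p_value(pnls, n_perm=2000, seed=0):
    """One-sided p-value for mean PnL > 0 under a symmetric-around-zero null.

    Each permutation randomly flips the sign of every trade; the p-value is
    the share of permutations whose mean is at least as large as the observed one.
    """
    if not pnls:
        raise ValueError("no trades")
    rng = random.Random(seed)
    observed = sum(pnls) / len(pnls)
    hits = 0
    for _ in range(n_perm):
        flipped = [p if rng.random() < 0.5 else -p for p in pnls]
        if sum(flipped) / len(flipped) >= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)  # +1 keeps the estimate strictly above zero


p_edge = sign_flip_p_value([1.0] * 20)        # every trade wins
p_noise = sign_flip_p_value([1.0, -1.0] * 10)  # wins and losses cancel out
```

If k strategy variants were tried on the same data, a conservative adjustment is to compare each p-value against alpha / k (the Bonferroni correction) rather than alpha, which is one reason to keep a record of every trial.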