The modern financial landscape is characterized by market fragmentation, a reality where liquidity for a single security is dispersed across numerous exchanges, dark pools, and alternative trading systems. For quantitative trading firms and high-frequency traders, this environment presents both immense opportunities and significant challenges. An off-the-shelf brokerage platform often lacks the sophistication required to effectively navigate these diverse venues. Building or tailoring an advanced order management system (OMS) becomes a critical undertaking, directly impacting execution quality, latency, and ultimately, profitability. This deep dive focuses on the practical considerations and architectural choices involved in designing an order management system specifically engineered for fragmented market execution, ensuring traders can achieve optimal fills and manage risk effectively across disparate venues.

The Inevitable Challenge of Market Fragmentation

Market fragmentation is no longer a niche concern but a foundational aspect of equity, FX, and futures trading. Liquidity is spread across primary exchanges, multilateral trading facilities (MTFs), and numerous dark pools, each with its own rules, fees, and latency profiles. For an algorithmic strategy, this dispersion creates a complex optimization problem: how to source the best price and quantity without creating adverse market impact or missing available liquidity. A generic OMS, typically designed for simpler single-venue routing, often falls short. It may lack the intelligence to dynamically switch venues, aggregate quotes from disparate sources, or participate effectively in non-displayed liquidity pools. Developing a custom or highly specialized order management system for fragmented market execution is thus essential for strategies aiming to gain an edge, because it gives precise control over where, when, and how orders interact with the market.

Core Architectural Pillars of a Fragmented Market OMS

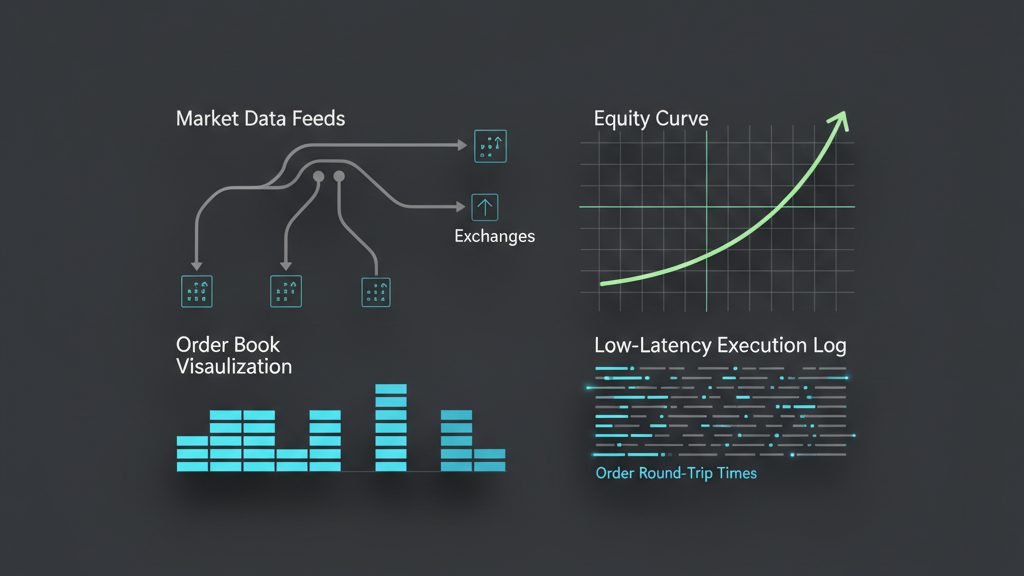

Designing an order management system for fragmented markets begins with a robust, modular architecture. At its heart, the system must act as a state machine, tracking every order’s lifecycle from initial intent to final fill across potentially multiple venues. This requires more than just submitting an order; it demands continuous monitoring, intelligent update processing, and precise reconciliation. Key components include a unified market data aggregator capable of normalizing and presenting real-time Level 2 data from all connected venues, a set of flexible connectivity adapters to handle diverse API protocols (FIX, proprietary binary, REST), and a comprehensive order state engine. This engine manages parent-child order relationships and handles partial fills, cancellations, and modifications, so that the system always holds an accurate internal representation of all open orders and resulting positions. Furthermore, an integrated pre-trade risk gateway is non-negotiable: it performs compliance, capital, and exposure checks before any order reaches an external venue, protecting against costly errors.

- Unified Market Data Aggregator for real-time venue information and normalization

- Configurable Connectivity Adapters for diverse exchange APIs (FIX, proprietary, REST)

- Order State Engine for comprehensive lifecycle tracking (parent/child orders, fills, pending actions)

- Pre-Trade Risk Gateway for instant compliance, capital, and exposure checks
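To make the parent/child bookkeeping concrete, here is a minimal sketch of an order state engine. All names (`OrderState`, `ChildOrder`, `ParentOrder`, `committed`) are hypothetical and chosen for illustration; a production engine would also need pending-cancel and pending-replace states, rejects, and persistence, none of which are modeled here.

```python
from dataclasses import dataclass, field
from enum import Enum


class OrderState(Enum):
    NEW = "new"
    PARTIALLY_FILLED = "partially_filled"
    FILLED = "filled"
    CANCELED = "canceled"


@dataclass
class ChildOrder:
    """One slice of a parent order working at a single venue."""
    child_id: str
    venue: str
    quantity: int
    filled: int = 0
    state: OrderState = OrderState.NEW

    def apply_fill(self, qty: int) -> None:
        # Terminal orders must never accept further fills.
        if self.state in (OrderState.FILLED, OrderState.CANCELED):
            raise ValueError(f"{self.child_id} is terminal ({self.state.value})")
        if qty <= 0 or self.filled + qty > self.quantity:
            raise ValueError(f"invalid fill of {qty} on {self.child_id}")
        self.filled += qty
        self.state = (OrderState.FILLED if self.filled == self.quantity
                      else OrderState.PARTIALLY_FILLED)

    def cancel(self) -> None:
        # A fully filled order stays FILLED; anything else becomes CANCELED.
        if self.state is not OrderState.FILLED:
            self.state = OrderState.CANCELED


@dataclass
class ParentOrder:
    """The trader's original intent, split into venue-level children."""
    parent_id: str
    quantity: int
    children: dict = field(default_factory=dict)

    def committed(self) -> int:
        # Canceled children only count for what they actually filled;
        # live children count for their full quantity.
        return sum(c.filled if c.state is OrderState.CANCELED else c.quantity
                   for c in self.children.values())

    def add_child(self, child: ChildOrder) -> None:
        if child.child_id in self.children:
            raise ValueError(f"duplicate child id {child.child_id}")
        if self.committed() + child.quantity > self.quantity:
            raise ValueError("child would over-commit the parent order")
        self.children[child.child_id] = child

    @property
    def filled(self) -> int:
        return sum(c.filled for c in self.children.values())

    @property
    def state(self) -> OrderState:
        if self.filled == self.quantity:
            return OrderState.FILLED
        return OrderState.PARTIALLY_FILLED if self.filled else OrderState.NEW
```

The over-commit check in `add_child` is the key invariant: quantity released by a canceled child can be re-routed to another venue, but the sum of live and filled quantity can never exceed the parent.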

Engineering Smart Order Routing (SOR) Logic in Practice

The Smart Order Router (SOR) is the brain of an OMS tailored for fragmented markets. Its primary function is to achieve best execution by dynamically choosing the optimal venue(s) for an order. This isn’t a static decision; it involves sophisticated algorithms that consider current market conditions across all venues, including available liquidity, price levels, venue fees and rebates, and estimated execution latency. For instance, an SOR might prioritize routing to a dark pool first to capture non-displayed liquidity without revealing intent, then sweep across lit markets, or slice an order into smaller components to minimize market impact across multiple venues simultaneously. Developing this logic demands continuous access to aggregated, low-latency market data and the ability to rapidly adapt routing decisions as liquidity shifts or new best bids/offers emerge. The core challenge is balancing speed, price, and impact in real-time, making the SOR a complex, highly specialized module within the order management system.
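As a rough illustration of fee-aware routing, the sketch below ranks venues by all-in cost (quoted ask plus per-share taker fee) and greedily sweeps them for a buy order, respecting a limit price. The `Quote` type, the `route_buy` function, and the venue names are assumptions made for this example. It covers only the lit-market sweep; dark pool probing, latency estimates, and market impact models are deliberately left out, and prices are plain floats for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Quote:
    """Top-of-book ask at one venue (hypothetical normalized format)."""
    venue: str
    ask_price: float
    ask_size: int
    taker_fee: float  # per share; a negative value would represent a rebate


def route_buy(quotes, quantity, limit_price):
    """Greedy sweep: take the cheapest all-in liquidity first.

    Returns (slices, unfilled) where slices is a list of
    (venue, quantity, price) child orders to send.
    """
    # Rank by price plus fee; the venue name is only a deterministic tie-break.
    ranked = sorted(quotes, key=lambda q: (q.ask_price + q.taker_fee, q.venue))
    remaining = quantity
    slices = []
    for q in ranked:
        if remaining == 0:
            break
        # Never pay through the limit, and skip empty quotes.
        if q.ask_price > limit_price or q.ask_size <= 0:
            continue
        take = min(remaining, q.ask_size)
        slices.append((q.venue, take, q.ask_price))
        remaining -= take
    return slices, remaining


quotes = [
    Quote("VENUE_A", 100.02, 300, 0.0030),
    Quote("VENUE_B", 100.01, 200, 0.0030),
    Quote("VENUE_C", 100.02, 500, 0.0005),
    Quote("VENUE_D", 100.05, 1000, 0.0010),
]
slices, unfilled = route_buy(quotes, 800, limit_price=100.03)
```

Note how VENUE_C is swept before VENUE_A even though both quote the same price: the lower fee gives it a better all-in cost. A real router would re-run this decision whenever the aggregated book changes.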

Navigating Latency and Throughput in Execution

Even the most intelligent SOR is ineffective if the underlying system can’t act with extreme speed. Low latency and high throughput are paramount for an OMS operating in fragmented markets. This requires meticulous attention to every layer, from network topology to application code. Co-location with exchange matching engines is often a prerequisite for competitive strategies, minimizing network hop delays. On the software side, techniques such as lock-free data structures, efficient inter-process communication (IPC) frameworks (e.g., Aeron, ZeroMQ), and careful thread affinity management are vital to reduce contention and ensure predictable performance. Operating system-level tuning, including kernel bypass mechanisms for network I/O, can shave off critical microseconds. The goal is to process incoming market data, make routing decisions, and submit orders with minimal internal processing overhead, ensuring the OMS can react to fleeting opportunities and manage high volumes of order submissions and acknowledgments across multiple exchange APIs concurrently.

- Strategic co-location with exchange infrastructure for minimized network latency

- Optimized inter-process communication (IPC) for internal message passing efficiency

- Leveraging kernel bypass techniques (e.g., Solarflare OpenOnload) for low-latency network I/O

- Careful thread affinity and CPU pinning for predictable and isolated processing
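CPU pinning is the easiest of these techniques to show in a few lines. The sketch below uses Python's `os.sched_setaffinity`, which is only available on some Unix platforms (notably Linux), so the hypothetical helper `pin_to_cpu` returns `None` elsewhere. Latency-critical components are usually written in a systems language, so treat this purely as an illustration of the idea.

```python
import os


def pin_to_cpu(cpu=None):
    """Restrict the caller to a single CPU core, where the OS supports it.

    Returns the resulting affinity set, or None if affinity control
    is unavailable on this platform.
    """
    if not hasattr(os, "sched_setaffinity"):
        return None
    allowed = os.sched_getaffinity(0)  # 0 means "the caller"
    # Default to the lowest-numbered core we are currently allowed to use.
    target = min(allowed) if cpu is None else cpu
    if target not in allowed:
        raise ValueError(f"CPU {target} is not in the allowed set {sorted(allowed)}")
    os.sched_setaffinity(0, {target})
    return os.sched_getaffinity(0)
```

Pinning only helps if the chosen core is also shielded from other work, which is typically done with operating system configuration outside the application.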

Building a Resilient and Fault-Tolerant OMS

In the unpredictable world of electronic trading, system failures, API disconnects, and venue outages are a question not of ‘if’ but of ‘when’. A robust OMS designed for fragmented market execution must embed resilience at its core. This includes implementing comprehensive error handling for all external API interactions, with clear retry logic, timeouts, and circuit breakers to prevent cascading failures. Idempotent order submission logic is crucial, ensuring that re-transmitting an order request after a communication failure doesn’t result in duplicate orders. Furthermore, maintaining a persistent, transactional store of order state, often backed by a high-performance database or journaled message queue, allows for rapid system recovery without losing track of outstanding orders. Automated reconciliation procedures with brokers and venues are essential to verify positions and order states post-recovery. Ultimately, the system must be designed to either continue operating in a degraded mode or fail safely, with clearly defined operational fallbacks, including potential manual intervention, to mitigate financial risk from unexpected events.
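One common way to get idempotent submission is to assign each order a stable client order ID (in FIX, `ClOrdID`, tag 11), journal the intent before sending, and always retry with the same ID. The sketch below assumes a hypothetical `send(cl_ord_id, order)` callable and assumes the venue or broker deduplicates on that ID, which must be confirmed per venue. The `IdempotentGateway` class and the JSON-lines journal format are illustrative only.

```python
import json
import os


class IdempotentGateway:
    """Journals order intent and acks so retries never create new orders."""

    def __init__(self, send, journal_path):
        self._send = send            # hypothetical venue/broker callable
        self._journal_path = journal_path
        self._records = {}           # cl_ord_id -> latest journal record
        self._replay()

    def _replay(self):
        # Rebuild in-memory state from the journal after a restart.
        try:
            with open(self._journal_path, encoding="utf-8") as f:
                for line in f:
                    rec = json.loads(line)
                    self._records[rec["cl_ord_id"]] = rec
        except FileNotFoundError:
            pass

    def _append(self, rec):
        with open(self._journal_path, "a", encoding="utf-8") as f:
            f.write(json.dumps(rec) + "\n")
            f.flush()
            os.fsync(f.fileno())     # make the record durable before moving on
        self._records[rec["cl_ord_id"]] = rec

    def pending(self):
        """IDs sent (or about to be sent) without a recorded ack."""
        return [k for k, r in self._records.items() if r["status"] == "pending"]

    def submit(self, cl_ord_id, order):
        rec = self._records.get(cl_ord_id)
        if rec and rec["status"] == "acked":
            return rec["ack"]        # already confirmed: do not resend
        if rec is None:
            # Record intent first so a crash mid-send is visible on recovery.
            self._append({"cl_ord_id": cl_ord_id, "status": "pending",
                          "order": order, "ack": None})
        # Retries reuse the same ID; the venue is assumed to dedupe on it.
        ack = self._send(cl_ord_id, order)
        self._append({"cl_ord_id": cl_ord_id, "status": "acked",
                      "order": order, "ack": ack})
        return ack
```

After a restart, anything returned by `pending()` is in an unknown state and is a natural input to the reconciliation step: query the venue for that ID before deciding whether to resend.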

Backtesting and Validating Fragmented Execution Logic

Backtesting an OMS for fragmented market execution poses unique and significant challenges that extend beyond typical strategy backtesting. Realistic simulation demands access to high-fidelity historical market data from all relevant venues, encompassing full order book depth (Level 2 data) and timestamped events from each source, accurately synchronized. It’s not sufficient to simply use consolidated tick data; the backtesting engine must accurately model queue positions, internal system latency, and the impact of the OMS’s own orders on market dynamics across multiple, interacting order books. This includes simulating venue-specific fees, rebates, and the success rates of various routing strategies (e.g., dark pool fills). The process requires a sophisticated simulation environment that can precisely replay market conditions and evaluate the OMS’s execution quality metrics, such as implementation shortfall, VWAP deviation, and fill rates per venue, providing empirical evidence of the system’s effectiveness before live deployment.

- Aggregating and synchronizing high-fidelity Level 2 data from all target venues

- Accurately simulating latency, queue dynamics, and market impact for each venue

- Modeling venue-specific fees, rebates, and order types within the simulation

- Evaluating execution quality metrics: Implementation Shortfall, VWAP deviation, Fill Rate by venue
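The execution quality metrics listed above are simple to compute once simulated fills are available. The sketch below is one possible formulation, with the function name `execution_report` and the input shapes chosen for illustration. Shortfall here covers only the executed quantity, measured against the arrival price; a full implementation shortfall calculation would also charge opportunity cost for any unfilled remainder.

```python
from collections import defaultdict


def execution_report(fills, routed, arrival_price, market_vwap, side):
    """Summarize simulated execution quality.

    fills:  list of (venue, quantity, price) tuples
    routed: dict of venue -> total quantity sent to that venue
    side:   "buy" or "sell"
    """
    if side not in ("buy", "sell"):
        raise ValueError("side must be 'buy' or 'sell'")
    sign = 1 if side == "buy" else -1  # paying more is a cost for buys only

    filled_qty = sum(qty for _, qty, _ in fills)
    if filled_qty == 0:
        raise ValueError("no fills to evaluate")
    avg_price = sum(qty * px for _, qty, px in fills) / filled_qty

    filled_by_venue = defaultdict(int)
    for venue, qty, _ in fills:
        filled_by_venue[venue] += qty

    return {
        "avg_price": avg_price,
        # Positive values mean the execution was worse than the benchmark.
        "shortfall_bps": sign * (avg_price - arrival_price) / arrival_price * 1e4,
        "vwap_deviation_bps": sign * (avg_price - market_vwap) / market_vwap * 1e4,
        "fill_rate": {venue: filled_by_venue[venue] / sent
                      for venue, sent in routed.items() if sent > 0},
    }


report = execution_report(
    fills=[("LIT_A", 600, 100.10), ("DARK_B", 400, 100.00)],
    routed={"LIT_A": 600, "DARK_B": 1000},
    arrival_price=100.00,
    market_vwap=100.05,
    side="buy",
)
```

In this example the average fill price is 100.06, so the buy order shows roughly 6 bps of shortfall against arrival, while the dark venue filled only 40% of what was routed to it. Comparing such reports across routing strategies is what turns a backtest into evidence.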