Reliable live execution is critical to sustained profitability in automated trading. A robust algorithmic trading system takes more than strategy logic; it demands close attention to the operational machinery behind real-time order placement and management. This article covers the key considerations and practical steps for building and maintaining a trading infrastructure that executes your algorithms dependably, with minimal downtime.

Robust Infrastructure and Connectivity

The foundation of reliable live execution is a resilient infrastructure: high-performance servers, stable network connectivity, and redundant systems that remove single points of failure. Dedicated hardware, rather than shared resources, generally gives more predictable performance, which matters for latency-sensitive strategies. Colocation, meaning hosting your servers in or near the exchange's data center, can significantly reduce network latency. Uninterruptible power supplies and backup internet connections guard against operational disruptions. Careful planning and investment in this core infrastructure let your trading system process data and execute orders without unexpected interruptions.

- Utilize dedicated servers for consistent performance.

- Implement network redundancy with multiple ISPs or routes.

- Consider colocation services for ultra-low-latency access.

- Deploy uninterruptible power supplies for hardware stability.

- Ensure secure and stable direct market access pathways.
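One way to picture the network redundancy point is a connection routine that walks a priority list of gateways and uses the first one that answers. The following is a minimal sketch, not a production connector: the endpoint names in the usage note are hypothetical, and the `connect` parameter is an assumption added here so the routine can be exercised without a real network.

```python
import socket
from typing import Callable, Optional, Sequence

Endpoint = tuple[str, int]


def connect_first_available(
    endpoints: Sequence[Endpoint],
    timeout_s: float = 1.0,
    connect: Callable[..., socket.socket] = socket.create_connection,
) -> tuple[Endpoint, socket.socket]:
    """Try endpoints in priority order; return the first that connects."""
    last_error: Optional[OSError] = None
    for endpoint in endpoints:
        try:
            return endpoint, connect(endpoint, timeout=timeout_s)
        except OSError as exc:
            # Remember why this route failed, then fall through to the next one.
            last_error = exc
    raise ConnectionError(f"all {len(endpoints)} endpoints failed") from last_error
```

A caller might pass something like `[("gw-primary.example", 9000), ("gw-backup.example", 9000)]`, both hypothetical hosts. A real deployment would likely also need session re-establishment and order-state reconciliation after a failover, which this sketch leaves out.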

Data Quality and Feed Management

Accurate and timely market data is non-negotiable for effective algorithmic trading. Poor data quality, including stale prices, missing ticks, or incorrect historical records, can lead to flawed strategy signals and costly trade decisions. Robust validation checks at the point of ingress are vital for filtering out erroneous information. Aggregating feeds from multiple reliable sources and cross-referencing data points can further improve integrity. Precise timestamp synchronization across all data sources and system components is also crucial, so that events are ordered correctly and backtest results line up with live behavior. Effective data feed management keeps your algorithms operating on the most accurate and current market information available.

- Implement real-time data validation for accuracy.

- Utilize multiple data feeds to ensure redundancy and cross-verification.

- Synchronize timestamps across all data sources.

- Handle missing or corrupted data gracefully to prevent system halts.

- Monitor data feed latency and throughput continuously.
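The validation ideas above can be sketched as a single check applied to every incoming quote. This is an illustrative example under stated assumptions: the `Tick` fields, the one-second staleness limit, and the 10% jump threshold are all hypothetical choices, and sensible values depend on the instrument and venue.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tick:
    symbol: str
    bid: float
    ask: float
    exchange_ts: float  # seconds since epoch, stamped by the venue
    recv_ts: float      # seconds since epoch, stamped locally on receipt


def validate_tick(tick: Tick, last_good: Optional[Tick],
                  max_age_s: float = 1.0, max_jump: float = 0.10) -> list[str]:
    """Return the reasons a tick is suspect; an empty list means it passed."""
    problems = []
    if tick.bid <= 0 or tick.ask <= 0:
        problems.append("non-positive price")
    elif tick.bid > tick.ask:
        problems.append("crossed quote")
    if tick.recv_ts - tick.exchange_ts > max_age_s:
        problems.append("stale")
    if last_good is not None:
        if tick.exchange_ts < last_good.exchange_ts:
            problems.append("out of order")
        # Compare mid prices against the last tick that passed validation.
        prev_mid = (last_good.bid + last_good.ask) / 2
        mid = (tick.bid + tick.ask) / 2
        if abs(mid - prev_mid) / prev_mid > max_jump:
            problems.append("price jump")
    return problems
```

Returning reasons rather than raising lets the caller decide how to degrade: drop the tick, fall back to a secondary feed, or pause the strategy, instead of halting the whole system on one bad message.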

Low Latency Execution Pathways

Minimizing the time between a strategy generating a signal and the order reaching the exchange is paramount, especially for high-frequency strategies. Achieving low latency means optimizing every component in the trade lifecycle: efficient code, direct market access (DMA) that removes intermediate routing hops where possible, and colocation. Network topology optimization, specialized order-routing hardware, and fast messaging protocols also help cut delay, measured in milliseconds or, for the fastest strategies, microseconds. Lower latency can translate into better fill prices and less slippage, and consistent latency makes execution more predictable.

- Optimize strategy code for maximum execution speed.

- Utilize direct market access for minimal routing delays.

- Co-locate servers near exchange matching engines.

- Employ high-speed network components and protocols.

- Streamline order management system interactions.
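Optimization is hard to steer without measurement, so a reasonable first step is to record signal-to-send latency and look at the tail, not just the average. The sketch below is one way to do that with the standard library; `send_order_stub` is a hypothetical stand-in for a real gateway call, and the timings only cover the local process, not the network path to the exchange.

```python
import time
from statistics import quantiles


class LatencyRecorder:
    """Collects signal-to-send latencies in nanoseconds."""

    def __init__(self) -> None:
        self.samples_ns: list[int] = []

    def record(self, start_ns: int, end_ns: int) -> None:
        self.samples_ns.append(end_ns - start_ns)

    def summary_us(self) -> dict[str, float]:
        """Median, 99th percentile, and worst case, in microseconds."""
        cuts = quantiles(self.samples_ns, n=100, method="inclusive")
        return {
            "p50": cuts[49] / 1_000,
            "p99": cuts[98] / 1_000,
            "max": max(self.samples_ns) / 1_000,
        }


def send_order_stub(order: dict) -> None:
    """Hypothetical stand-in for the real gateway call."""
    pass


recorder = LatencyRecorder()
for i in range(1_000):
    t0 = time.perf_counter_ns()        # signal generated
    send_order_stub({"id": i})         # order handed to the gateway
    recorder.record(t0, time.perf_counter_ns())
print(recorder.summary_us())
```

Tracking p99 and the maximum alongside the median matters because occasional slow orders, not the typical ones, tend to cause the worst fills.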

Comprehensive Error Handling and Resilience

Even with the most robust systems, errors are inevitable. A resilient trading system must be designed to anticipate and gracefully recover from unexpected events. This involves implementing comprehensive error handling mechanisms, such as robust try-catch blocks, circuit breakers that halt trading under extreme conditions, and automated restart capabilities for critical services. Detailed logging of all operations, errors, and system states is essential for post-mortem analysis and debugging. Designing for fault tolerance, including redundant components and failover strategies, helps maintain system uptime and prevents minor issues from escalating into major operational failures.

- Implement robust exception handling for all system components.

- Design circuit breakers to prevent runaway strategies.

- Automate critical service restarts upon failure detection.

- Maintain detailed and structured logs for diagnostics.

- Develop failover mechanisms for key infrastructure elements.
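As an illustration of the circuit breaker idea, the sketch below wraps any call, counts consecutive failures, and rejects further calls for a cooldown period once a limit is hit. It is a simplified version of the general pattern, not a full trading halt mechanism; the failure limit, cooldown, and injectable `clock` are assumptions made for the example.

```python
import logging
import time

logger = logging.getLogger("execution")


class CircuitBreaker:
    """Opens after `max_failures` consecutive errors and blocks calls
    until `cooldown_s` seconds have passed."""

    def __init__(self, max_failures: int = 3, cooldown_s: float = 30.0,
                 clock=time.monotonic) -> None:
        self.max_failures = max_failures
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the breaker is closed

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        if self.clock() - self.opened_at >= self.cooldown_s:
            # Cooldown elapsed: close again and let the next call through.
            self.opened_at = None
            self.failures = 0
            return True
        return False

    def call(self, fn, *args, **kwargs):
        if not self.allow():
            raise RuntimeError("circuit open: call rejected")
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            logger.exception("call failed (%d/%d)", self.failures, self.max_failures)
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        self.failures = 0  # any success resets the streak
        return result
```

Order submission could then go through something like `breaker.call(submit_order, order)`, where `submit_order` is whatever function your system uses. For a live strategy you may prefer that reopening requires manual confirmation rather than a timer.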

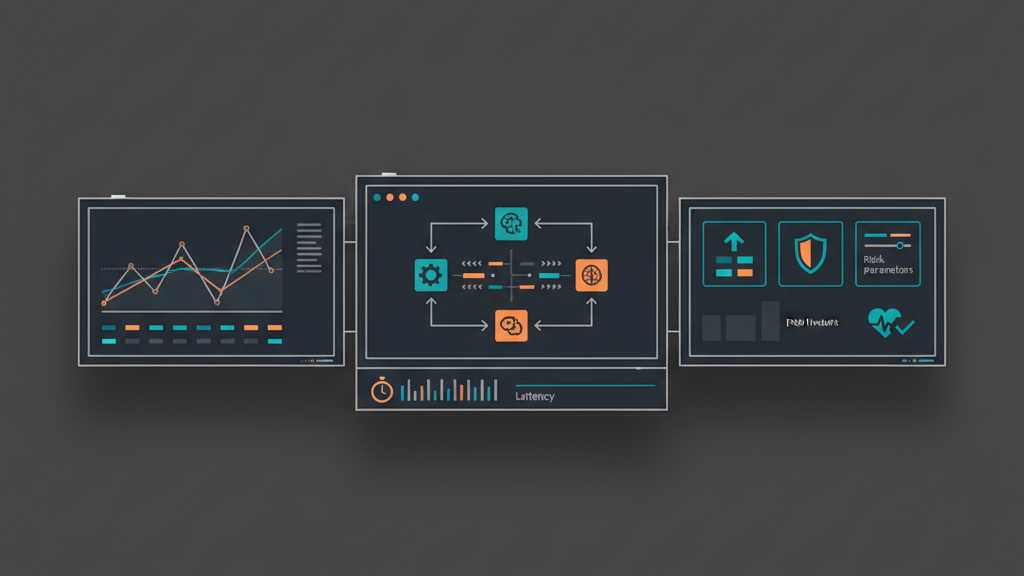

Real-time Monitoring and Alerting

Continuous, real-time monitoring is crucial for maintaining the health and performance of your live trading systems. This encompasses tracking system metrics like CPU utilization, memory usage, and network activity, alongside application-specific metrics such as strategy PnL, fill rates, order queue depth, and connectivity status to exchanges and data feeds. Proactive alerting systems should notify operators immediately of any anomalies, performance degradation, or critical failures. Custom dashboards that visualize key performance indicators provide a consolidated view of the trading environment, enabling rapid identification and resolution of potential issues before they impact trading outcomes.

- Monitor system resource usage: CPU, memory, disk, network.

- Track strategy performance metrics: PnL, fill rates, slippage.

- Implement alerts for connectivity loss or data feed interruptions.

- Set up custom dashboards for real-time operational oversight.

- Configure threshold-based alerts for critical trading parameters.
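Threshold-based alerting can be as simple as a table of rules evaluated against each metrics snapshot. The sketch below assumes hypothetical metric names (`cpu_pct`, `feed_gap_s`, `fill_rate`) and illustrative thresholds; how the returned messages reach an operator (pager, chat, email) is left out.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AlertRule:
    metric: str
    breached: Callable[[float], bool]
    message: str


# Illustrative thresholds only; tune them to your own system.
RULES = [
    AlertRule("cpu_pct", lambda v: v > 90.0, "CPU above 90%"),
    AlertRule("feed_gap_s", lambda v: v > 2.0, "no market data for over 2 s"),
    AlertRule("fill_rate", lambda v: v < 0.50, "fill rate below 50%"),
]


def evaluate(snapshot: dict[str, float], rules=RULES) -> list[str]:
    """Return alert messages for every breached or missing metric."""
    alerts = []
    for rule in rules:
        if rule.metric not in snapshot:
            # A metric that stops reporting is itself worth an alert.
            alerts.append(f"metric missing: {rule.metric}")
        elif rule.breached(snapshot[rule.metric]):
            alerts.append(f"{rule.message} (value={snapshot[rule.metric]})")
    return alerts
```

Treating a missing metric as an alert is a deliberate choice here: a silent collector can otherwise look identical to a healthy system.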

Pre-Trade and Post-Trade Risk Controls

Automated risk management is a fundamental component of any reliable algo trading system. Pre-trade risk controls prevent excessive exposure by enforcing limits on order size, maximum position, and daily loss, along with instrument-specific volatility thresholds. These controls act as a last line of defense before an order is placed. Post-trade controls continuously monitor open positions, realized PnL, and exposure against predefined thresholds. If a risk parameter is breached, automated kill switches or circuit breakers can halt trading, liquidate positions, or disable strategies. Integrating these controls directly into the execution pathway helps contain losses that could otherwise become catastrophic.

- Implement granular limits on order size and daily volume.

- Enforce maximum position size and exposure limits per instrument.

- Set automated daily profit and loss thresholds.

- Integrate kill switches for immediate strategy halting.

- Monitor margin utilization and capital allocation in real time.
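A pre-trade check of this kind might look like the sketch below, which gates every order on size, resulting position, and daily loss, and trips a kill switch once the loss limit is reached. The limit values and field names are hypothetical, and a real system would also need to account for working orders and margin.

```python
from dataclasses import dataclass


@dataclass
class RiskLimits:
    max_order_qty: int = 1_000
    max_position: int = 5_000
    max_daily_loss: float = 10_000.0


@dataclass
class RiskState:
    position: int = 0          # signed: positive long, negative short
    realized_pnl: float = 0.0  # for the current trading day
    killed: bool = False       # kill switch; once set, nothing passes


def check_order(signed_qty: int, limits: RiskLimits, state: RiskState) -> tuple[bool, str]:
    """Pre-trade check. `signed_qty` > 0 buys, < 0 sells."""
    if state.killed:
        return False, "kill switch active"
    if state.realized_pnl <= -limits.max_daily_loss:
        state.killed = True  # trip the switch so later orders fail fast
        return False, "daily loss limit breached"
    if abs(signed_qty) > limits.max_order_qty:
        return False, "order size limit"
    if abs(state.position + signed_qty) > limits.max_position:
        return False, "position limit"
    return True, "ok"
```

Note that the kill switch stays set even if PnL later recovers; in this sketch, re-enabling trading is assumed to be a deliberate manual step.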

Deployment and Version Control Best Practices

Managing changes to your algo strategies and infrastructure requires a disciplined approach. Utilizing version control systems like Git is essential for tracking all code changes, enabling easy rollbacks to previous stable versions. A structured deployment pipeline, including development, testing, staging, and production environments, ensures that changes are thoroughly validated before going live. Automated testing in staging environments can catch regressions and performance issues. Implementing a clear change management protocol, coupled with phased rollouts or dark launches for new features, minimizes the risk of introducing errors into the live trading environment. This systematic approach contributes significantly to maintaining execution reliability.

- Use version control for all code and configuration files.

- Implement a multi-stage deployment pipeline (dev, test, staging, prod).

- Automate unit, integration, and performance testing.

- Establish clear rollback procedures for quick recovery.

- Document all changes and deployment schedules comprehensively.
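One small safeguard that fits into such a pipeline is verifying that the configuration about to go live is identical to the one validated in staging. The sketch below does this by hashing a canonical JSON form of the config; the function names and config keys are hypothetical, and it assumes the config is JSON-serializable.

```python
import hashlib
import json


def config_fingerprint(config: dict) -> str:
    """Stable SHA-256 of a config dict, independent of key order."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def safe_to_promote(staging: dict, production_candidate: dict) -> bool:
    """True only if the candidate matches what was tested in staging."""
    return config_fingerprint(staging) == config_fingerprint(production_candidate)
```

Logging the fingerprint with each deployment also gives rollbacks a concrete target: the last fingerprint known to have run cleanly.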