In high-frequency and even medium-frequency algorithmic trading, the difference between a profitable strategy and a losing one often boils down to execution fill quality. It’s not enough to simply have a good signal; how your orders interact with the market and are ultimately filled is paramount. Two critical metrics for measuring execution fill quality are latency and slippage. These aren’t just abstract concepts; they represent tangible costs and missed opportunities that can erode alpha. Developing a robust framework to capture, analyze, and act upon these metrics is essential for any serious algo trading operation. This involves more than just logging timestamps; it requires a deep understanding of market microstructure, system architecture, and the practical challenges of live execution.

Understanding Execution Latency in Detail

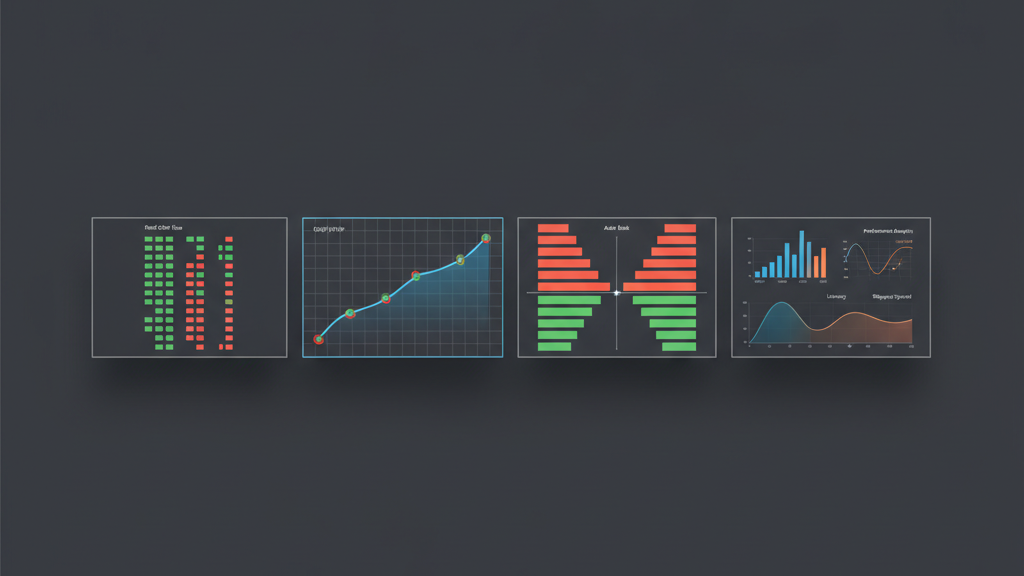

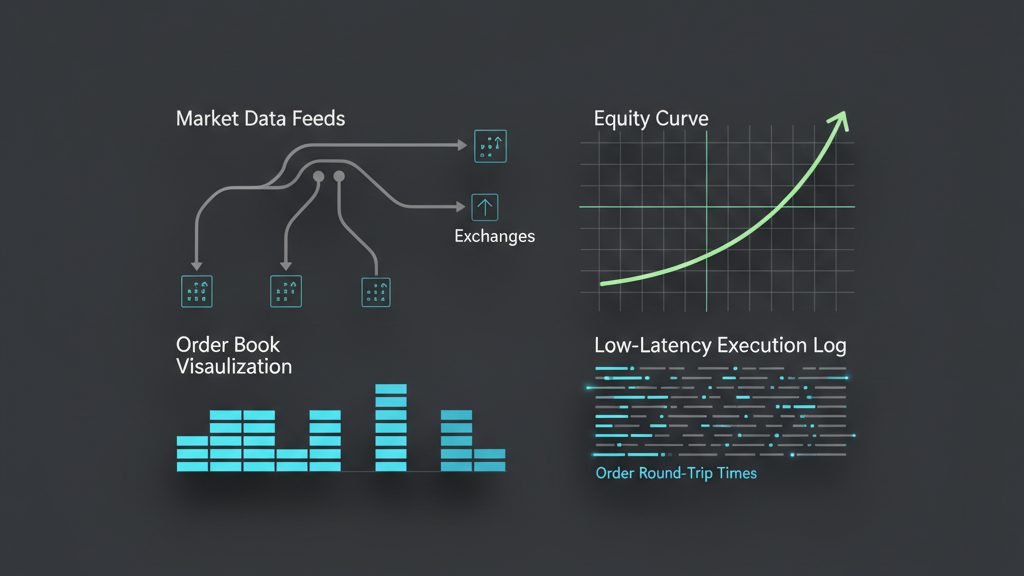

Latency in algorithmic trading isn’t a single, monolithic number; it’s a composite of stages, each contributing to the total time from a market event to the resulting fill. The chain typically runs as follows: market data is received, the strategy processes it and generates a signal, an order is constructed and transmitted to the broker or exchange, and the order is then acknowledged and potentially filled. Each hop, from network packet travel to queueing in the exchange matching engine, adds delay. For market-making strategies, microsecond differences can determine whether a quote is stale or a trade is missed. Separating these components (network latency, processing latency, and exchange latency) is crucial for pinpointing bottlenecks and accurately measuring execution fill quality. High latency, particularly for time-sensitive strategies, translates directly into increased adverse selection and degraded fill prices.
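
As a rough illustration of splitting total latency into stages, here is a minimal sketch. The `OrderTimestamps` record and its field names are hypothetical, and it assumes every timestamp is taken in nanoseconds on one synchronized clock. Without venue-side timestamps, the order-to-acknowledgement stage lumps together network travel in both directions and exchange processing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrderTimestamps:
    """Hypothetical per-order timestamps, in nanoseconds on one synchronized clock."""
    md_received_ns: int   # market data tick arrived at our host
    signal_ns: int        # strategy decided to trade
    order_sent_ns: int    # order left our process
    ack_ns: int           # broker/exchange acknowledgement received
    first_fill_ns: int    # first fill report received


def latency_breakdown_us(ts: OrderTimestamps) -> dict:
    """Split tick-to-fill time into stages, reported in microseconds."""
    stages_ns = {
        "processing": ts.signal_ns - ts.md_received_ns,
        "order_build": ts.order_sent_ns - ts.signal_ns,
        # Network out + exchange handling + network back, not separable here.
        "round_trip_to_ack": ts.ack_ns - ts.order_sent_ns,
        "ack_to_first_fill": ts.first_fill_ns - ts.ack_ns,
    }
    if any(v < 0 for v in stages_ns.values()):
        raise ValueError("timestamps out of order; check clock synchronization")
    breakdown = {name: ns / 1_000 for name, ns in stages_ns.items()}
    breakdown["total"] = (ts.first_fill_ns - ts.md_received_ns) / 1_000
    return breakdown


if __name__ == "__main__":
    example = OrderTimestamps(0, 40_000, 55_000, 455_000, 1_455_000)
    for stage, micros in latency_breakdown_us(example).items():
        print(f"{stage:>18}: {micros:10.1f} us")
```

Reporting per-stage numbers rather than one total makes it easier to see whether a slow order was slow inside your own process or somewhere beyond it.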

Quantifying Slippage in Live Trading

Slippage, at its core, is the difference between an expected trade price and the actual executed price. It is usually a cost, but it can also be favorable (positive slippage, often called price improvement) when the market moves in the order’s favor before the fill; negative slippage is far more common. It’s not just about market volatility; order size relative to available liquidity, market impact, and the chosen execution algorithm all play a role. Measuring slippage effectively requires logging a reference price for each order (for a market order, the quote on the side being taken at submission, i.e. the ask for a buy and the bid for a sell; for a limit order, the limit price) and comparing it against the volume-weighted average price (VWAP) of the fills for that order. Because a higher fill price hurts a buy but helps a sell, the sign of the difference must be adjusted for order side. This comparison provides a concrete dollars-and-cents metric for execution efficiency, allowing traders to quantify the hidden costs of their trading activity. Accurately attributing slippage to specific market conditions or execution issues is key for strategy refinement.

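- Implicit Slippage: The difference between the midpoint at order submission and the fill price. It captures the half-spread paid plus any market impact and price movement while the order was working.

- Explicit Slippage: For a market order, the difference between the quoted price at submission and the fill price, usually due to market movement or insufficient depth at the best price. For a resting limit order, which cannot fill at a price worse than its limit, the difference between the limit price and the fill price is either zero or a price improvement.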

- VWAP Slippage: Comparing the actual VWAP of fills against the theoretical VWAP if the order had been filled instantaneously at the market price at submission.
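
The measurements above all reduce to comparing the VWAP of an order's fills against some reference price. The sketch below shows one way to do that in basis points. The function names and the sign convention (positive means a worse-than-reference fill) are assumptions made for illustration; the choice of reference price (midpoint, touch, or limit price) is left to the caller.

```python
def fill_vwap(fills):
    """Volume-weighted average price of a list of (price, quantity) fills."""
    total_qty = sum(qty for _, qty in fills)
    if total_qty <= 0:
        raise ValueError("no filled quantity")
    return sum(price * qty for price, qty in fills) / total_qty


def slippage_bps(side, reference_price, fills):
    """Signed slippage in basis points.

    Positive = cost (bought above / sold below the reference price).
    Negative = price improvement.
    """
    if side not in ("buy", "sell"):
        raise ValueError("side must be 'buy' or 'sell'")
    sign = 1 if side == "buy" else -1
    vwap = fill_vwap(fills)
    return sign * (vwap - reference_price) / reference_price * 10_000


if __name__ == "__main__":
    fills = [(100.02, 300), (100.04, 200)]   # fill VWAP is 100.028
    print(round(slippage_bps("buy", 100.00, fills), 4))   # cost for a buy
    print(round(slippage_bps("sell", 100.00, fills), 4))  # improvement for a sell
```

Passing the submission-time midpoint as `reference_price` gives the implicit measure, while passing the ask (for a buy) or bid (for a sell) gives the explicit one.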

Data Collection Infrastructure for Fill Quality Metrics

Building a reliable data collection infrastructure is foundational for measuring execution fill quality using latency and slippage metrics. This isn’t just about dumping logs; it requires precise timestamping at each critical point within the trading system: the moment a signal is generated, the API call for order submission, the confirmation of the order, and each subsequent fill. High-resolution timestamps, synchronized across all system components (for example via NTP or PTP), are non-negotiable. Without them, dissecting the true journey of an order and accurately calculating latency components becomes impossible. Furthermore, robust logging of order parameters (price, quantity, type), market data snapshots at the time of submission, and detailed fill reports (price, quantity, timestamp per fill) is essential. Maintaining data integrity and correlating these disparate data points precisely are significant challenges, especially across different execution venues or broker APIs, which may report at varying granularities.
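
One simple way to capture these events is an append-only log of JSON lines, one per order-lifecycle event. The following is a minimal sketch, not a production logger: `log_event`, the event names, and the field names are all hypothetical. It records both a wall-clock timestamp (useful for correlating across hosts, subject to clock sync quality) and a monotonic timestamp (useful for intervals within one process).

```python
import io
import json
import time
from typing import Any, TextIO


def log_event(sink: TextIO, order_id: str, event: str, **fields: Any) -> dict:
    """Append one order-lifecycle event as a JSON line and return the record."""
    record = {
        "order_id": order_id,
        "event": event,                   # e.g. "signal", "submit", "ack", "fill"
        "wall_ns": time.time_ns(),        # wall clock, for cross-host correlation
        "mono_ns": time.monotonic_ns(),   # monotonic clock, for in-process intervals
        **fields,                         # order parameters, quotes, fill details
    }
    sink.write(json.dumps(record) + "\n")
    return record


if __name__ == "__main__":
    sink = io.StringIO()  # stand-in for a file or message queue
    log_event(sink, "ord-1", "submit", side="buy", qty=500, limit=100.05,
              bid=100.00, ask=100.02)
    log_event(sink, "ord-1", "fill", price=100.02, qty=300)
    print(sink.getvalue(), end="")
```

Keying every record by `order_id` is what later allows submissions, acknowledgements, and fills to be joined back into a single order timeline.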

Analyzing Latency and Slippage Data for Insights

Once collected, raw latency and slippage data need sophisticated analysis to yield actionable insights. Simply looking at averages can be misleading; distributions and outliers often reveal more critical information. For latency, analyzing histograms of time-to-fill can expose specific peaks that correspond to network congestion, exchange throttling, or processing spikes in your own system. Similarly, slippage analysis should involve examining distributions across different asset classes, order sizes, times of day, and volatility regimes. Are certain order types consistently performing worse? Is slippage higher during specific news events or market open/close? Visualizing these metrics over time, perhaps correlating them with market data events, can highlight systemic issues or transient conditions that significantly impact execution. Identifying these patterns allows for targeted optimizations, whether it’s tweaking order routing logic, adjusting execution algorithms, or improving system hardware.

- Distribution Analysis: Use histograms and box plots to visualize the spread and skewness of latency and slippage, rather than just mean values.

- Correlation with Market Data: Overlay latency and slippage data with metrics like volatility, volume, and bid-ask spread to identify likely drivers. Correlation alone does not establish causation, so treat these relationships as hypotheses to test.

- Outlier Detection: Implement robust methods to identify and investigate extreme latency or slippage events, which often point to critical system or market issues.

- Segmented Analysis: Break down metrics by instrument, order type, strategy, or time of day to uncover granular performance differences.
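
The techniques listed above can be sketched with the standard library alone. The helper names below are hypothetical, the percentile uses the nearest-rank definition, and outliers are flagged by distance from the median in units of median absolute deviation (MAD); the threshold of 5 is an arbitrary illustrative choice, not a recommendation.

```python
import math
import statistics
from collections import defaultdict


def percentile(values, pct):
    """Nearest-rank percentile (pct in 0..100) of a non-empty sequence."""
    if not values:
        raise ValueError("empty sample")
    ordered = sorted(values)
    rank = max(1, math.ceil(len(ordered) * pct / 100))
    return ordered[rank - 1]


def summarize(values):
    """Mean alongside tail percentiles, since the mean alone can mislead."""
    return {
        "n": len(values),
        "mean": statistics.fmean(values),
        "p50": percentile(values, 50),
        "p95": percentile(values, 95),
        "p99": percentile(values, 99),
        "max": max(values),
    }


def mad_outliers(values, threshold=5.0):
    """Values further than threshold * MAD from the median."""
    med = statistics.median(values)
    mad = statistics.median(abs(v - med) for v in values)
    if mad == 0:
        return []
    return [v for v in values if abs(v - med) / mad > threshold]


def summarize_by(records, key, metric):
    """Segmented analysis: summarize `metric` separately for each value of `key`."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec[key]].append(rec[metric])
    return {group: summarize(vals) for group, vals in groups.items()}


if __name__ == "__main__":
    latencies_us = [100, 110, 105, 95, 102, 98, 101, 5000]
    print(summarize(latencies_us))
    print(mad_outliers(latencies_us))
```

In this sample a single 5000 µs order drags the mean far above the median, which is exactly the kind of distortion that percentile and outlier views are meant to expose.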

Mitigating Execution Risks through Optimization

Understanding latency and slippage isn’t just an academic exercise; it drives tangible optimizations to mitigate execution risks. Options include co-location to minimize network latency, smarter order routing logic that dynamically selects the fastest execution venue, and execution algorithms such as TWAP, which spreads an order evenly over a time window, or VWAP, which follows the expected volume profile, to reduce market impact. For slippage, techniques range from slicing a large parent order into smaller child orders, or using iceberg orders that display only part of the total quantity, to dynamic limit pricing that adjusts based on real-time order book depth and recent volatility. The trade-off between speed and price improvement is constant, and optimized execution means finding the right balance for each specific strategy and market context. This continuous feedback loop of measurement, analysis, and optimization is a cornerstone of robust algorithmic trading system development, aiming to squeeze out every basis point of performance.
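
To make the idea of order slicing concrete, here is a minimal sketch of a plain TWAP-style schedule that splits a parent order into near-equal child orders spaced evenly over a window. The function name and parameters are hypothetical, and a real execution algorithm would add randomization, limit pricing, and reactions to market conditions that this sketch omits.

```python
def twap_slices(total_qty: int, n_slices: int, start_s: float, end_s: float):
    """Return [(send_time_s, child_qty), ...] covering total_qty exactly."""
    if total_qty <= 0 or n_slices <= 0 or end_s <= start_s:
        raise ValueError("need positive quantity, slice count, and window length")
    base, extra = divmod(total_qty, n_slices)
    interval = (end_s - start_s) / n_slices
    schedule = []
    for i in range(n_slices):
        qty = base + (1 if i < extra else 0)  # spread the remainder over early slices
        if qty > 0:
            schedule.append((start_s + i * interval, qty))
    return schedule


if __name__ == "__main__":
    for send_time, qty in twap_slices(1000, 3, 0.0, 60.0):
        print(f"t={send_time:5.1f}s  qty={qty}")
```

Smaller slices reduce the footprint of each child order but stretch the execution window, which is the speed-versus-price trade-off described above.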

Integrating Fill Quality into Backtesting and Simulation

Effective backtesting isn’t just about validating strategy signals; it must also realistically account for execution costs, including expected latency and slippage. Neglecting these in backtesting leads to overly optimistic performance projections that crumble in live trading. Simulating execution fill quality requires more than simply deducting a fixed percentage from every trade. It involves incorporating realistic models of market impact based on historical order book depth and executed volume, simulating network and processing delays, and even accounting for partial fills or order rejections. This requires high-fidelity historical tick data, including level 2 or level 3 order book snapshots, to accurately model how an order would have interacted with the market. While perfect simulation is impossible, building an execution simulator that can project a plausible range of latency and slippage for hypothetical orders provides a far more robust assessment of a strategy’s true potential. This integration is critical for developing strategies that are truly robust to real-world execution constraints.

- Micro-slippage Models: Incorporate models that estimate slippage based on order size, market depth, and volatility from historical data.

- Latency Distribution Simulation: Apply observed latency distributions from live trading to simulated orders, rather than a fixed latency value.

- Partial Fill Logic: Simulate scenarios where orders are partially filled or require multiple messages to complete, reflecting real-world execution.

- Market Impact Models: Utilize historical trade data and order book changes to estimate the price movement caused by simulated large orders.
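
A very small execution simulator can combine several of these ideas: sample a latency from observed values, find the book as it stood when the order would have arrived, and walk the ask side to produce fills, including a partial fill if depth runs out. The sketch below is illustrative only. The snapshot layout and function names are assumptions, and it ignores queue position, hidden liquidity, and the effect of the simulated order on later book states.

```python
import random


def walk_book(levels, qty):
    """Fill qty against (price, size) levels, best price first.

    Returns (fills, unfilled_qty); unfilled_qty > 0 means a partial fill.
    """
    fills, remaining = [], qty
    for price, size in levels:
        if remaining <= 0:
            break
        take = min(size, remaining)
        fills.append((price, take))
        remaining -= take
    return fills, remaining


def simulate_buy(snapshots, decision_time_us, qty, observed_latency_us, rng):
    """Simulate a marketable buy that reaches the book after a sampled delay.

    snapshots: list of (timestamp_us, ask_levels), sorted by timestamp.
    observed_latency_us: latencies measured in live trading, sampled empirically.
    """
    arrival_us = decision_time_us + rng.choice(observed_latency_us)
    book = None
    for ts, asks in snapshots:
        if ts <= arrival_us:
            book = asks  # latest snapshot at or before arrival
        else:
            break
    if book is None:
        raise ValueError("no book snapshot at or before order arrival")
    fills, unfilled = walk_book(book, qty)
    return {"arrival_us": arrival_us, "fills": fills, "unfilled": unfilled}


if __name__ == "__main__":
    snapshots = [
        (0,   [(100.00, 200), (100.01, 300)]),
        (500, [(100.01, 100), (100.02, 500)]),
    ]
    rng = random.Random(42)  # seeded for reproducible runs
    print(simulate_buy(snapshots, 0, 250, [120, 300, 650, 900], rng))
```

Running the same hypothetical order many times with different sampled latencies yields a range of plausible fill prices rather than a single optimistic one.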