Developing robust algorithmic trading strategies extends far beyond signal generation. A crucial and often underappreciated component is position sizing: deciding how much capital to allocate to each trade. That decision directly drives risk exposure, capital efficiency, and overall strategy profitability. For rule-based systems, the sizing method and its design constraints need to be defined explicitly. This isn’t just simple arithmetic; the capital allocation logic has to account for market dynamics, execution realities, and the strategy’s risk profile. Poor sizing can quickly erode profits, even from otherwise strong trading signals. The sections below look at how position sizing methods interact with the practical constraints of rule-based strategy design, with the goal of building resilient and profitable systems.

Fundamental Position Sizing Methods and Their Constraints

Position sizing isn’t a one-size-fits-all problem; the right method depends on the strategy’s risk appetite, asset class, and execution environment. Common approaches include a fixed dollar amount per trade, a fixed share count, a percentage of account equity, and volatility-adjusted sizing. Fixed dollar or share methods are simple but don’t adapt to changing account equity or market conditions, so they suit only very stable, low-volatility strategies or a small, fixed pool of capital. Percentage-of-equity sizing, such as a 1% risk rule, scales with the account but requires a clear definition of ‘risk per trade’, usually tied to a stop-loss. Volatility-adjusted sizing, for example using Average True Range (ATR) to set the position size, attempts to normalize risk across instruments and market regimes so that a more volatile asset doesn’t disproportionately impact the portfolio. Each method brings its own design constraints, from the computational overhead of dynamic adjustments to the need for reliable volatility data feeds.
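As a rough illustration of volatility-adjusted sizing, the sketch below derives a share count from ATR. The function names, the 14-bar default, the simple-average ATR (rather than Wilder's smoothed version), and the choice of a stop placed at a multiple of ATR are all assumptions made for the example, not a prescribed implementation.

```python
from typing import Sequence


def average_true_range(highs: Sequence[float], lows: Sequence[float],
                       closes: Sequence[float], period: int = 14) -> float:
    """Simple average of the true range over the last `period` bars."""
    if not (len(highs) == len(lows) == len(closes)):
        raise ValueError("highs, lows and closes must have equal length")
    if len(closes) < period + 1:
        raise ValueError("need at least period + 1 bars")
    true_ranges = []
    for i in range(1, len(closes)):
        prev_close = closes[i - 1]
        # True range: the widest of the bar range and the two gaps from the prior close.
        true_ranges.append(max(highs[i] - lows[i],
                               abs(highs[i] - prev_close),
                               abs(lows[i] - prev_close)))
    return sum(true_ranges[-period:]) / period


def atr_position_size(equity: float, risk_fraction: float,
                      atr: float, atr_multiple: float = 2.0) -> int:
    """Whole shares such that a stop at atr_multiple * ATR risks about risk_fraction of equity."""
    if atr <= 0 or atr_multiple <= 0:
        raise ValueError("atr and atr_multiple must be positive")
    risk_budget = equity * risk_fraction   # capital at risk on this trade
    stop_distance = atr * atr_multiple     # assumed per-share loss if stopped out
    return int(risk_budget / stop_distance)


# Same account and risk budget, two hypothetical instruments:
calm_size = atr_position_size(100_000, 0.01, atr=2.5)
volatile_size = atr_position_size(100_000, 0.01, atr=5.0)
```

With these inputs the instrument with twice the ATR receives half the share count, which is the normalization effect described above.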

Implementing Percentage Risk and Kelly Criterion Models

For many rule-based strategies, percentage risk models provide a scalable approach. The position size is calculated so that the loss incurred if the stop-loss is hit does not exceed a predefined percentage of total trading capital (e.g., 1-2%). The formula is typically Position Size = (Account Equity * Risk Percentage) / |Entry Price - Stop Loss Price|, where the denominator is the per-unit stop distance; taking the absolute value keeps the result positive for short positions, where the stop sits above the entry. The challenge lies in accurately determining the stop-loss distance, especially in volatile markets where initial stops can be easily breached. The Kelly Criterion, while theoretically optimal for maximizing long-term wealth, often proves too aggressive in practice because it is sensitive to win rate and profit/loss ratio estimates, which are never perfectly known and can shift over time. Implementing Kelly typically requires significant modification to reduce its recommended leverage, making it more of a theoretical upper bound than a direct application. These models demand continuous re-evaluation during backtesting and live trading to ensure the underlying assumptions remain valid.

- Define clear stop-loss logic for accurate risk calculation.

- Regularly backtest sensitivity to risk percentage and stop-loss variations.

- Consider fractional Kelly or conservative modifications for practical application.

- Monitor the stability of win rates and average P/L to keep the model relevant.
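The percentage risk formula and a conservative Kelly variant can be sketched as follows. The Kelly expression used is the standard f* = W - (1 - W) / R, with W the win rate and R the ratio of average win to average loss. The function names, the half-Kelly default, and the 2% cap are illustrative assumptions, and the Kelly output is treated here as the fraction of equity risked per trade.

```python
def percent_risk_size(equity: float, risk_fraction: float,
                      entry_price: float, stop_price: float) -> int:
    """Whole units such that hitting the stop loses about risk_fraction of equity."""
    stop_distance = abs(entry_price - stop_price)  # works for longs and shorts
    if stop_distance == 0:
        raise ValueError("entry and stop prices must differ")
    return int((equity * risk_fraction) / stop_distance)


def kelly_fraction(win_rate: float, payoff_ratio: float) -> float:
    """Full Kelly fraction: W - (1 - W) / R. Negative means no edge."""
    if not 0.0 <= win_rate <= 1.0:
        raise ValueError("win_rate must be between 0 and 1")
    if payoff_ratio <= 0:
        raise ValueError("payoff_ratio must be positive")
    return win_rate - (1.0 - win_rate) / payoff_ratio


def fractional_kelly(win_rate: float, payoff_ratio: float,
                     scale: float = 0.5, cap: float = 0.02) -> float:
    """Scaled-down Kelly, floored at zero and capped (scale and cap are assumptions)."""
    full = kelly_fraction(win_rate, payoff_ratio)
    return min(max(full, 0.0) * scale, cap)


# Hypothetical estimates: 50% win rate, winners twice the size of losers.
full_kelly = kelly_fraction(0.5, 2.0)          # 0.25 of equity per trade
risk_fraction = fractional_kelly(0.5, 2.0)     # half Kelly is 0.125, capped to 0.02
shares = percent_risk_size(50_000, 0.01, entry_price=100.0, stop_price=95.0)
```

Full Kelly suggests risking a quarter of the account on each trade for these estimates, which shows why the text treats it as an upper bound; the cap keeps the result in the same range as a fixed percentage risk rule.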

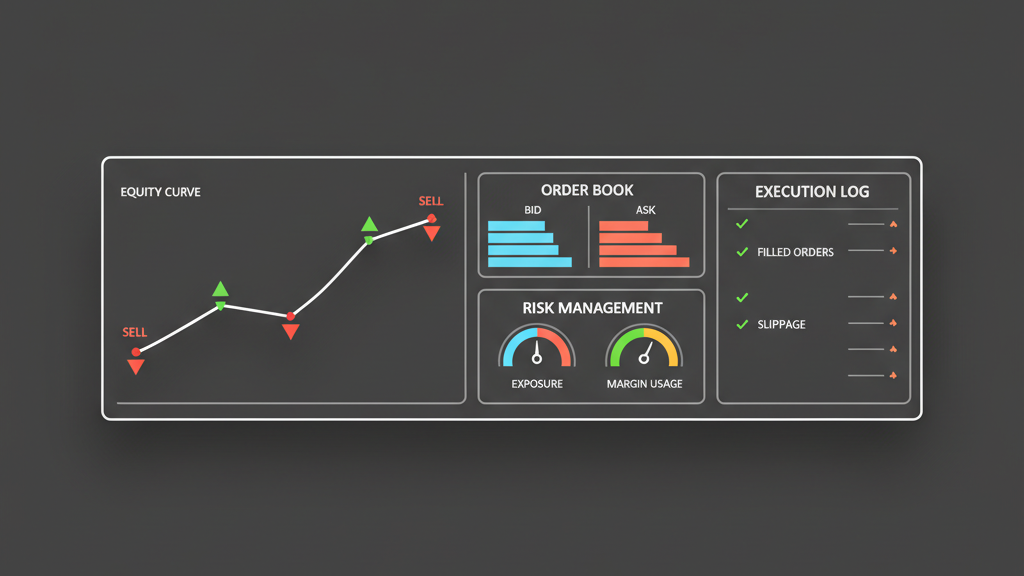

Mitigating Execution Slippage and Latency Impacts

Real-world execution is a significant constraint on theoretical position sizing. The calculated size assumes a perfect fill at the desired price, which is rarely the case, particularly for larger orders or less liquid assets. Slippage can significantly eat into expected profits, effectively increasing the ‘cost’ of the trade and reducing the true risk-adjusted return. Latency in order routing or market data can exacerbate this, leading to stale prices for sizing calculations or delayed order placements. Traders often have to pre-emptively reduce position sizes to account for potential slippage, especially in strategies that enter or exit during periods of high volatility. Advanced execution algorithms like TWAP (Time-Weighted Average Price) or VWAP (Volume-Weighted Average Price) can help mitigate market impact but might lead to partial fills or longer execution times, meaning the full intended position might not be established when the signal is strongest. This interplay forces a trade-off between ideal position size and achievable fill quality.
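One way to fold these execution constraints into sizing is sketched below: widen the assumed per-share loss by expected slippage, cap the order at a share of typical traded volume, and split what remains into equal child orders. All names, the two-sided slippage assumption, and the 5% participation default are hypothetical, and the equal slicing is only a naive stand-in for a real TWAP algorithm.

```python
from typing import List


def slippage_adjusted_size(equity: float, risk_fraction: float,
                           entry_price: float, stop_price: float,
                           slippage_per_share: float) -> int:
    """Percent-risk size with the stop distance widened by expected slippage."""
    # Assumption: adverse slippage on both the entry fill and the stop-out fill.
    effective_loss = abs(entry_price - stop_price) + 2 * slippage_per_share
    if effective_loss <= 0:
        raise ValueError("effective per-share loss must be positive")
    return int((equity * risk_fraction) / effective_loss)


def cap_by_liquidity(shares: int, avg_volume: float,
                     max_participation: float = 0.05) -> int:
    """Limit the order to a fraction of average traded volume in the execution window."""
    return min(shares, int(avg_volume * max_participation))


def equal_slices(total_shares: int, n_slices: int) -> List[int]:
    """Split an order into n near-equal child orders (naive time slicing)."""
    if n_slices <= 0 or total_shares < 0:
        raise ValueError("n_slices must be positive and total_shares non-negative")
    base, remainder = divmod(total_shares, n_slices)
    return [base + 1 if i < remainder else base for i in range(n_slices)]


ideal = slippage_adjusted_size(100_000, 0.01, 50.0, 48.0, slippage_per_share=0.0)
realistic = slippage_adjusted_size(100_000, 0.01, 50.0, 48.0, slippage_per_share=0.25)
capped = cap_by_liquidity(realistic, avg_volume=1_000, max_participation=0.25)
child_orders = equal_slices(capped, 4)
```

In this example the slippage allowance alone cuts the size from 500 to 400 shares, and the liquidity cap reduces it further, which is the trade-off between ideal size and achievable fill quality in concrete terms.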

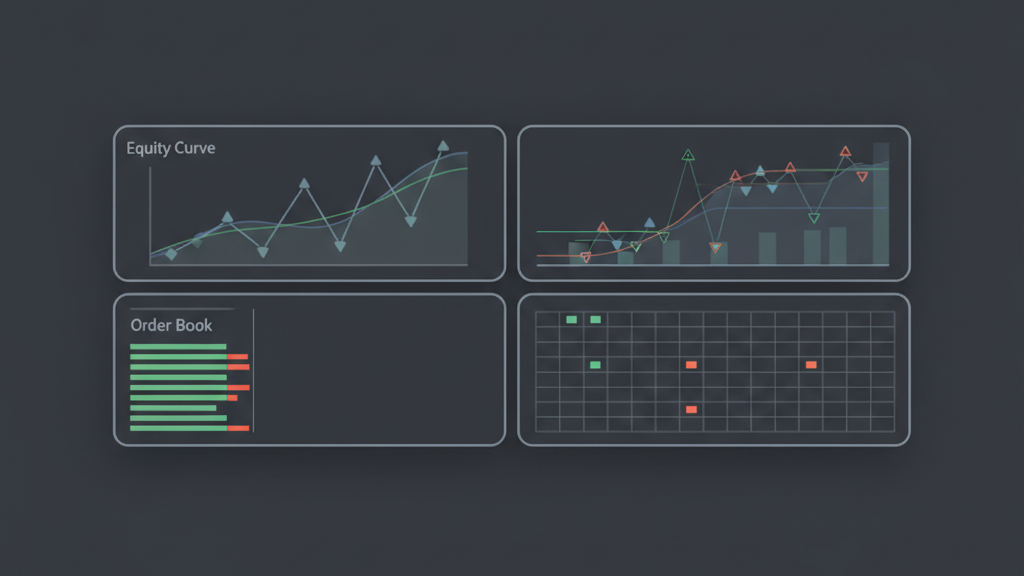

Dynamic Position Adjustment and Portfolio Capital Allocation

Sophisticated rule-based strategies often incorporate dynamic position sizing, where the allocated capital per trade adjusts based on evolving market conditions or internal strategy metrics. This can involve scaling positions up or down based on current market volatility, the correlation of an asset to the overall portfolio, or even a ‘confidence score’ derived from recent strategy performance. For instance, a strategy might reduce its exposure to highly correlated assets during periods of market stress or increase its size in a trending market after a series of successful trades. When managing a portfolio of multiple strategies, capital allocation becomes even more complex, as the position sizing of one strategy impacts the available capital and overall risk for others. This requires a robust, centralized risk management module that can dynamically rebalance capital across strategies or scale down all positions if aggregate risk limits are approached. The computational demands and data pipeline requirements for such dynamic adjustments are substantial and must be carefully designed to avoid bottlenecks or stale data issues during live operations.

- Integrate real-time volatility and correlation data for adaptive sizing.

- Implement a centralized risk engine for portfolio-level capital management.

- Design feedback loops for strategy performance to inform sizing adjustments.

- Account for increased computational load and potential latency in dynamic calculations.
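A centralized risk check of the kind described above might look like the following minimal sketch. The `ProposedPosition` type, the 5% aggregate limit, and the rule of scaling every position by the same factor are assumptions for illustration. Summing per-position risk also ignores correlation and treats all stops as being hit together, which is a deliberately conservative simplification.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class ProposedPosition:
    strategy: str
    symbol: str
    shares: int            # negative for short positions
    risk_per_share: float  # distance from entry to stop


def scale_to_risk_limit(positions: List[ProposedPosition], equity: float,
                        max_portfolio_risk: float = 0.05) -> List[ProposedPosition]:
    """Scale all positions down proportionally if aggregate stop risk exceeds the limit."""
    total_risk = sum(abs(p.shares) * p.risk_per_share for p in positions)
    limit = equity * max_portfolio_risk
    if total_risk <= limit:
        return list(positions)
    factor = limit / total_risk
    # int() truncates toward zero, so scaled sizes never exceed the limit.
    return [replace(p, shares=int(p.shares * factor)) for p in positions]


proposals = [
    ProposedPosition("trend", "AAA", shares=100, risk_per_share=5.0),
    ProposedPosition("mean_rev", "BBB", shares=-200, risk_per_share=2.5),
]
approved = scale_to_risk_limit(proposals, equity=10_000)
```

Here the two proposals together risk 1,000 against a 500 limit, so both are halved. A production engine would also need current correlation estimates and fresh position data, which is where the computational and latency concerns listed above come in.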

Common Pitfalls and Realistic Limitations in Sizing

Even with well-defined position sizing methods, several pitfalls can undermine a strategy’s effectiveness. Over-optimization during backtesting is a common issue: sizing parameters are curve-fitted to historical data, leading to brittle performance in live markets. Another is underestimating transaction costs; commissions, exchange fees, and especially spread and slippage can significantly reduce net returns, which makes small, frequent trades less viable and becomes more costly as order size grows. Traders also often fail to account for ‘tail risk’ events, meaning unforeseen, extreme market moves that can blow through stop-losses and intended risk limits and produce much larger losses than the sizing model anticipated. Liquidity constraints are also critical; attempting to size into an illiquid market with large orders will inevitably lead to adverse price impact. Effective position sizing isn’t just about the ‘how much,’ but also the ‘can we actually get that size at a reasonable price?’ and ‘what if everything goes wrong?’ It demands continuous monitoring and a conservative approach to leverage and risk assumptions in live trading environments.
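The ‘what if everything goes wrong?’ question can be made concrete with a simple gap stress test, sketched below. It assumes a hypothetical scenario in which price gaps through the stop and the exit fills at some multiple of the planned stop distance. The function names, the gap multiple, and the tail-loss limit are illustrative assumptions rather than calibrated values.

```python
def gap_loss(shares: int, entry_price: float, stop_price: float,
             gap_multiple: float) -> float:
    """Loss if the exit fills at gap_multiple times the planned stop distance."""
    return abs(shares) * abs(entry_price - stop_price) * gap_multiple


def max_shares_under_gap(equity: float, max_tail_loss_fraction: float,
                         entry_price: float, stop_price: float,
                         gap_multiple: float) -> int:
    """Largest size whose stressed loss stays within the tail-loss limit."""
    stressed_distance = abs(entry_price - stop_price) * gap_multiple
    if stressed_distance <= 0:
        raise ValueError("stressed stop distance must be positive")
    return int((equity * max_tail_loss_fraction) / stressed_distance)


# A 1% risk rule on a 100,000 account with a 5-point stop gives 200 shares.
planned_shares = 200
stressed = gap_loss(planned_shares, 100.0, 95.0, gap_multiple=3.0)
tail_cap = max_shares_under_gap(100_000, 0.02, 100.0, 95.0, gap_multiple=3.0)
final_shares = min(planned_shares, tail_cap)
```

In this scenario the nominal 1% risk becomes a 3% loss, so a 2% tail-loss limit would reduce the position from 200 to 133 shares.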