Developing robust algorithmic trading systems goes beyond optimizing alpha; it fundamentally requires sophisticated risk controls to prevent catastrophic drawdown. A significant loss event can erase months or even years of profit, leading to severe capital erosion and a loss of confidence in the strategy. True resilience in automated trading is built not just on the ability to generate returns, but on the capacity to protect capital when market conditions shift unexpectedly or system anomalies occur. This demands a proactive approach, integrating risk management directly into the core architecture of the trading algorithm rather than treating it as a peripheral consideration. Understanding the vectors of potential failure, from market liquidity shocks to execution engine glitches, is crucial for building safeguards that activate precisely when needed, ensuring the longevity and viability of any automated trading operation.

Understanding Drawdown Dynamics in Algorithmic Trading

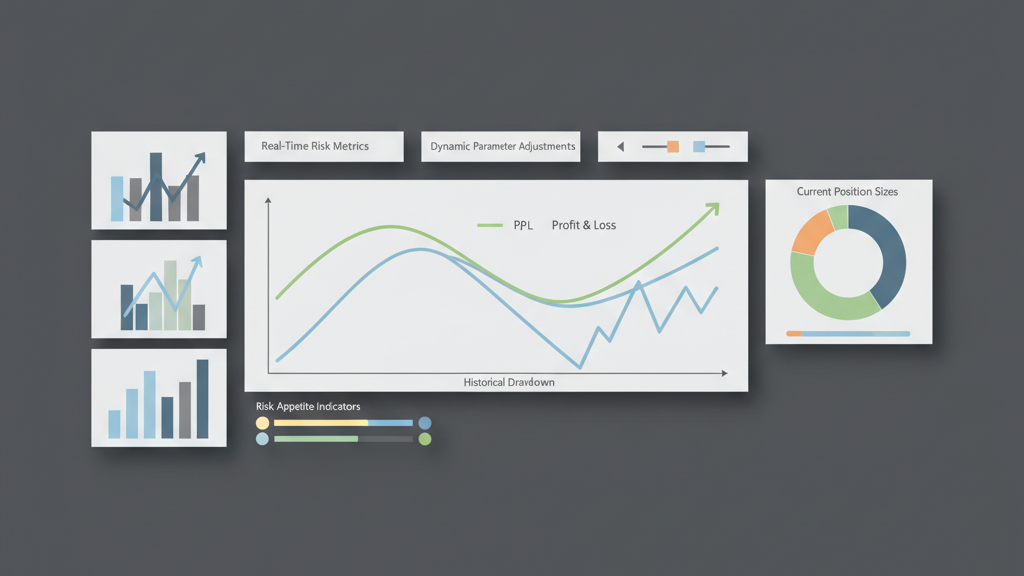

Catastrophic drawdown in an algorithmic context often stems from an unforeseen confluence of market events, systemic failures, or design flaws that overwhelm conventional risk parameters. Unlike manual trading, where human intuition might intervene, an algorithm will relentlessly follow its programmed logic, even into detrimental conditions, unless specifically coded to disengage or adapt. We’ve seen scenarios where models trained on stable market regimes fail spectacularly during flash crashes or extreme volatility, leading to rapid capital depletion. Identifying the ‘breaking points’ of a strategy during backtesting requires not just historical data, but also synthetic stress tests designed to push assumptions to their limits. A common oversight is optimizing for average returns without adequately penalizing tail risks, creating a system that performs well most of the time but is highly vulnerable to black swan events. Effective risk management starts with a deep, almost cynical, understanding of how the system can fail, focusing on edge cases rather than just the mean.
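
To make the point about penalizing tail risk concrete, here is a minimal Python sketch of two tail-aware metrics: maximum drawdown of an equity curve and expected shortfall of a return series. The function names and the 5% tail fraction are illustrative assumptions, not a prescribed method.

```python
from typing import Sequence


def max_drawdown(equity: Sequence[float]) -> float:
    """Largest peak-to-trough decline, as a positive fraction of the peak."""
    if not equity:
        raise ValueError("equity curve is empty")
    peak = equity[0]
    worst = 0.0
    for value in equity:
        peak = max(peak, value)
        if peak > 0:
            worst = max(worst, (peak - value) / peak)
    return worst


def expected_shortfall(returns: Sequence[float], alpha: float = 0.05) -> float:
    """Average of the worst `alpha` fraction of returns, as a positive loss."""
    if not returns:
        raise ValueError("return series is empty")
    ordered = sorted(returns)                      # worst returns first
    n_tail = max(1, int(len(ordered) * alpha))     # always keep at least one
    return -sum(ordered[:n_tail]) / n_tail


# Hypothetical equity curve: peak of 120, later trough of 80.
curve = [100.0, 120.0, 90.0, 110.0, 80.0]
print(f"max drawdown: {max_drawdown(curve):.1%}")
```

Two strategies with the same average return can differ sharply on these numbers, which is why ranking candidates on mean return alone tends to hide the fragile ones.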

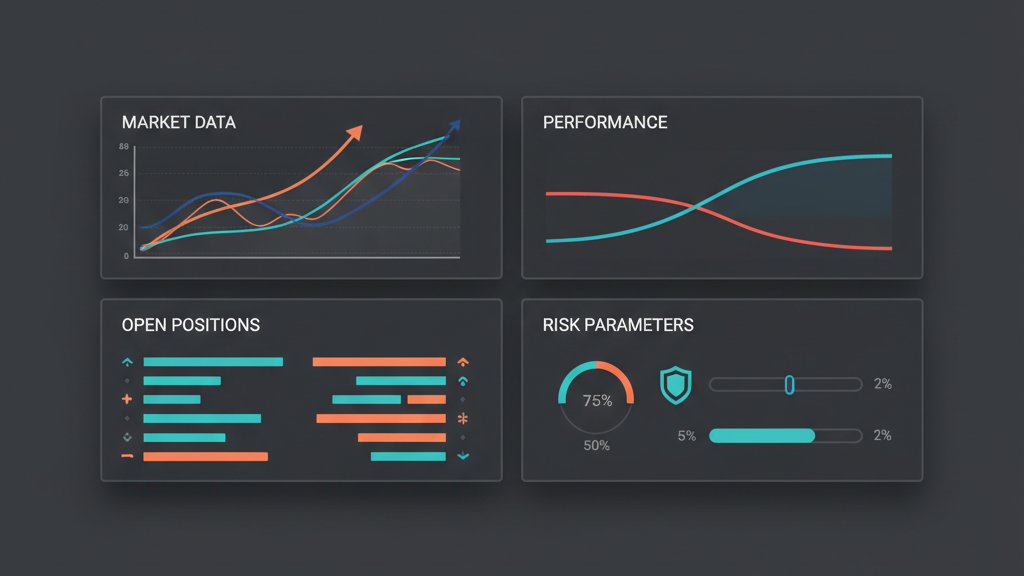

Real-time Monitoring and Automated Circuit Breakers

Implementing robust real-time monitoring is non-negotiable for preventing catastrophic drawdown. This isn’t just about tracking P&L; it involves a comprehensive suite of metrics designed to detect anomalous behavior in market data, strategy signals, order execution, and account health. Automated circuit breakers are the immediate defensive line, programmed to halt or significantly scale back trading activity when predefined thresholds are breached. These thresholds can relate to percentage capital drawdown, maximum open position size, daily P&L limits, or even liquidity metrics of the instruments being traded. The challenge lies in setting these limits dynamically, avoiding premature stops during normal volatility while ensuring rapid intervention when true systemic risk emerges. Granularity matters here; a global circuit breaker might be too blunt, whereas instrument-specific or strategy-specific controls offer more precise protection, allowing other parts of the portfolio to continue operating.

- Global capital drawdown limits (e.g., halt all trading after a 5% daily drop in equity).

- Strategy-specific loss limits (e.g., halt a strategy after it loses 1% of its allocated capital).

- Max open position limits per instrument or across the portfolio.

- Rate limits for orders and cancellations to prevent runaway algorithms.

- Liquidity checks: halting trades if market depth drops below a critical threshold.
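
The checks listed above can be sketched as a single evaluation function that maps a snapshot of account state to an action. This is a minimal sketch under stated assumptions: the `Snapshot` and `Limits` types, the field names, and every numeric default other than the 5% and 1% examples are hypothetical, and a production system would also need to cancel working orders and flatten positions.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Action(Enum):
    CONTINUE = "continue"
    HALT_STRATEGY = "halt_strategy"
    HALT_ALL = "halt_all"


@dataclass(frozen=True)
class Limits:
    max_daily_drawdown: float = 0.05   # global: 5% of start-of-day equity
    max_strategy_loss: float = 0.01    # per strategy: 1% of allocated capital
    max_position: float = 1_000.0      # hypothetical per-instrument cap, in units
    max_orders_per_sec: float = 20.0   # hypothetical order-rate cap
    min_book_depth: float = 50.0       # hypothetical minimum resting depth, in units


@dataclass(frozen=True)
class Snapshot:
    start_of_day_equity: float
    equity: float
    strategy_allocated: float
    strategy_pnl: float
    position: float
    orders_per_sec: float
    book_depth: float


def evaluate(snap: Snapshot, limits: Limits) -> Tuple[Action, str]:
    """Return the most severe action required, plus the reason for it."""
    daily_drawdown = 1.0 - snap.equity / snap.start_of_day_equity
    if daily_drawdown >= limits.max_daily_drawdown:
        return Action.HALT_ALL, "global daily drawdown limit"
    if snap.orders_per_sec > limits.max_orders_per_sec:
        return Action.HALT_ALL, "order rate limit (possible runaway algorithm)"
    if -snap.strategy_pnl >= limits.max_strategy_loss * snap.strategy_allocated:
        return Action.HALT_STRATEGY, "strategy loss limit"
    if abs(snap.position) > limits.max_position:
        return Action.HALT_STRATEGY, "position limit"
    if snap.book_depth < limits.min_book_depth:
        return Action.HALT_STRATEGY, "insufficient market depth"
    return Action.CONTINUE, "within limits"
```

Global checks run first so that a portfolio-level breach is never masked by a milder strategy-level result. Treating an order-rate breach as a global halt is one possible choice here; scoping it to the offending strategy would be equally defensible.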

Dynamic Position Sizing and Adaptive Capital Allocation

Static position sizing can significantly amplify losses during adverse market conditions. A proactive algorithmic risk management system employs dynamic position sizing, adjusting trade size based on current market volatility, available capital, and the perceived edge of the strategy. This often involves algorithms that calculate optimal position size using metrics like Average True Range (ATR), Value-at-Risk (VaR), or Conditional Value-at-Risk (CVaR). As volatility increases, the system automatically reduces position sizes, thereby lowering exposure. Conversely, during periods of low volatility or high conviction, positions might be scaled up cautiously. This adaptive approach extends to capital allocation across multiple strategies. If one strategy experiences sustained losses or elevated risk signals, the system should automatically reduce the capital allocated to it, or even temporarily disable it, reallocating resources to more stable or promising strategies. This dynamic recalibration is vital for preventing a single faltering strategy from dragging down the entire portfolio.
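
As an illustration of volatility-scaled sizing, the sketch below derives a position size from ATR so that a stop placed a fixed number of ATRs away risks a fixed fraction of equity. The function names, the 2x ATR stop distance, and the use of a simple average of true ranges (rather than Wilder's smoothing) are assumptions made for brevity.

```python
import math
from typing import Optional, Sequence


def average_true_range(
    highs: Sequence[float],
    lows: Sequence[float],
    closes: Sequence[float],
    period: int = 14,
) -> float:
    """Simple average of the last `period` true ranges (not Wilder's smoothing)."""
    if not (len(highs) == len(lows) == len(closes)):
        raise ValueError("highs, lows, and closes must have equal length")
    true_ranges = [
        max(
            highs[i] - lows[i],
            abs(highs[i] - closes[i - 1]),
            abs(lows[i] - closes[i - 1]),
        )
        for i in range(1, len(closes))
    ]
    if period <= 0 or len(true_ranges) < period:
        raise ValueError("not enough bars for the requested period")
    return sum(true_ranges[-period:]) / period


def position_size(
    equity: float,
    risk_fraction: float,
    atr: float,
    atr_multiple: float = 2.0,
    max_units: Optional[int] = None,
) -> int:
    """Units such that a stop `atr_multiple` ATRs away risks `risk_fraction` of equity."""
    if equity <= 0 or atr <= 0:
        return 0  # no capital or no usable volatility estimate: do not trade
    units = math.floor(equity * risk_fraction / (atr_multiple * atr))
    if max_units is not None:
        units = min(units, max_units)
    return max(units, 0)


# Doubling ATR halves the position for the same risk budget.
print(position_size(100_000, 0.01, atr=2.0))
print(position_size(100_000, 0.01, atr=4.0))
```

The same shape works with a VaR or CVaR estimate in place of the ATR-based stop distance; only the denominator changes.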

Impact of Data Quality and API Latency on Risk Controls

The efficacy of any algorithmic risk management system is profoundly dependent on the quality and timeliness of its input data and the reliability of its execution infrastructure. Stale or corrupted market data can lead to erroneous risk calculations and trigger false positives or, worse, fail to detect actual threats. Latency in receiving market data feeds or, critically, in submitting and confirming orders via exchange APIs, can create execution gaps where the perceived risk profile of a position deviates significantly from reality. For example, a circuit breaker might trigger based on a price feed, but high latency means the close order executes at a much worse price, leading to slippage that exceeds the intended stop loss. Proactive systems incorporate latency monitoring and data validation checks as integral parts of their risk architecture, often rejecting risk calculations or orders if data integrity or network conditions are compromised. Redundant data feeds and smart order routing with fallbacks are not luxuries, but necessities in this context.

- Validate incoming market data for freshness, completeness, and consistency.

- Monitor API response times and order confirmation latencies.

- Implement fail-safes for data feed disconnections or corrupted data streams.

- Account for network latency and slippage in risk limit calculations.

- Ensure robust error handling and retry logic for order submission failures.
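
A few of the checks above can be sketched as a gate that sits in front of the risk calculations. Everything named here (`Quote`, `quote_problems`, `stop_trigger`, and the thresholds) is hypothetical, and comparing a local clock with a venue timestamp assumes the two are synchronized.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Quote:
    bid: float
    ask: float
    exchange_ts: float  # venue timestamp, seconds since the epoch


def quote_problems(
    quote: Quote,
    now: float,
    last_mid: Optional[float] = None,
    max_age_s: float = 0.5,
    max_jump: float = 0.10,
) -> List[str]:
    """List the reasons a quote should not feed risk calculations."""
    problems = []
    if now - quote.exchange_ts > max_age_s:
        problems.append("stale")
    if not (math.isfinite(quote.bid) and math.isfinite(quote.ask)):
        problems.append("non_finite")
    elif quote.bid <= 0 or quote.ask <= 0:
        problems.append("non_positive")
    elif quote.bid >= quote.ask:
        problems.append("crossed_or_locked")
    elif last_mid is not None and last_mid > 0:
        mid = (quote.bid + quote.ask) / 2.0
        if abs(mid / last_mid - 1.0) > max_jump:
            problems.append("price_jump")
    return problems


def stop_trigger(loss_limit: float, expected_slippage: float) -> float:
    """Loss fraction at which to act so that loss plus slippage stays within the limit."""
    return max(0.0, loss_limit - expected_slippage)
```

An empty list means the quote passed every check. A non-empty list is a reason to skip the risk update or block new orders rather than act on suspect data, and `stop_trigger` shows one simple way to budget for slippage: act earlier by the amount you expect to lose on the way out.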

Stress Testing and Scenario Analysis for Tail Events

While historical backtesting is essential, it’s insufficient for truly preventing catastrophic drawdown because markets evolve and the historical record covers only the events that actually occurred. Proactive risk management demands extensive stress testing and scenario analysis that go beyond observed historical events. This involves simulating extreme, hypothetical market conditions: flash crashes, sudden liquidity evaporation, prolonged periods of high volatility, or even systemic failures like exchange outages. We employ techniques like Monte Carlo simulations with fat-tailed distributions to generate thousands of potential future market paths, evaluating strategy performance and risk exposure under each. This helps identify the strategy’s vulnerabilities to tail events and informs the design of more resilient risk controls. Understanding how the strategy would behave if a specific asset suddenly lost 20% of its value, or if correlation structures dramatically shifted, is critical for building truly robust systems. These simulations often reveal hidden dependencies and unexpected systemic risks that a purely historical analysis would miss.
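
A minimal sketch of a fat-tailed Monte Carlo follows, using only the Python standard library. It draws daily log returns from a Student-t distribution and records the maximum drawdown of each simulated path. The parameter values, the zero-drift buy-and-hold exposure, and the function names are all assumptions for illustration; a real stress test would replay the strategy's own logic on each path.

```python
import math
import random
from typing import List


def student_t(rng: random.Random, df: float) -> float:
    """Student-t draw built as a standard normal over sqrt(chi-square / df)."""
    z = rng.gauss(0.0, 1.0)
    chi2 = rng.gammavariate(df / 2.0, 2.0)  # chi-square with `df` degrees of freedom
    return z / math.sqrt(chi2 / df)


def simulate_max_drawdowns(
    n_paths: int = 2000,
    n_days: int = 252,
    daily_vol: float = 0.01,
    df: float = 3.0,
    seed: int = 7,
) -> List[float]:
    """Max drawdown of each simulated path under fat-tailed daily log returns."""
    if df <= 2.0:
        raise ValueError("df must exceed 2 for the variance to be finite")
    rng = random.Random(seed)
    # Rescale so the draws have standard deviation `daily_vol`.
    scale = daily_vol * math.sqrt((df - 2.0) / df)
    results = []
    for _ in range(n_paths):
        equity = peak = 1.0
        worst = 0.0
        for _ in range(n_days):
            equity *= math.exp(scale * student_t(rng, df))
            peak = max(peak, equity)
            worst = max(worst, 1.0 - equity / peak)
        results.append(worst)
    return results


def quantile(sorted_values: List[float], q: float) -> float:
    """Nearest-rank quantile of an already sorted list."""
    index = min(len(sorted_values) - 1, int(q * len(sorted_values)))
    return sorted_values[index]


drawdowns = sorted(simulate_max_drawdowns())
print(f"median max drawdown: {quantile(drawdowns, 0.50):.1%}")
print(f"99th percentile:     {quantile(drawdowns, 0.99):.1%}")
```

The gap between the median and the 99th percentile is the quantity of interest: circuit-breaker thresholds calibrated only to the median path will tend to be too loose for the tail.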

Post-Mortem Analysis and Continuous Improvement

Even with rigorous risk management in place, incidents will occur. The key to long-term success and preventing catastrophic drawdown is a disciplined approach to post-mortem analysis. Every significant drawdown, system anomaly, or near-miss event must be thoroughly investigated. This involves detailed log analysis, performance attribution, and identification of root causes, whether they are market-driven, system-related, or logic flaws. The insights gained from these post-mortems are invaluable for improving risk models, adjusting circuit breaker thresholds, refining position sizing algorithms, and enhancing monitoring tools. It’s an iterative process of learning from both successes and failures, continuously adapting the risk framework to new market realities and operational challenges. A static risk management system is a fragile one; continuous evolution is the only pathway to sustained capital preservation in the dynamic landscape of algorithmic trading.