As algo traders expand their strategy portfolios, the underlying live trading execution infrastructure must evolve to support increased volume and complexity. What begins as a simple setup can quickly become a bottleneck, impacting performance, reliability, and profitability. Scaling this infrastructure effectively is critical for sustaining growth and competitive advantage in dynamic markets. This article covers practical considerations and architectural approaches for overcoming common challenges, from managing data and connectivity to implementing robust risk controls, so that your automated trading systems can handle a growing number of strategies without compromise.

Identifying and Addressing Execution Bottlenecks

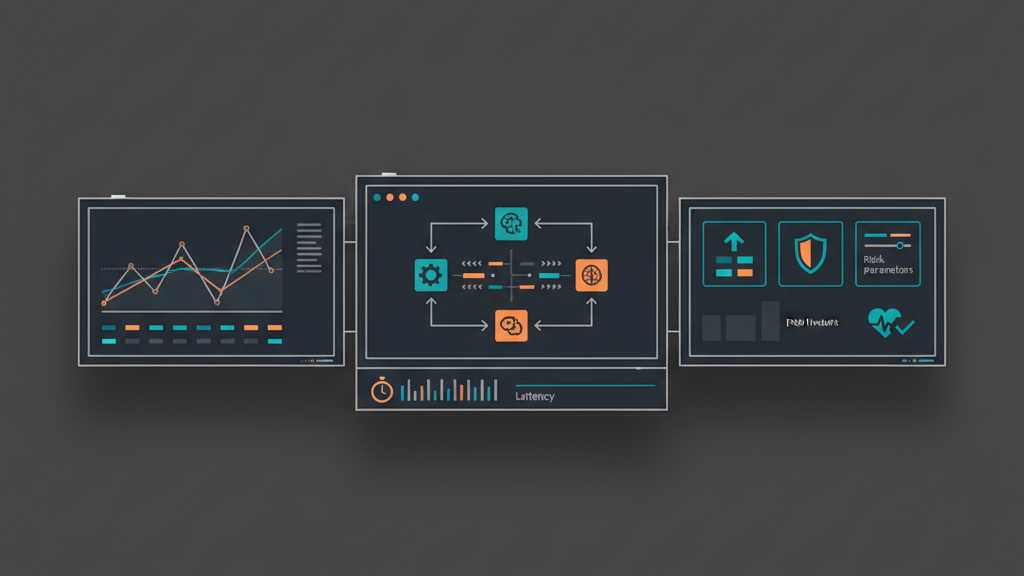

Expanding an algo trading strategy portfolio invariably introduces new pressure points on your execution infrastructure. What might have been an efficient setup for one or two strategies can quickly become overwhelmed by increased order flow, market data processing demands, and concurrent risk calculations. Common bottlenecks manifest as increased latency in order submission, delayed market data updates, or system crashes under peak load. Identifying these limitations requires a systematic approach, evaluating not just raw processing power but also network efficiency, database performance, and the inherent concurrency model of your existing system. Proactive identification prevents performance degradation and ensures smooth operation as your trading operations grow.

- Evaluate existing system throughput and latency metrics under load.

- Pinpoint specific resource limitations (CPU, memory, network I/O, storage).

- Analyze market data processing pipelines for delays or drops.

- Review order management system capacity for concurrent submissions.

- Map dependencies to external services like brokers or data providers.
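
As a starting point for the first item in the list above, the following minimal sketch times repeated calls to an order submission function and summarizes the results as percentiles. The names `measure_latencies`, `summarize`, and the `submit` callable are hypothetical and stand in for whatever entry point your own system exposes. Tail percentiles such as p99 are reported because averages tend to hide the spikes that matter under peak load.

```python
import math
import time


def measure_latencies(submit, n_orders):
    """Call the (hypothetical) submit function n_orders times.

    Returns the wall-clock latency of each call in milliseconds.
    """
    latencies_ms = []
    for i in range(n_orders):
        start = time.perf_counter()
        submit(i)
        latencies_ms.append((time.perf_counter() - start) * 1000.0)
    return latencies_ms


def summarize(latencies_ms):
    """Return p50, p99 and max using the nearest-rank percentile method."""
    ordered = sorted(latencies_ms)
    n = len(ordered)

    def percentile(p):
        # Nearest rank: the smallest value with at least p% of samples at or below it.
        rank = max(1, math.ceil(p * n / 100))
        return ordered[rank - 1]

    return {"p50": percentile(50), "p99": percentile(99), "max": ordered[-1]}


if __name__ == "__main__":
    # A no-op stands in for a real order submission call.
    samples = measure_latencies(lambda order_id: None, 1000)
    print(summarize(samples))
```

Running the same measurement at several load levels, with one strategy and then with many, gives a rough picture of where latency starts to degrade.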

Designing for Scalable and Resilient Architecture

The foundation of a scalable live trading execution infrastructure lies in its architectural design. Moving beyond monolithic structures, a distributed approach built on microservices offers greater flexibility and resilience. This involves breaking down core functions such as market data ingestion, strategy logic, order execution, and risk management into independently deployable services. Communication between these services typically occurs asynchronously via message queues, which improves fault tolerance and lets each component scale independently. This modularity reduces the chance that the failure of one service cascades across the entire system, while allowing specific high-load components to scale horizontally without affecting others.

- Adopt a microservices architecture for component independence.

- Implement message brokers (e.g., Kafka, RabbitMQ) for reliable communication.

- Design stateless services for easy horizontal scaling.

- Incorporate redundancy and failover mechanisms for critical components.

- Decouple data storage from compute logic to improve flexibility.
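
The decoupling described above can be sketched in a single process, with `asyncio.Queue` standing in for a message broker such as Kafka or RabbitMQ. This is an illustration of the pattern only, not a production design. The service names, the tuple format of a tick, and the simple "buy below threshold" rule are all assumptions made for the example.

```python
import asyncio


async def market_data_service(ticks, tick_queue):
    """Publish ticks to the queue, then a None sentinel to signal end of stream."""
    for tick in ticks:
        await tick_queue.put(tick)
    await tick_queue.put(None)


async def strategy_service(tick_queue, order_queue, threshold):
    """Consume ticks and emit a buy order when the price is below the threshold."""
    while True:
        tick = await tick_queue.get()
        if tick is None:
            await order_queue.put(None)  # propagate shutdown downstream
            return
        symbol, price = tick
        if price < threshold:
            await order_queue.put({"symbol": symbol, "side": "BUY", "price": price})


async def execution_service(order_queue, fills):
    """Consume orders; a real service would send them to a broker."""
    while True:
        order = await order_queue.get()
        if order is None:
            return
        fills.append(order)


async def run_pipeline(ticks, threshold):
    # Bounded queues apply backpressure if a consumer falls behind.
    tick_queue = asyncio.Queue(maxsize=1000)
    order_queue = asyncio.Queue(maxsize=1000)
    fills = []
    await asyncio.gather(
        market_data_service(ticks, tick_queue),
        strategy_service(tick_queue, order_queue, threshold),
        execution_service(order_queue, fills),
    )
    return fills


if __name__ == "__main__":
    ticks = [("ABC", 101.0), ("ABC", 99.0), ("XYZ", 98.5)]
    print(asyncio.run(run_pipeline(ticks, threshold=100.0)))
```

Each service only knows about the queues it reads from and writes to, so in a real deployment any one of them could be replaced, restarted, or replicated without changes to the others.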

Optimizing Market Data and Order Routing

Efficient management of market data and optimized order routing are critical for maximizing performance within a scaled trading environment. High-frequency strategies demand ultra-low latency data feeds and execution paths. This often calls for techniques such as colocation within exchange data centers, direct market access (DMA), and specialized network hardware. For order routing, intelligent algorithms can select the most suitable broker or exchange based on factors like liquidity, fees, and execution speed. A robust, low-latency market data processing pipeline, coupled with an adaptive order management system capable of handling thousands of concurrent orders, becomes indispensable when scaling strategy portfolios.

- Prioritize colocation and direct market access for latency-sensitive operations.

- Implement high-throughput data processing engines for market feeds.

- Develop smart order routing logic considering execution quality and cost.

- Ensure order management systems can handle concurrent, diverse order types.

- Manage multiple broker API integrations for diversified execution.
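
To make the routing idea concrete, here is a minimal sketch of a venue selector that weighs the three factors named above: liquidity, fees, and execution speed. The `Venue` fields, the linear scoring formula, and the default weights are assumptions chosen for illustration. A real smart order router would typically also split orders across venues and use measured fill quality.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Venue:
    name: str
    available_qty: int   # displayed liquidity at the target price (assumed input)
    fee_bps: float       # fee in basis points of notional
    latency_ms: float    # typical round-trip time to the venue


def route_order(venues, qty, fee_weight=1.0, latency_weight=0.1):
    """Return the lowest-scoring venue that can fill qty, or None if none can.

    The score is a simple weighted sum of fees and latency; lower is better.
    """
    eligible = [v for v in venues if v.available_qty >= qty]
    if not eligible:
        return None
    return min(
        eligible,
        key=lambda v: fee_weight * v.fee_bps + latency_weight * v.latency_ms,
    )


if __name__ == "__main__":
    venues = [
        Venue("A", available_qty=500, fee_bps=0.3, latency_ms=2.0),
        Venue("B", available_qty=5000, fee_bps=0.5, latency_ms=1.0),
        Venue("C", available_qty=5000, fee_bps=0.2, latency_ms=5.0),
    ]
    best = route_order(venues, qty=1000)
    print(best.name if best else "no venue can fill this order")
```

Changing the weights shifts the trade-off: a latency-sensitive strategy would raise `latency_weight`, while a cost-sensitive one would raise `fee_weight`.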

Implementing Robust and Distributed Risk Controls

As the number and complexity of trading strategies increase, so does the need for sophisticated risk management. A centralized risk system can quickly become a bottleneck, leading to delays in crucial checks. Distributed risk controls integrate real-time position monitoring, exposure limits, and P&L tracking across the entire execution stack. This means risk parameters are enforced at multiple levels: individual strategy, sub-portfolio, and overall firm exposure. Automated circuit breakers and pre-trade checks are vital for catching fat-finger errors and limiting losses during rapid market movements. The ability to assess and react to risk in real time across a growing portfolio is non-negotiable for stable and secure operations.

- Implement real-time portfolio-level position and P&L monitoring.

- Develop dynamic exposure limits configurable per strategy or asset class.

- Integrate pre-trade risk checks directly into the order flow path.

- Deploy automated circuit breakers to halt trading under specific conditions.

- Ensure audit trails for all risk parameters and enforcement actions.
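
The layered checks described above might look like the following minimal sketch, which enforces an order size limit, a per-strategy position limit, a firm-wide position limit, and a loss-based circuit breaker. The class names, limit fields, and rejection messages are hypothetical. For brevity the sketch assumes a single instrument, so firm exposure is simply the net position summed across strategies.

```python
from dataclasses import dataclass, field


@dataclass
class RiskLimits:
    max_order_qty: int
    max_strategy_position: int
    max_firm_position: int
    max_daily_loss: float


@dataclass
class RiskGate:
    limits: RiskLimits
    positions: dict = field(default_factory=dict)  # strategy name -> net position
    realized_pnl: float = 0.0
    halted: bool = False

    def check(self, strategy, signed_qty):
        """Pre-trade check. Returns (accepted, reason) for a proposed order."""
        if self.halted:
            return False, "circuit breaker tripped"
        if abs(signed_qty) > self.limits.max_order_qty:
            return False, "order size limit"
        new_position = self.positions.get(strategy, 0) + signed_qty
        if abs(new_position) > self.limits.max_strategy_position:
            return False, "strategy position limit"
        firm_position = sum(self.positions.values()) + signed_qty
        if abs(firm_position) > self.limits.max_firm_position:
            return False, "firm position limit"
        return True, "ok"

    def on_fill(self, strategy, signed_qty, pnl_change=0.0):
        """Update state after a fill and trip the breaker on excessive loss."""
        self.positions[strategy] = self.positions.get(strategy, 0) + signed_qty
        self.realized_pnl += pnl_change
        if self.realized_pnl <= -self.limits.max_daily_loss:
            self.halted = True


if __name__ == "__main__":
    gate = RiskGate(RiskLimits(100, 150, 200, 1000.0))
    print(gate.check("s1", 500))   # rejected: exceeds the order size limit
    print(gate.check("s1", 100))   # accepted
```

Placing `check` directly in the order path means every order passes through it before reaching a broker, and the returned reason gives the audit trail something to record.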

Performance Monitoring and Proactive Optimization

Sustaining high performance within a scaled live trading execution infrastructure demands continuous and proactive monitoring. Implementing comprehensive observability solutions, encompassing detailed logging, granular metrics, and end-to-end tracing, provides deep insights into system behavior. Real-time dashboards enable rapid identification of performance bottlenecks, latency spikes, or service outages. Beyond reactive monitoring, regular performance profiling, load testing, and capacity planning are essential. These proactive measures help anticipate future resource needs and identify potential points of failure before they impact live trading, ensuring the infrastructure remains robust and efficient as strategy portfolios continue to grow.

- Establish comprehensive real-time monitoring dashboards for all services.

- Configure automated alert systems for performance deviations and errors.

- Conduct regular load testing and capacity planning exercises.

- Utilize distributed tracing to analyze end-to-end latency.

- Perform continuous code profiling and infrastructure audits for optimization.
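
As one possible shape for the automated alerts mentioned above, this minimal sketch keeps a rolling window of latency samples and flags a breach when the window mean exceeds a threshold. The class name, window size, and threshold are illustrative assumptions. In practice this logic usually lives in a dedicated monitoring stack rather than in application code.

```python
from collections import deque


class LatencyMonitor:
    """Rolling-mean latency alert over the most recent `window` samples."""

    def __init__(self, window, threshold_ms):
        self.samples = deque(maxlen=window)  # old samples drop off automatically
        self.threshold_ms = threshold_ms

    def record(self, latency_ms):
        """Add a sample. Returns True if the rolling mean breaches the threshold.

        No alert is raised until the window is full, to avoid noise at startup.
        """
        self.samples.append(latency_ms)
        window_full = len(self.samples) == self.samples.maxlen
        mean = sum(self.samples) / len(self.samples)
        return window_full and mean > self.threshold_ms


if __name__ == "__main__":
    monitor = LatencyMonitor(window=3, threshold_ms=10.0)
    for sample in [5.0, 5.0, 5.0, 30.0, 2.0, 2.0, 2.0]:
        if monitor.record(sample):
            print(f"ALERT: rolling mean above threshold after sample {sample}")
```

Averaging over a window rather than alerting on single samples trades a little detection delay for fewer false alarms from one-off spikes.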

Leveraging Cloud, On-Premise, and Hybrid Models

The choice of deployment environment significantly influences the scalability, cost-effectiveness, and latency profile of your live trading infrastructure. Cloud platforms offer elasticity, allowing resources to be scaled up or down on demand, which can reduce capital expenditure and operational overhead for less latency-critical components. However, for ultra-low latency execution, dedicated on-premise hardware or colocation within exchange data centers often remains superior. A hybrid approach, combining the agility of cloud for back-office and analytical tasks with the raw performance of on-premise hardware for core execution, can balance performance and cost when scaling strategy portfolios.

- Evaluate public cloud for scalability and cost efficiency in non-critical areas.

- Consider dedicated servers or colocation for ultra-low latency execution.

- Implement a hybrid cloud strategy to balance performance and flexibility.

- Address data governance and regulatory compliance for each environment.

- Optimize network architecture for cross-environment connectivity.
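
One way to reason about a hybrid split is to assign each component to an environment based on its latency budget. The following sketch does this with a hypothetical `choose_environment` function. The component names and the cutoff values of 1 ms and 50 ms are purely illustrative assumptions, not recommendations, and a real decision would also weigh cost, compliance, and data locality.

```python
def choose_environment(latency_budget_ms, colocation_cutoff_ms=1.0, on_prem_cutoff_ms=50.0):
    """Map a component's latency budget to a deployment environment.

    The cutoffs are illustrative placeholders and should be set from measurements.
    """
    if latency_budget_ms < colocation_cutoff_ms:
        return "colocation"
    if latency_budget_ms < on_prem_cutoff_ms:
        return "on-premise"
    return "cloud"


if __name__ == "__main__":
    # Hypothetical components with the latency each one can tolerate, in ms.
    components = {
        "order_gateway": 0.5,
        "risk_checks": 5.0,
        "reporting": 60000.0,
    }
    for name, budget in components.items():
        print(f"{name}: {choose_environment(budget)}")
```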

Future-Proofing with Continuous Integration and Deployment

To maintain agility and ensure the infrastructure can adapt to evolving market conditions and new strategies, a robust CI/CD pipeline is indispensable. Automating the build, test, and deployment processes minimizes manual errors and accelerates the delivery of new features or performance enhancements. This approach allows for rapid iteration and deployment of strategy updates or infrastructure changes with confidence. Furthermore, implementing infrastructure-as-code (IaC) principles ensures that your execution environment is consistently provisioned and managed, providing a repeatable and scalable foundation for continuous growth and evolution of your algo trading operations.

- Automate build, test, and deployment pipelines for rapid iteration.

- Implement infrastructure-as-code for consistent environment provisioning.

- Utilize version control for all configuration and deployment scripts.

- Adopt blue/green deployments or canary releases for minimal downtime.

- Ensure comprehensive automated testing covers all system components.
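
For the canary releases mentioned above, the promotion decision can be reduced to a comparison between the metrics of the canary and those of the current baseline. This is a minimal sketch under assumed inputs: the metric names `error_rate` and `p99_latency_ms`, the function name, and the default tolerances are all hypothetical, and a real pipeline would pull these values from its monitoring system.

```python
def canary_decision(baseline, canary, max_error_rate_increase=0.01, max_latency_ratio=1.2):
    """Compare canary metrics with the baseline and return 'promote' or 'rollback'.

    Both arguments are dicts with 'error_rate' (a fraction between 0 and 1)
    and 'p99_latency_ms' keys.
    """
    if canary["error_rate"] - baseline["error_rate"] > max_error_rate_increase:
        return "rollback"
    if canary["p99_latency_ms"] > baseline["p99_latency_ms"] * max_latency_ratio:
        return "rollback"
    return "promote"


if __name__ == "__main__":
    baseline = {"error_rate": 0.001, "p99_latency_ms": 10.0}
    canary = {"error_rate": 0.002, "p99_latency_ms": 11.0}
    print(canary_decision(baseline, canary))
```

Encoding the gate as a function keeps the rollout criteria in version control alongside the deployment scripts, so changes to the tolerances are reviewed like any other code change.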