Developing profitable algorithmic trading strategies isn’t just about coding; it’s a rigorous, iterative process. A robust strategy research workflow is critical for transforming raw ideas into executable code that performs reliably. Without a structured approach to backtesting, validation, and systematic hypothesis tracking, even promising concepts can lead to significant losses in live trading. The goal is not only to iterate faster but to iterate smarter: minimizing common pitfalls like overfitting and data snooping, and building confidence in your models before they touch real capital. Our focus is on practical steps, from initial concept to rigorous testing, so that any edge you identify is real and robust, not an artifact of observational bias or flawed testing.

The Foundation: Hypothesis Generation and Initial Scoping

Every successful trading strategy begins with a clearly defined, testable hypothesis. This isn’t just a vague idea like ‘stocks tend to go up’; it’s a specific, measurable statement about market behavior under certain conditions. For instance, ‘When the 50-day moving average of SPY crosses above its 200-day moving average while the VIX is below 20 and SPY’s average daily volume is above 100M shares, SPY will show a statistically significant positive return over the next 10 trading days.’ This level of specificity is crucial because it dictates your data requirements, the type of backtesting engine you’ll need, and the performance metrics you’ll track. Without this foundational step, you’re not conducting research; you’re simply fishing for patterns, which tends to produce overfitted and brittle strategies. The initial scoping also involves defining the target market, asset class, holding period, and the approximate frequency of trading. These parameters guide the subsequent data collection and backtesting efforts, ensuring resources are focused appropriately.
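To make the idea of a testable hypothesis concrete, here is a minimal sketch of how the moving-average example could be turned into code. The function names (`sma`, `golden_cross_entries`, `forward_returns`) and the plain-list inputs are assumptions made for illustration, and the volume figure mentioned in the example is left out to keep the sketch short.

```python
from typing import List, Optional


def sma(values: List[float], window: int) -> List[Optional[float]]:
    """Simple moving average; None until `window` observations exist."""
    out: List[Optional[float]] = []
    running = 0.0
    for i, v in enumerate(values):
        running += v
        if i >= window:
            running -= values[i - window]  # drop the value leaving the window
        out.append(running / window if i >= window - 1 else None)
    return out


def golden_cross_entries(closes, vix, fast=50, slow=200, vix_cap=20.0):
    """Indices where the fast SMA crosses above the slow SMA while VIX < vix_cap."""
    fast_ma, slow_ma = sma(closes, fast), sma(closes, slow)
    entries = []
    for t in range(1, len(closes)):
        points = (fast_ma[t - 1], slow_ma[t - 1], fast_ma[t], slow_ma[t])
        if any(p is None for p in points):
            continue  # not enough history for both averages yet
        crossed_up = fast_ma[t - 1] <= slow_ma[t - 1] and fast_ma[t] > slow_ma[t]
        if crossed_up and vix[t] < vix_cap:
            entries.append(t)
    return entries


def forward_returns(closes, entries, horizon=10):
    """Close-to-close return over the next `horizon` bars for each entry."""
    return [closes[t + horizon] / closes[t] - 1.0
            for t in entries if t + horizon < len(closes)]
```

The list returned by `forward_returns` is the sample you would then test for statistical significance; the sketch only collects it and makes no claim about the result.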

Data Acquisition and Preprocessing for Robust Backtesting

The integrity of your backtesting results is directly tied to the quality of your input data. Sourcing clean, accurate historical data is often one of the most time-consuming and challenging aspects of the strategy research workflow. This goes beyond just getting price feeds; it includes corporate actions, delisting information, volume, fundamental data, and sometimes even alternative datasets. Common pitfalls include survivorship bias, where only currently listed assets are considered, leading to an overly optimistic view of past performance. Look-ahead bias, where future information inadvertently leaks into historical simulations, is another critical error to avoid. Our systems often involve elaborate data pipelines to ingest, clean, and normalize disparate datasets, time-aligning them and handling missing values or outliers. This preprocessing stage is where many strategies fail before they even get to the execution engine because bad data translates to misleading backtest results and, eventually, live trading losses.

- Acquire high-resolution historical data (tick, 1-min) for accurate simulation of intraday fills; where a strategy depends on order book dynamics, depth-of-book data is needed as well.

- Implement robust data cleaning pipelines to handle corporate actions (splits, dividends) and delistings.

- Time-align disparate datasets (e.g., price, fundamental, sentiment) to prevent look-ahead bias.

- Validate data integrity against multiple sources and identify periods of anomalous or missing data.
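One common way to time-align datasets without leaking future information is an "as-of" join keyed on publication date rather than on the period a figure describes. The sketch below is one possible implementation under stated assumptions: `asof_join` is a hypothetical helper, releases arrive as `(publication_date, value)` pairs sorted by date, and a value only becomes usable on bars strictly after its publication date (a deliberately conservative choice).

```python
from bisect import bisect_left
from datetime import date


def asof_join(bar_dates, releases):
    """For each bar date, return the latest value published strictly before it.

    `releases` is a list of (publication_date, value) sorted by publication_date.
    Bars with no earlier publication get None.
    """
    pub_dates = [d for d, _ in releases]
    joined = []
    for d in bar_dates:
        i = bisect_left(pub_dates, d)  # number of releases with pub date < d
        joined.append(releases[i - 1][1] if i > 0 else None)
    return joined


bars = [date(2024, 1, 1), date(2024, 1, 15), date(2024, 2, 1), date(2024, 2, 2)]
earnings = [(date(2024, 1, 15), 1.0), (date(2024, 2, 1), 2.0)]
aligned = asof_join(bars, earnings)
```

Here the figure published on 15 January is not visible on the 15 January bar itself; it first appears on 1 February, the next bar in the series.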

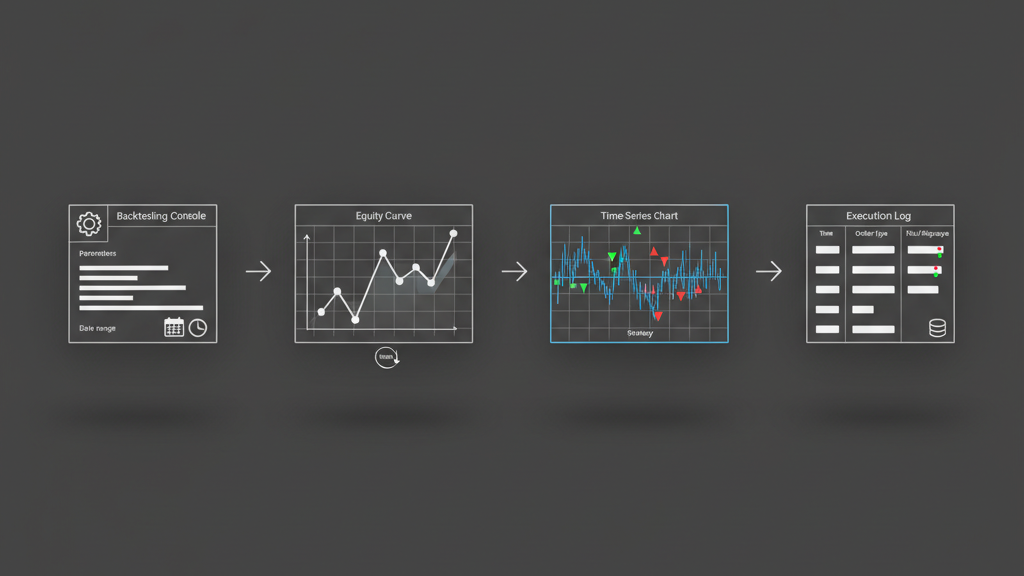

Designing the Backtest Environment and Core Logic

With a clear hypothesis and clean data, the next step is constructing a realistic backtesting environment. This isn’t merely about coding your entry and exit conditions; it involves simulating market mechanics as accurately as possible. Considerations include how orders are filled (market, limit, stop), the impact of slippage (especially for larger orders or illiquid assets), and transaction costs (commissions, exchange fees, bid-ask spread). A robust backtesting engine should allow for precise control over these parameters, enabling you to model various market scenarios. You’ll also need to define position sizing rules, which could range from fixed capital allocation to dynamic, volatility-adjusted sizing. Risk parameters, such as maximum drawdown limits or daily loss caps, should be integrated into the simulation from the outset, not as an afterthought. The fidelity of your backtest determines its usefulness; a simplistic simulation might show fantastic returns that evaporate in a real-world execution environment due to ignored market frictions.
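As a rough illustration of how market frictions enter a simulation, the following sketch applies spread, slippage, and commission to a simple long round trip. The `CostModel` fields and their default values are placeholders chosen for the example, not calibrated estimates, and the model ignores partial fills and market impact.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostModel:
    half_spread_bps: float = 1.0         # assumed half bid-ask spread, in basis points
    slippage_bps: float = 2.0            # assumed extra adverse move per fill
    commission_per_share: float = 0.005  # assumed flat commission


def fill_price(mid: float, side: str, costs: CostModel) -> float:
    """Price paid (buy) or received (sell) for a market order at mid-price `mid`."""
    if side not in ("buy", "sell"):
        raise ValueError("side must be 'buy' or 'sell'")
    adverse = (costs.half_spread_bps + costs.slippage_bps) / 10_000
    return mid * (1 + adverse) if side == "buy" else mid * (1 - adverse)


def round_trip_pnl(entry_mid: float, exit_mid: float, shares: int,
                   costs: CostModel) -> float:
    """Net P&L of a long round trip: buy at entry, sell at exit, pay commissions."""
    buy = fill_price(entry_mid, "buy", costs)
    sell = fill_price(exit_mid, "sell", costs)
    commission = 2 * shares * costs.commission_per_share
    return shares * (sell - buy) - commission
```

Comparing `round_trip_pnl` under a zero-cost `CostModel(0, 0, 0)` with a realistic one shows how much of a backtested edge is consumed by frictions.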

Performance Metrics and Robustness Validation

Once a backtest runs, interpreting the results goes far beyond just looking at the total profit and loss. A comprehensive set of performance metrics is essential for truly understanding a strategy’s behavior and risks. Metrics like Sharpe Ratio, Sortino Ratio, Calmar Ratio, maximum drawdown, profit factor, win rate, and average trade return provide a multidimensional view. Crucially, validation is where you show the strategy isn’t just lucky or overfitted. Techniques like out-of-sample testing, where the strategy is tested on data it hasn’t ‘seen’ before, are non-negotiable. Walk-forward optimization re-optimizes parameters on a rolling in-sample window and evaluates each parameter set on the period immediately following it, mimicking how a live strategy would be periodically recalibrated. Monte Carlo simulations can assess the sensitivity of results to minor parameter changes or random event sequences. Failing to rigorously validate means you’re likely deploying a curve-fitted model that will break down under varying market conditions. The aim is to find a strategy that is robust, meaning its performance holds up across different market regimes and input data variations, rather than one that achieves exceptional, but ultimately spurious, results on a single historical dataset.
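Three of these metrics are simple enough to sketch directly. The versions below use common textbook definitions (sample standard deviation, square-root-of-time annualization, drawdown on a compounded equity curve); the function names and the default of 252 periods per year are assumptions for illustration.

```python
import math
from statistics import mean, stdev


def sharpe_ratio(returns, periods_per_year=252, risk_free_per_period=0.0):
    """Annualized Sharpe ratio from per-period returns (needs at least 2 values)."""
    excess = [r - risk_free_per_period for r in returns]
    sd = stdev(excess)
    if sd == 0:
        return float("nan")  # undefined when returns never vary
    return mean(excess) / sd * math.sqrt(periods_per_year)


def max_drawdown(returns):
    """Largest peak-to-trough decline of the compounded equity curve, as a fraction."""
    equity, peak, worst = 1.0, 1.0, 0.0
    for r in returns:
        equity *= 1.0 + r
        peak = max(peak, equity)
        worst = max(worst, 1.0 - equity / peak)
    return worst


def profit_factor(trade_pnls):
    """Gross profit divided by gross loss; inf when there are no losing trades."""
    gains = sum(p for p in trade_pnls if p > 0)
    losses = -sum(p for p in trade_pnls if p < 0)
    return gains / losses if losses > 0 else float("inf")
```

For example, a return series of +10%, -50%, +20% has a maximum drawdown of 50%, because equity falls from 1.10 to 0.55 before recovering.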

- Calculate key performance indicators: Sharpe Ratio, Sortino Ratio, Max Drawdown, Profit Factor, and average trade statistics.

- Implement out-of-sample testing and walk-forward analysis to evaluate robustness against unseen data.

- Conduct Monte Carlo simulations to assess parameter sensitivity and expected performance distribution.

- Perform stress testing by simulating extreme market events (e.g., flash crashes, liquidity crises) to gauge resilience.
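The walk-forward step in the list above can be sketched as an index generator. This is a minimal rolling-window version under assumed conventions: `walk_forward_windows` is a hypothetical helper, window sizes are counted in bars, and the window advances by one test period at a time.

```python
def walk_forward_windows(n_obs, train_size, test_size):
    """Yield (train_range, test_range) index ranges for rolling walk-forward analysis.

    Each test range immediately follows its training range, and test ranges
    never overlap, so no out-of-sample bar is evaluated twice. Trailing bars
    that do not fill a complete test window are left unused.
    """
    if train_size <= 0 or test_size <= 0:
        raise ValueError("window sizes must be positive")
    start = 0
    while start + train_size + test_size <= n_obs:
        train = range(start, start + train_size)
        test = range(start + train_size, start + train_size + test_size)
        yield train, test
        start += test_size  # roll forward by one out-of-sample period


windows = list(walk_forward_windows(n_obs=10, train_size=4, test_size=2))
```

In practice you would optimize parameters on each `train` range, evaluate them once on the matching `test` range, and stitch the test-range results together into a single out-of-sample equity curve.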

Beyond Backtesting: Stress Testing and Production Readiness

A successful backtest is a necessary, but not sufficient, condition for live trading. The transition from a simulated environment to production introduces a host of new challenges. Stress testing involves pushing the strategy to its limits by simulating highly adverse market conditions, significantly increased slippage, or prolonged periods of illiquidity. This helps uncover vulnerabilities that might not appear in standard backtests. Latency considerations become paramount; a strategy designed for tick data might perform poorly if execution delays mean orders are filled at stale prices. API rate limits, connectivity issues, and unexpected exchange downtimes are all real-world constraints that must be accounted for. Robust error handling, logging, and monitoring systems are essential for live deployment, allowing for quick identification and resolution of issues. This stage often involves paper trading or low-capital live testing to observe real-world performance discrepancies and refine execution logic, bridging the gap between theoretical edge and practical application.
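One simple form of stress test is to re-score the same trades under progressively worse execution costs and see where the edge disappears. The sketch below assumes per-trade gross returns are already available as fractions and that costs can be approximated as a fixed round-trip charge in basis points; both function names are hypothetical.

```python
def stress_slippage(gross_trade_returns, base_cost_bps, multipliers=(1, 2, 5, 10)):
    """Summed net return for each cost multiplier.

    Each trade's gross return is reduced by a round-trip cost of
    base_cost_bps * multiplier basis points.
    """
    results = {}
    for m in multipliers:
        cost = base_cost_bps * m / 10_000
        results[m] = sum(r - cost for r in gross_trade_returns)
    return results


def breakeven_multiplier(gross_trade_returns, base_cost_bps):
    """Cost multiplier at which the average trade's net return reaches zero."""
    if not gross_trade_returns or base_cost_bps <= 0:
        raise ValueError("need at least one trade and a positive base cost")
    avg = sum(gross_trade_returns) / len(gross_trade_returns)
    return avg / (base_cost_bps / 10_000)
```

A low breakeven multiplier suggests the strategy depends on execution quality that may not hold in stressed or illiquid markets.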

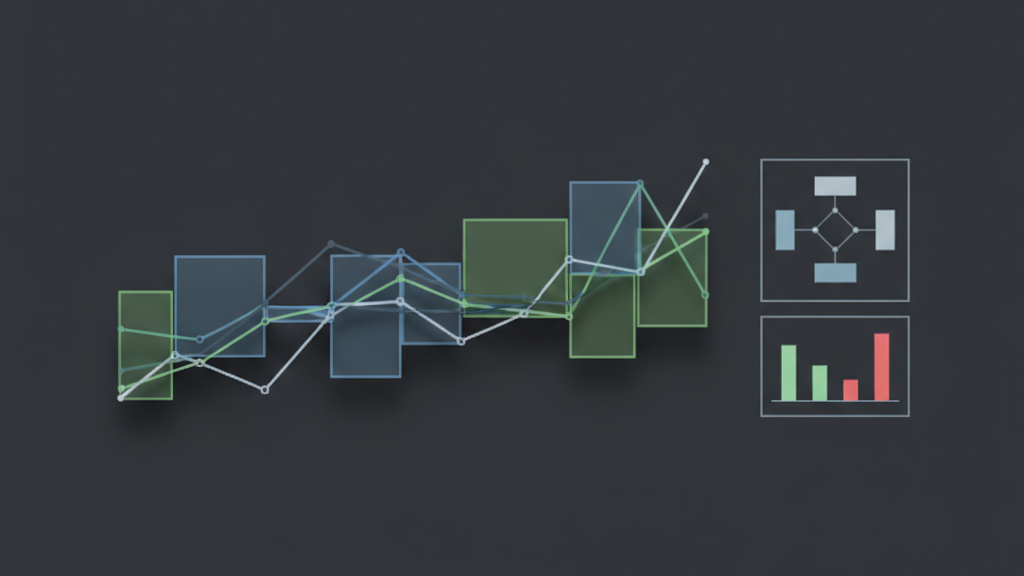

Iteration and Hypothesis Tracking: A Continuous Cycle

The strategy research workflow is rarely linear; it’s a continuous feedback loop. Each backtest, validation, and live trading observation generates new insights, leading to new hypotheses and refinements. Maintaining a disciplined approach to hypothesis tracking is vital. This involves documenting every hypothesis, the specific tests performed, the data used, the parameters explored, and the resulting performance metrics. Version control systems are indispensable not just for code, but for parameter sets and research findings. When a strategy underperforms or a market regime shifts, a detailed history of your research allows for efficient debugging and adaptation. Without a clear audit trail, you risk repeating failed experiments or misattributing performance changes. This systematic approach moves strategy development from an art toward a science, so that knowledge accumulates and is reused across the entire lifecycle of an algorithmic strategy.

- Utilize version control (Git) for strategy code, configuration files, and backtest results.

- Maintain a research log or database to track hypotheses, test parameters, and performance observations.

- Implement A/B testing frameworks for live strategies to evaluate minor variations or parameter tweaks.

- Automate report generation for backtest results and live performance to facilitate consistent analysis.
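A research log does not need heavy tooling to be useful. As one possible starting point, the sketch below appends each experiment to a JSON Lines file and ties it to a hash of the input data and a code version supplied by the caller (for example a Git commit hash). The record fields and function names are assumptions for illustration, not a standard schema.

```python
import hashlib
import json
from datetime import datetime, timezone


def data_fingerprint(path, chunk_size=1 << 20):
    """SHA-256 of a data file, so a result can be tied to the exact input data."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def log_experiment(log_path, hypothesis, params, data_hash, metrics, code_version):
    """Append one experiment record to a JSON Lines research log and return it."""
    record = {
        "logged_at": datetime.now(timezone.utc).isoformat(),
        "hypothesis": hypothesis,
        "params": params,
        "data_sha256": data_hash,
        "metrics": metrics,
        "code_version": code_version,  # e.g. a Git commit hash, passed in by the caller
    }
    with open(log_path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, sort_keys=True) + "\n")
    return record
```

Because the file is append-only and one record per line, it diffs cleanly under version control and can be loaded later for automated reporting.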