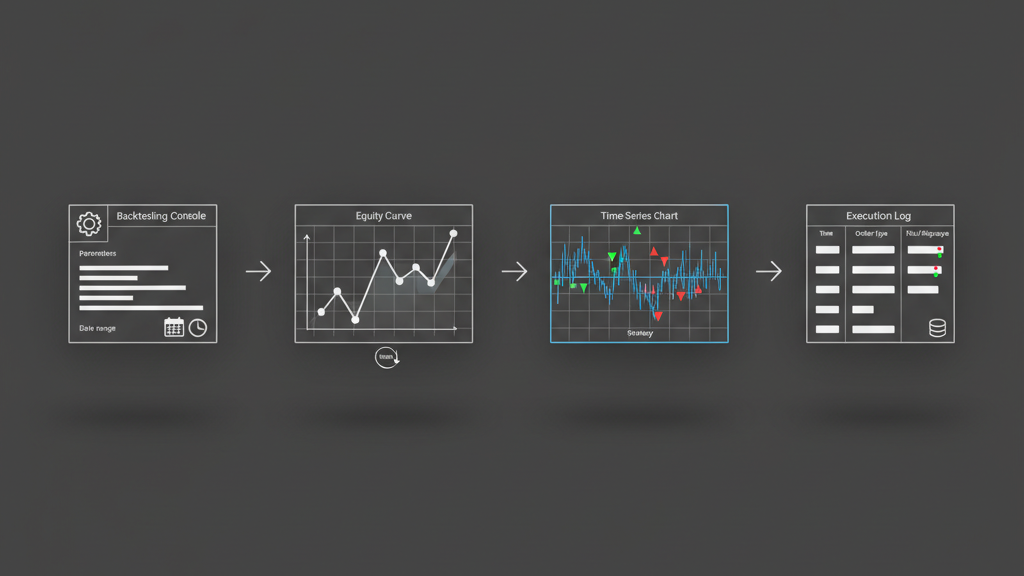

Developing systematic trading strategies often involves extensive historical data analysis, but a single backtest optimized over the full history can easily lead to curve-fitting. The challenge lies in building strategies that perform consistently on unseen data. This is where a robust walk forward backtesting setup becomes indispensable. Unlike static backtesting, which optimizes parameters once over the entire dataset, walk forward analysis simulates real-world conditions by periodically re-optimizing strategy parameters on a rolling window of in-sample data and then evaluating performance on the subsequent, untouched out-of-sample segment. This methodology helps identify strategies that are genuinely adaptive and resilient, providing a more realistic assessment of a system’s viability before deployment into live markets.

The Rationale Behind Walk Forward Testing

Static backtests, while simple to execute, are notoriously prone to overfitting. When a strategy’s parameters are optimized once over the entire historical dataset, there’s a significant risk that those parameters are simply capturing noise specific to that historical period, rather than robust market dynamics. In a live trading environment, market conditions evolve, and parameters that performed exceptionally well in the past might degrade rapidly. Walk forward backtesting addresses this fundamental issue by continuously challenging the strategy with new, unseen data. It forces the system to adapt, much like it would in real trading, revealing whether the strategy’s logic holds up over varying market regimes and if its parameters remain stable enough to be operationally viable. This iterative process of re-optimization and forward testing provides a much higher degree of confidence in a strategy’s long-term potential and reduces the likelihood of deploying a curve-fitted system.

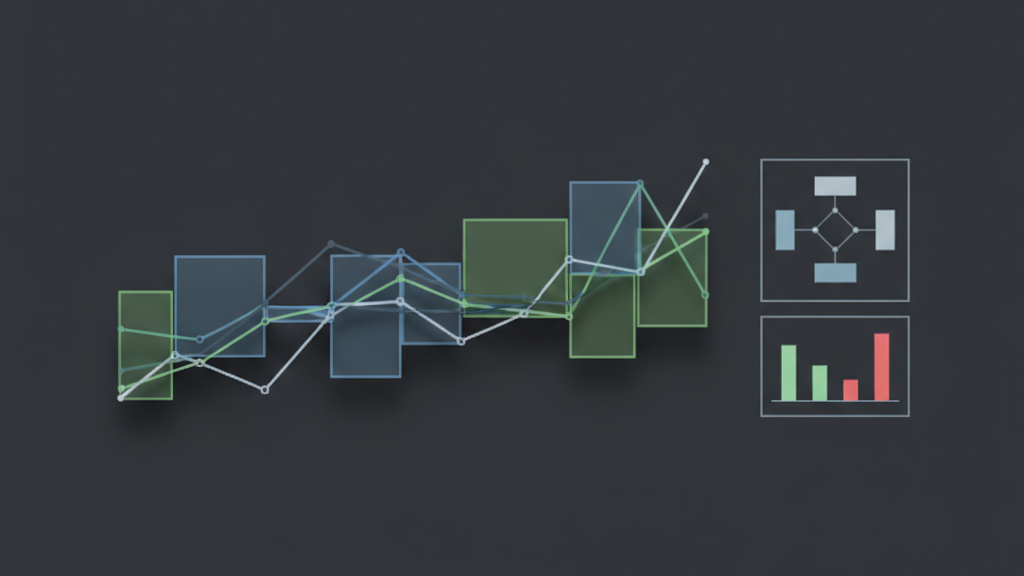

Core Components of a Walk Forward Setup

Setting up a walk forward backtesting framework requires careful definition of several critical components. At its heart is the concept of a rolling window, where the historical data is divided into sequential, overlapping segments. Each iteration begins with an ‘in-sample’ period, used for optimizing the strategy’s parameters. The optimized parameters are then applied to the subsequent ‘out-of-sample’ period to evaluate their performance on data not used during optimization. The cycle then ‘walks forward’ by shifting both the in-sample and out-of-sample windows along the timeline, ensuring that the strategy is always tested on fresh data. The window lengths for in-sample optimization and out-of-sample evaluation, together with the step size (how far the windows advance each iteration), are crucial design decisions that significantly affect both the computational load and the insights gained from the analysis. Too short an in-sample window can lead to unstable optimizations, while too long a window can mask parameter decay.

- Defining fixed or adaptive lengths for the in-sample (optimization) and out-of-sample (testing) periods.

- Establishing the ‘step size’ or ‘re-optimization frequency,’ dictating how often the windows slide forward.

- Implementing robust logic to store and accurately apply the best-performing optimized parameters from each in-sample to its corresponding out-of-sample segment.
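The window logic described above can be sketched as a small generator. This is a minimal illustration, not a definitive implementation: the names `Window` and `walk_forward_windows` are hypothetical, windows are expressed as bar indices rather than dates, and the step defaults to the out-of-sample length so that out-of-sample segments do not overlap.

```python
from dataclasses import dataclass
from typing import Iterator, Optional


@dataclass(frozen=True)
class Window:
    """Bar-index ranges (end-exclusive) for one walk forward iteration."""
    is_start: int
    is_end: int
    oos_start: int
    oos_end: int


def walk_forward_windows(n_bars: int, in_sample: int, out_of_sample: int,
                         step: Optional[int] = None) -> Iterator[Window]:
    """Yield rolling in-sample / out-of-sample ranges that fit inside n_bars."""
    if in_sample <= 0 or out_of_sample <= 0:
        raise ValueError("window lengths must be positive")
    # Assumption: by default, advance by one out-of-sample length per iteration.
    step = out_of_sample if step is None else step
    if step <= 0:
        raise ValueError("step must be positive")
    start = 0
    while start + in_sample + out_of_sample <= n_bars:
        is_end = start + in_sample
        # The out-of-sample segment starts exactly where the in-sample ends.
        yield Window(start, is_end, is_end, is_end + out_of_sample)
        start += step


windows = list(walk_forward_windows(n_bars=1000, in_sample=500, out_of_sample=100))
for w in windows:
    print(w)
```

With 1,000 bars, a 500-bar in-sample window, and a 100-bar out-of-sample window, this yields five iterations whose out-of-sample segments cover bars 500 through 999 without overlap. Any trailing bars that cannot fill a complete out-of-sample segment are simply dropped in this sketch.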

Data Management and Preprocessing for Walk Forward

Data quality is paramount in any backtesting, but it becomes even more critical in a walk forward backtesting setup due to the repeated nature of the process. Ensuring the integrity and consistency of historical data across all rolling windows is non-negotiable. This involves meticulously handling corporate actions such as stock splits and dividends, and retaining delisted securities in the dataset, to prevent inaccurate historical prices, look-ahead bias, and survivorship bias. For instance, if a stock splits, the historical price data must be adjusted retrospectively across the entire dataset *before* any walk forward iteration begins, not just within a specific window; otherwise a window that straddles the split will see an artificial price jump. Additionally, efficient data loading and caching mechanisms are essential, as the system will repeatedly query and segment large datasets. Any data gaps or errors can propagate through multiple optimization cycles, leading to misleading performance metrics and, ultimately, flawed strategy conclusions. A robust data pipeline, capable of handling historical data revisions and ensuring time-series integrity, is a fundamental architectural requirement.
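As a rough illustration of adjusting for splits once, up front, the sketch below back-adjusts a raw close series before any windows are cut. The function name `back_adjust_for_splits` and the input layout are assumptions made for this example: splits are given as a mapping from the bar index on which the split takes effect to the split ratio (2.0 meaning 2-for-1). Dividends and other corporate actions are not handled here.

```python
from typing import Dict, List, Sequence


def back_adjust_for_splits(closes: Sequence[float],
                           splits: Dict[int, float]) -> List[float]:
    """Return closes with every bar before each split divided by its ratio.

    splits maps the index of the first post-split bar to the split ratio
    (hypothetical convention for this sketch: 2.0 means 2-for-1).
    """
    adjusted = list(closes)
    for split_index, ratio in splits.items():
        if ratio <= 0:
            raise ValueError("split ratio must be positive")
        # Every bar strictly before the split is rescaled, so multiple
        # splits compound on the oldest bars.
        for i in range(min(split_index, len(adjusted))):
            adjusted[i] /= ratio
    return adjusted


raw = [100.0, 102.0, 51.0, 52.0]          # 2-for-1 split takes effect at bar 2
clean = back_adjust_for_splits(raw, {2: 2.0})
print(clean)  # [50.0, 51.0, 51.0, 52.0]
```

Running the adjustment on the full series first means every in-sample and out-of-sample slice is cut from the same consistent price history.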

Automated Parameter Optimization and Evaluation

Within each in-sample window, the strategy requires automated parameter optimization. This process involves systematically searching through a defined parameter space to find the combination that maximizes a chosen objective function, such as Sharpe Ratio, Profit Factor, or net profit, within that specific historical period. Common methods range from brute-force grid searches, which can be computationally intensive for high-dimensional parameter spaces, to more sophisticated algorithms like genetic algorithms or particle swarm optimization, which can find good solutions more efficiently. The key is to define clear performance targets and constraints for the optimization engine. After parameters are identified, they are locked in for the subsequent out-of-sample period, where their performance is rigorously evaluated without any further adjustments. If a particular in-sample optimization fails to yield parameters meeting a minimum threshold, the system should be designed to handle this, perhaps by defaulting to a prior stable set or flagging the window for review, indicating potential strategy weakness in that market regime.

- Implementing diverse optimization algorithms (e.g., Grid Search, Genetic Algorithms) to efficiently explore the parameter space.

- Defining a robust objective function (e.g., Maximum Sharpe Ratio, Minimum Drawdown, Custom Profit/Risk Ratio) for parameter selection in each in-sample period.

- Establishing criteria for parameter validity and stability to prevent selecting overfitted or outlier parameter sets, even if they yield high in-sample performance.
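A brute-force grid search with a Sharpe Ratio objective and a fallback to a prior parameter set might look like the sketch below. Everything here is illustrative: `sharpe`, `grid_search`, and `momentum_returns` are hypothetical names, the toy momentum rule stands in for a real strategy, the Sharpe calculation assumes a zero risk-free rate and 252 periods per year, and the price series is synthetic.

```python
import itertools
import math
import statistics
from typing import Callable, Dict, List, Optional, Sequence, Tuple

Params = Dict[str, int]


def sharpe(returns: Sequence[float], periods_per_year: int = 252) -> float:
    """Annualized Sharpe Ratio, assuming a zero risk-free rate."""
    if len(returns) < 2:
        return float("-inf")
    sd = statistics.stdev(returns)
    if sd == 0:
        return float("-inf")  # treat flat return series as unusable
    return statistics.mean(returns) / sd * math.sqrt(periods_per_year)


def grid_search(prices: Sequence[float],
                grid: Dict[str, Sequence[int]],
                strategy_returns: Callable[[Sequence[float], Params], List[float]],
                min_score: float,
                fallback: Optional[Params] = None,
                ) -> Tuple[Optional[Params], float, bool]:
    """Try every combination in grid; return (params, best_score, accepted)."""
    best_params: Optional[Params] = None
    best_score = float("-inf")
    keys = sorted(grid)
    for combo in itertools.product(*(grid[k] for k in keys)):
        params = dict(zip(keys, combo))
        score = sharpe(strategy_returns(prices, params))
        if score > best_score:
            best_params, best_score = params, score
    if best_score < min_score:
        # Nothing met the threshold: reuse the prior set and flag the window.
        return fallback, best_score, False
    return best_params, best_score, True


def momentum_returns(prices: Sequence[float], params: Params) -> List[float]:
    """Toy rule: hold long for bar t if the prior close exceeds the close
    `lookback` bars before it, otherwise stay flat."""
    lb = params["lookback"]
    out = []
    for t in range(lb + 1, len(prices)):
        position = 1.0 if prices[t - 1] > prices[t - 1 - lb] else 0.0
        out.append(position * (prices[t] / prices[t - 1] - 1.0))
    return out


# Synthetic in-sample prices: a slow trend plus a sine wave.
in_sample = [100 + 0.05 * i + 5 * math.sin(i / 10) for i in range(300)]
params, score, accepted = grid_search(
    in_sample, {"lookback": [5, 10, 20, 40]}, momentum_returns,
    min_score=0.0, fallback={"lookback": 20})
print(params, round(score, 2), accepted)
```

The `accepted` flag lets the caller record which windows fell back to the prior parameters, so that they can be reviewed rather than silently blended into the results. For larger parameter spaces, the exhaustive loop would be replaced by a smarter search, but the interface can stay the same.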

Aggregating Results and Performance Analysis

Once all walk forward iterations are complete, the individual out-of-sample equity curves and performance metrics need to be aggregated to form a single, composite walk forward equity curve. This cumulative curve represents the strategy’s hypothetical performance if it had been traded live with periodically re-optimized parameters. Critical metrics for evaluating the overall walk forward performance include the total net profit, maximum drawdown, Sharpe Ratio, and Calmar Ratio, all calculated from this composite curve. Beyond these standard metrics, it’s also crucial to analyze the ‘walk forward efficiency’ (WFE), commonly computed as the ratio of annualized out-of-sample return to annualized in-sample return, which indicates how much of the optimized performance carried over to unseen data. Furthermore, examining parameter stability across windows is vital; highly fluctuating optimal parameters often signal an unstable strategy prone to overfitting. Visualizing parameter changes over time can highlight regimes where the strategy struggled to find consistent performance, prompting further research into market condition filtering.
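The aggregation step can be sketched as follows. The helper names are hypothetical, returns are assumed to be simple per-bar returns, and the WFE shown uses one common convention (average annualized out-of-sample return divided by average annualized in-sample return, per window); other definitions exist.

```python
import math
import statistics
from typing import List, Sequence


def stitch_equity(oos_returns_by_window: Sequence[Sequence[float]],
                  start_equity: float = 1.0) -> List[float]:
    """Chain out-of-sample returns, in window order, into one equity curve."""
    equity = [start_equity]
    for window_returns in oos_returns_by_window:
        for r in window_returns:
            equity.append(equity[-1] * (1.0 + r))
    return equity


def max_drawdown(equity: Sequence[float]) -> float:
    """Largest peak-to-trough decline, as a fraction of the running peak."""
    peak, worst = equity[0], 0.0
    for value in equity:
        peak = max(peak, value)
        worst = max(worst, (peak - value) / peak)
    return worst


def annualized_return(returns: Sequence[float], periods_per_year: int = 252) -> float:
    """Geometric annualized return of a simple-return series."""
    if not returns:
        raise ValueError("returns must not be empty")
    growth = math.prod(1.0 + r for r in returns)
    return growth ** (periods_per_year / len(returns)) - 1.0


def walk_forward_efficiency(is_returns_by_window: Sequence[Sequence[float]],
                            oos_returns_by_window: Sequence[Sequence[float]],
                            periods_per_year: int = 252) -> float:
    """Assumed convention: mean annualized OOS return / mean annualized IS return."""
    is_ann = statistics.mean(
        annualized_return(w, periods_per_year) for w in is_returns_by_window)
    oos_ann = statistics.mean(
        annualized_return(w, periods_per_year) for w in oos_returns_by_window)
    if is_ann <= 0:
        raise ValueError("WFE is not meaningful for non-positive in-sample return")
    return oos_ann / is_ann


# Tiny hand-made example: two out-of-sample windows.
curve = stitch_equity([[0.10, -0.50], [1.00]])
print(curve, max_drawdown(curve))
```

Because annualized figures are used on both sides of the ratio, the in-sample and out-of-sample windows may have different lengths. Short out-of-sample windows can make per-window annualized returns noisy, so the WFE is best read alongside the composite curve rather than in isolation.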

Operational Considerations and Real-World Execution

The transition from a successful walk forward backtesting setup to live execution introduces a new set of challenges. One primary concern is the latency associated with re-optimization. If the strategy requires daily or even intra-day parameter updates, the computational resources and time required to run the optimization within a tight window can become a bottleneck. Furthermore, real-world execution impacts like slippage and commissions, which might be modeled simplistically in backtesting, can significantly erode the edge identified in the walk forward analysis. The actual fills received might deviate from theoretical prices, especially for larger orders or illiquid assets. A live system must be designed to dynamically load and apply the newly optimized parameters at the correct frequency, often requiring robust configuration management and an API-driven parameter update mechanism. Continuous monitoring of parameter performance and stability in the live environment is crucial to detect early signs of decay, enabling timely intervention or recalibration of the strategy.
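One simple way to check how sensitive a walk forward result is to execution costs is to re-run the out-of-sample returns through a cost model. The sketch below is deliberately crude: `net_returns` is a hypothetical helper, and it assumes a single flat cost (commission plus slippage) charged in proportion to the change in position, with positions expressed as a fraction of equity. Real fills, especially for large orders or illiquid assets, will not follow such a simple rule.

```python
from typing import List, Sequence


def net_returns(gross_returns: Sequence[float],
                positions: Sequence[float],
                cost_per_unit_turnover: float) -> List[float]:
    """Subtract a proportional trading cost from each bar's gross return.

    positions[t] is the position held during bar t (fraction of equity);
    the strategy is assumed to start flat before the first bar.
    """
    if len(gross_returns) != len(positions):
        raise ValueError("gross_returns and positions must have equal length")
    net = []
    previous = 0.0
    for gross, position in zip(gross_returns, positions):
        turnover = abs(position - previous)  # how much was traded this bar
        net.append(gross - turnover * cost_per_unit_turnover)
        previous = position
    return net


# Enter on bar 0, hold on bar 1, exit on bar 2; 10 basis points per unit traded.
gross = [0.010, 0.010, 0.000]
held = [1.0, 1.0, 0.0]
print(net_returns(gross, held, cost_per_unit_turnover=0.001))
```

Repeating the aggregation with a range of cost assumptions shows how much of the out-of-sample edge survives as costs rise, which is a useful sanity check before committing to live re-optimization infrastructure.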