An effective algorithmic trading system often hinges on its ability to execute orders with minimal delay across multiple trading venues. In fragmented markets, where liquidity is dispersed across exchanges, dark pools, and ECNs, a robust low latency execution architecture for multi-venue order routing is a prerequisite for latency-sensitive strategies, not merely an advantage. Speed alone is not the goal; the point is to make intelligent routing decisions in microseconds, secure the best available fill prices, and manage execution risk in a highly competitive environment. We’ll look at the technical and operational considerations that go into building and maintaining such a system, drawing on practical experience rather than theoretical ideals.

The Imperative of Speed in Fragmented Markets

In today’s electronic markets, liquidity is rarely consolidated on a single exchange. Financial instruments often trade simultaneously across numerous venues, each with distinct pricing, depth, and fee structures. For strategies like arbitrage, market making, or large order execution seeking the best price, the ability to quickly identify and interact with the most favorable venue is paramount. This multi-venue landscape creates opportunities but also significant challenges for order routing. What matters is the latency of the entire workflow, from receiving a market data update to getting an order acknowledgment back from the venue. The in-house portion of this cycle (market data in, order out) is often budgeted in single-digit microseconds, while the full round trip also depends on the network path and the venue’s own processing time. Any bottleneck, whether in network hops, data parsing, or decision logic, directly reduces the chance of capturing fleeting opportunities and increases exposure to adverse selection.

Core Architectural Pillars for Ultra-Low Latency

Achieving ultra-low latency requires a holistic approach, starting from physical infrastructure and extending through every layer of the software stack. Proximity hosting, often colocation within the exchange’s data center, is a non-negotiable foundation, minimizing network propagation delays to the absolute physical minimum. Beyond that, the choice of networking hardware, operating system tuning, and even CPU architecture can shave critical nanoseconds. Memory access patterns, cache utilization, and thread scheduling become dominant factors. We’re talking about direct memory access (DMA) where possible, user-space networking stacks, and bypassing the kernel for critical data paths. Every component must be scrutinized for its latency contribution, with an emphasis on deterministic performance rather than just average speed, as outliers can quickly erode profitability.

- Colocation services provide cross-connects to the exchange’s gateways and matching infrastructure, bringing network latency close to the physical limit.

- Leveraging FPGA-based network cards and specialized network stacks for hardware-accelerated packet processing and order submission.

- Tuned operating system kernels (e.g., Linux with the PREEMPT_RT patch set) and judicious use of CPU pinning and core isolation to reduce context switching and jitter.

- Employing memory-mapped files and zero-copy architectures to minimize data movement and reduce CPU cache misses.
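The zero-copy and cache-conscious ideas in the list above can be illustrated with a preallocated single-producer/single-consumer ring buffer that hands messages from a feed-handler thread to a strategy thread without locks or heap allocation. This is a minimal sketch, not a production design: the names `QuoteUpdate` and `SpscRing` and the 64-byte cache-line size are assumptions made for the example.

```cpp
#include <array>
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical fixed-size message handed from a feed thread to a strategy thread.
struct QuoteUpdate {
    std::uint32_t venue_id;
    std::int64_t  price_ticks;
    std::uint32_t quantity;
};

// Single-producer/single-consumer ring buffer with storage allocated up front.
// Capacity must be a power of two so index wrapping is a mask, not a division.
template <typename T, std::size_t Capacity>
class SpscRing {
    static_assert(Capacity >= 2 && (Capacity & (Capacity - 1)) == 0,
                  "Capacity must be a power of two");

public:
    // Producer thread only. Returns false when the ring is full.
    bool try_push(const T& item) {
        const std::size_t head = head_.load(std::memory_order_relaxed);
        const std::size_t tail = tail_.load(std::memory_order_acquire);
        assert(head - tail <= Capacity);  // never more than Capacity items in flight
        if (head - tail == Capacity) return false;
        slots_[head & (Capacity - 1)] = item;
        head_.store(head + 1, std::memory_order_release);  // publish the slot
        return true;
    }

    // Consumer thread only. Returns false when the ring is empty.
    bool try_pop(T& out) {
        const std::size_t tail = tail_.load(std::memory_order_relaxed);
        const std::size_t head = head_.load(std::memory_order_acquire);
        if (head == tail) return false;
        out = slots_[tail & (Capacity - 1)];
        tail_.store(tail + 1, std::memory_order_release);  // release the slot
        return true;
    }

private:
    // Indices sit on separate cache lines (64 bytes assumed) to avoid false sharing.
    alignas(64) std::atomic<std::size_t> head_{0};
    alignas(64) std::atomic<std::size_t> tail_{0};
    alignas(64) std::array<T, Capacity> slots_{};
};
```

A feed thread would call `try_push` for each decoded update while a pinned strategy thread spins on `try_pop`. Because the storage is a fixed array, no allocation happens after construction, which is the property that keeps tail latency predictable.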

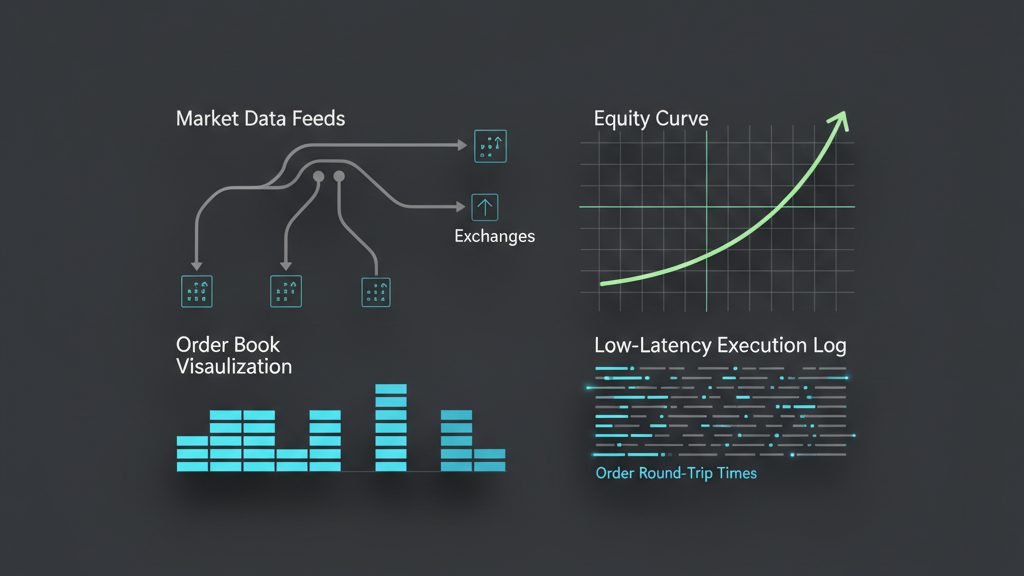

Efficient Market Data Ingestion and Normalization

The intelligence of any multi-venue routing system is only as good as the market data it consumes. In a low latency execution architecture, this means ingesting raw market data feeds directly from each venue, typically via UDP multicast. Receiving these feeds at line rate is demanding in itself, since they can generate millions of updates per second across symbols and venues. The harder part is parsing them efficiently and normalizing them into a consistent internal format. Each exchange often has its own proprietary binary protocol, requiring specialized decoders designed for minimal overhead. Any delay or error in this process can lead to stale quotes, incorrect order book state, and ultimately suboptimal or even harmful routing decisions. Because UDP does not guarantee delivery, data integrity checks, including sequence number tracking and retransmission handling for dropped packets, are crucial to maintaining an accurate view of the market.
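As a rough illustration of decoding and sequence checking, the sketch below parses a fixed-width binary message into a normalized struct and flags sequence gaps. The wire layout is invented for the example and does not correspond to any real exchange protocol; `NormalizedQuote`, `decode_quote`, and `SequenceTracker` are hypothetical names, and the code assumes the host byte order matches the wire.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>

// Hypothetical packed wire layout (NOT a real exchange protocol):
//   bytes 0-7   uint64 sequence number
//   bytes 8-11  uint32 instrument id
//   bytes 12-19 int64  price in ticks
//   bytes 20-23 uint32 quantity
//   byte  24    side, 'B' for bid, anything else treated as ask
constexpr std::size_t kWireSize = 25;

struct NormalizedQuote {
    std::uint64_t sequence;
    std::uint32_t instrument_id;
    std::int64_t  price_ticks;
    std::uint32_t quantity;
    bool          is_bid;
};

// memcpy-based read: safe for unaligned offsets and strict aliasing.
// Assumes host byte order equals wire byte order.
template <typename T>
T read_field(const unsigned char* p) {
    T value;
    std::memcpy(&value, p, sizeof(T));
    return value;
}

// Returns false if the buffer is too short to hold one message.
bool decode_quote(const unsigned char* buf, std::size_t len, NormalizedQuote& out) {
    assert(buf != nullptr);
    if (len < kWireSize) return false;
    out.sequence      = read_field<std::uint64_t>(buf);
    out.instrument_id = read_field<std::uint32_t>(buf + 8);
    out.price_ticks   = read_field<std::int64_t>(buf + 12);
    out.quantity      = read_field<std::uint32_t>(buf + 20);
    out.is_bid        = (buf[24] == 'B');
    return true;
}

// Old = sequence already passed (a duplicate or a late arrival).
enum class SeqStatus { InOrder, Gap, Old };

class SequenceTracker {
public:
    SeqStatus observe(std::uint64_t seq) {
        if (!started_) {
            started_ = true;
            next_expected_ = seq + 1;
            return SeqStatus::InOrder;
        }
        if (seq == next_expected_) {
            ++next_expected_;
            return SeqStatus::InOrder;
        }
        if (seq < next_expected_) return SeqStatus::Old;
        // Messages [gap_begin_, gap_begin_ + gap_count_) were missed.
        gap_begin_ = next_expected_;
        gap_count_ = seq - next_expected_;
        next_expected_ = seq + 1;
        return SeqStatus::Gap;
    }
    std::uint64_t gap_begin() const { return gap_begin_; }
    std::uint64_t gap_count() const { return gap_count_; }

private:
    bool          started_ = false;
    std::uint64_t next_expected_ = 0;
    std::uint64_t gap_begin_ = 0;
    std::uint64_t gap_count_ = 0;
};
```

On a `Gap` result, a real feed handler would typically mark the affected book as suspect and request the missing range through whatever recovery channel the venue offers. The tracker here only reports the most recent gap.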

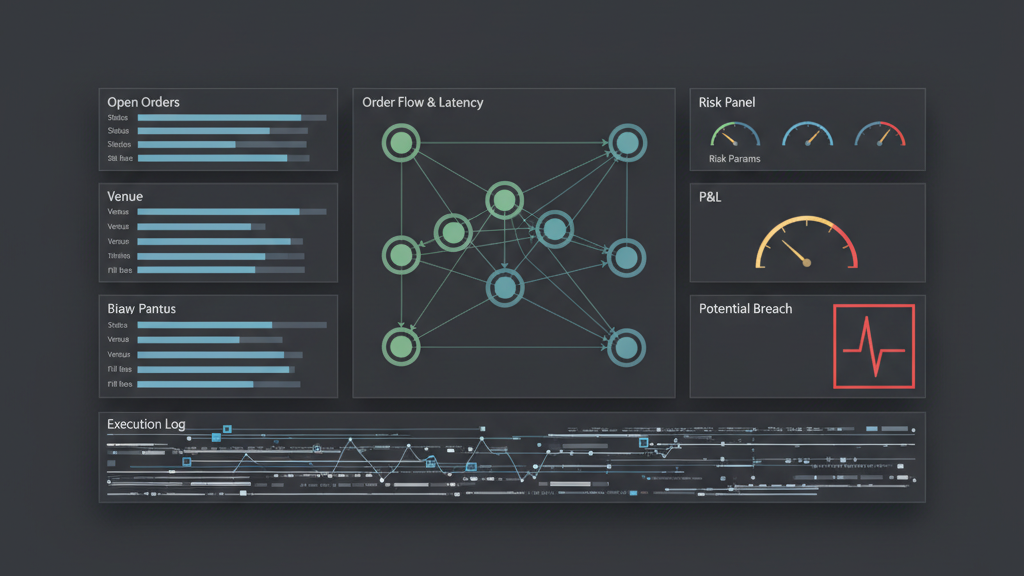

Designing the Smart Order Router (SOR) Logic

The Smart Order Router (SOR) is the brain of the low latency execution architecture, responsible for dynamically determining the best venue and price for an order. Its algorithms must consider real-time factors beyond just the displayed best bid and offer. Latency to each venue, prevailing spread, actual depth of book, estimated fill probability, execution costs (fees and rebates), and potential for information leakage all factor into the routing decision. Implementing this requires highly optimized C++ code that often avoids dynamic memory allocation in critical paths, because heap allocation and deallocation can introduce unpredictable latency spikes. Furthermore, the SOR needs to handle order splitting across venues for larger quantities and manage the associated risk of partial fills and race conditions, all while adhering to regulatory requirements like Reg NMS in the US or MiFID II in Europe.

- Real-time evaluation of execution quality metrics including fill rates, effective spread, and realized slippage for each venue.

- Dynamic routing algorithms that adapt to changes in market microstructure, such as liquidity shifts or volatility spikes.

- Support for various order types and attributes (e.g., immediate-or-cancel, passive, dark pool participation) specific to each exchange’s API.

- Robust error handling and failover mechanisms to reroute orders in case of venue outages or API connectivity issues.
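To make the routing factors above concrete, here is a deliberately simplified sketch that ranks venues for a buy order by all-in cost (displayed ask plus fee, with rebates as negative fees), discounts displayed size by an estimated fill probability, and splits the order greedily. Every name, the price units, and the scoring rule are assumptions for illustration; a real SOR would also weigh latency, information leakage, and regulatory constraints.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

constexpr std::size_t kMaxVenues = 8;  // fixed bound so no heap allocation is needed

struct VenueQuote {
    int           venue_id;
    std::int64_t  ask_price;         // displayed best ask, in 1e-4 currency units (assumed)
    std::int64_t  fee_per_share;     // taker fee in the same units; negative means rebate
    std::uint32_t ask_size;          // displayed size at the best ask
    double        fill_probability;  // hypothetical estimate in [0, 1]
};

struct ChildOrder {
    int           venue_id;
    std::int64_t  limit_price;
    std::uint32_t quantity;
};

struct RoutePlan {
    std::array<ChildOrder, kMaxVenues> children{};
    std::size_t   count = 0;
    std::uint32_t unrouted = 0;  // quantity left over after all eligible venues
};

inline std::int64_t all_in_cost(const VenueQuote& q) {
    return q.ask_price + q.fee_per_share;
}

// Greedy split of a buy order: cheapest all-in venue first, ties broken by
// higher fill probability. Venues quoting above limit_price are skipped.
RoutePlan route_buy(std::array<VenueQuote, kMaxVenues> quotes, std::size_t venue_count,
                    std::uint32_t quantity, std::int64_t limit_price) {
    assert(venue_count <= kMaxVenues);
    std::sort(quotes.begin(), quotes.begin() + venue_count,
              [](const VenueQuote& a, const VenueQuote& b) {
                  if (all_in_cost(a) != all_in_cost(b)) return all_in_cost(a) < all_in_cost(b);
                  return a.fill_probability > b.fill_probability;
              });

    RoutePlan plan;
    std::uint32_t remaining = quantity;
    for (std::size_t i = 0; i < venue_count && remaining > 0; ++i) {
        const VenueQuote& q = quotes[i];
        if (q.ask_price > limit_price) continue;
        // Size we expect to actually get, given the fill probability estimate.
        const std::uint32_t expected =
            static_cast<std::uint32_t>(q.ask_size * q.fill_probability);
        const std::uint32_t take = std::min(remaining, expected);
        if (take == 0) continue;
        plan.children[plan.count++] = ChildOrder{q.venue_id, q.ask_price, take};
        remaining -= take;
    }
    plan.unrouted = remaining;
    return plan;
}
```

With three venues quoting (ask 100000, fee 30, size 200, probability 1.0), (ask 100000, fee -20, size 300, probability 0.5), and (ask 100100, fee 0, size 500, probability 1.0), a buy of 400 with limit 100050 sends 150 to the rebate venue, 200 to the first venue, and leaves 50 unrouted because the third venue is priced above the limit.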

Robust Order Lifecycle and Risk Management

Once an order is routed, managing its lifecycle is critical, especially when dealing with multi-venue interactions. This involves tracking its state (pending, partially filled, fully filled, canceled, rejected) across potentially multiple destinations. A single firm-wide record of order and position state, kept consistent across all venues, is essential for accurate position keeping and real-time risk calculations. Pre-trade risk checks must add as little latency as possible (nanoseconds when implemented in hardware, low microseconds in software) while validating orders against pre-defined limits for maximum order size, notional value, daily P&L, and exposure. Crucially, a low latency system needs redundant execution paths and failover logic to ensure that orders are never ‘lost’ or stuck in an indeterminate state due to API failures or network hiccups. Operational resilience against these real-world constraints is just as important as raw speed, as an unmanaged runaway order can lead to significant financial exposure.
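The pre-trade checks described above can be sketched as a branch-only function over plain integers, with no allocation or I/O. The limit names, the order of the checks, and the worst-case exposure rule (assume every open order on the same side fills) are assumptions chosen for the example rather than a prescribed policy.

```cpp
#include <cassert>
#include <cstdint>

enum class RiskResult { Accepted, OrderTooLarge, NotionalTooLarge, PositionLimit, DailyLossLimit };

struct RiskLimits {
    std::int64_t max_order_qty;
    std::int64_t max_order_notional;  // quantity * price
    std::int64_t max_abs_position;    // applies to long and short exposure
    std::int64_t max_daily_loss;      // positive number
};

struct RiskState {
    std::int64_t net_position;   // signed: positive is long
    std::int64_t open_buy_qty;   // resting buy quantity not yet filled
    std::int64_t open_sell_qty;  // resting sell quantity not yet filled
    std::int64_t daily_pnl;      // signed: negative is a loss
};

struct OrderRequest {
    bool         is_buy;
    std::int64_t quantity;
    std::int64_t price;
};

// Pure function: reads limits and state, returns the first violated check.
RiskResult check_order(const RiskLimits& limits, const RiskState& state,
                       const OrderRequest& order) {
    assert(order.quantity > 0 && order.price > 0);
    if (state.daily_pnl <= -limits.max_daily_loss) return RiskResult::DailyLossLimit;
    if (order.quantity > limits.max_order_qty) return RiskResult::OrderTooLarge;
    if (order.quantity * order.price > limits.max_order_notional) {
        return RiskResult::NotionalTooLarge;
    }
    // Worst case: this order and all open orders on the same side fill completely.
    const std::int64_t worst_case =
        order.is_buy ? state.net_position + state.open_buy_qty + order.quantity
                     : state.net_position - state.open_sell_qty - order.quantity;
    if (worst_case > limits.max_abs_position || worst_case < -limits.max_abs_position) {
        return RiskResult::PositionLimit;
    }
    return RiskResult::Accepted;
}

// After acceptance, reserve the quantity so later checks see it as open exposure.
void reserve(RiskState& state, const OrderRequest& order) {
    if (order.is_buy) state.open_buy_qty += order.quantity;
    else              state.open_sell_qty += order.quantity;
}
```

Checking quantity before notional keeps the multiplication bounded by the configured limits. The caller is expected to call `reserve` on acceptance and to release the reservation on fills, cancels, and rejects, which is omitted here.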

Backtesting Challenges for Multi-Venue Strategies

Developing and refining strategies for a low latency execution architecture for multi-venue order routing heavily relies on robust backtesting. However, accurately simulating a multi-venue environment presents unique challenges. The primary hurdle is access to high-fidelity, synchronized tick-by-tick market data from all relevant venues, including order book depth. Simulating execution requires modeling venue-specific latency, queue priority, slippage, and the nuances of partial fills. A simple ‘fill at best price’ assumption is often insufficient and misleading. Reconstructing the true state of the order book across multiple venues at any given microsecond, and understanding how an order placed on one venue might impact liquidity or prices on another, requires sophisticated event-driven simulation engines that can handle out-of-order data and accurately model market microstructure. Without a realistic backtesting environment, strategy performance in live trading can diverge significantly from expectations.
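One way to move beyond a ‘fill at best price’ assumption is to model queue position for a resting limit order in the simulator. The sketch below is a minimal version of that idea: the order joins behind the quantity already displayed at its price, and trades at that price consume the queue ahead before filling the simulated order. The class name and the rule for cancels are assumptions; historical data usually does not reveal whether a cancel was ahead of or behind a given order, so the caller must choose a model for that.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>

// Simulated passive order resting at a single price level.
class SimulatedRestingOrder {
public:
    // queue_ahead: displayed quantity at the price when the order arrives at the
    // venue (i.e. at send time plus modeled latency, computed by the caller).
    SimulatedRestingOrder(std::int64_t quantity, std::int64_t queue_ahead)
        : remaining_(quantity), queue_ahead_(queue_ahead) {
        assert(quantity > 0 && queue_ahead >= 0);
    }

    // A trade of traded_qty prints at our price. Quantity ahead of us trades
    // first; only the excess can fill us. Returns our fill from this trade.
    std::int64_t on_trade(std::int64_t traded_qty) {
        assert(traded_qty >= 0);
        const std::int64_t consumed_ahead = std::min(traded_qty, queue_ahead_);
        queue_ahead_ -= consumed_ahead;
        const std::int64_t fill = std::min(traded_qty - consumed_ahead, remaining_);
        remaining_ -= fill;
        return fill;
    }

    // The caller has decided (by its own model) that cancelled_qty was ahead of us.
    void on_cancel_ahead(std::int64_t cancelled_qty) {
        assert(cancelled_qty >= 0);
        queue_ahead_ -= std::min(cancelled_qty, queue_ahead_);
    }

    std::int64_t remaining() const { return remaining_; }
    std::int64_t queue_ahead() const { return queue_ahead_; }
    bool is_filled() const { return remaining_ == 0; }

private:
    std::int64_t remaining_;
    std::int64_t queue_ahead_;
};
```

For an order of 100 joining behind 300, a trade of 200 produces no fill and leaves 100 ahead; after 40 is cancelled ahead, a trade of 110 clears the remaining 60 and fills 50. Partial fills therefore fall out of the model naturally rather than being assumed away.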

Monitoring, Tuning, and Operationalizing the System

Deployment isn’t the end; it’s the beginning of continuous monitoring and optimization. A low latency execution architecture generates vast amounts of operational data: network latencies, CPU utilization, order processing times, fill rates, and message acknowledgments. Tools for real-time performance monitoring are indispensable, from network taps capturing raw packets to custom application-level metrics that track every stage of the order flow. Identifying and diagnosing performance bottlenecks requires specialized profiling tools and often deep-dive analysis of kernel-level statistics. Furthermore, the daily operational complexities (exchange downtimes, API changes, market data feed glitches, and regulatory updates) demand highly skilled support teams and robust, automated operational runbooks. Proactive alerting for anomalies and rapid incident response are critical to minimize financial impact and maintain system integrity.

- Implementing comprehensive telemetry and logging to capture microsecond-level latency measurements across all system components.

- Utilizing network performance monitoring tools to identify jitter, packet loss, and propagation delays to individual venues.

- Establishing clear, automated alerts for performance degradation, API errors, unacknowledged orders, and unusual trading activity.

- Regularly performing system health checks, ‘race tests’ against other systems, and simulating failure scenarios to validate resilience.
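Because outliers matter more than averages here, latency telemetry is usually summarized as percentiles rather than means. The following is a minimal sketch of a fixed-size, allocation-free histogram with power-of-two buckets that can be updated on the hot path and queried for an upper bound on a given percentile. The class name, the bucket scheme, and the per-mille interface are assumptions for illustration, and the resolution is coarse (each bucket spans a factor of two).

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <limits>

constexpr std::size_t kBucketCount = 64;

// Bucket b counts samples in [2^b, 2^(b+1)) nanoseconds; bucket 0 also holds 0 ns.
class LatencyHistogram {
public:
    void record(std::uint64_t latency_ns) {
        ++counts_[bucket_for(latency_ns)];
        ++total_;
    }

    // per_mille = 990 asks for the 99th percentile, 999 for the 99.9th.
    // Returns the exclusive upper bound (ns) of the bucket holding that rank,
    // or 0 if nothing has been recorded.
    std::uint64_t percentile_upper_bound_ns(std::uint64_t per_mille) const {
        assert(per_mille <= 1000);
        if (total_ == 0) return 0;
        // Rank of the requested sample, rounded up, using integer arithmetic.
        std::uint64_t target = (total_ * per_mille + 999) / 1000;
        if (target == 0) target = 1;
        std::uint64_t seen = 0;
        for (std::size_t b = 0; b < kBucketCount; ++b) {
            seen += counts_[b];
            if (seen >= target) return upper_bound_of(b);
        }
        return std::numeric_limits<std::uint64_t>::max();
    }

    std::uint64_t count() const { return total_; }

private:
    // floor(log2(ns)) via shifts; production code would likely use a
    // count-leading-zeros instruction instead of a loop.
    static std::size_t bucket_for(std::uint64_t ns) {
        std::size_t b = 0;
        while (ns > 1) {
            ns >>= 1;
            ++b;
        }
        return b;
    }

    static std::uint64_t upper_bound_of(std::size_t b) {
        if (b >= 63) return std::numeric_limits<std::uint64_t>::max();
        return std::uint64_t{1} << (b + 1);
    }

    std::array<std::uint64_t, kBucketCount> counts_{};
    std::uint64_t total_ = 0;
};
```

A typical use is one histogram per pipeline stage (decode, decision, risk check, send), recorded from timestamps taken at each boundary and exported periodically by a non-critical thread. Alert thresholds can then be set on the high percentiles instead of the mean.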