In the world of algorithmic trading, the speed and reliability of market data acquisition directly translate into a competitive edge. Even microsecond delays can mean missed opportunities or, worse, trading on stale information. Building robust low-latency trading systems requires a deep understanding of how to source, process, and deliver market data to your trading logic with minimal latency and maximum integrity. This isn’t just about faster internet; it involves intricate decisions about infrastructure, data protocols, processing pipelines, and resilient error handling. We’ll dive into the practical considerations and technical approaches that system engineers and quant teams employ to achieve superior market data performance, moving beyond theoretical concepts to the realities of live production environments.

The Latency Imperative in Data Acquisition Pipelines

For low-latency algorithmic trading, the journey of a market data update, from its origin at the exchange to its arrival at your trading strategy, is a critical path where every nanosecond counts. This isn’t an exaggeration; strategies relying on arbitrage, market making, or high-frequency pattern recognition derive their edge from reacting faster than the competition. The goal is to minimize end-to-end data latency: the time from the exchange’s matching engine event to its reflection in your order book state. This involves more than just network speed; it’s about efficient data encoding, robust transport protocols, and streamlined processing at every hop. Any bottleneck, be it an overloaded network interface card (NIC), inefficient parsing logic, or disk I/O contention for logging, can introduce unacceptable delays, eroding the performance of even the most sophisticated trading algorithms. Understanding and continuously measuring this end-to-end latency is fundamental to maintaining a competitive stance.

Direct Exchange Feeds vs. Third-Party Vendor APIs

Choosing your market data source is a foundational architectural decision, heavily influencing potential latency and data quality. Direct feeds, often provided via proprietary protocols like NASDAQ’s ITCH, Cboe’s PITCH, or CME’s MDP 3.0, offer the lowest possible latency because they bypass intermediate aggregators. These feeds typically require significant engineering effort for parsing, normalization, and handling sequence gaps or retransmissions, often demanding specialized hardware and direct fiber connections in co-location facilities. In contrast, third-party vendor APIs (e.g., Refinitiv, Bloomberg, Polygon) provide a more managed solution, abstracting away much of the complexity. While easier to integrate and often cheaper, they introduce additional hops and processing, inherently adding latency, usually in the range of tens to hundreds of milliseconds, which can be prohibitive for high-frequency strategies. The trade-off is often between development complexity and raw speed, with lower-frequency strategies sometimes tolerating vendor latencies if the data breadth or managed service benefits outweigh the speed penalty.

- Direct exchange feeds: Raw binary protocols (e.g., ITCH, PITCH, MDP 3.0) with minimal latency; they require custom parser development and sophisticated error handling (sequence gaps, retransmission requests).

- Third-party vendor APIs: REST/WebSocket interfaces, managed infrastructure, easier integration, but introduce additional latency (typically >10ms) due to aggregation and distribution.

- Hybrid approaches: Using direct feeds for primary instruments and vendor APIs for ancillary data (e.g., news, slower moving markets, fundamental data).

- Data normalization challenges: Harmonizing varying symbols, tick sizes, and timestamp formats across multiple direct and vendor feeds for unified processing.
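
To make the normalization bullet concrete, here is a minimal sketch of mapping two differently shaped inputs onto one internal tick record. Everything specific in it is an assumption for illustration: the `SYMBOL_MAP` and `TICK_SIZE` tables, the space-padded direct-feed symbol, the `AAPL.O` vendor symbol, and the price and timestamp encodings are hypothetical, not taken from any particular feed specification.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from decimal import Decimal

# Hypothetical reference data; in practice this comes from a security master.
SYMBOL_MAP = {("direct", "AAPL    "): "AAPL", ("vendor", "AAPL.O"): "AAPL"}
TICK_SIZE = {"AAPL": Decimal("0.01")}
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


@dataclass(frozen=True)
class Tick:
    symbol: str       # unified internal symbol
    price_ticks: int  # price as an integer number of ticks
    ts_ns: int        # nanoseconds since the Unix epoch (UTC)


def to_ticks(price: Decimal, symbol: str) -> int:
    """Convert a decimal price to an integer tick count, rejecting off-tick prices."""
    quotient = price / TICK_SIZE[symbol]
    if quotient != quotient.to_integral_value():
        raise ValueError(f"{price} is not a multiple of the tick size for {symbol}")
    return int(quotient)


def from_direct(raw_symbol: str, price_1e4: int, ts_ns: int) -> Tick:
    """Assumed direct-feed shape: padded symbol, price in 1/10000 units, ns timestamp."""
    symbol = SYMBOL_MAP[("direct", raw_symbol)]
    return Tick(symbol, to_ticks(Decimal(price_1e4) / Decimal(10_000), symbol), ts_ns)


def from_vendor(raw_symbol: str, price: str, iso_ts: str) -> Tick:
    """Assumed vendor shape: suffixed symbol, decimal string, ISO 8601 UTC timestamp."""
    symbol = SYMBOL_MAP[("vendor", raw_symbol)]
    delta = datetime.fromisoformat(iso_ts) - EPOCH  # requires a timezone-aware timestamp
    ts_ns = (delta.days * 86_400 + delta.seconds) * 10**9 + delta.microseconds * 1_000
    return Tick(symbol, to_ticks(Decimal(price), symbol), ts_ns)
```

Storing prices as integer tick counts and timestamps as integer nanoseconds avoids floating-point comparisons downstream, at the cost of keeping the tick-size table current.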

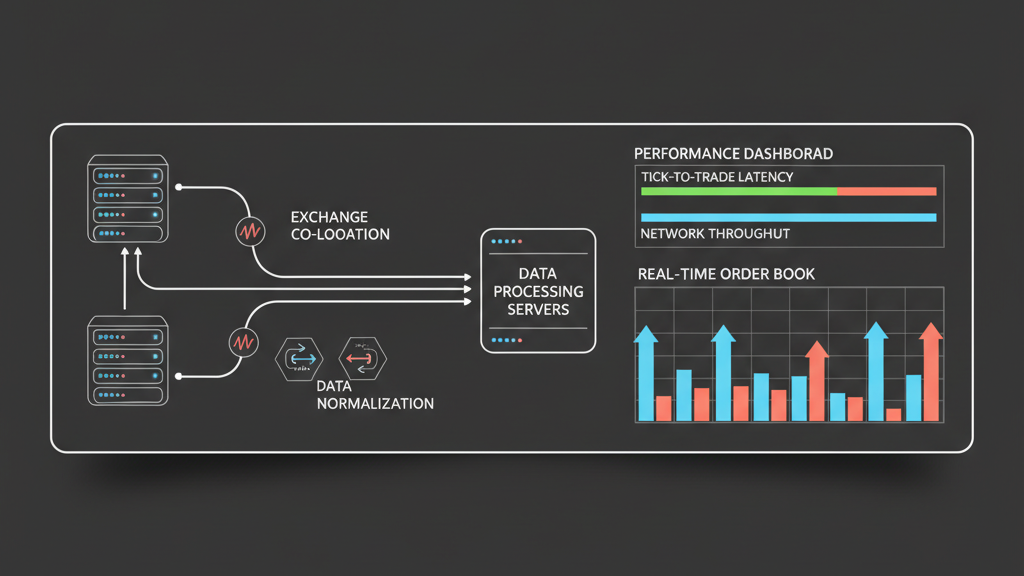

Infrastructure Design and Co-location for Minimal Hop Latency

Achieving true low-latency market data acquisition often necessitates physical proximity to the exchange matching engines, which is primarily facilitated through co-location. This involves housing your servers within the same data center as the exchange, reducing the network path to meters of fiber optic cable. Within the co-location facility, the design of your network topology is paramount. Using high-performance, low-latency network interface cards (NICs), such as those from Solarflare or Mellanox, together with kernel bypass technologies like OpenOnload or DPDK and tuned network stack settings, can shave off critical microseconds. Furthermore, dedicating physical servers for specific tasks, such as market data ingestion and processing, helps avoid resource contention. Efficient server configurations include high clock-speed CPUs, sufficient RAM to hold order book states in memory, and fast SSDs for persistent logging and historical data capture. These architectural decisions are not trivial investments; they represent a fundamental commitment to speed that directly impacts an algo trading firm’s ability to compete.
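
As a small illustration of the "network stack settings" and "resource contention" points, the sketch below pins a process to one core and opens a UDP socket with an enlarged receive buffer. It uses ordinary kernel sockets, not kernel bypass, and the helper names `pin_to_core` and `make_feed_socket` and the 8 MiB default are assumptions chosen for the example.

```python
import os
import socket


def pin_to_core(core: int) -> bool:
    """Pin the current process to a single CPU core.

    Returns False where the API is unavailable (it is Linux-only)
    or the requested core is not in the allowed set.
    """
    if not hasattr(os, "sched_setaffinity"):
        return False
    if core not in os.sched_getaffinity(0):
        return False
    os.sched_setaffinity(0, {core})
    return True


def make_feed_socket(rcvbuf_bytes: int = 8 * 1024 * 1024) -> socket.socket:
    """Create a non-blocking UDP socket with a larger receive buffer.

    The kernel may clamp the requested size (on Linux, to net.core.rmem_max),
    so read the option back to see what was actually granted.
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF, rcvbuf_bytes)
    sock.setblocking(False)  # the caller polls instead of sleeping in recv
    return sock
```

A larger receive buffer does not lower latency by itself; it reduces the chance of drops during bursts, which in turn avoids the much more expensive gap-recovery path.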

Real-time Data Processing: Parsing, Normalization, and Timestamping

Once raw market data arrives, the next critical challenge is to process it efficiently. Raw exchange feeds are often in highly optimized, compact binary formats designed for speed over readability. Custom parsers, typically written in C++ for maximum performance, are essential to quickly decode these messages into usable data structures. Normalization is then required to bring disparate data formats, symbol conventions, and timestamp resolutions into a consistent internal representation for your trading strategies. Accurate timestamping is non-negotiable; assigning a high-resolution timestamp from a synchronized clock at the earliest possible point of ingestion is crucial for causality analysis, backtesting accuracy, and measuring true execution latency. In practice this usually means PTP with hardware timestamping, which can reach sub-microsecond accuracy, since NTP typically achieves only millisecond-level accuracy. Mistakes here, such as using unsynchronized OS-level timestamps that can drift or timestamping after significant processing, can lead to trading decisions based on skewed information, severely compromising strategy performance and risk management effectiveness.
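
The decode-and-stamp step can be sketched as follows. Python is used here for brevity; as noted above, a production parser would more likely be C++. The 42-byte message layout is hypothetical and is not a real exchange specification, and `time.time_ns()` is a software clock standing in for the NIC hardware timestamp a real system would prefer.

```python
import struct
import time
from typing import NamedTuple

# Hypothetical big-endian layout (NOT a real exchange spec):
# type(1) seq(u64) exchange_ts_ns(u64) order_id(u64) side(1) shares(u32) symbol(8) price_1e4(u32)
ADD_ORDER = struct.Struct(">cQQQcI8sI")


class AddOrder(NamedTuple):
    seq: int
    exchange_ts_ns: int
    recv_ts_ns: int
    order_id: int
    side: str
    shares: int
    symbol: str
    price_1e4: int  # price in 1/10000 currency units


def parse_add_order(buf: bytes, recv_ts_ns: int) -> AddOrder:
    """Decode one fixed-width message into a typed record."""
    if len(buf) != ADD_ORDER.size:
        raise ValueError(f"expected {ADD_ORDER.size} bytes, got {len(buf)}")
    msg_type, seq, ts, order_id, side, shares, symbol, price = ADD_ORDER.unpack(buf)
    if msg_type != b"A":
        raise ValueError(f"unexpected message type {msg_type!r}")
    return AddOrder(seq, ts, recv_ts_ns, order_id, side.decode("ascii"),
                    shares, symbol.decode("ascii").rstrip(), price)


def on_packet(buf: bytes) -> AddOrder:
    """Stamp the packet before doing any parsing work, then decode it."""
    recv_ts_ns = time.time_ns()  # software clock; stand-in for a hardware timestamp
    return parse_add_order(buf, recv_ts_ns)
```

Taking the receive timestamp before parsing keeps parser cost out of the measured network latency, so the difference between `recv_ts_ns` and `exchange_ts_ns` reflects transit time only (assuming the two clocks are synchronized).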

Ensuring Data Quality and Mitigating Gaps in Live Feeds

The notion of ‘perfect’ market data in a live trading environment is a myth. Data feeds can experience dropped packets, out-of-sequence messages, temporary disconnections, or even silent data corruption. Robust market data acquisition strategies must incorporate sophisticated mechanisms to detect and recover from these issues without introducing undue latency. Implementing sequence number checks for every message is a standard practice to identify gaps. Upon detecting a gap, systems often initiate retransmission requests from the data provider or fall back to a slower, but more reliable, secondary data source. Conflation, while sometimes necessary for managing data volume for slower strategies, must be carefully applied, as it intentionally discards some ticks, impacting the fidelity of the market picture. Maintaining an accurate, real-time representation of the order book and last sale data requires not just fast processing, but resilient logic that continuously validates data integrity and has defined recovery paths for anomalies, safeguarding against trading on stale or incomplete information.
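
A minimal sketch of the sequence number check described above: it reports the missing range as soon as a gap opens and tracks which sequence numbers are still outstanding, so late arrivals or retransmissions can fill them. The class name `GapDetector` and its methods are assumptions for the example; how the missing range is actually requested is protocol-specific and left out.

```python
from typing import Optional, Set, Tuple


class GapDetector:
    """Tracks the next expected sequence number for a single feed channel."""

    def __init__(self, first_expected: int = 1) -> None:
        self.expected = first_expected
        self.missing: Set[int] = set()  # sequence numbers not yet seen

    def on_message(self, seq: int) -> Optional[Tuple[int, int]]:
        """Return (first_missing, last_missing) if this message opens a gap."""
        if seq < self.expected:
            # Late arrival or retransmission fills a hole; duplicates are no-ops.
            self.missing.discard(seq)
            return None
        gap = None
        if seq > self.expected:
            gap = (self.expected, seq - 1)
            self.missing.update(range(self.expected, seq))
        self.expected = seq + 1
        return gap

    def is_complete(self) -> bool:
        """True when every sequence number up to the latest has been seen."""
        return not self.missing
```

For example, feeding the sequence 1, 2, 3, 6 returns `(4, 5)` on the fourth message; the caller would then request that range or mark the affected order books as unreliable until `is_complete()` is true again.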

- Sequence number validation: Critical for identifying missing messages and ensuring data integrity.

- Retransmission mechanisms: Protocol-specific requests for missed packets, balancing latency impact with data completeness.

- Redundant feeds: Implementing failover to secondary data sources (e.g., another direct feed, a vendor feed) in case of primary feed disruption.

- Stale data detection: Monitoring data arrival times and flagging instruments if updates cease, preventing trading on outdated prices.

- Checksums and CRC checks: Verifying data payload integrity, especially for binary protocols, to detect corruption.
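
Two of the checks in this list are simple enough to sketch directly. The `StaleDetector` class, its single global threshold, and the `payload_ok` helper are assumptions for illustration; whether a feed carries a CRC-32 at all, and over which bytes, depends on the protocol.

```python
import zlib
from typing import Dict, List


class StaleDetector:
    """Flags instruments whose last update is older than a silence threshold."""

    def __init__(self, max_silence_ns: int) -> None:
        self.max_silence_ns = max_silence_ns
        self.last_update_ns: Dict[str, int] = {}

    def on_update(self, symbol: str, now_ns: int) -> None:
        self.last_update_ns[symbol] = now_ns

    def stale_symbols(self, now_ns: int) -> List[str]:
        """Return symbols with no update within the threshold, sorted by name."""
        return sorted(
            symbol
            for symbol, seen_ns in self.last_update_ns.items()
            if now_ns - seen_ns > self.max_silence_ns
        )


def payload_ok(payload: bytes, expected_crc32: int) -> bool:
    """Compare a payload's CRC-32 against the value carried with the message."""
    return zlib.crc32(payload) == expected_crc32
```

Passing `now_ns` in explicitly (for instance from `time.monotonic_ns()`) keeps the detector deterministic in tests. Illiquid instruments can be legitimately quiet, so a per-instrument threshold or a feed-level heartbeat is usually a better signal than one global value.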

Performance Monitoring and Continuous Optimization

Deploying a market data acquisition system is only the first step; continuous monitoring and optimization are essential. Real-time dashboards displaying key metrics like end-to-end latency, message throughput, CPU utilization, and network packet loss are indispensable. Tools that allow for granular capture and analysis of network traffic (e.g., tcpdump, Wireshark) are vital for deep-dive diagnostics. Regularly benchmarking different components of the pipeline – from network hops to parser performance – helps identify bottlenecks before they impact profitability. This iterative process often involves fine-tuning operating system parameters, optimizing code paths, and upgrading hardware. For instance, testing different NIC drivers or CPU core allocations can yield surprising performance gains. A proactive approach to performance tuning, combined with automated alerts for deviations from expected latency or data quality baselines, ensures that your market data pipeline remains at peak efficiency, continuously supporting your low-latency algorithmic trading strategies.
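
The baseline alerting idea can be sketched with the standard library alone. The function names, the choice of p50/p99/max, and the 25% tolerance are assumptions for the example; the latency samples are taken to be receive time minus exchange time in nanoseconds, which is only meaningful when both clocks are synchronized.

```python
import statistics
from typing import Dict, List, Sequence


def latency_summary(latencies_ns: Sequence[int]) -> Dict[str, float]:
    """Summarize a window of latency samples (needs at least two samples)."""
    cuts = statistics.quantiles(latencies_ns, n=100, method="inclusive")
    return {
        "p50": cuts[49],  # 50th percentile
        "p99": cuts[98],  # 99th percentile
        "max": float(max(latencies_ns)),
    }


def breaches(summary: Dict[str, float], baseline: Dict[str, float],
             tolerance: float = 1.25) -> List[str]:
    """Return the metrics that exceed their baseline by more than the tolerance factor."""
    return [name for name in baseline if summary[name] > baseline[name] * tolerance]
```

Tail percentiles matter more than the mean here: a pipeline with a healthy median and a degraded p99 still trades on stale data during exactly the bursts where it matters most.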