In the world of high-frequency and algorithmic trading, the speed and reliability of market data acquisition are paramount. A robust market data acquisition strategy is not merely about receiving data; it’s about getting the *right* data, at the *right* time, with the *lowest* possible latency, and ensuring its integrity from source to strategy. For systems like those developed by Algovantis, which provide algorithmic trading scripts, backtesting engines, and execution automation tools, the quality of the incoming market data directly impacts trading performance, signal generation, and ultimately, profitability. This article delves into the core components and practical considerations for building and maintaining market data pipelines designed for low-latency environments, addressing the real-world challenges faced by quantitative teams.

Selecting Optimal Data Feeds and Providers

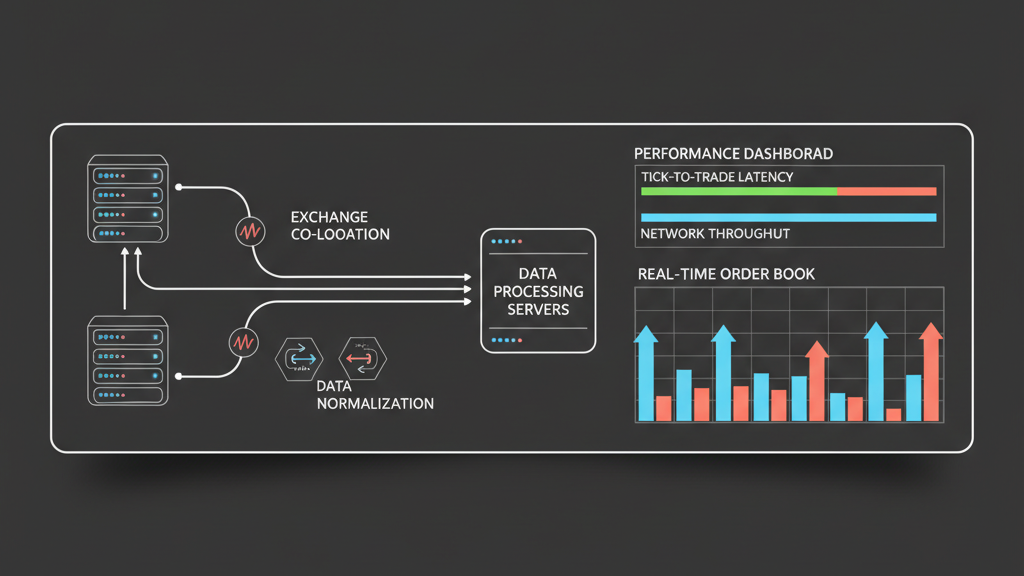

Choosing the right market data feed is the first critical step in establishing a low-latency trading pipeline. The decision is not only about cost; it also covers the type of data (Level 1, Level 2, full order book, market-by-price, market-by-order), the wire format, and the delivery mechanism. Direct feeds from exchanges, usually delivered over dedicated fiber or cross-connects to colocated servers, offer the lowest latency but come with significant infrastructure and licensing costs. Consolidated feeds aggregate data from multiple exchanges and are typically cheaper and easier to integrate, but the aggregation step adds latency and can delay updates. For Algovantis’s execution automation tools, the trade-off between latency and cost often dictates the choice, particularly when dealing with instruments across various venues. Thorough due diligence on provider uptime, support, and historical data accuracy is essential, as data quality issues can silently corrupt backtesting results and lead to real-time execution errors.

- Direct Exchange Feeds: Lowest latency, highest cost, complex integration.

- Consolidated Feeds: Higher latency, lower cost, simpler integration, covers multiple venues.

- Data Granularity: Level 1 (top of book), Level 2 (order book depth), or full tick data, depending on what the strategy requires.

- Vendor Reliability: Evaluate uptime guarantees, support response, and historical data availability.
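
To make the granularity distinction concrete, the following is a minimal Python sketch of a market-by-price (Level 2) book from which the Level 1 view is derived. The class name `PriceLevelBook`, the side codes `"B"` and `"A"`, and the use of integer tick prices are illustrative assumptions, not part of any vendor's API.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class PriceLevelBook:
    """Market-by-price book: one aggregate size per price level (Level 2)."""

    bids: Dict[int, int] = field(default_factory=dict)  # price_ticks -> size
    asks: Dict[int, int] = field(default_factory=dict)

    def apply(self, side: str, price_ticks: int, size: int) -> None:
        """Set the aggregate size at a price; a size of 0 removes the level."""
        levels = self.bids if side == "B" else self.asks
        if size == 0:
            levels.pop(price_ticks, None)
        else:
            levels[price_ticks] = size

    def top_of_book(self) -> Tuple[Optional[int], Optional[int]]:
        """Level 1 view: (best bid, best ask), or None for an empty side."""
        best_bid = max(self.bids) if self.bids else None
        best_ask = min(self.asks) if self.asks else None
        return best_bid, best_ask

    def depth(self, side: str, n: int) -> List[Tuple[int, int]]:
        """Level 2 view: the n best (price, size) levels on one side."""
        levels = self.bids if side == "B" else self.asks
        prices = sorted(levels, reverse=(side == "B"))[:n]
        return [(p, levels[p]) for p in prices]
```

A Level 1 feed only ever delivers what `top_of_book()` returns, while a market-by-order feed would additionally track individual orders within each level. A production book would use a sorted structure rather than scanning the dictionary on every query.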

Architecting Network Infrastructure for Latency Reduction

Beyond the feed itself, the physical network infrastructure plays an immense role in minimizing data acquisition latency. Colocation within exchange data centers is the gold standard, providing direct cross-connects to exchange market data gateways and order entry points. This eliminates the vast majority of network transit time, bringing network delays between your servers and the exchange down to the order of microseconds. For those unable to colocate, strategically placed points of presence (PoPs) with optimized network routes and dedicated lines can still offer a significant edge over public internet connections. Furthermore, hardware choices matter: low-latency network interface cards (NICs) paired with kernel bypass stacks (e.g., Solarflare OpenOnload, Mellanox VMA, or DPDK) and custom-tuned operating systems can shave off additional microseconds by reducing CPU overhead and context switching. These are the kinds of implementation details that directly impact the performance ceiling of any algorithmic trading script operating in a real-time environment.

Efficient Data Parsing, Normalization, and Timestamping

Once raw market data hits your system, efficient processing is paramount. Data from different exchanges or providers often arrives in disparate formats (e.g., proprietary binary protocols, FIX, or other custom encodings). The parsing layer must be highly optimized to decode these messages with minimal delay, typically leveraging compiled languages (C++, Rust) or specialized libraries. Normalization is then required to transform this disparate data into a consistent, internal format that strategies can readily consume, abstracting away vendor-specific quirks. Crucially, accurate timestamping is essential: hardware timestamps taken at the NIC, on clocks synchronized via PTP, provide the precision needed for accurately sequencing events, detecting arbitrage opportunities, and preventing race conditions in execution logic. NTP alone is typically accurate only to around a millisecond, which is too coarse for ordering events that are microseconds apart. A slight error in timestamping can lead to misinterpreting market conditions or even acting on stale prices, undermining the entire low-latency effort.

- Custom Parsers: Optimized for specific binary protocols and vendor formats.

- Data Normalization: Unifying varied data structures into a consistent, internal schema.

- Hardware Timestamping: Using NIC-level timestamps for nanosecond precision.

- Synchronization: Maintaining strict clock sync across all system components, preferably via PTP, with NTP as a coarser fallback.

Distributing Data Within the Trading System

Acquiring and processing data quickly is only half the battle; it must then be efficiently distributed to various components of the trading system: strategy engines, risk management modules, logging services, and UI dashboards. Common patterns include shared memory segments for inter-process communication (IPC) on the same host, low-latency messaging libraries (such as ZeroMQ or Aeron) for message passing between processes or machines, or custom pub/sub mechanisms. The choice depends on the scale, reliability requirements, and the degree of isolation needed between components. An Algovantis backtesting engine, for example, might replay historical data via a similar internal distribution mechanism, so that the backtesting environment mirrors the latency and data flow characteristics of the live execution system. That fidelity is critical for realistic performance evaluation and for narrowing the gap between backtested and live results.
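
As an illustration of the shared-memory pattern, here is a minimal Python sketch of a single-producer, single-consumer ring of fixed-size slots laid out in a flat buffer. The class name `SlotRing` and its layout are assumptions made for this example. It runs over any writable buffer, such as a `bytearray` or the `buf` of a `multiprocessing.shared_memory.SharedMemory` segment, but it has no memory barriers or atomic operations, so it shows the data layout only and is not a production lock-free queue.

```python
import struct


class SlotRing:
    """Single-producer/single-consumer ring of fixed-size slots.

    Buffer layout: [write_count: u64][read_count: u64][slot 0][slot 1]...
    """

    _HDR = struct.Struct("<QQ")

    def __init__(self, buf, slot_size: int, slots: int) -> None:
        if len(buf) < self._HDR.size + slot_size * slots:
            raise ValueError("buffer too small for header plus slots")
        self.buf = buf
        self.slot_size = slot_size
        self.slots = slots

    def _offset(self, count: int) -> int:
        return self._HDR.size + (count % self.slots) * self.slot_size

    def publish(self, payload: bytes) -> bool:
        """Producer side: copy one message in; return False if the ring is full."""
        if len(payload) != self.slot_size:
            raise ValueError("payload must be exactly slot_size bytes")
        written, read = self._HDR.unpack_from(self.buf, 0)
        if written - read == self.slots:
            return False
        off = self._offset(written)
        self.buf[off:off + self.slot_size] = payload
        # Publish the slot by advancing the write counter last.
        struct.pack_into("<Q", self.buf, 0, written + 1)
        return True

    def poll(self):
        """Consumer side: return the next message, or None if the ring is empty."""
        written, read = self._HDR.unpack_from(self.buf, 0)
        if read == written:
            return None
        off = self._offset(read)
        data = bytes(self.buf[off:off + self.slot_size])
        struct.pack_into("<Q", self.buf, 8, read + 1)
        return data
```

Returning `False` when the ring is full forces the producer to choose an explicit policy (drop, overwrite, or block), which matters because a slow logging or UI consumer should not be able to stall the feed handler.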

Building Resilience: Monitoring, Failover, and Data Gaps

Low-latency pipelines are inherently fragile; even a minor disruption can have significant financial consequences. Robust monitoring is non-negotiable, tracking everything from network latency and packet loss to data feed health, message rates, and processing bottlenecks. Implementing redundant data feeds from multiple providers or diverse network paths provides critical failover capabilities. When a primary feed drops or degrades, the system must automatically and rapidly switch to a backup with minimal interruption. Handling data gaps, which are inevitable, requires careful design; strategies need to know whether data is missing, stale, or the feed is down, so that they avoid making decisions on incomplete or erroneous information. This often involves buffering, sequence number checks to detect dropped messages, and ‘heartbeat’ messages to distinguish a quiet market from a dead feed. Without these safeguards, even the most advanced algorithmic trading scripts can become vulnerable to unexpected market events or infrastructure failures.

- Proactive Monitoring: Real-time alerts for latency spikes, feed outages, and data inconsistencies.

- Redundant Feeds: Multiple data sources and network paths for automatic failover.

- Gap Handling Logic: Detect missing data, re-sequence out-of-order messages, and backfill gaps.

- Health Checks: Continuous validation of data integrity and system component availability.
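
The sequence number and heartbeat checks described above can be sketched in Python as follows. The `FeedMonitor` class, its return labels, and the assumption that every data message carries a contiguous integer sequence number are illustrative; real feeds differ in how they number messages and heartbeats.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class FeedMonitor:
    """Tracks sequence continuity and staleness for one feed."""

    stale_after_ns: int
    expected_seq: Optional[int] = None
    last_seen_ns: Optional[int] = None
    gaps: List[Tuple[int, int]] = field(default_factory=list)  # inclusive ranges

    def on_message(self, seq: int, now_ns: int) -> str:
        """Classify a data message as 'ok', 'gap', or 'old'."""
        self.last_seen_ns = now_ns
        if self.expected_seq is None or seq == self.expected_seq:
            self.expected_seq = seq + 1
            return "ok"
        if seq < self.expected_seq:
            # Late or repeated message, e.g. a retransmission or backup feed copy.
            return "old"
        # One or more messages were skipped; record the missing range for backfill.
        self.gaps.append((self.expected_seq, seq - 1))
        self.expected_seq = seq + 1
        return "gap"

    def on_heartbeat(self, now_ns: int) -> None:
        """A heartbeat proves the feed is alive even when the market is quiet."""
        self.last_seen_ns = now_ns

    def is_stale(self, now_ns: int) -> bool:
        """True if nothing (data or heartbeat) arrived within the threshold."""
        if self.last_seen_ns is None:
            return True
        return now_ns - self.last_seen_ns > self.stale_after_ns
```

A strategy could consult `is_stale()` before acting on cached prices, and a failover controller could switch feeds when a monitor reports a gap or goes stale. The recorded `gaps` ranges are what a retransmission or snapshot request would need to cover.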

Integrating Acquisition with Backtesting and Simulation

How you acquire market data in real time directly determines the quality of the historical data you record and, by extension, the reliability of your backtesting and simulation results. It’s crucial that the historical data used for backtesting accurately reflects the data types, timing, and any quirks present in the live feed. Discrepancies, such as differing timestamp precision, aggregation logic, or missing fields, can lead to strategies that perform well in backtests but fail in live trading. Algovantis’s backtesting engines emphasize this fidelity, allowing developers to replay historical data through processing logic similar to that used for live feeds. This approach ensures that performance evaluations, stress testing, and parameter optimization are conducted under conditions as close to reality as possible, helping to identify potential execution gaps or data-related issues before they impact live capital. The goal is a seamless transition from simulation to live operations, powered by consistent data pipelines.
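
One way to picture this consistency is a replay function that feeds recorded events into the same handler the live pipeline would call. The Python sketch below is an assumption-laden illustration, not a description of how Algovantis’s engines work: the event tuple, `LastPriceTracker`, and the `added_latency_ns` parameter are all hypothetical.

```python
from typing import Callable, Dict, Iterable, List, Tuple

# Hypothetical normalized event: (recv_ts_ns, symbol, price_ticks)
Event = Tuple[int, str, int]


class LastPriceTracker:
    """Stand-in for strategy logic; the same handler serves live and replay."""

    def __init__(self) -> None:
        self.last: Dict[str, int] = {}
        self.log: List[Event] = []

    def on_event(self, event: Event) -> None:
        _, symbol, price_ticks = event
        self.last[symbol] = price_ticks
        self.log.append(event)


def replay(
    events: Iterable[Event],
    handler: Callable[[Event], None],
    added_latency_ns: int = 0,
) -> int:
    """Deliver recorded events in receive-time order.

    added_latency_ns shifts each timestamp to model extra delay between
    capture and strategy; it returns the number of events delivered.
    """
    count = 0
    for recv_ts_ns, symbol, price_ticks in sorted(events, key=lambda e: e[0]):
        handler((recv_ts_ns + added_latency_ns, symbol, price_ticks))
        count += 1
    return count
```

Because the strategy only sees the handler interface, swapping the live feed for `replay` requires no change to strategy code, which is the property the backtest relies on.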