Developing sophisticated algorithmic trading strategies depends heavily on the quality and reliability of your market data infrastructure. Without a robust, resilient market data pipeline, quantitative research is compromised by data gaps, inconsistencies, or outright failures, which lead to inaccurate backtests and unreliable live trading decisions. Building such a system from the ground up requires careful decisions about data sources, ingestion mechanisms, storage architectures, processing logic, and distribution methods, all while planning for inevitable failures and scaling challenges. This isn’t just about pulling in quotes; it’s about engineering a system that withstands the unpredictable nature of financial markets and maintains data integrity under pressure, forming the bedrock of any quantitative trading operation.

Ingestion Layer: Handling Diverse Sources and Volatility

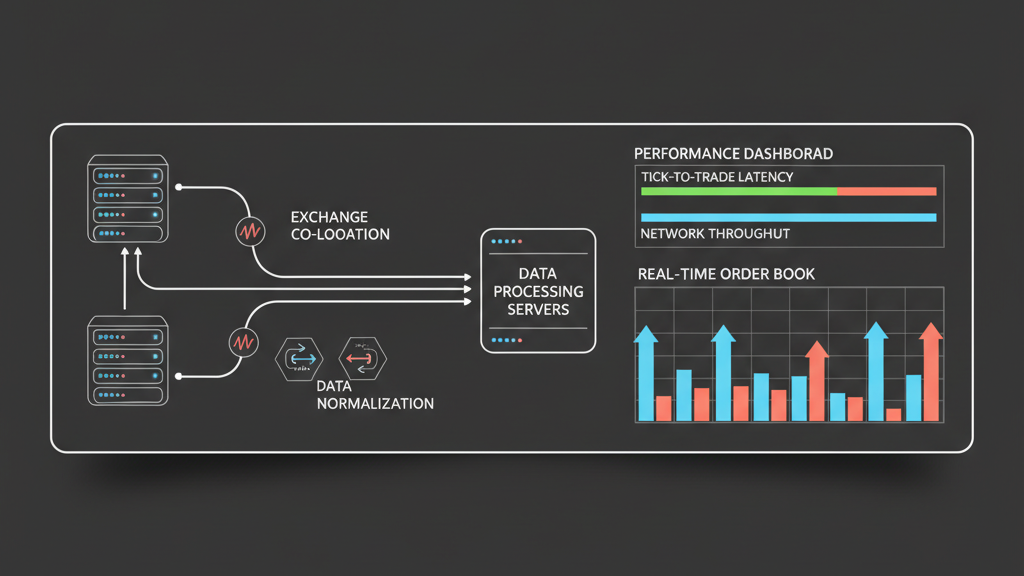

The first challenge in building a resilient market data pipeline for quant research is ingesting data from diverse sources. Venues and data providers deliver data through different protocols, such as FIX, WebSocket streams, or proprietary APIs, each with its own quirks in message format, rate limits, and authentication. Designing for resilience here means robust handling of connection drops, partial feeds, and malformed messages. We typically run multiple redundant ingestion services (feed handlers) against the same feed, so that if one fails or is rate-limited, the others keep data flowing. Because every handler delivers the same messages, the duplicates must then be removed downstream, usually by venue sequence number where the venue provides one. It is also critical to write into a persistent, replayable log such as Kafka immediately after ingestion. This decouples producers from consumers, buffers data during transient outages or bursts of market activity, and prevents data loss even when downstream processing components temporarily go offline.
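When redundant handlers consume the same feed, something has to merge their output. A common approach is sequence-number arbitration: forward the first copy of each sequence number, drop later copies, and record any numbers never seen so a recovery job can request them. The sketch below assumes each message carries a monotonically increasing per-channel sequence number; `Message` and `SequenceArbiter` are hypothetical names, not part of any specific venue or library API.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass(frozen=True)
class Message:
    seq: int        # assumed: venue-assigned, monotonically increasing per channel
    payload: str    # raw message body, left unparsed in this sketch


class SequenceArbiter:
    """First-arrival-wins merge of messages from redundant feed handlers."""

    def __init__(self, start_seq: int = 1) -> None:
        self.next_seq = start_seq        # next sequence number we have not yet seen
        self.missing: set[int] = set()   # numbers skipped over; candidates for recovery

    def accept(self, msg: Message) -> bool:
        """Return True if msg should be forwarded downstream."""
        if msg.seq >= self.next_seq:
            # Anything between the expected number and this one is a gap.
            self.missing.update(range(self.next_seq, msg.seq))
            self.next_seq = msg.seq + 1
            return True
        if msg.seq in self.missing:
            # A late copy from the slower handler fills a previously recorded gap.
            self.missing.discard(msg.seq)
            return True
        return False  # duplicate already forwarded from the other handler

    def merge(self, messages: Iterable[Message]) -> Iterator[Message]:
        for msg in messages:
            if self.accept(msg):
                yield msg
```

Gap-filling messages are forwarded out of order, so a downstream stage still needs to reorder by sequence number. Tracking gaps as a set is fine for a sketch; a production arbiter would likely store ranges and trigger the venue's own retransmission or snapshot mechanism.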

Storage Architectures for High-Fidelity Historical Data

Selecting the right storage architecture is paramount for both real-time access and comprehensive backtesting. For tick-level data, traditional relational databases often struggle with throughput and storage costs. Time-series databases (TSDBs) such as InfluxDB or kdb+, or columnar stores such as ClickHouse, are typically preferred for their fast appends, strong compression, and native support for time-based queries. The design should partition data by date, symbol, or exchange so that queries over large historical ranges touch only the relevant partitions. A tiered storage strategy, which moves older, less frequently accessed data to cheaper object storage (e.g., S3) while keeping recent data on faster, more expensive storage, balances cost against access speed. Data integrity checks, such as checksumming on write and verifying on read, are essential to ensure that what was ingested is exactly what is stored and retrieved for research.

- Partition data by time and symbol for efficient historical queries.

- Utilize tiered storage to manage costs and access speeds effectively.

- Implement checksums to verify data integrity throughout the storage lifecycle.

- Consider specialized time-series or columnar databases for optimal performance.
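The storage practices listed above can be sketched together: a Hive-style partition key (`exchange=.../date=.../symbol=...`), an age-based tier decision, and a SHA-256 checksum recorded on write and verified on read. The 30-day hot window, the directory layout, and the in-memory `dict` standing in for an object store are all assumptions for illustration, not a recommended configuration.

```python
import hashlib
from datetime import date
from pathlib import PurePosixPath

# Hypothetical policy: partitions younger than this stay on the fast tier.
HOT_DAYS = 30


class ChecksumMismatch(Exception):
    """Raised when stored bytes no longer match the digest recorded at write time."""


def partition_path(root: str, exchange: str, symbol: str, day: date) -> PurePosixPath:
    # Hive-style key=value directories: a query for one day and one symbol
    # only has to open a single partition.
    return (PurePosixPath(root) / f"exchange={exchange}" / f"date={day.isoformat()}"
            / f"symbol={symbol}" / "ticks.bin")


def storage_tier(day: date, today: date, hot_days: int = HOT_DAYS) -> str:
    # Age-based tiering: recent partitions on fast storage, older ones on object storage.
    return "hot" if (today - day).days < hot_days else "cold"


def write_blob(store: dict, key: PurePosixPath, data: bytes) -> str:
    # Compute the digest at write time and keep it alongside the data.
    digest = hashlib.sha256(data).hexdigest()
    store[str(key)] = (data, digest)
    return digest


def read_blob(store: dict, key: PurePosixPath) -> bytes:
    # Recompute on read; a mismatch means the bytes changed after ingestion.
    data, expected = store[str(key)]
    if hashlib.sha256(data).hexdigest() != expected:
        raise ChecksumMismatch(str(key))
    return data
```

In a real deployment the digest would more likely live in object metadata or a manifest table than next to the bytes, so that a single corrupted write cannot damage both.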

Processing and Normalization: Addressing Data Quality Issues

Raw market data is rarely clean enough for direct use in quantitative models. The processing and normalization stage transforms it into a consistent, usable format, and it is where data-quality problems are either caught or silently passed on to research. This involves reordering out-of-sequence messages, deduplicating records, detecting and handling missing ticks, adjusting for corporate actions (splits, dividends), and resolving symbol-mapping inconsistencies across exchanges. Missing ticks deserve particular care: interpolating them produces prices that never traded, so any filled values should be explicitly flagged rather than mixed in with real prints. A common challenge is the latency this processing introduces; historical data can tolerate batch processing, but live trading requires low-latency, streaming normalization. A microservices-based approach lets individual processing steps scale independently and fail gracefully. Regular validation against known-good data sources, or cross-referencing with other feeds, helps identify and correct anomalies before they contaminate research results.
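Split adjustment is one of the simpler normalization steps to illustrate. The sketch below back-adjusts a daily price series: every price strictly before a split's ex-date is divided by the split ratio, so the whole series is expressed in post-split terms. It assumes a hypothetical `Split` record holding an ex-date and a new-shares-per-old-share ratio; dividends, rounding conventions, and volume adjustment are deliberately left out, and the prices in the usage check are illustrative.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Split:
    ex_date: date   # first trading day on a post-split basis
    ratio: float    # new shares per old share, e.g. 4.0 for a 4-for-1 split


def back_adjust(bars: list[tuple[date, float]], splits: list[Split]) -> list[tuple[date, float]]:
    """Return (day, price) pairs with pre-split prices divided by the cumulative ratio."""
    adjusted = []
    for day, price in bars:
        factor = 1.0
        for s in splits:
            if day < s.ex_date:
                # This bar predates the split, so scale it into post-split units.
                factor *= s.ratio
        adjusted.append((day, price / factor))
    return adjusted
```

A back-adjusted series embeds knowledge of later splits, so for point-in-time backtests it is often safer to store raw prices plus the corporate-action table and apply adjustments at query time.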

Distribution Layer: APIs and Access Patterns for Quants

Once data is ingested, stored, and processed, it needs to be efficiently distributed to quant researchers and execution systems. The distribution layer must provide flexible APIs that support various access patterns: streaming real-time feeds for live strategies, historical point-in-time queries for backtesting, and bulk downloads for large-scale data analysis. For real-time distribution, low-latency publish-subscribe mechanisms, often built on technologies like ZeroMQ or Apache Kafka, are crucial. For historical data, a robust REST API or a direct database connection with proper access controls allows researchers to query specific time ranges or instruments. Implementing caching layers for frequently accessed historical data, such as daily bars or specific instrument histories, can significantly reduce database load and improve researcher productivity. The goal is to provide reliable, high-performance access without exposing the underlying complexity of the pipeline.

- Offer flexible APIs for streaming, historical queries, and bulk downloads.

- Utilize low-latency pub-sub systems for real-time data distribution.

- Implement caching for frequently accessed historical data to reduce load.

- Ensure robust access controls to protect sensitive market data.
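The caching recommendation above can be as simple as a bounded, time-limited cache in front of the historical query path. This sketch combines least-recently-used eviction with a time-to-live, using `collections.OrderedDict` for ordering; `TTLCache` and `get_or_load` are hypothetical names, and the injectable `clock` exists only so the behavior can be tested deterministically.

```python
import time
from collections import OrderedDict
from typing import Callable, Hashable


class TTLCache:
    """LRU cache whose entries also expire after ttl_seconds."""

    def __init__(self, max_entries: int, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_entries = max_entries
        self.ttl = ttl_seconds
        self.clock = clock
        self._data: OrderedDict = OrderedDict()  # key -> (stored_at, value)
        self.hits = 0
        self.misses = 0

    def get_or_load(self, key: Hashable, loader: Callable[[], object]) -> object:
        now = self.clock()
        entry = self._data.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            self._data.move_to_end(key)          # mark as most recently used
            self.hits += 1
            return entry[1]
        # Missing or expired: hit the database (loader) and cache the result.
        self.misses += 1
        value = loader()
        self._data[key] = (now, value)
        self._data.move_to_end(key)
        if len(self._data) > self.max_entries:
            self._data.popitem(last=False)       # evict the least recently used entry
        return value
```

A key such as `("AAPL", "1d", "2024-01")` would let daily bars for a month be served from memory. The TTL matters mainly for ranges that can still change, such as the current trading day; fully settled history could be cached for much longer.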

Resilience and Monitoring: Preventing and Mitigating Failures

True resilience means anticipating and actively managing failures at every stage. This involves designing for high availability with redundant components, failover mechanisms, and disaster recovery strategies across multiple data centers or cloud regions. Real-time monitoring of data feeds for completeness, latency, and correctness is non-negotiable. Alerting systems must be configured to notify engineers immediately of any deviation, such as a drop in tick count, a sudden increase in latency, or an out-of-tolerance price swing. Implementing a robust data lineage system helps track data from its source through all processing stages to its final consumption, which is invaluable for debugging data discrepancies or validating research results. Regular data integrity checks and reconciliation processes against reference data sources are also crucial for maintaining trust in the pipeline’s output.
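The three alert conditions named above (a drop in tick count, a latency increase, and an out-of-tolerance price swing) can be expressed as a small rule check that runs on each monitoring window. The field names and default thresholds below are hypothetical placeholders; real values would be tuned per instrument and per time of day.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeedStats:
    symbol: str
    tick_count: int         # ticks observed in the current window
    baseline_ticks: float   # expected ticks for this window, e.g. from prior days
    p99_latency_ms: float   # 99th-percentile (received time - exchange time)
    last_price: float
    prev_price: float       # reference price, e.g. the previous window's close


@dataclass(frozen=True)
class Thresholds:
    min_tick_ratio: float = 0.5    # alert below 50% of the baseline tick count
    max_latency_ms: float = 50.0
    max_price_move: float = 0.10   # alert on a move larger than 10%


def check_feed(s: FeedStats, t: Thresholds = Thresholds()) -> list[str]:
    """Return human-readable alerts; an empty list means the window looks healthy."""
    alerts = []
    if s.baseline_ticks > 0 and s.tick_count < t.min_tick_ratio * s.baseline_ticks:
        alerts.append(f"{s.symbol}: tick count {s.tick_count} below "
                      f"{t.min_tick_ratio:.0%} of baseline {s.baseline_ticks:.0f}")
    if s.p99_latency_ms > t.max_latency_ms:
        alerts.append(f"{s.symbol}: p99 latency {s.p99_latency_ms:.1f} ms "
                      f"exceeds {t.max_latency_ms:.1f} ms")
    if s.prev_price > 0:
        move = abs(s.last_price / s.prev_price - 1.0)
        if move > t.max_price_move:
            alerts.append(f"{s.symbol}: price moved {move:.1%}, "
                          f"limit {t.max_price_move:.0%}")
    return alerts
```

A large price move is not necessarily bad data (it may be genuine news), so this kind of rule usually routes to a human or to cross-feed validation rather than automatically discarding ticks.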

Backtesting Integration: Ensuring Data Consistency and Preventing Look-Ahead Bias

A key purpose of a well-engineered market data pipeline is to feed reliable data into backtesting engines, the foundation of quant research. The historical data used in a backtest must reflect what a live strategy could actually have seen at each point in time. That means handling timestamps rigorously (exchange time, received time, and processed time) to prevent look-ahead bias. For instance, a backtest should use trade data only from the moment it would have been disseminated on the live feed, and corporate-actions data only after its effective date. Our pipelines often incorporate data versioning so that researchers can backtest against specific snapshots of historical data, which is essential for reproducible research and auditing. The ability to retrieve vast amounts of historical data quickly and reliably, sometimes spanning decades across multiple assets, directly affects the speed and depth of quantitative analysis, making data access performance a critical design consideration.

- Rigorously handle timestamps to prevent look-ahead bias in backtests.

- Implement data versioning for reproducible research and auditing.

- Ensure fast and reliable retrieval of vast historical data for extensive analysis.

- Align historical data availability with live feed dissemination timing for accuracy.
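One way to enforce point-in-time correctness is to index historical records by the time the pipeline *received* them rather than the exchange timestamp, and answer backtest queries with an as-of lookup on that index. The sketch below assumes each stored trade carries both timestamps (integers in nanoseconds); `Trade` and `PointInTimeView` are hypothetical names used for illustration.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Trade:
    exchange_ts: int   # when the trade printed at the venue (ns since epoch)
    received_ts: int   # when our feed handler received it (ns since epoch)
    price: float


class PointInTimeView:
    """Answer 'what could a live strategy have known at time t?' using received_ts."""

    def __init__(self, trades: list[Trade]) -> None:
        self._trades = sorted(trades, key=lambda tr: tr.received_ts)
        self._keys = [tr.received_ts for tr in self._trades]

    def visible_at(self, t: int) -> list[Trade]:
        # bisect_right keeps trades received exactly at t.
        return self._trades[: bisect_right(self._keys, t)]

    def last_price(self, t: int) -> Optional[float]:
        # Price of the most recently received trade, as the live strategy saw it.
        idx = bisect_right(self._keys, t)
        return self._trades[idx - 1].price if idx else None
```

With trades `(exchange 100, received 105)`, `(exchange 110, received 112)`, and a delayed `(exchange 101, received 130)`, a query at `t = 120` sees only the first two. Filtering on exchange time instead would include the delayed print, which is precisely the kind of look-ahead leak the pipeline needs to rule out.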