Developing and deploying high-frequency trading strategies demands a granular understanding of market dynamics, which in turn requires reliable access to tick-level data. The sheer volume and velocity of this data present significant challenges, making the tick data storage architecture a critical component of any sophisticated algo trading system. Our focus at Algovantis is to engineer systems that not only ingest and store this data efficiently but also make it readily available for rigorous backtesting and for real-time decision-making in live execution environments. A poorly designed data infrastructure can introduce look-ahead bias, create performance bottlenecks during backtests, or lead to missed opportunities and erroneous trades in production. Getting this architecture right is foundational both for developing robust, profitable algorithmic strategies and for managing operational risk: data must be consistent, accurate, and accessible when it matters most, whether validating a strategy or reacting to a sudden market shift.

The Foundation: Understanding Tick Data Demands

Tick data is the most granular form of market data, capturing every individual price change or trade event. For strategies operating on short timeframes, or those highly sensitive to market microstructure, this level of detail is non-negotiable. A single liquid instrument can generate a staggering number of events, and across multiple assets and venues the total easily reaches gigabytes or terabytes daily. An effective tick data storage architecture must therefore be built from the ground up for this scale, with a focus on efficient ingestion, high-density storage, and rapid retrieval. Without that foundation, backtests fall back on sampled or aggregated data and become unreliable, while live execution logic can be starved of the up-to-the-millisecond information it needs to make informed decisions. We’ve seen firsthand how a system struggling with ingestion can drop ticks, creating gaps that skew historical simulations or, worse, produce incorrect real-time interpretations of market state.
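Dropped ticks are easiest to catch when the feed stamps each message with a sequence number, as many exchange protocols do. Assuming such per-channel sequence numbers (the `Tick` fields below are illustrative, not a specific feed's schema), a minimal gap detector only needs to track the next expected number:

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Tuple


@dataclass(frozen=True)
class Tick:
    """One market-data event. Field names are illustrative, not a feed spec."""
    seq: int      # per-channel sequence number (assumed contiguous when nothing is lost)
    ts_ns: int    # exchange timestamp, nanoseconds since epoch
    symbol: str
    price: float
    size: int


def find_gaps(ticks: Iterable[Tick]) -> List[Tuple[int, int]]:
    """Return inclusive (first_missing, last_missing) sequence ranges.

    Assumes ticks arrive in sequence order; duplicates are tolerated,
    but reordering must be handled by a separate step.
    """
    gaps: List[Tuple[int, int]] = []
    expected: Optional[int] = None
    for t in ticks:
        if expected is not None and t.seq > expected:
            gaps.append((expected, t.seq - 1))
        # A duplicate (seq < expected) must not move the cursor backwards.
        if expected is None or t.seq >= expected:
            expected = t.seq + 1
    return gaps


ticks = [Tick(seq=s, ts_ns=s * 1_000, symbol="ABC", price=10.0, size=1)
         for s in (1, 2, 2, 5, 6, 9)]
print(find_gaps(ticks))  # [(3, 4), (7, 8)]
```

Detected ranges can then be requested from the venue's recovery or replay service, where one exists, or flagged so that backtests do not silently run across the hole.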

Key Architectural Considerations for Tick-Level Storage

Designing an optimal tick data storage architecture requires a careful balance between storage efficiency, query performance, and operational overhead. The choice of database technology is paramount: time-series databases (TSDBs) are often preferred over traditional relational databases because their indexing is optimized for time-based queries and their compression algorithms are tuned for sequential data. Columnar stores also offer significant advantages for analytical queries across large datasets. Considerations extend beyond the database itself to the entire data pipeline, including ingestion mechanisms that can absorb bursts, real-time validation and cleansing to filter out corrupt or duplicate entries, and a resilient replication strategy to prevent data loss. We typically implement a multi-tiered storage approach, with a hot tier for recent data and a colder, more cost-effective tier for historical archives; the cold tier must still be fast enough for comprehensive backtesting.
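Tiering is usually applied per partition, so the two concerns fit together naturally. As a minimal sketch with illustrative conventions, the functions below derive a daily, per-symbol partition key in a Hive-style `key=value` path layout and route a partition to a tier by age. The seven-day hot window is a hypothetical value, not a recommendation.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical policy: partitions younger than this stay on the hot tier.
HOT_RETENTION = timedelta(days=7)


def partition_key(symbol: str, ts: datetime) -> str:
    """Daily, per-symbol partition key in a Hive-style 'key=value' layout.

    `ts` must be timezone-aware; it is normalised to UTC so partition
    boundaries do not depend on the host's local time zone.
    """
    if ts.tzinfo is None:
        raise ValueError("timestamp must be timezone-aware")
    day = ts.astimezone(timezone.utc)
    return f"date={day:%Y-%m-%d}/symbol={symbol}"


def storage_tier(partition_start: datetime, now: datetime,
                 hot_retention: timedelta = HOT_RETENTION) -> str:
    """Route a partition to 'hot' or 'cold' storage by its age."""
    return "hot" if now - partition_start <= hot_retention else "cold"


ts = datetime(2024, 3, 15, 14, 30, tzinfo=timezone.utc)
now = datetime(2024, 3, 20, tzinfo=timezone.utc)
print(partition_key("ABC", ts))  # date=2024-03-15/symbol=ABC
print(storage_tier(ts, now))     # hot
```

Keying partitions by date and symbol also means a backtest over one instrument and one week touches only a handful of partitions, whichever tier they live on.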

- High-performance ingestion pipelines capable of handling sustained high throughput and burst traffic without dropping data.

- Leveraging time-series databases (e.g., InfluxDB, TimescaleDB) or columnar stores (e.g., ClickHouse, kdb+) for optimized storage and retrieval.

- Implementing advanced compression techniques (e.g., Gorilla, delta encoding) to reduce storage footprint and I/O latency.

- Developing robust data validation and cleaning routines to ensure data integrity at the point of ingest, correcting for common issues like out-of-order ticks or missing fields.

- Designing intelligent indexing and partitioning schemes to accelerate queries for specific time ranges, symbols, or exchanges during backtesting and analysis.
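To make the compression point concrete, the sketch below shows the delta-of-delta transform that Gorilla-style encoders apply to timestamps. Ticks arriving at a steady rhythm turn into runs of zeros, which a subsequent bit-level or run-length coder can store compactly. This is only the transform; the variable-length bit packing and the XOR scheme Gorilla uses for floating-point values are omitted.

```python
from typing import List, Optional


def delta_of_delta_encode(timestamps: List[int]) -> List[int]:
    """Encode sorted integer timestamps as [first, first_delta, dod, dod, ...]."""
    if not timestamps:
        return []
    out = [timestamps[0]]
    prev_delta: Optional[int] = None
    for prev, cur in zip(timestamps, timestamps[1:]):
        delta = cur - prev
        # The first delta is stored as-is; later ones as the change in delta.
        out.append(delta if prev_delta is None else delta - prev_delta)
        prev_delta = delta
    return out


def delta_of_delta_decode(encoded: List[int]) -> List[int]:
    """Invert delta_of_delta_encode."""
    if not encoded:
        return []
    ts = [encoded[0]]
    delta = 0
    for i, value in enumerate(encoded[1:]):
        delta = value if i == 0 else delta + value
        ts.append(ts[-1] + delta)
    return ts


ts = [1_000, 1_010, 1_020, 1_030, 1_045]
enc = delta_of_delta_encode(ts)
print(enc)  # [1000, 10, 0, 0, 5]
```

Real tick timestamps are far less regular than this toy series, so the achievable ratio depends heavily on the instrument and venue.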

Tick Data for Backtesting: Balancing Fidelity and Speed

For backtesting, the primary goal is to simulate strategy performance as accurately as possible against historical market conditions. This demands not just access to tick data but the ability to replay it chronologically with precise timestamps, often across multiple instruments simultaneously. A performant tick data storage architecture enables rapid iteration during strategy development; if historical queries are slow, the feedback loop for testing new ideas grinds to a halt. We often implement a dedicated backtesting data service that streams tick data efficiently, sometimes pre-aggregated into common bar types (e.g., 1-minute or 5-minute bars) but always with the option to drill down to raw ticks. This lets researchers choose their own trade-off between granularity and speed. However, any aggregation used to speed up backtests must accurately reflect the underlying tick dynamics; otherwise it can introduce look-ahead bias or other data errors that distort performance metrics.
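Chronological replay across instruments is essentially a k-way merge of per-symbol streams that are each already time-sorted. The minimal sketch below uses Python's `heapq.merge` for the merge and adds a simple bar builder that avoids look-ahead in two ways: it emits a bar only after a tick from a later interval arrives, and it stamps the bar with `end_ns`, the earliest moment a strategy may act on it. The `(ts_ns, symbol, price)` row layout and the `Bar` type are assumptions for illustration.

```python
import heapq
from dataclasses import dataclass
from typing import Iterable, Iterator, Optional, Tuple

# (ts_ns, symbol, price): a stand-in for whatever the data service returns.
TickRow = Tuple[int, str, float]


def replay(streams: Iterable[Iterable[TickRow]]) -> Iterator[TickRow]:
    """Lazily merge per-instrument streams (each time-sorted) into one timeline."""
    return heapq.merge(*streams, key=lambda row: row[0])


@dataclass
class Bar:
    start_ns: int
    end_ns: int    # earliest time a strategy may act on this bar
    open: float
    high: float
    low: float
    close: float


def bars(ticks: Iterable[TickRow], interval_ns: int) -> Iterator[Bar]:
    """Build OHLC bars for a single symbol from time-sorted ticks.

    A bar is yielded only once a tick from a later interval arrives (or the
    data ends), so a still-forming bar is never exposed to the strategy.
    """
    current: Optional[Bar] = None
    for ts, _symbol, price in ticks:
        start = ts - ts % interval_ns
        if current is not None and start != current.start_ns:
            yield current
            current = None
        if current is None:
            current = Bar(start, start + interval_ns, price, price, price, price)
        else:
            current.high = max(current.high, price)
            current.low = min(current.low, price)
            current.close = price
    if current is not None:
        yield current  # final bar, emitted at end of data


a = [(1, "A", 10.0), (5, "A", 11.0)]
b = [(2, "B", 20.0), (3, "B", 21.0)]
print([row[0] for row in replay([a, b])])  # [1, 2, 3, 5]
```

`heapq.merge` holds only one pending row per stream, which suits streaming history out of storage rather than loading it all into memory.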

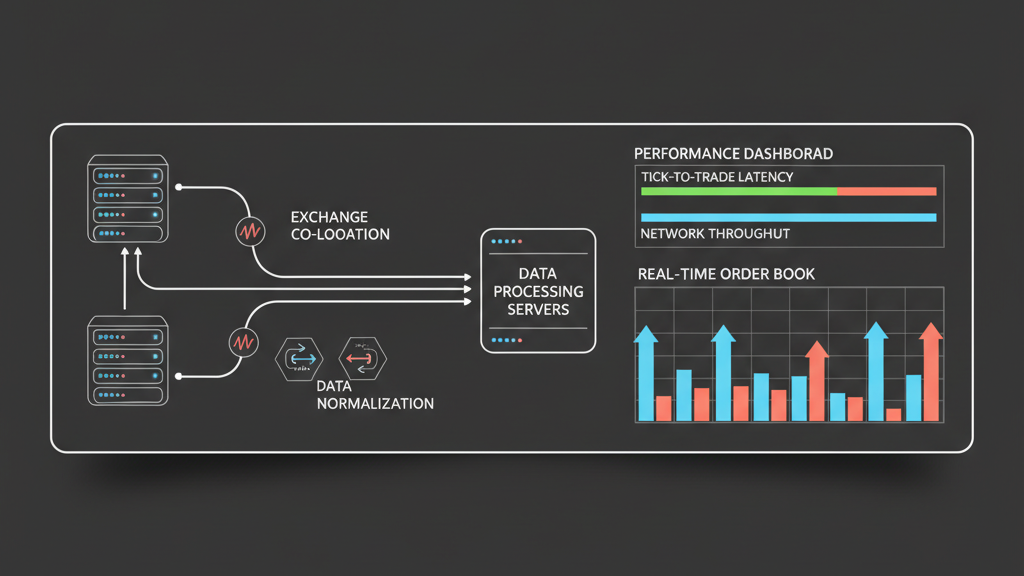

Real-time Tick Data for Live Execution: Latency and Reliability

In a live execution environment, the requirements for tick data shift dramatically toward ultra-low-latency access and extreme reliability. Strategies often depend on constructing real-time order books, calculating derived indicators, or reacting to specific price movements within microseconds. The tick data storage architecture for live trading therefore typically combines in-memory data structures with fast persistent storage. Data is usually streamed directly from exchange APIs, processed by a low-latency pipeline, and held in a high-speed cache or fast time-series database for immediate consumption by execution algorithms, which keeps disk I/O and network round trips off the critical path. Redundancy is paramount: any single point of failure in the data pipeline or storage can lead to stale data, missed signals, or incorrect order placement. We configure failover mechanisms and subscribe to multiple data feeds from different providers to guard against unexpected API outages or data quality degradation from a single source, a common but often overlooked real-world challenge.
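Failover between redundant feeds can be driven by staleness. As a minimal sketch, with hypothetical feed names, priorities, and threshold, the selector below prefers the highest-priority feed that has delivered a message within `max_staleness_s` seconds and falls back to the next one otherwise:

```python
import time
from typing import Dict, Optional


class FeedSelector:
    """Choose the preferred feed among those that are currently fresh.

    Feed names, priorities, and the staleness threshold are hypothetical
    parameters; nothing here is a vendor API.
    """

    def __init__(self, priorities: Dict[str, int], max_staleness_s: float = 0.5):
        self.priorities = priorities            # lower number = more preferred
        self.max_staleness_s = max_staleness_s
        self.last_seen: Dict[str, float] = {}   # feed -> time of last message

    def on_message(self, feed: str, now: Optional[float] = None) -> None:
        """Record that `feed` just delivered a message."""
        self.last_seen[feed] = time.monotonic() if now is None else now

    def active_feed(self, now: Optional[float] = None) -> Optional[str]:
        """Return the best fresh feed, or None if every feed is stale."""
        now = time.monotonic() if now is None else now
        fresh = [feed for feed, seen in self.last_seen.items()
                 if now - seen <= self.max_staleness_s]
        return min(fresh, key=lambda f: self.priorities.get(f, float("inf")),
                   default=None)


sel = FeedSelector({"primary": 0, "backup": 1}, max_staleness_s=0.5)
sel.on_message("primary", now=10.0)
sel.on_message("backup", now=10.2)
print(sel.active_feed(now=10.3))  # primary
print(sel.active_feed(now=10.6))  # backup
print(sel.active_feed(now=11.0))  # None
```

Staleness alone is a blunt signal: in quiet markets a healthy feed may legitimately go silent, so a check like this would normally be combined with venue heartbeats and sequence-gap detection.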

- Maintaining in-memory data structures (e.g., hash maps, deques) for immediate access to recent ticks and order book state.

- Implementing high-speed, persistent storage for short-term historical data that can be accessed with minimal latency.

- Using direct memory access (DMA) or kernel-bypass techniques where extremely low latency is critical for data transfer.

- Designing robust fault-tolerant data pipelines with automatic failover for data ingestion from multiple exchange feeds.

- Ensuring atomic updates and consistent data views across distributed components in a high-frequency trading system.
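The in-memory side of the list above can be illustrated with a bounded per-symbol buffer. This is a minimal Python sketch, assuming a `(ts_ns, price, size)` tuple layout: `collections.deque(maxlen=N)` evicts the oldest entry automatically, and a single lock makes appends and snapshots atomic with respect to each other, so readers never observe a half-applied update. A latency-critical hot path would more likely use a lock-free ring buffer in a systems language; the sketch only shows the data-structure idea.

```python
import threading
from collections import deque
from typing import Deque, Dict, List, Tuple

CachedTick = Tuple[int, float, int]  # (ts_ns, price, size); illustrative layout


class RecentTicks:
    """Bounded per-symbol history of the most recent ticks."""

    def __init__(self, maxlen: int = 10_000):
        self._maxlen = maxlen
        self._buffers: Dict[str, Deque[CachedTick]] = {}
        self._lock = threading.Lock()

    def append(self, symbol: str, tick: CachedTick) -> None:
        """Add a tick; once the buffer is full the oldest tick is dropped."""
        with self._lock:
            buf = self._buffers.get(symbol)
            if buf is None:
                buf = self._buffers[symbol] = deque(maxlen=self._maxlen)
            buf.append(tick)

    def snapshot(self, symbol: str) -> List[CachedTick]:
        """Consistent copy of the buffer, safe to iterate without the lock."""
        with self._lock:
            return list(self._buffers.get(symbol, ()))


rt = RecentTicks(maxlen=3)
for i in range(5):
    rt.append("ABC", (i, 10.0 + i, 1))
print([t[0] for t in rt.snapshot("ABC")])  # [2, 3, 4]
```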

Challenges in Building a Robust Tick Data Infrastructure

Building and maintaining a performant tick data storage architecture is fraught with practical challenges. Data quality is a perpetual concern: exchanges can send corrupted, duplicate, or out-of-sequence ticks, or stop sending data altogether without warning. Reconciling these discrepancies into a clean dataset requires careful validation and correction logic. Another major hurdle is the sheer cost of storing vast amounts of high-frequency data, particularly on cloud storage or specialized hardware. Managing latency across distributed components, from ingestion through processing to retrieval, is a continuous battle. Furthermore, evolving exchange APIs and market structures mean the ingestion layer must be flexible and adaptable. Together, these factors show why a ‘set it and forget it’ approach is dangerous: continuous monitoring, maintenance, and optimization are essential to keep tick data a reliable foundation for trading decisions rather than a source of hidden risk or operational burden.
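Duplicate and out-of-sequence ticks are often handled with a small reorder buffer at ingest. The sketch below is a minimal version under two assumptions: each message carries a feed sequence number, and reordering is bounded by a fixed `window` of messages (a hypothetical tuning parameter). It releases messages in sequence order, drops duplicates, and drops messages that arrive after a later sequence number has already been released.

```python
import heapq
from typing import Iterable, Iterator, List, Optional, Set, Tuple

Msg = Tuple[int, str]  # (seq, payload); illustrative layout


def reorder(messages: Iterable[Msg], window: int) -> Iterator[Msg]:
    """Release messages in sequence order, tolerating bounded reordering."""
    heap: List[Msg] = []          # min-heap keyed by sequence number
    buffered: Set[int] = set()    # sequence numbers currently in the heap
    last_released: Optional[int] = None
    for seq, payload in messages:
        if seq in buffered or (last_released is not None and seq <= last_released):
            # Duplicate, or too late to place in order.
            # A real system would count or log these rather than drop them silently.
            continue
        buffered.add(seq)
        heapq.heappush(heap, (seq, payload))
        if len(heap) > window:
            seq_out, payload_out = heapq.heappop(heap)
            buffered.discard(seq_out)
            last_released = seq_out
            yield seq_out, payload_out
    while heap:  # flush whatever is left at end of input
        yield heapq.heappop(heap)


msgs = [(1, "a"), (3, "c"), (2, "b"), (2, "b"), (5, "e"), (4, "d")]
print([seq for seq, _ in reorder(msgs, window=2)])  # [1, 2, 3, 4, 5]
```

The window trades latency for tolerance: every message waits until `window` later messages have arrived, so a live pipeline would usually add a time-based flush alongside the count-based one.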

Algovantis Approach: Integrating Tick Data into the Trading Workflow

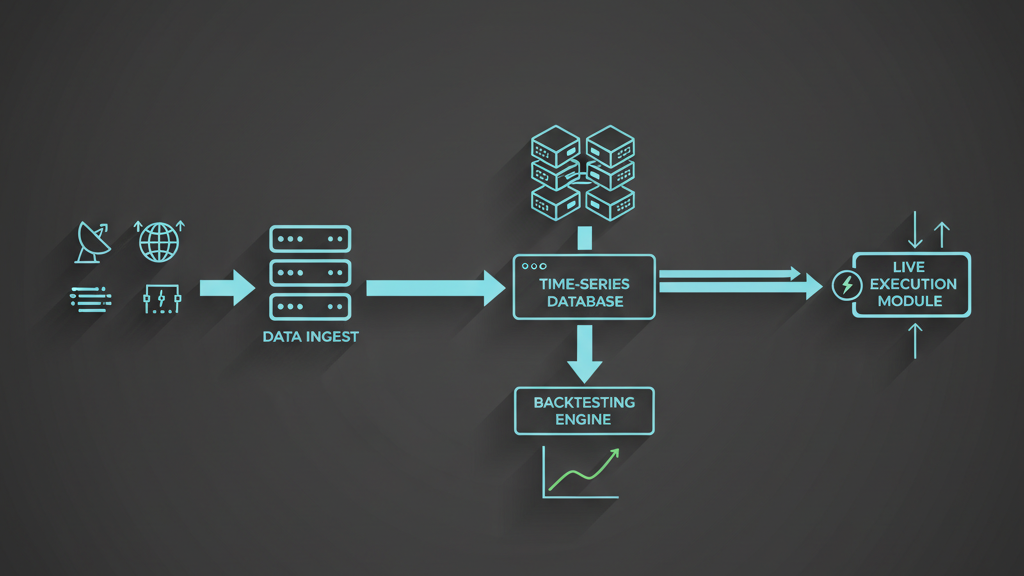

At Algovantis, our tick data storage architecture is designed as an integral part of the broader algorithmic trading ecosystem. We use a multi-database approach, often combining a specialized TSDB for raw tick storage with a relational database for metadata and a fast key-value store for derived real-time metrics. Ingestion is handled by a streaming architecture built on Kafka or a similar message broker, which decouples producers from consumers and sustains high throughput. This setup lets data flow from raw exchange feeds into our backtesting engines for strategy validation and, with appropriate real-time processing, into our live execution modules. We use advanced monitoring tools to track data integrity, latency, and system health across the entire pipeline. The ability to query historical data quickly for incident analysis, audit trails, and regulatory compliance is also built in, so every tick is accounted for and traceable throughout its lifecycle, giving comprehensive visibility into strategy behavior and market interactions.
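Latency tracking is one of the simpler monitoring pieces to illustrate. As a minimal sketch, not a description of our monitoring stack, the class below keeps a rolling window of feed latencies (receive time minus exchange timestamp) and reports nearest-rank p50/p99 with a simple alert flag. The window size, the alert threshold, and the assumption of synchronised clocks (for example via PTP) are illustrative.

```python
import math
from collections import deque
from typing import Deque, Dict


class LatencyMonitor:
    """Rolling feed-latency statistics in nanoseconds.

    Assumes exchange and receive clocks are synchronised; otherwise the
    absolute numbers are meaningless. Parameters are hypothetical.
    """

    def __init__(self, window: int = 10_000, alert_p99_ns: int = 1_000_000):
        self.samples: Deque[int] = deque(maxlen=window)  # oldest samples roll off
        self.alert_p99_ns = alert_p99_ns

    def record(self, exchange_ts_ns: int, receive_ts_ns: int) -> None:
        self.samples.append(receive_ts_ns - exchange_ts_ns)

    def percentile(self, q: float) -> int:
        """Nearest-rank percentile for q in (0, 100]."""
        if not 0 < q <= 100:
            raise ValueError("q must be in (0, 100]")
        if not self.samples:
            raise ValueError("no samples recorded")
        ordered = sorted(self.samples)
        rank = math.ceil(q * len(ordered) / 100)
        return ordered[max(rank, 1) - 1]

    def summary(self) -> Dict[str, object]:
        p50, p99 = self.percentile(50), self.percentile(99)
        return {"p50_ns": p50, "p99_ns": p99, "alert": p99 > self.alert_p99_ns}


mon = LatencyMonitor(window=1_000, alert_p99_ns=95)
for i in range(1, 101):
    mon.record(exchange_ts_ns=0, receive_ts_ns=i)
print(mon.summary())  # {'p50_ns': 50, 'p99_ns': 99, 'alert': True}
```

Sorting the whole window on every call is fine for a periodic report; a monitor queried per tick would more likely use a streaming histogram.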