Accurate market data is the bedrock of any algorithmic trading system. Without clean, reliable tick data, backtesting results become misleading, and live strategies can suffer from erroneous signals or flawed execution. Tick data, the most granular form of market information, is particularly susceptible to anomalies introduced by exchange feeds, data providers, network latency, or processing errors. These anomalies, ranging from misplaced timestamps to extreme price spikes, can skew calculations and lead to suboptimal or even catastrophic trading decisions. This article covers practical data cleaning methods for tick data anomaly detection and outlines a framework for building the robust pre-processing pipelines that high-frequency trading and quantitative analysis depend on.

Understanding Common Tick Data Anomalies

Tick data, by its very nature, is a continuous stream of discrete events, each carrying a timestamp, price, and volume. While this granularity offers precise insights, it also makes the data vulnerable to various types of anomalies that can invalidate analytical models. These issues are not always obvious and can propagate silently through a system if not properly addressed. Identifying the distinct categories of data errors is the first step in applying effective data cleaning methods for tick data anomaly detection. Understanding the root cause or typical manifestation helps in designing targeted filters rather than applying generic blanket rules that might discard valid information. For instance, a sudden price jump could be a genuine market event or a clear data error, and the cleaning process needs to differentiate these scenarios based on context and magnitude.

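- **Out-of-Order Ticks:** Data records whose timestamps go backwards relative to earlier records, often due to network latency or merging data from multiple sources. Equal timestamps are not necessarily an error, since several events can legitimately share one timestamp.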

- **Duplicate Ticks:** Identical price, volume, and timestamp entries that can skew volume calculations or trigger redundant events.

- **Extreme Price/Volume Spikes:** Unrealistic price movements or unusually high/low volumes that are many standard deviations away from recent averages, typically caused by data feed glitches or fat-finger errors.

- **Missing Ticks/Gaps:** Periods where no data is recorded for an instrument when it should be actively trading, leading to incomplete time series and biased analysis.

- **Timestamp Inconsistencies:** Microsecond-level discrepancies or jumps that can distort spread calculations or lead to incorrect order book reconstruction.

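- **Zero or Negative Price/Volume:** For most instruments, such as equities, these are clearly invalid data points that indicate fundamental data corruption. A few instruments, such as calendar spreads and certain commodity futures, can legitimately trade at zero or negative prices, so the check should be instrument-aware.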
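Several of the categories above can be detected with a single pass over the stream. The following is a minimal sketch, not a definitive implementation. The `Tick` record, its microsecond timestamp field, and both function names are hypothetical and chosen for illustration. It also assumes an instrument for which non-positive prices are invalid, which does not hold for every market.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Tick:
    ts_us: int      # timestamp in microseconds (assumed unit)
    price: float
    volume: float


def label_structural_anomalies(ticks: List[Tick]) -> List[Tuple[int, str]]:
    """Return (index, label) pairs for ticks that look structurally invalid."""
    findings = []
    seen = set()     # exact (timestamp, price, volume) records seen so far
    max_ts = None    # latest timestamp seen so far
    for i, t in enumerate(ticks):
        if t.price <= 0 or t.volume <= 0:
            findings.append((i, "non_positive_value"))
        # Equal timestamps are tolerated; only a backwards step is flagged.
        if max_ts is not None and t.ts_us < max_ts:
            findings.append((i, "out_of_order"))
        if t in seen:
            findings.append((i, "duplicate"))
        seen.add(t)
        max_ts = t.ts_us if max_ts is None else max(max_ts, t.ts_us)
    return findings


def find_gaps(ticks: List[Tick], max_gap_us: int) -> List[Tuple[int, int]]:
    """Return (earlier_ts, later_ts) pairs further apart than max_gap_us."""
    ordered = sorted(t.ts_us for t in ticks)
    return [(a, b) for a, b in zip(ordered, ordered[1:]) if b - a > max_gap_us]
```

The functions only label suspicious records. Whether to drop, reorder, or repair them is left to a later stage, and a sensible `max_gap_us` depends on how actively the instrument trades. Exact-match duplicate detection can also flag genuine trades that happen to share all three fields, so a feed-provided sequence number or trade ID, where available, is a safer key.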

Statistical Approaches for Anomaly Detection

Statistical methods form the backbone of many data cleaning methods for tick data anomaly detection, offering a quantitative way to flag suspicious entries. These techniques rely on the assumption that tick data generally follows certain distributions or behaves within expected bounds. Deviations beyond these statistical norms are then marked as potential anomalies. For prices, this often involves calculating rolling standard deviations or examining price changes relative to recent averages. Volume anomalies can be detected similarly, by comparing current trade volumes to historical averages or typical trade sizes. The challenge lies in setting appropriate thresholds; too strict, and genuine market movements might be filtered out; too lenient, and critical errors persist. A dynamic threshold, perhaps based on market volatility or instrument liquidity, often performs better than a static one. Implementing these methods requires careful consideration of the look-back window and the sensitivity of the statistical metric, as these parameters directly influence detection accuracy and false positive rates.
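As an illustration of the rolling-statistics idea, here is a minimal sketch of a trailing z-score filter on prices. The function name and the parameters `window`, `k`, and `sigma_floor` are assumptions made for this example, and the default values are placeholders rather than recommendations.

```python
from collections import deque
from statistics import mean, stdev
from typing import List, Sequence


def rolling_zscore_flags(
    prices: Sequence[float],
    window: int = 20,
    k: float = 5.0,
    sigma_floor: float = 1e-9,
) -> List[bool]:
    """Flag prices more than k rolling standard deviations from the rolling mean.

    The window holds only previously accepted prices, so a flagged spike
    does not inflate the statistics used to judge the ticks that follow.
    """
    if window < 2:
        raise ValueError("window must be at least 2")
    history = deque(maxlen=window)
    flags = []
    for p in prices:
        if len(history) < window:
            flags.append(False)      # not enough context to judge yet
            history.append(p)
            continue
        mu = mean(history)
        sigma = max(stdev(history), sigma_floor)  # avoid a zero-width band
        is_outlier = abs(p - mu) > k * sigma
        flags.append(is_outlier)
        if not is_outlier:
            history.append(p)
    return flags
```

Excluding flagged ticks from the window is a design choice with a known downside: after a genuine regime shift, every new price may be flagged because the window never updates. A production filter would need a release rule, such as accepting the new level after a number of consecutive flags. Setting `sigma_floor` to something like the instrument's tick size keeps quiet periods from producing an unrealistically narrow band.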

Rule-Based Filtering and Thresholding

Beyond statistical models, rule-based filtering provides a direct and often faster way to clean tick data. The rules are typically derived from domain knowledge, market microstructure, and observations of common data feed errors. They set hard thresholds or logical conditions that a tick must satisfy to be considered valid. While less adaptive than statistical methods, rule-based filters are highly effective at catching clear-cut, egregious errors that would otherwise severely corrupt a dataset. For example, a negative volume is always an error, and for most instruments, such as equities, so is a zero or negative price; such ticks can be discarded immediately. The key is to design a hierarchy of rules, starting with the most certain errors and progressing to more nuanced checks, so that the risk of inadvertently removing valid data stays low. Regular review and adjustment of the thresholds is crucial, especially when market conditions or instrument characteristics change.

- **Price Range Bounds:** Discard ticks where the price falls outside a predefined minimum/maximum range (e.g., price < 0.01 or price > 1,000,000).

- **Max Price Jump/Drop:** Filter out ticks where the price change from the previous tick exceeds a certain percentage or absolute value (e.g., > 1% in 1 millisecond).

- **Minimum/Maximum Volume:** Remove ticks with zero volume or volumes exceeding typical exchange limits for a single trade.

- **Timestamp Order Enforcement:** Reorder or flag ticks whose timestamps go backwards, so that the sequence is non-decreasing; this often involves assigning a sequential ID after sorting so that ticks sharing a timestamp keep a stable order.

- **Bid-Ask Spread Sanity Checks:** Ensure bid price is always less than or equal to ask price, and the spread is within reasonable bounds (e.g., not negative, not excessively large for liquid assets).

- **Consecutive Tick Repetition:** Identify and consolidate or remove duplicate ticks with identical timestamps, prices, and volumes to avoid overcounting.
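The rules above can be arranged as an ordered chain that reports the first rule a tick violates. This is a minimal sketch under stated assumptions: `QuoteTick`, `RuleConfig`, and every limit in it are hypothetical, and the thresholds are illustrative values that would need tuning per instrument.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class QuoteTick:
    ts_us: int     # timestamp in microseconds (assumed unit)
    bid: float
    ask: float
    last: float    # last traded price
    volume: float  # size of the last trade


@dataclass(frozen=True)
class RuleConfig:
    min_price: float = 0.01
    max_price: float = 1_000_000.0
    max_volume: float = 1_000_000.0
    max_spread_pct: float = 5.0
    max_jump_pct: float = 1.0
    jump_window_us: int = 1_000  # jump rule applies within 1 ms


def first_violation(
    tick: QuoteTick, prev: Optional[QuoteTick], cfg: RuleConfig
) -> Optional[str]:
    """Return the name of the first violated rule, or None if the tick passes.

    Rules run from the most certain errors to the more nuanced ones.
    `prev` is the last accepted tick, so its prices are known to be positive.
    """
    if not (cfg.min_price <= tick.last <= cfg.max_price):
        return "price_out_of_bounds"
    if tick.volume <= 0 or tick.volume > cfg.max_volume:
        return "volume_out_of_bounds"
    if tick.bid <= 0 or tick.ask <= 0:
        return "non_positive_quote"
    if tick.bid > tick.ask:
        return "crossed_quote"
    mid = (tick.bid + tick.ask) / 2
    if (tick.ask - tick.bid) / mid * 100 > cfg.max_spread_pct:
        return "spread_too_wide"
    if prev is not None:
        if tick.ts_us < prev.ts_us:
            return "out_of_order"
        elapsed = tick.ts_us - prev.ts_us
        jump_pct = abs(tick.last - prev.last) / prev.last * 100
        if elapsed <= cfg.jump_window_us and jump_pct > cfg.max_jump_pct:
            return "price_jump"
    return None


def filter_ticks(
    ticks: List[QuoteTick], cfg: RuleConfig
) -> Tuple[List[QuoteTick], List[Tuple[QuoteTick, str]]]:
    """Split ticks into accepted ticks and (tick, reason) rejections."""
    accepted, rejected = [], []
    prev = None
    for t in ticks:
        reason = first_violation(t, prev, cfg)
        if reason is None:
            accepted.append(t)
            prev = t
        else:
            rejected.append((t, reason))
    return accepted, rejected
```

Comparing each tick against the last accepted tick, rather than the last raw tick, prevents one bad print from causing the following good tick to be rejected as a jump in the opposite direction. The sketch discards out-of-order ticks for simplicity; a batch pipeline could sort them back into place instead.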

Time-Series Based Anomaly Detection Techniques

Advanced data cleaning methods for tick data anomaly detection often leverage time-series analysis to identify patterns that deviate from expected temporal sequences. Unlike point-in-time statistical checks, these methods consider the context of preceding ticks and, in batch processing, succeeding ticks as well. Techniques like Exponentially Weighted Moving Averages (EWMA) can be used to track the ‘normal’ behavior of prices or volumes, flagging any tick that falls outside a certain multiple of the EWMA standard deviation. Kalman filters can also be employed to estimate the true state of a price series, correcting for noise and identifying points that significantly diverge from the filter’s prediction. For more complex patterns, machine learning algorithms such as Isolation Forests or One-Class SVMs can learn the ‘normal’ structure of tick data and detect outliers in multi-dimensional feature spaces (e.g., considering price, volume, and spread simultaneously). The computational cost of these methods can be higher, making real-time application challenging without optimized implementations, but they can catch subtle, correlated anomalies that single-variable checks miss.
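The EWMA approach can be sketched with the standard incremental recursions for an exponentially weighted mean and variance. The function name and the parameters `alpha`, `k`, `warmup`, and `sigma_floor` are assumptions for illustration, and the defaults are placeholders rather than tuned values.

```python
import math
from typing import List, Sequence


def ewma_flags(
    prices: Sequence[float],
    alpha: float = 0.1,
    k: float = 4.0,
    warmup: int = 10,
    sigma_floor: float = 1e-6,
) -> List[bool]:
    """Flag prices deviating more than k EWMA standard deviations from the EWMA mean."""
    flags = []
    ew_mean = None
    ew_var = 0.0
    for i, p in enumerate(prices):
        if ew_mean is None:
            ew_mean = p              # seed the mean with the first price
            flags.append(False)
            continue
        dev = p - ew_mean
        sigma = max(math.sqrt(ew_var), sigma_floor)
        # During warm-up the variance estimate is unreliable, so nothing is flagged.
        is_outlier = i >= warmup and abs(dev) > k * sigma
        flags.append(is_outlier)
        if not is_outlier:
            # Incremental EWMA updates; flagged ticks do not move the estimates.
            ew_mean += alpha * dev
            ew_var = (1 - alpha) * (ew_var + alpha * dev * dev)
    return flags
```

A smaller `alpha` gives a longer effective memory and a smoother band, while a larger one adapts faster at the cost of more noise. As with any filter that ignores flagged ticks, a persistent level shift would keep being flagged until a release rule lets the estimates catch up.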

Impact of Dirty Tick Data on Backtesting and Live Execution

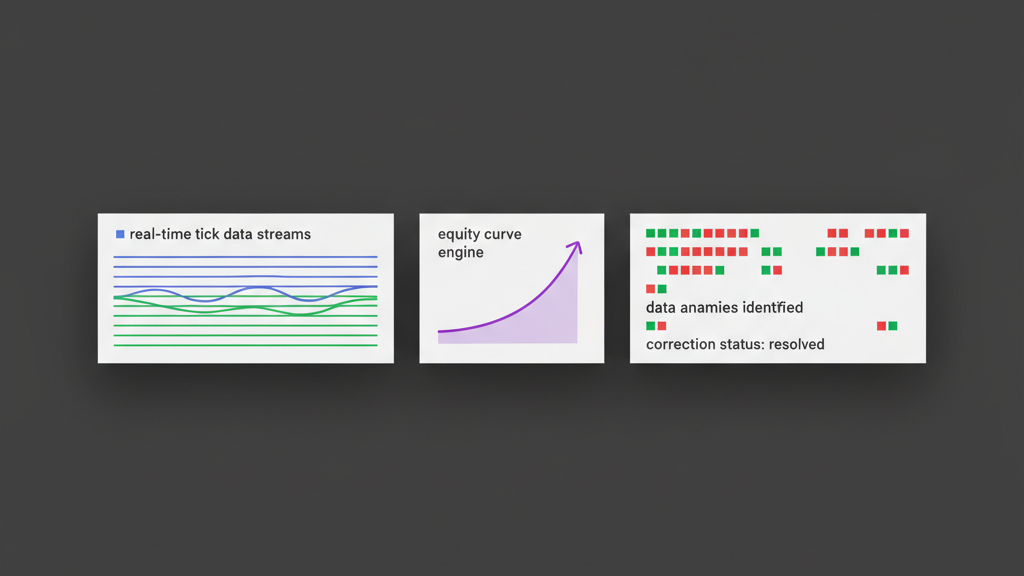

The consequences of neglecting robust data cleaning methods for tick data anomaly detection extend directly into both the backtesting phase and live trading. During backtesting, erroneous tick data can lead to inflated profits, unrealistic slippage calculations, or incorrect signal generation, giving a false sense of strategy viability. A strategy might appear profitable due to a single phantom price spike that generates an impossible trade. In live execution, the impact is more immediate and potentially costly. Malformed ticks can trigger trades at invalid prices, cause orders to be placed with incorrect quantities, or lead to rapid, unintended position changes. Such errors erode confidence in the trading system and necessitate significant manual intervention, negating the benefits of automation. Addressing these data quality issues pre-emptively is a critical risk management component, preventing financial losses and preserving the integrity of the algorithmic decision-making process.

- **Distorted P&L Curves:** Anomalies can create ‘phantom’ profits or losses in backtests, making strategy performance metrics unreliable.

- **Incorrect Slippage Modeling:** Unrealistic gaps or spikes in historical data lead to underestimation or overestimation of execution costs.

- **False Trading Signals:** Erroneous price or volume ticks can prematurely trigger entry/exit conditions, leading to bad trades in live systems.

- **Order Book Inaccuracies:** Corrupt bid/ask data can lead to mispriced limit orders or poor execution logic based on a skewed market view.

- **Increased Latency/Resource Usage:** Processing and validating large volumes of dirty data can consume excessive computational resources and introduce delays.

- **Breached Risk Limits:** Uncontrolled execution due to bad data can lead to trades exceeding predefined risk parameters, resulting in significant drawdowns.

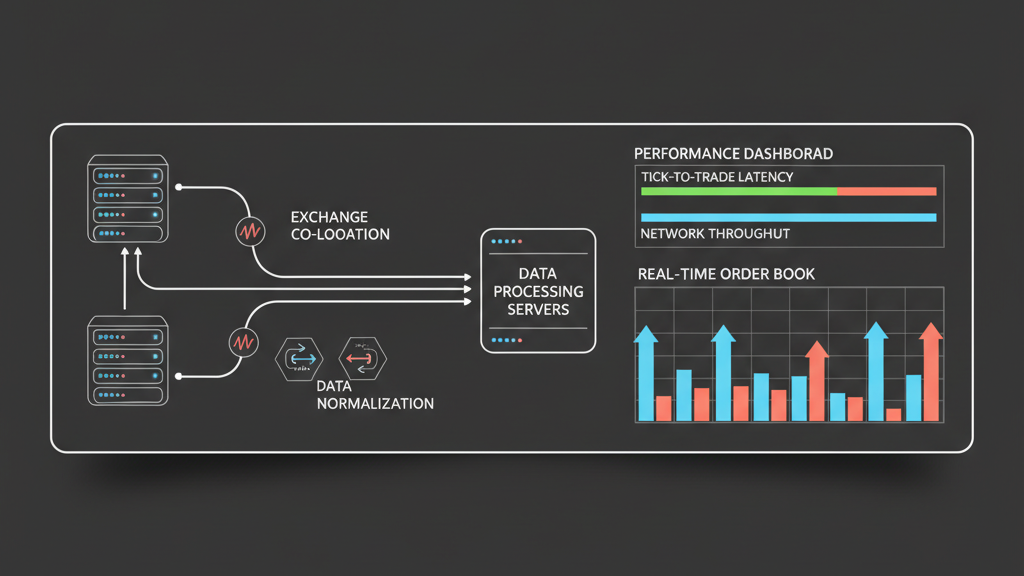

Implementing a Robust Data Cleaning Workflow

Integrating data cleaning methods for tick data anomaly detection into an automated trading workflow requires a systematic approach. The typical pipeline involves ingesting raw data, applying a series of filters and corrections, storing the cleaned data, and then making it accessible for backtesting or live strategy consumption. This pre-processing layer should be decoupled from the core strategy logic but tightly integrated with the data ingestion engine. It’s crucial to log all detected anomalies and the actions taken (e.g., corrected, discarded) for auditability and to refine the cleaning rules over time. Real-time versus batch processing, the computational overhead of complex filters, and the trade-off between speed and accuracy all have to be balanced. For high-frequency systems, some initial, computationally light filters might run in real-time, with more intensive corrections applied asynchronously to historical data. This tiered arrangement keeps the cleaning process itself from introducing unacceptable latency into the trading loop.
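A cleaning stage with an audit trail can be sketched as a list of small check functions applied in order. Everything here is hypothetical: the `(timestamp_us, price, volume)` tuple layout, the check names, and the `tick_cleaning` logger name are illustrative choices, and a real pipeline would persist the audit records rather than keep them in memory.

```python
import logging
from typing import Callable, Dict, Iterable, List, Optional, Tuple

logger = logging.getLogger("tick_cleaning")

Tick = Tuple[int, float, float]  # (timestamp_us, price, volume), assumed layout
Check = Callable[[Tick, Optional[Tick]], Optional[str]]


def positive_values(tick: Tick, prev: Optional[Tick]) -> Optional[str]:
    """Reject ticks with a non-positive price or volume."""
    return "non_positive_value" if tick[1] <= 0 or tick[2] <= 0 else None


def monotonic_time(tick: Tick, prev: Optional[Tick]) -> Optional[str]:
    """Reject ticks older than the last accepted tick."""
    return "out_of_order" if prev is not None and tick[0] < prev[0] else None


def clean(
    raw: Iterable[Tick], checks: List[Check]
) -> Tuple[List[Tick], List[Dict[str, object]]]:
    """Apply checks in order; return cleaned ticks and an audit trail."""
    cleaned: List[Tick] = []
    audit: List[Dict[str, object]] = []
    prev: Optional[Tick] = None
    for tick in raw:
        reason = None
        for check in checks:
            reason = check(tick, prev)
            if reason is not None:
                break                # the first failing check decides
        if reason is not None:
            audit.append({"tick": tick, "reason": reason, "action": "discarded"})
            logger.warning("discarded %s: %s", tick, reason)
            continue
        cleaned.append(tick)
        prev = tick
    return cleaned, audit
```

Because each check is an independent function, cheap checks can run in the live path while heavier ones are added only to the batch configuration. The audit list records what was removed and why, which is the information needed to revisit thresholds later.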

Challenges and Best Practices in Tick Data Cleaning

Despite sophisticated data cleaning methods for tick data anomaly detection, several challenges persist. Distinguishing genuine market microstructure events (like HFT market making or rapid price discovery) from actual data errors is often difficult. Over-filtering can lead to data loss and distort genuine market dynamics, while under-filtering leaves critical vulnerabilities. Another challenge is dealing with the sheer volume and velocity of tick data, where real-time processing demands highly optimized and distributed systems. Best practices include maintaining multiple data sources for cross-validation, applying checksums and data integrity checks during ingestion, and regularly comparing cleaned data against trusted historical archives. Moreover, a comprehensive logging system for every identified anomaly and the applied correction is non-negotiable for debugging and continuous improvement. The cleaning process should also be iterative and adaptable, capable of learning from new types of anomalies or adjusting thresholds based on evolving market conditions. Ultimately, the goal is to produce a dataset that is not only free of obvious errors but also accurately reflects the true market activity at its most granular level, enabling confident strategy development and deployment.
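Cross-validation between two data sources can be sketched as a keyed comparison. This example assumes both feeds expose a shared, exchange-assigned trade ID, which is a simplification, since many feeds would need fuzzy matching on timestamp and price instead. The function name and the `rel_tol` parameter are hypothetical.

```python
from typing import Dict, List


def cross_validate(
    primary: Dict[str, float],
    secondary: Dict[str, float],
    rel_tol: float = 1e-4,
) -> Dict[str, List]:
    """Compare prices from two feeds keyed by a shared trade ID."""
    report: Dict[str, List] = {
        "missing_in_primary": [],
        "missing_in_secondary": [],
        "price_mismatch": [],
    }
    for trade_id in sorted(primary.keys() | secondary.keys()):
        if trade_id not in primary:
            report["missing_in_primary"].append(trade_id)
        elif trade_id not in secondary:
            report["missing_in_secondary"].append(trade_id)
        else:
            a, b = primary[trade_id], secondary[trade_id]
            # Relative tolerance absorbs rounding differences between vendors.
            if abs(a - b) > rel_tol * max(abs(a), abs(b)):
                report["price_mismatch"].append((trade_id, a, b))
    return report
```

A disagreement does not say which feed is wrong. It only marks records that deserve a closer look, ideally against a third reference such as a trusted historical archive.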