In algorithmic trading, the quality and consistency of your market data are paramount. Open, High, Low, Close, Volume (OHLCV) data is the bedrock of most technical analysis, indicator calculations, and strategy backtesting. However, sourcing it from multiple exchanges and third-party vendors introduces a wide range of inconsistencies. Each provider may structure, timestamp, or even define OHLCV bars differently, which makes direct comparison or aggregation difficult. Without a robust process for normalizing OHLCV data across exchanges and vendors, your trading algorithms can end up operating on flawed assumptions, producing inaccurate backtests and unpredictable live performance. This isn’t a theoretical problem; it’s a daily operational challenge for any serious quantitative trading firm.

The Inherent Challenge of Disparate OHLCV Sources

When integrating OHLCV market data from various sources, whether direct exchange feeds or aggregated vendor products like Refinitiv, Bloomberg, or Polygon.io, you quickly encounter a lack of standardization. Each source often presents data with its own field names (e.g., ‘priceOpen’ vs. ‘openPrice’), data types (float vs. decimal, string timestamps vs. epoch integers), and implicit rules for bar construction. Some vendors include pre-market or post-market activity in daily bars, while others strictly adhere to regular trading hours. Differences in trade aggregation, such as whether block trades or odd lots count toward volume, create further subtle discrepancies. Combining or cross-referencing this data without a dedicated normalization layer leads to data integrity issues that propagate through your entire trading stack and undermine the reliability of your models.

Timestamp Synchronization and Granularity Management

One of the most insidious challenges in OHLCV market data normalization is managing timestamps and bar granularity. Two sources might both claim to provide a ‘daily bar’ for a stock, yet one could define the day’s close at 16:00 ET while another uses 17:00 ET for internal aggregation purposes, or delivers data in a different timezone without clear labeling. For intraday data, the precise start and end times of a 1-minute or 5-minute bar can vary, often because of how trades are bucketed (e.g., inclusive or exclusive end timestamps). Aligning these precisely across datasets is critical, especially for high-frequency strategies or those involving inter-market arbitrage, where microsecond precision matters. Misaligned timestamps can create look-ahead bias in backtests or cause signals to trigger at the wrong time in live trading, leading to significant performance degradation.
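
As a minimal sketch of the alignment problem, the Python snippet below converts exchange-local timestamps to UTC epoch milliseconds and assigns timestamps to bars using one explicit convention: half-open intervals that include the start and exclude the end. The function names (`to_utc_ms`, `bar_start_ms`, `end_label_to_start_ms`) and the choice of convention are illustrative assumptions, not a standard; the point is to pick one rule and apply it to every source.

```python
from datetime import datetime, timedelta, timezone
from zoneinfo import ZoneInfo

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
ONE_MINUTE_MS = 60_000


def to_utc_ms(local_iso: str, tz_name: str) -> int:
    """Convert a naive local ISO-8601 timestamp to UTC epoch milliseconds."""
    naive = datetime.fromisoformat(local_iso)
    if naive.tzinfo is not None:
        raise ValueError("expected a naive timestamp; the zone comes from tz_name")
    aware = naive.replace(tzinfo=ZoneInfo(tz_name))
    # Integer timedelta division avoids float rounding on sub-second values.
    return (aware - EPOCH) // timedelta(milliseconds=1)


def bar_start_ms(ts_utc_ms: int, bar_ms: int) -> int:
    """Start of the half-open bar [start, start + bar_ms) containing ts_utc_ms.

    Assumes short intraday intervals (e.g. 1 or 5 minutes) aligned to the epoch.
    """
    return ts_utc_ms - ts_utc_ms % bar_ms


def end_label_to_start_ms(end_label_ms: int, bar_ms: int) -> int:
    """Re-key a bar that a source labels by its end time to its start time."""
    return end_label_ms - bar_ms


# 16:00 New York is 21:00 UTC in winter (EST) and 20:00 UTC in summer (EDT),
# so a fixed offset would silently shift half a year of bars.
winter_close = to_utc_ms("2024-01-12T16:00:00", "America/New_York")
summer_close = to_utc_ms("2024-07-12T16:00:00", "America/New_York")

# Under the half-open convention, a trade stamped exactly on a minute
# boundary belongs to the bar that starts there, not the one that ends there.
boundary_bar = bar_start_ms(winter_close, ONE_MINUTE_MS)
previous_bar = bar_start_ms(winter_close - 1, ONE_MINUTE_MS)
```

Using named IANA zones rather than fixed offsets keeps daylight-saving transitions correct, and re-keying end-labeled bars to their start time before any join prevents an off-by-one-bar shift between sources.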

Corporate Actions and Symbol Mapping Hurdles

Corporate actions like stock splits, dividends, mergers, and delistings pose a profound challenge to maintaining consistent historical OHLCV data. A 2-for-1 stock split, for example, halves the share price and doubles the share count going forward, so all prior bars must be adjusted backward (prices divided by two, volumes multiplied by two) to create a continuous, comparable price series. Dividends similarly necessitate adjustments, often to the ‘adjusted close’ price, to accurately reflect total return. Different vendors handle these adjustments in distinct ways, or sometimes not at all, leaving the onus on the user. Furthermore, instrument identifiers are rarely universal; the same security can carry different tickers or suffixes across venues and vendors, or a vendor-specific ID that doesn’t map directly to a standard ISIN or CUSIP. A robust normalization pipeline must include a sophisticated corporate actions engine and a comprehensive symbol mapping service to ensure you’re always comparing the correct, adjusted historical data.
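
To make the split arithmetic concrete, here is a minimal Python sketch of backward adjustment for a single split. The `Bar` type and `back_adjust_for_split` function are hypothetical names introduced for illustration, and the sketch covers splits only: dividend adjustments and vendor-specific conventions are left out.

```python
from dataclasses import dataclass, replace
from decimal import Decimal
from typing import Iterable, List


@dataclass(frozen=True)
class Bar:
    ts_utc_ms: int
    open: Decimal
    high: Decimal
    low: Decimal
    close: Decimal
    volume: int


def back_adjust_for_split(
    bars: Iterable[Bar], ex_ts_utc_ms: int, ratio: Decimal
) -> List[Bar]:
    """Adjust every bar before the ex-date of a split.

    ratio is new shares per old share, e.g. Decimal("2") for a 2-for-1 split.
    Bars on or after ex_ts_utc_ms are already quoted post-split and are kept.
    """
    if ratio <= 0:
        raise ValueError("split ratio must be positive")
    adjusted = []
    for bar in bars:
        if bar.ts_utc_ms < ex_ts_utc_ms:
            bar = replace(
                bar,
                open=bar.open / ratio,
                high=bar.high / ratio,
                low=bar.low / ratio,
                close=bar.close / ratio,
                # int() truncates fractional shares for ratios such as 3-for-2.
                volume=int(bar.volume * ratio),
            )
        adjusted.append(bar)
    return adjusted


D = Decimal
raw_bars = [
    Bar(1, D("100"), D("102"), D("98"), D("100"), 1000),  # pre-split
    Bar(2, D("50"), D("51"), D("49"), D("50"), 2000),     # post-split
]
adjusted_bars = back_adjust_for_split(raw_bars, ex_ts_utc_ms=2, ratio=D("2"))
```

After adjustment the pre-split bar closes at 50 with a volume of 2000, so the series no longer shows an artificial 50% drop at the ex-date. With several splits in the history, the same function would be applied once per split.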

- Accurately adjusting historical OHLCV for splits and dividends is essential for simulating realistic equity curves and P&L.

- Maintaining a dynamic symbol mapping database (e.g., RIC to ISIN to vendor ID) is crucial for consistent instrument identification across sources and time.

- Reconciling vendor-specific corporate action logic or applying a custom adjustment methodology is often necessary for data consistency.
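
The symbol mapping point can be sketched as a lookup keyed by vendor, vendor symbol, and date, since tickers are renamed and reused over time. Everything in this Python example is hypothetical: the `SymbolRecord` and `SymbolMap` names, the vendor labels, and the placeholder ISINs are invented for illustration, and a production service would typically sit on a database rather than an in-memory list.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, Optional


@dataclass(frozen=True)
class SymbolRecord:
    vendor: str
    vendor_symbol: str
    isin: str                  # canonical identifier used internally
    valid_from: date
    valid_to: Optional[date]   # None means the mapping is still active


class SymbolMap:
    def __init__(self, records: Iterable[SymbolRecord]) -> None:
        self._records = list(records)

    def resolve(self, vendor: str, vendor_symbol: str, on: date) -> Optional[str]:
        """Return the ISIN a vendor symbol referred to on a given date."""
        for rec in self._records:
            if (
                rec.vendor == vendor
                and rec.vendor_symbol == vendor_symbol
                and rec.valid_from <= on
                and (rec.valid_to is None or on <= rec.valid_to)
            ):
                return rec.isin
        return None  # unknown symbol, or no mapping valid on that date


# Placeholder data: ticker "ABC" is reused by a different company after a gap.
symbol_map = SymbolMap([
    SymbolRecord("vendor_a", "ABC", "XX0000000001", date(2015, 1, 1), date(2019, 12, 31)),
    SymbolRecord("vendor_a", "ABC", "XX0000000002", date(2021, 1, 1), None),
    SymbolRecord("vendor_b", "ABC.N", "XX0000000002", date(2021, 1, 1), None),
])

old_company = symbol_map.resolve("vendor_a", "ABC", date(2018, 6, 1))
new_company = symbol_map.resolve("vendor_a", "ABC", date(2022, 6, 1))
same_company_other_vendor = symbol_map.resolve("vendor_b", "ABC.N", date(2022, 6, 1))
```

Resolving on the bar's date rather than today's date is the design choice that matters here: it keeps a reused ticker from silently stitching two unrelated companies into one price history.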

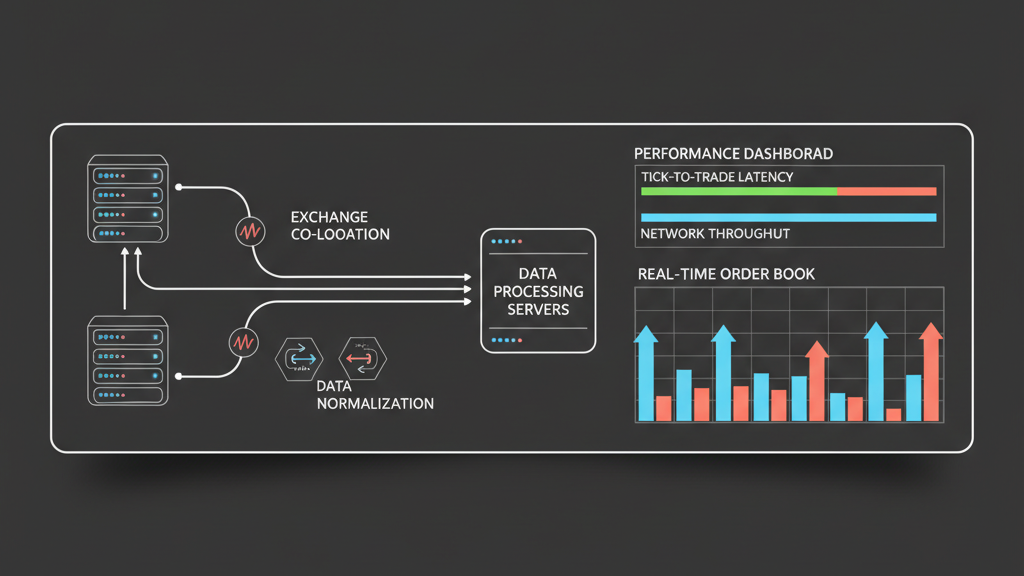

Building a Standardized Data Model and Ingestion Pipeline

To effectively achieve OHLCV market data normalization, a well-defined internal data model and an extensible ingestion pipeline are indispensable. Start by defining a canonical schema for your OHLCV data, specifying precise data types, field names (e.g., `timestamp_utc_ms`, `open`, `high`, `low`, `close`, `adjusted_close`, `volume`, `currency`, `exchange_id`), and ensuring all timestamps are normalized to a single reference (e.g., UTC epoch milliseconds). Each data source then requires a dedicated adapter responsible for parsing its native format and transforming it into this canonical structure. This transformation layer should handle type conversions, unit scaling, missing data imputation, and explicit timezone conversions. Implementing robust error handling and retry mechanisms within these adapters is also critical to manage transient API failures or malformed data packets from upstream providers, preventing silent data gaps or corruptions.
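
The following Python sketch shows one possible shape for the canonical schema and two source adapters. The field names follow the schema listed above; the vendor payload layouts (`priceOpen`, `openPrice`, `time`, `t`, and so on), the adapter names, and the validation rules are assumptions made up for illustration and do not describe any real vendor's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from decimal import Decimal
from typing import Any, Mapping, Optional

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


@dataclass(frozen=True)
class CanonicalBar:
    timestamp_utc_ms: int
    open: Decimal
    high: Decimal
    low: Decimal
    close: Decimal
    adjusted_close: Optional[Decimal]
    volume: int
    currency: str
    exchange_id: str

    def __post_init__(self) -> None:
        # Reject malformed bars at the boundary instead of storing them.
        body_low = min(self.open, self.close)
        body_high = max(self.open, self.close)
        if not (self.low <= body_low and body_high <= self.high):
            raise ValueError("high/low do not bracket open/close")
        if self.volume < 0:
            raise ValueError("volume must be non-negative")


def _dec(value: Any) -> Decimal:
    # Going through str() avoids binary float artifacts such as 185.099999...
    return Decimal(str(value))


def adapt_vendor_a(raw: Mapping[str, Any]) -> CanonicalBar:
    """Hypothetical vendor A: ISO-8601 timestamps with offset, string numbers."""
    ts = datetime.fromisoformat(raw["time"])
    if ts.tzinfo is None:
        raise ValueError("vendor A timestamp is missing a UTC offset")
    return CanonicalBar(
        timestamp_utc_ms=(ts - EPOCH) // timedelta(milliseconds=1),
        open=_dec(raw["priceOpen"]),
        high=_dec(raw["priceHigh"]),
        low=_dec(raw["priceLow"]),
        close=_dec(raw["priceClose"]),
        adjusted_close=None,
        volume=int(raw["vol"]),
        currency=raw["ccy"],
        exchange_id=raw["venue"],
    )


def adapt_vendor_b(raw: Mapping[str, Any]) -> CanonicalBar:
    """Hypothetical vendor B: epoch seconds, float prices, different names."""
    return CanonicalBar(
        timestamp_utc_ms=int(raw["t"]) * 1000,
        open=_dec(raw["openPrice"]),
        high=_dec(raw["highPrice"]),
        low=_dec(raw["lowPrice"]),
        close=_dec(raw["closePrice"]),
        adjusted_close=None,
        volume=int(raw["volume"]),
        currency=raw["currency"],
        exchange_id=raw["exchange"],
    )


bar_a = adapt_vendor_a({
    "time": "2024-01-12T21:00:00+00:00", "priceOpen": "185.10",
    "priceHigh": "186.00", "priceLow": "184.50", "priceClose": "185.90",
    "vol": "1200", "ccy": "USD", "venue": "XNAS",
})
bar_b = adapt_vendor_b({
    "t": 1705093200, "openPrice": 185.1, "highPrice": 186.0,
    "lowPrice": 184.5, "closePrice": 185.9,
    "volume": 1200, "currency": "USD", "exchange": "XNAS",
})
```

Once both payloads pass through their adapters, `bar_a` and `bar_b` compare equal, which is the property downstream code relies on. Keeping validation in the canonical type means every adapter gets the same checks without repeating them.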

Impact on Backtesting and Execution Reliability

The direct consequence of inadequate OHLCV market data normalization is a significant degradation in both backtesting accuracy and live execution reliability. If your historical data is inconsistent or misaligned, your backtests will produce overly optimistic or pessimistic results that do not reflect actual market conditions, and you risk fitting strategies to data artifacts that then fail in production. For instance, a strategy relying on a specific price pattern across multiple markets might fail if the OHLCV bars from different exchanges aren’t perfectly time-aligned. In live trading, discrepancies between the normalized data used for signal generation and the actual market data received can lead to stale signals, incorrect order sizing, or even trades placed at prices significantly different from the model’s expectation. This increases slippage and can severely impact a strategy’s profitability and overall operational integrity, turning a theoretically sound algorithm into a liability.

- Flawed OHLCV data normalization directly leads to inaccurate backtesting, masking true strategy performance and risk profiles.

- Inconsistent real-time OHLCV across feeds can cause signal timing issues, so orders are generated late or against stale prices, which increases slippage.

- Routinely validate live trading P&L against backtest projections, identifying potential data normalization issues as a root cause for discrepancies.
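
One way to catch such discrepancies early is a routine reconciliation of the same instrument across two normalized sources. The Python sketch below compares close prices keyed by bar start time and reports bars present in only one source as well as bars whose closes differ by more than a relative tolerance. The function name `reconcile_closes` and the default tolerance are illustrative assumptions; a suitable threshold depends on the instrument and the sources involved.

```python
from typing import Dict, List, Tuple


def reconcile_closes(
    primary: Dict[int, float],
    secondary: Dict[int, float],
    rel_tol: float = 1e-4,
) -> Tuple[List[int], List[Tuple[int, float, float]]]:
    """Compare close prices from two sources keyed by UTC bar start (ms).

    Returns (timestamps present in only one source,
             [(timestamp, primary_close, secondary_close), ...] beyond rel_tol).
    """
    only_in_one = sorted(set(primary) ^ set(secondary))
    mismatched = []
    for ts in sorted(set(primary) & set(secondary)):
        a, b = primary[ts], secondary[ts]
        if abs(a - b) > rel_tol * max(abs(a), abs(b)):
            mismatched.append((ts, a, b))
    return only_in_one, mismatched


feed_a = {0: 100.0, 60_000: 101.0, 120_000: 102.0}
feed_b = {0: 100.0, 60_000: 101.5, 180_000: 103.0}

gaps, mismatches = reconcile_closes(feed_a, feed_b)
```

For the sample feeds, `gaps` lists the bars at 120000 and 180000 that appear in only one source, and `mismatches` flags the 60000 bar where the closes disagree. A recurring pattern of gaps offset by exactly one bar often points back to a timestamp-labeling difference rather than missing data.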