In algorithmic trading, especially within high-frequency or latency-sensitive strategies, execution slippage isn’t just a minor inconvenience; it’s a direct erosion of profit margins. The difference between your intended entry or exit price and the actual executed price can significantly impact overall strategy performance, often turning winning backtests into losing live trades. Successfully reducing execution slippage with queue position and latency controls requires a deep understanding of market microstructure, meticulous system engineering, and a relentless focus on minimizing every microsecond in the order lifecycle. This isn’t about magical solutions, but rather a systematic approach to optimizing how and when your orders hit the exchange, aiming to capture the best available price at the moment of execution.

The Microstructure of Execution Slippage

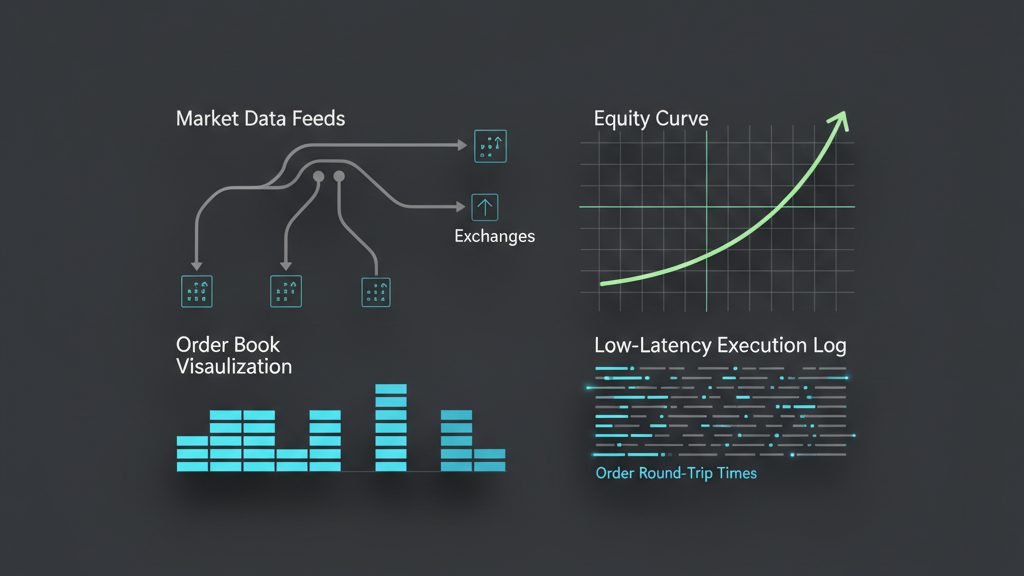

Execution slippage is often simplified as the difference between the displayed quote and the fill price, but its roots are far more complex, deeply embedded in market microstructure. For market orders, slippage arises from immediate liquidity consumption; if your order size exceeds the available liquidity at the best bid or offer, it ‘walks’ up or down the order book until fully filled. With limit orders, slippage manifests as an opportunity cost or a missed fill if the market moves away before your order gets hit. Understanding this dynamic means recognizing that slippage isn’t just a price delta; it’s a function of order size, market volatility, available liquidity, and critically, the speed and placement of your order relative to other market participants. Real-time insights into the order book depth and recent quote changes are essential for predicting and mitigating this phenomenon before an order is even sent.
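
To make the ‘walking the book’ effect concrete, here is a minimal sketch that fills a market buy against a few ask levels and compares the volume-weighted fill price with the displayed best ask. The function name `walk_book` and the book snapshot are illustrative assumptions, not data from a real venue.

```python
from typing import List, Tuple

def walk_book(levels: List[Tuple[float, int]], qty: int) -> Tuple[float, int]:
    """Fill `qty` against resting `levels` given as (price, size), best price first.

    Returns (volume-weighted average fill price, filled quantity).
    """
    remaining = qty
    notional = 0.0
    for price, size in levels:
        if remaining <= 0:
            break
        take = min(size, remaining)   # consume what this level can offer
        notional += take * price
        remaining -= take
    filled = qty - remaining
    if filled == 0:
        raise ValueError("no liquidity available")
    return notional / filled, filled

# Hypothetical ask side of the book, best ask first.
asks = [(100.00, 300), (100.01, 500), (100.03, 1000)]
avg_price, filled = walk_book(asks, 1000)

# Slippage per share relative to the displayed best ask.
slippage_per_share = avg_price - asks[0][0]
```

In this made-up snapshot the order exhausts the first two levels and takes 200 shares from the third, so the average fill sits above the quote that was displayed when the order was sent.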

Leveraging Queue Position for Better Fills

For limit order strategies, being near the front of the queue significantly increases the probability of getting filled at your desired price. On venues that use price-time (FIFO) priority, an incoming market order fills the oldest resting limit order at a price level first. If you’re deep in the queue, a minor price fluctuation can move the market past your order without it ever being touched, or you might only get a partial fill before the price changes, forcing you to re-evaluate. Actively managing queue position involves techniques like ‘refreshes’ (canceling and resubmitting a limit order) to join a newly forming price level early when a better price becomes available, or to reposition when you suspect your existing order is too far back to fill. Keep in mind that a cancel-and-resubmit at the same price gives up your time priority and puts you at the back of that level’s queue, so a refresh only helps when the order moves to a different price or venue. This process is inherently latency-sensitive: a slow refresh can leave you further back than before or cause you to miss the opportunity entirely.

- Prioritize order resubmission logic for ‘queue jumping’ when market conditions warrant.

- Implement mechanisms to detect being ‘jumped’ in the queue by faster participants.

- Use smart order routing to place orders on exchanges where queue position can be gained more easily.

- Integrate real-time order book depth analysis to understand queue pressure at different price levels.
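
One way to reason about queue pressure is to keep a running estimate of how much size rests ahead of your order. The sketch below assumes price-time priority and a feed that reports adds, cancels, and trades at your price level. Because most feeds do not reveal where in the queue a cancel came from, it splits cancels pro rata between the size ahead and the size behind, which is a modeling assumption rather than a fact about any exchange. The class and method names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class QueueEstimate:
    ahead: float          # estimated size resting ahead of our order
    behind: float = 0.0   # estimated size that joined after us

    def on_add(self, qty: float) -> None:
        """New orders at our price join behind us under price-time priority."""
        self.behind += qty

    def on_cancel(self, qty: float) -> None:
        """Someone else's cancel: assume it is spread pro rata over ahead/behind."""
        total = self.ahead + self.behind
        if total <= 0:
            return
        from_ahead = qty * self.ahead / total
        self.ahead = max(0.0, self.ahead - from_ahead)
        self.behind = max(0.0, self.behind - (qty - from_ahead))

    def on_trade(self, qty: float) -> float:
        """Trade at our price consumes the queue ahead first.

        Returns the traded size left over after the queue ahead is exhausted,
        i.e. the size that reaches our order (the caller caps it at our order size).
        """
        consumed = min(self.ahead, qty)
        self.ahead -= consumed
        return qty - consumed

# Hypothetical sequence: we join behind 1000 shares.
q = QueueEstimate(ahead=1000.0)
q.on_add(500.0)                   # 500 joins behind us
q.on_cancel(300.0)                # 200 assumed from ahead, 100 from behind
reached_first = q.on_trade(600.0)   # absorbed entirely by the queue ahead
reached_second = q.on_trade(250.0)  # clears the remaining 200 ahead, 50 reaches us
```

An estimate like this can feed a refresh decision: if `ahead` stays large while the opposite side of the book thins out, the order is unlikely to fill before the price moves.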

Architecting for Minimal Latency in Order Submission

Achieving minimal latency for order submission involves a holistic approach, spanning network infrastructure, hardware optimization, and software design. Colocation is often the first step: physically positioning servers as close as possible to the exchange matching engines shortens the network path and reduces hop times. Beyond that, specialized hardware like FPGAs for market data processing and order gate logic can shave off microseconds. On the software side, applications are typically written in languages that give control over memory and scheduling (e.g., C++) and optimized for cache efficiency, avoiding garbage collection pauses and excessive context switching. Data serialization needs to be compact and fast, and where possible custom, purpose-built solutions replace traditional general-purpose network protocols. Every microsecond saved means more opportunities to react to market changes and secure a better queue position, which directly reduces execution slippage.
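
The point about compact serialization can be illustrated with a fixed-width binary layout compared against a text encoding of the same order. Python is used here only to show the layout idea; it is not a low-latency language, and the field layout below is a hypothetical example, not any exchange’s wire protocol.

```python
import json
import struct

# Hypothetical fixed-width layout (little-endian, no padding):
#   uint64 order id | uint32 instrument id | 1-byte side | int64 price in ticks | uint32 quantity
ORDER = struct.Struct("<QIcqI")

def encode_order(order_id: int, instrument_id: int, side: bytes,
                 price_ticks: int, qty: int) -> bytes:
    """Pack an order into a fixed 25-byte message."""
    return ORDER.pack(order_id, instrument_id, side, price_ticks, qty)

def decode_order(buf: bytes) -> tuple:
    """Unpack the 25-byte message back into its fields."""
    return ORDER.unpack(buf)

binary_msg = encode_order(42, 1001, b"B", 1_000_150, 200)

# The same order as JSON text, for a size comparison.
json_msg = json.dumps({
    "order_id": 42, "instrument_id": 1001, "side": "B",
    "price_ticks": 1_000_150, "qty": 200,
}).encode()

decoded = decode_order(binary_msg)
```

Storing the price as an integer number of ticks avoids floating-point parsing, and a fixed layout lets the receiver read each field at a known offset without scanning for delimiters.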

Dynamic Latency Controls and Adaptive Execution

Static latency targets are insufficient on their own; effective slippage reduction requires dynamic, adaptive latency controls. This means monitoring network jitter, exchange gateway load, and internal system processing times in real time. If latency spikes, the execution algorithm might need to adjust its behavior, for example by switching from aggressive queue-jumping to more passive execution, or even by pausing temporarily. If a known latency event like an exchange ‘reboot’ or a market data feed disruption occurs, the system should adapt its order submission timing or route orders to a different venue. Machine learning models can be employed to predict latency spikes based on historical data and current network telemetry, allowing the system to pre-emptively adjust its execution strategy and avoid detrimental fills or missed opportunities. This dynamic adaptation is crucial for maintaining consistent performance across varied market conditions.

- Implement real-time network and system telemetry to detect latency anomalies.

- Develop adaptive algorithms that adjust order submission frequency and aggressiveness based on observed latency.

- Utilize multiple network paths or exchange gateways for redundancy and dynamic routing.

- Backtest execution strategies under simulated varying latency conditions to understand robust performance.
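
A simple, non-ML starting point for the adaptive behavior described above is a rolling tail-latency monitor that maps observed round-trip times to an execution mode. The thresholds, window size, and the names `LatencyGovernor` and `Mode` are assumptions chosen for illustration; real values depend on the venue and strategy.

```python
from collections import deque
from enum import Enum

class Mode(Enum):
    AGGRESSIVE = "aggressive"   # refresh and re-price freely
    PASSIVE = "passive"         # rest orders, avoid latency-sensitive refreshes
    PAUSED = "paused"           # stop sending new orders

class LatencyGovernor:
    def __init__(self, window: int = 200, passive_us: float = 250.0,
                 pause_us: float = 1000.0, quantile: float = 0.99):
        self.samples = deque(maxlen=window)   # most recent round-trip times, in microseconds
        self.passive_us = passive_us
        self.pause_us = pause_us
        self.quantile = quantile

    def record(self, rtt_us: float) -> None:
        self.samples.append(rtt_us)

    def tail_latency(self) -> float:
        """Empirical quantile of the rolling window (nearest-rank style)."""
        ordered = sorted(self.samples)
        idx = min(len(ordered) - 1, int(self.quantile * len(ordered)))
        return ordered[idx]

    def mode(self) -> Mode:
        if not self.samples:
            return Mode.PASSIVE   # no telemetry yet: stay conservative (a design choice)
        tail = self.tail_latency()
        if tail >= self.pause_us:
            return Mode.PAUSED
        if tail >= self.passive_us:
            return Mode.PASSIVE
        return Mode.AGGRESSIVE

# Hypothetical telemetry: a calm period, a moderate spike, then a severe one.
gov = LatencyGovernor(window=100)
for _ in range(100):
    gov.record(80.0)
calm = gov.mode()
for _ in range(5):
    gov.record(400.0)
spiky = gov.mode()
for _ in range(5):
    gov.record(2000.0)
severe = gov.mode()
```

Keying the decision on a tail quantile rather than the mean makes the governor react to a handful of slow round trips, which is usually what hurts queue position.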

Measuring and Attributing Slippage in Production

After deploying queue position and latency controls, the critical next step is rigorous measurement and attribution. It’s not enough to track average slippage; you need to break it down by order type, market condition, venue, and even specific algorithm version. This involves capturing precise timestamps for order submission, exchange receipt, and fill, then correlating these with real-time market data at those exact moments. Comparing the price at the time of order submission, the ‘quoted’ price at exchange receipt, and the actual fill price helps isolate where slippage is occurring, whether in network delay, exchange processing, or market movement. Robust data pipelines and visualization tools are indispensable for identifying trends, confirming the effectiveness of optimizations, and pinpointing areas for further improvement, preventing ‘blind spots’ in performance analysis.
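
The three-price comparison above can be sketched as a small attribution function. The record fields and the two-way split (price movement while the order was in transit versus movement after it reached the venue) are illustrative assumptions; a production pipeline would also need synchronized clocks for the cross-host timestamps to be comparable.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class OrderRecord:
    side: int               # +1 for a buy, -1 for a sell
    t_submit_ns: int        # order leaves our system
    t_exchange_ns: int      # exchange acknowledges receipt
    t_fill_ns: int          # fill reported
    px_at_submit: float     # reference quote when the order was sent
    px_at_exchange: float   # reference quote at exchange receipt
    px_fill: float          # actual fill price

def attribute(rec: OrderRecord) -> dict:
    """Split slippage into a transit part and an at-venue part (positive = cost)."""
    transit = rec.side * (rec.px_at_exchange - rec.px_at_submit)
    venue = rec.side * (rec.px_fill - rec.px_at_exchange)
    return {
        "transit_latency_us": (rec.t_exchange_ns - rec.t_submit_ns) / 1_000,
        "venue_latency_us": (rec.t_fill_ns - rec.t_exchange_ns) / 1_000,
        "transit_slippage": transit,
        "venue_slippage": venue,
        "total_slippage": transit + venue,
    }

# Hypothetical buy order: the quote drifts up a tick in transit and another before the fill.
rec = OrderRecord(side=+1, t_submit_ns=0, t_exchange_ns=45_000, t_fill_ns=120_000,
                  px_at_submit=100.00, px_at_exchange=100.01, px_fill=100.02)
report = attribute(rec)
```

Aggregating these per-order reports by venue, order type, or algorithm version gives the breakdown the paragraph describes.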

Trade-offs and Realistic Expectations for Slippage Control

While optimizing for queue position and latency can significantly reduce slippage, it’s crucial to approach this with realistic expectations and an understanding of inherent trade-offs. Perfect execution at zero slippage is generally unattainable due to fundamental market dynamics like the bid-ask spread, latency arbitrage by other participants, and the simple fact that market prices are constantly moving. Aggressive queue management, like frequent order refreshes, can generate high message traffic, potentially leading to exchange-imposed limits or increased infrastructure costs. Furthermore, overly complex latency optimization can introduce its own set of risks, such as increased system fragility or difficulty in debugging. The goal is to find the point beyond which the cost of further optimization outweighs the marginal benefit in slippage reduction, and to stop there, always considering the specific strategy’s sensitivity to price precision versus execution certainty and throughput.

- Understand that market volatility and depth fundamentally limit slippage reduction.

- Be aware of exchange message limits and potential penalties for excessive order traffic.

- Weigh the engineering complexity and cost of latency reduction against the expected ROI.

- Recognize that some slippage is unavoidable and should be factored into strategy profitability models.
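
To stay within message limits while still refreshing orders, one common pattern is a token bucket that gates outgoing messages. The sketch below is a generic illustration; the rate and burst values are placeholders and do not reflect any specific exchange’s rules. Time is passed in explicitly so the behavior is easy to test.

```python
class MessageBudget:
    """Token bucket: allows `burst` messages at once, refilled at `rate_per_sec`."""

    def __init__(self, rate_per_sec: float, burst: int):
        self.rate = rate_per_sec
        self.capacity = float(burst)
        self.tokens = float(burst)
        self.last = None   # timestamp of the previous call, in seconds

    def try_send(self, now: float) -> bool:
        """Return True and spend a token if a message may be sent at time `now`."""
        if self.last is not None:
            elapsed = now - self.last
            self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False

# Hypothetical limit: 10 messages per second with a burst of 3.
budget = MessageBudget(rate_per_sec=10, burst=3)
burst_results = [budget.try_send(0.0) for _ in range(4)]    # fourth is throttled
later_results = [budget.try_send(0.5) for _ in range(4)]    # bucket refilled to its cap of 3
```

When `try_send` returns False, the strategy can skip a low-value refresh rather than queue it, which keeps the remaining budget for cancels and re-prices that matter.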