Developing a robust order management system (OMS) is fundamental for any serious algorithmic trading operation. While the core concept of sending orders and receiving fills seems straightforward, the reality of live trading introduces significant complexity, particularly when dealing with partial fills and the asynchronous nature of execution reports. A well-designed OMS must maintain an accurate, real-time internal state that precisely mirrors the external market and broker’s view of an order, even amidst network latency, message reordering, and varying reporting standards. Failure to manage this state meticulously can lead to incorrect position tracking, flawed risk calculations, and ultimately, significant trading errors. This article delves into the critical design considerations for building an OMS capable of reliably handling these nuanced aspects of order execution.

Introduction to OMS State Management Challenges

The primary challenge in order management system design lies in effectively synchronizing the internal perception of an order’s status with the external reality provided by various execution venues. Orders progress through a complex lifecycle, transitioning from ‘New’ to ‘Open,’ ‘Partially Filled,’ ‘Filled,’ ‘Canceled,’ or ‘Rejected.’ Each transition is typically triggered by an execution report (ExecReport) received from a broker, which can arrive asynchronously and out of sequence. This asynchronous processing, coupled with network latencies and the inherent delays in broker systems, necessitates an OMS built on a robust state machine model. The system must accurately absorb these reports, update quantities, calculate average prices for partial fills, and manage potential race conditions where a cancel request might cross a fill report, all while maintaining a consistent and verifiable audit trail. This complexity underscores why a naive approach to order state management quickly breaks down in production.

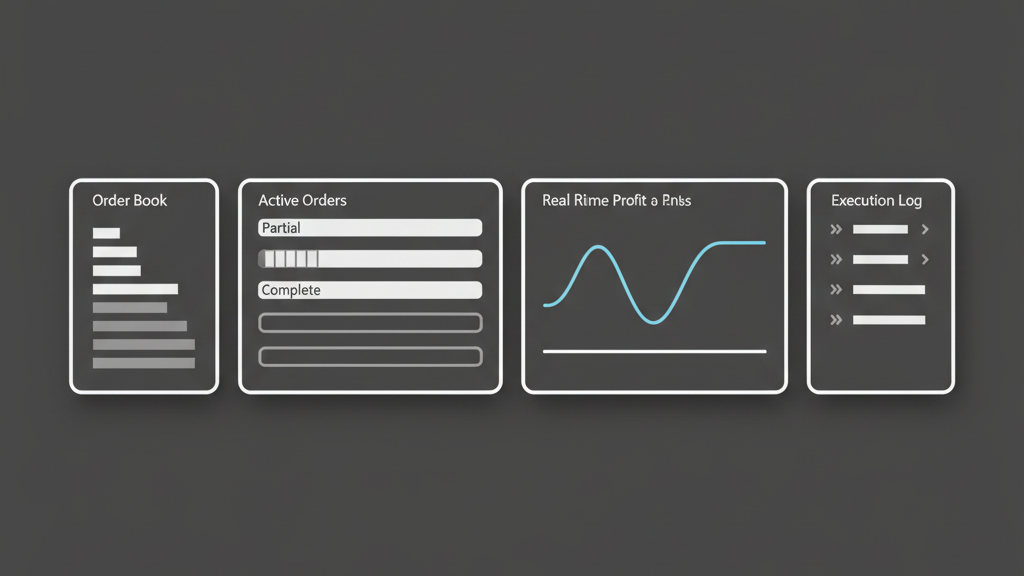

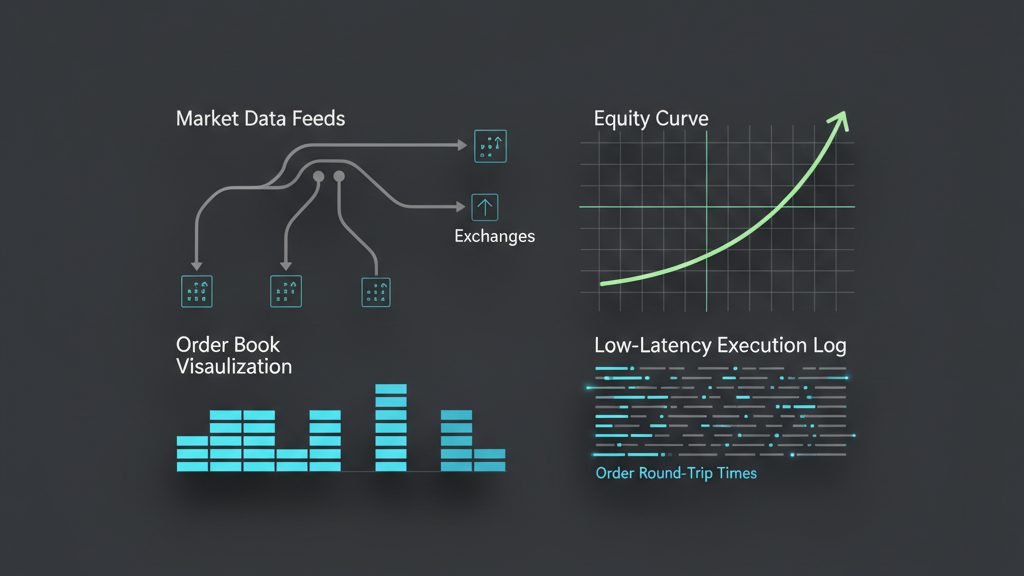
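The lifecycle described above can be sketched as an explicit transition table. This is a minimal illustration, not a complete model: the `ALLOWED` table, the `IllegalTransition` exception, and the `transition` helper are hypothetical names, and the set of permitted moves is an assumption that a real system would adapt to its brokers' actual reporting behavior.

```python
from enum import Enum


class OrderStatus(Enum):
    NEW = "New"
    OPEN = "Open"
    PARTIALLY_FILLED = "Partially Filled"
    FILLED = "Filled"
    CANCELED = "Canceled"
    REJECTED = "Rejected"


# Assumed transition table for illustration; terminal states allow nothing.
ALLOWED = {
    OrderStatus.NEW: {OrderStatus.OPEN, OrderStatus.REJECTED},
    OrderStatus.OPEN: {
        OrderStatus.PARTIALLY_FILLED,
        OrderStatus.FILLED,
        OrderStatus.CANCELED,
    },
    OrderStatus.PARTIALLY_FILLED: {
        OrderStatus.PARTIALLY_FILLED,
        OrderStatus.FILLED,
        OrderStatus.CANCELED,
    },
    OrderStatus.FILLED: set(),
    OrderStatus.CANCELED: set(),
    OrderStatus.REJECTED: set(),
}


class IllegalTransition(Exception):
    """Raised when a report implies a move the table does not allow."""


def transition(current: OrderStatus, target: OrderStatus) -> OrderStatus:
    """Return the new status, or raise if the move is not permitted."""
    if target not in ALLOWED[current]:
        raise IllegalTransition(f"{current.value} -> {target.value}")
    return target
```

Under this table, a fill that crosses an in-flight cancel request is still accepted, because the order remains Open or Partially Filled until the broker confirms the cancel. A Canceled report arriving after the order is already Filled raises `IllegalTransition`, which can be routed to alerting rather than silently applied.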

Core Architectural Components for Execution Reporting

A well-structured OMS typically relies on an event-driven architecture to process the stream of execution reports. Incoming FIX messages or proprietary API callbacks are parsed and translated into a standardized internal event format, then placed onto a message queue for processing. This decoupling ensures that the system can handle bursts of reports without blocking the ingress path. A dedicated execution report processing service consumes these events, updating an in-memory order cache for low-latency lookups and persisting the full history of orders, fills, and associated reports to a durable database. Idempotency is crucial here; the system must produce the same order state whether a report is received once or several times due to network retries. This setup is key for maintaining high throughput and consistency across the order lifecycle.

- Standardized ingress layer to normalize diverse broker API formats into internal events.

- Dedicated message queue (e.g., Kafka, RabbitMQ) for asynchronous report processing.

- Persistent storage layer (e.g., PostgreSQL or a NoSQL store) for comprehensive order and fill history.

- High-performance in-memory order cache for low-latency state lookups and updates.

- Idempotent processing logic to prevent state corruption from duplicate execution reports.
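The ingress normalization and idempotent processing steps listed above might look roughly like the following. This is a sketch under stated assumptions: `ExecutionEvent`, `OrderState`, `normalize_fix`, and `apply_event` are hypothetical names, the raw message is assumed to be already parsed into a dict keyed by FIX tag number, and quantities are assumed to be whole units.

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass(frozen=True)
class ExecutionEvent:
    """Internal, broker-neutral representation of one execution report."""
    exec_id: str
    cl_ord_id: str
    last_qty: int
    last_px: Decimal


@dataclass
class OrderState:
    cl_ord_id: str
    order_qty: int
    cum_qty: int = 0
    seen_exec_ids: set = field(default_factory=set)


def normalize_fix(fields: dict) -> ExecutionEvent:
    """Map FIX tags 17 (ExecID), 11 (ClOrdID), 32 (LastQty), 31 (LastPx)."""
    return ExecutionEvent(
        exec_id=fields["17"],
        cl_ord_id=fields["11"],
        last_qty=int(fields["32"]),  # assumption: whole-unit quantities
        last_px=Decimal(fields["31"]),
    )


def apply_event(order: OrderState, event: ExecutionEvent) -> bool:
    """Apply a fill once; return False if this ExecID was already applied."""
    if event.exec_id in order.seen_exec_ids:
        return False
    order.seen_exec_ids.add(event.exec_id)
    order.cum_qty += event.last_qty
    return True
```

Because the duplicate check is keyed on the execution ID, redelivering the same report from the queue leaves `cum_qty` unchanged. A production system would also need to persist the set of seen IDs so that the guarantee survives a restart.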

Navigating Partial Fills and Out-of-Order Reports

Handling partial fills is a critical aspect of order management system design. When an order is only partially filled, the OMS must update the remaining quantity, recompute the quantity-weighted average fill price, and ensure that position tracking and PnL calculations reflect the new state. The challenge is compounded by the fact that multiple partial fills for a single order can arrive out of sequence, or a final fill might arrive before an earlier partial fill, especially when dealing with multiple venues or smart order routers. In FIX terms, the execution ID (ExecID, tag 17) uniquely identifies each report so that duplicates can be discarded, while the client order ID (ClOrdID, tag 11) and the broker-assigned OrderID (tag 37) tie a report to its order; OrigClOrdID (tag 41) links a cancel or replace request back to the order it modifies. Using these identifiers consistently to apply updates and prevent race conditions is paramount. Without this, internal position reconciliation becomes a constant headache, potentially leading to over-exposure or missed trading opportunities due to an inaccurate view of available capital. This often requires careful sequencing logic and the ability to re-evaluate order state as delayed reports finally arrive.
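One way to make fill accounting insensitive to arrival order is to store each fill under its execution ID and derive cumulative quantity and average price from the stored set, rather than trusting running totals carried in the reports. The `FillLedger` class below is a hypothetical sketch of that idea, not a prescribed design.

```python
from decimal import Decimal


class FillLedger:
    """Stores fills by execution ID and derives totals on demand."""

    def __init__(self, order_qty):
        self.order_qty = order_qty
        self.fills = {}  # exec_id -> (qty, price)

    def record(self, exec_id, qty, px):
        # setdefault keeps the first copy, so duplicates are harmless.
        self.fills.setdefault(exec_id, (qty, px))

    @property
    def cum_qty(self):
        return sum(qty for qty, _ in self.fills.values())

    @property
    def leaves_qty(self):
        return self.order_qty - self.cum_qty

    @property
    def avg_px(self):
        """Quantity-weighted average price, or None before any fill."""
        cum = self.cum_qty
        if cum == 0:
            return None
        notional = sum(qty * px for qty, px in self.fills.values())
        return notional / cum


ledger = FillLedger(order_qty=1000)
# The final fill arrives first, followed by two earlier partials.
ledger.record("E3", 200, Decimal("10.20"))
ledger.record("E1", 300, Decimal("10.00"))
ledger.record("E2", 500, Decimal("10.10"))
print(ledger.cum_qty, ledger.leaves_qty, ledger.avg_px)
```

Here the notional is 300 × 10.00 + 500 × 10.10 + 200 × 10.20 = 10,090, so the average price over 1,000 units is 10.09 regardless of the order in which the three reports were recorded.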

Ensuring Data Integrity and System Resilience

Data integrity in an OMS is non-negotiable. Every state transition, every fill, and every associated execution report must be reliably recorded and persisted to ensure an immutable audit trail and enable system recovery. Atomic transactions are essential when updating order state in the database, ensuring that either all changes are committed, or none are. For resilience, the system needs comprehensive logging and monitoring, not just for operational metrics, but for discrepancies between internal state and external broker confirmations. Automated reconciliation processes, perhaps at the end of the trading day or during system startup, are vital to detect and correct any inconsistencies. This often involves comparing internal trade blotters against broker statements and flagging any unmatched or mispriced fills. Investigating such breaks is a common operational task, and sound initial design aims to minimize how often they occur.

- Leverage atomic database transactions for all order state modifications to guarantee consistency.

- Maintain an immutable, high-resolution log of all incoming execution reports and outgoing order requests.

- Implement automated end-of-day reconciliation workflows with prime brokers and clearing firms.

- Design robust error handling and alerting for malformed or unexpected execution report messages.

- Develop self-healing and recovery mechanisms, including replaying historical events from logs upon system restart.
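The atomic-update point can be illustrated with Python's standard `sqlite3` module, where using the connection as a context manager commits on success and rolls back on an exception. The schema and the `open_store` and `persist_fill` helpers are assumptions made for this sketch; a production OMS would more likely target a server database, but the transactional pattern is the same.

```python
import sqlite3


def open_store(path=":memory:"):
    """Create a toy schema: one row per order, one row per fill."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE orders (cl_ord_id TEXT PRIMARY KEY, "
        "order_qty INTEGER NOT NULL, cum_qty INTEGER NOT NULL DEFAULT 0)"
    )
    conn.execute(
        "CREATE TABLE fills (exec_id TEXT PRIMARY KEY, "
        "cl_ord_id TEXT NOT NULL, qty INTEGER NOT NULL, px TEXT NOT NULL)"
    )
    return conn


def persist_fill(conn, exec_id, cl_ord_id, qty, px):
    """Update the order and insert the fill as one unit of work.

    Returns False if the fill was already stored; in that case the
    primary-key violation rolls back the quantity update as well.
    """
    try:
        with conn:  # commits on success, rolls back on exception
            conn.execute(
                "UPDATE orders SET cum_qty = cum_qty + ? WHERE cl_ord_id = ?",
                (qty, cl_ord_id),
            )
            conn.execute(
                "INSERT INTO fills (exec_id, cl_ord_id, qty, px) "
                "VALUES (?, ?, ?, ?)",
                (exec_id, cl_ord_id, qty, px),
            )
        return True
    except sqlite3.IntegrityError:
        return False
```

Storing the price as text is a deliberate choice in this sketch to avoid binary floating-point rounding. Because the fills table is keyed on the execution ID, replaying the report log after a restart cannot double-count a fill that was already committed.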

Performance and Latency Considerations for Execution Reports

The performance of an order management system, particularly its ability to swiftly process execution reports, directly impacts an algorithmic trading strategy’s effectiveness. In low-latency trading, every microsecond counts; delays in updating an order’s state can lead to stale market views, missed opportunities for hedging, or increased slippage. The trade-off between persistence (which involves disk I/O and database latency) and in-memory processing speed must be carefully managed. Architectures that prioritize fast in-memory updates for critical decision-making, coupled with asynchronous persistence for auditability, are common. Furthermore, the volume of execution reports can be substantial during volatile periods, requiring an OMS to be designed for high throughput and efficient resource utilization to prevent backlogs that degrade real-time responsiveness and the accuracy of calculated positions and risk metrics.
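The pattern of fast in-memory updates with asynchronous persistence can be sketched with a queue and a background writer thread. The `AsyncPersister` class, the `on_fill` function, and the `sink` callable are hypothetical names for illustration; the sink stands in for whatever durable store is actually used.

```python
import queue
import threading


class AsyncPersister:
    """Hands records to a background thread so the hot path never blocks on I/O."""

    def __init__(self, sink):
        self._sink = sink  # callable that durably writes one record
        self._queue = queue.Queue()
        self._thread = threading.Thread(target=self._run, daemon=True)
        self._thread.start()

    def submit(self, record):
        self._queue.put(record)

    def _run(self):
        while True:
            record = self._queue.get()
            if record is None:  # sentinel: stop after draining earlier items
                return
            self._sink(record)

    def close(self):
        """Flush everything submitted so far, then stop the writer."""
        self._queue.put(None)
        self._thread.join()


positions = {}


def on_fill(persister, symbol, signed_qty):
    # Hot path: update the in-memory position first, persist afterwards.
    positions[symbol] = positions.get(symbol, 0) + signed_qty
    persister.submit((symbol, signed_qty))
```

The trade-off is visible in the structure: the in-memory position is current as soon as `on_fill` returns, but a crash before the queue drains loses whatever has not yet been written. That gap is one reason the raw execution report log is usually persisted independently, so state can be rebuilt by replay.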

Rigorous Testing and Backtesting Integration

Thorough testing is paramount for an order management system, especially concerning partial fills and execution reports. This involves comprehensive unit tests for every state transition and calculation logic, ensuring that average prices and remaining quantities are correctly updated under various scenarios. Integration tests are crucial, using mock broker APIs to simulate complex real-world conditions like out-of-order reports, partial fills followed by cancels, and various error messages. Performance tests under simulated high-volume conditions help identify bottlenecks. Furthermore, the OMS’s logic for handling fills directly impacts the realism of a backtesting engine. A truly effective backtesting engine must incorporate realistic fill models, accounting for partial fills, market impact, and slippage, all derived from the operational behavior observed and managed by the OMS. Without this integration, backtesting results can be overly optimistic and misleading when deployed live.

- Extensive unit testing for all order state transitions, calculations, and message parsing logic.

- Integration tests utilizing mock broker APIs to simulate diverse real-world execution scenarios.

- Performance and stress testing under peak message loads to identify latency bottlenecks.

- Chaos engineering to test system resilience against delayed, corrupted, or duplicate reports.

- Seamless integration with backtesting engines for realistic fill modeling that mimics live execution behavior.
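A small harness in the spirit of the integration and chaos tests listed above might shuffle and duplicate a clean report stream, then check that the resulting state matches the clean run. The `scramble` and `replay` functions are hypothetical, and prices are expressed as integer cents here purely to keep the comparison exact.

```python
import random


def scramble(reports, duplicate_rate=0.3, seed=0):
    """Return the reports in random order with some duplicated."""
    rng = random.Random(seed)
    out = list(reports)
    out += [r for r in reports if rng.random() < duplicate_rate]
    rng.shuffle(out)
    return out


def replay(reports):
    """Apply (exec_id, qty, px_cents) reports; return (cum_qty, notional_cents)."""
    seen = {}
    for exec_id, qty, px_cents in reports:
        seen.setdefault(exec_id, (qty, px_cents))  # ignore duplicates
    cum_qty = sum(qty for qty, _ in seen.values())
    notional = sum(qty * px for qty, px in seen.values())
    return cum_qty, notional


clean = [("E1", 300, 1000), ("E2", 500, 1010), ("E3", 200, 1020)]
expected = replay(clean)

# Every scrambled variant should converge to the same final state.
for seed in range(50):
    assert replay(scramble(clean, seed=seed)) == expected
```

Seeding the random generator keeps failures reproducible, which matters when a particular ordering exposes a bug. The same scrambled streams can be fed through a mock broker API to exercise the full ingress path rather than only the accounting logic.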