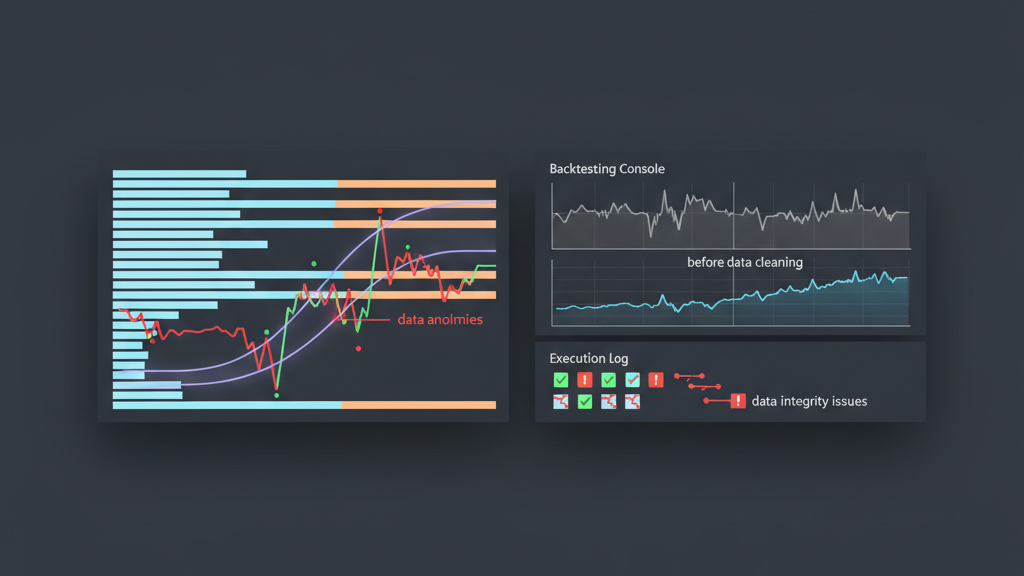

Accurate market data is the bedrock of any successful algorithmic trading strategy. Without pristine tick data, backtests are misleading, and live execution can lead to catastrophic losses. Bid-ask and trade tick data, in particular, are prone to various anomalies introduced by exchange glitches, data feed issues, network latency, or simple human error. Implementing robust data cleaning rules isn’t just a best practice; it’s a fundamental requirement for anyone operating in a low-latency or high-frequency environment. This article delves into the practical aspects of identifying and correcting common data inconsistencies, offering insights gained from years of building and deploying production-grade trading systems. We’ll explore specific validation checks that ensure the integrity of your market data, from the raw feed all the way to its consumption by your strategy logic.

The Imperative of Pristine Tick Data for Algos

In algorithmic trading, especially for high-frequency strategies, even minuscule data errors can have a disproportionately large impact. A single misreported trade price or a stale bid-ask quote, if not corrected, can propagate through a strategy's indicators, trigger incorrect signals, and ultimately lead to significant P&L deviations or outright losses. Relying on raw, unvalidated tick data is akin to building a skyscraper on sand; the foundation is inherently unstable. Our experience shows that the initial hours spent defining and implementing comprehensive data cleaning rules for bid-ask and trade tick data pay dividends by preventing costly errors, both in backtest performance attribution and in live trading. This proactive approach ensures that observed strategy behavior reflects real market dynamics rather than data quality artifacts, which makes research more reliable and deployments more robust.

Identifying Common Anomalies in Bid-Ask Market Data

Bid-ask quote streams update continuously and are highly susceptible to anomalies. These range from logical inconsistencies to outright data corruption, and often stem from network jitter, exchange feed retransmissions, or internal system bottlenecks. Understanding these common pitfalls is the first step in constructing an effective data cleaning pipeline. Without proper validation, a crossed bid-ask spread could trick an algorithm into attempting an impossible arbitrage, or a stale quote might cause a market order to execute significantly away from the perceived price, resulting in unexpected slippage. Real-world systems must actively monitor for these discrepancies and take immediate corrective action, whether that means discarding the data point, flagging it for review, or attempting a repair based on established rules.

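- **Crossed or Locked Spreads:** The bid price is greater than (crossed) or equal to (locked) the ask price (e.g., Bid 100.05, Ask 100.03). On a single venue's order book this cannot persist, because overlapping orders would match and execute. Consolidated multi-venue feeds can briefly show locked or crossed states, but a persistent one usually points to stale or erroneous quotes.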

- **Stale Quotes:** The timestamp indicates a quote that is significantly older than the current market activity, often due to connectivity issues or an inactive symbol.

- **Zero Bid/Ask:** Either the bid or the ask price is reported as zero or as a clearly invalid value (such as a negative price for an equity), indicating a data feed error rather than a true market state.

- **Extreme Price Jumps:** Sudden, unrealistic jumps or drops in bid or ask prices that are inconsistent with historical volatility or recent market movement.

- **Non-Monotonic Timestamps:** Data points arriving out of chronological order, which can severely disrupt time-series analysis and indicator calculations.
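Most of the checks above translate directly into code. Below is a minimal Python sketch. It assumes quotes arrive as records with a nanosecond timestamp, a bid, and an ask. The `Quote` and `QuoteIssue` types, the `check_quote` function, and the 500 ms staleness and 5% jump limits are all hypothetical illustrations, not recommended production values. Price jumps are measured here as a fixed fractional move in the mid-price. A volatility-scaled threshold is usually a better fit in practice.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class QuoteIssue(Enum):
    INVALID_PRICE = "invalid_price"   # zero, negative, or NaN bid/ask
    CROSSED = "crossed"               # bid > ask
    LOCKED = "locked"                 # bid == ask
    STALE = "stale"                   # quote older than max_age_ns
    NON_MONOTONIC = "non_monotonic"   # timestamp earlier than previous quote
    PRICE_JUMP = "price_jump"         # mid moved more than max_jump (fraction)


@dataclass
class Quote:
    ts_ns: int    # event timestamp in nanoseconds (assumed epoch-based)
    bid: float
    ask: float


def check_quote(q: Quote, prev: Optional[Quote], now_ns: int,
                max_age_ns: int = 500_000_000,   # hypothetical: 500 ms
                max_jump: float = 0.05) -> List[QuoteIssue]:
    """Return every issue found for quote q; an empty list means it passed."""
    # "not (x > 0)" also catches NaN, because comparisons with NaN are False.
    if not (q.bid > 0 and q.ask > 0):
        return [QuoteIssue.INVALID_PRICE]  # remaining checks are meaningless
    issues: List[QuoteIssue] = []
    if q.bid > q.ask:
        issues.append(QuoteIssue.CROSSED)
    elif q.bid == q.ask:
        issues.append(QuoteIssue.LOCKED)
    # now_ns: receive-side clock when live, latest event time in a backtest.
    if now_ns - q.ts_ns > max_age_ns:
        issues.append(QuoteIssue.STALE)
    if prev is not None:
        if q.ts_ns < prev.ts_ns:
            issues.append(QuoteIssue.NON_MONOTONIC)
        prev_mid = (prev.bid + prev.ask) / 2
        mid = (q.bid + q.ask) / 2
        if prev_mid > 0 and abs(mid / prev_mid - 1) > max_jump:
            issues.append(QuoteIssue.PRICE_JUMP)
    return issues


# Usage: a crossed quote (Bid 100.05, Ask 100.03) arriving 0.1 ms after a normal one.
prev = Quote(ts_ns=1_000_000_000, bid=100.00, ask=100.02)
bad = Quote(ts_ns=1_000_100_000, bid=100.05, ask=100.03)
print(check_quote(bad, prev, now_ns=1_000_200_000))  # [<QuoteIssue.CROSSED: 'crossed'>]
```

The function returns every issue it finds instead of stopping at the first one. This lets the caller choose a per-issue action, such as discarding crossed quotes while only flagging stale ones.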

Developing Robust Bid-Ask Validation Rules

Crafting effective data cleaning rules for bid-ask data requires a blend of logical constraints and adaptive thresholds. Beyond simply checking for crossed spreads, a comprehensive approach also validates the reasonableness of the spread itself, the quoted size, and the recency of the data. For instance, a very wide spread might be valid in an illiquid market but suspicious for a highly liquid instrument. We often employ dynamic thresholds that adapt to the instrument's volatility or typical market conditions. When an anomaly is detected, the choice of action is critical: should the data point be discarded, or can it be corrected? Discarding is often safer for high-frequency trading, while historical backtesting might allow for interpolation if the missing data segment is small and the instrument is liquid.
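One way to make the spread check adaptive is to compare each new spread with the median of recently accepted spreads for the same instrument. The threshold then scales with that instrument's normal behavior. The sketch below uses hypothetical `SpreadFilter` and `clean_quotes` helpers with illustrative defaults (a 200-quote window and a 5x multiplier). For the backtest case, it forward-fills the last valid quote across short gaps instead of interpolating. Linear interpolation between two quotes uses the later quote, which introduces look-ahead bias, so forward-filling is swapped in here as a substitute technique. It is not the only reasonable choice.

```python
from collections import deque
from statistics import median
from typing import Iterable, List, Optional, Tuple

QuoteRow = Tuple[int, float, float]  # (timestamp, bid, ask)


class SpreadFilter:
    """Reject spreads far wider than the instrument's recent typical spread."""

    def __init__(self, window: int = 200, multiplier: float = 5.0, min_history: int = 20):
        self.spreads = deque(maxlen=window)  # recently accepted spreads only
        self.multiplier = multiplier
        self.min_history = min_history       # accept unconditionally until warmed up

    def accept(self, bid: float, ask: float) -> bool:
        spread = ask - bid
        if spread <= 0:
            return False  # crossed or locked: never accepted by this filter
        if len(self.spreads) >= self.min_history:
            if spread > self.multiplier * median(self.spreads):
                return False  # too wide versus the instrument's own recent history
        self.spreads.append(spread)
        return True


def clean_quotes(quotes: Iterable[QuoteRow], filt: SpreadFilter,
                 live: bool, max_fill: int = 3) -> List[QuoteRow]:
    """Live: drop rejected quotes. Backtest: carry the last good quote forward
    for up to max_fill consecutive rejections, then drop."""
    out: List[QuoteRow] = []
    last_good: Optional[Tuple[float, float]] = None
    run = 0  # number of consecutive rejected quotes
    for ts, bid, ask in quotes:
        if filt.accept(bid, ask):
            last_good, run = (bid, ask), 0
            out.append((ts, bid, ask))
            continue
        run += 1
        if not live and last_good is not None and run <= max_fill:
            out.append((ts, last_good[0], last_good[1]))
    return out


# Usage: the quote at ts=4 has a 2.00 spread against a typical 0.02 spread.
quotes = [(1, 100.00, 100.02), (2, 100.00, 100.02), (3, 100.01, 100.03),
          (4, 99.00, 101.00), (5, 100.00, 100.02)]
live_out = clean_quotes(quotes, SpreadFilter(min_history=3), live=True)
bt_out = clean_quotes(quotes, SpreadFilter(min_history=3), live=False)
```

In live mode the ts=4 quote is simply dropped. In backtest mode it is replaced by the previous valid quote. The filter only learns from accepted spreads, so a burst of bad quotes cannot widen its own threshold. The flip side is that a sudden, lasting widening in liquidity may be rejected indefinitely, so a reset path or an alert is worth considering.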

Implementing Trade Tick Data Cleaning Protocols

Trade tick data presents its own cleaning challenges. Bid-ask data represents intentions, whereas trade data represents executed transactions, which makes its accuracy paramount for P&L attribution and volume analysis. Anomalies in trade data can include impossible execution prices, zero or illogical trade volumes, and duplicate entries. A common issue we encounter is trade prices that fall outside the prevailing bid-ask spread at the time of execution. Some prints slightly outside the spread can occur due to latency, but persistent or significant deviations typically signal data quality issues. Our data cleaning rules for trades often involve comparing trade timestamps with the nearest bid-ask quotes, applying filters for minimum valid volume, and using unique trade IDs where available to prevent duplicates. Ignoring these details can lead to inaccurate fill price estimates and flawed slippage calculations in backtests.
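Comparing a trade with the quote in force when it printed is an as-of lookup: find the last quote whose timestamp is at or before the trade's. Below is a minimal sketch using the standard-library `bisect` module. It assumes quotes are already sorted by timestamp and cleaned. The function names and the default tolerance of half a spread (meant to absorb latency-induced prints just outside the quote) are hypothetical.

```python
from bisect import bisect_right
from typing import List, Optional, Tuple


def prevailing_quote(quote_ts: List[int], quotes: List[Tuple[float, float]],
                     trade_ts: int) -> Optional[Tuple[float, float]]:
    """Last (bid, ask) with timestamp <= trade_ts; quote_ts must be sorted."""
    i = bisect_right(quote_ts, trade_ts) - 1
    return quotes[i] if i >= 0 else None


def trade_outside_spread(trade_ts: int, price: float, quote_ts: List[int],
                         quotes: List[Tuple[float, float]],
                         tol_spreads: float = 0.5) -> Optional[bool]:
    """True if the trade printed beyond the prevailing quote by more than
    tol_spreads * spread; None if no quote precedes the trade."""
    q = prevailing_quote(quote_ts, quotes, trade_ts)
    if q is None:
        return None  # no market context yet, so no verdict
    bid, ask = q
    slack = tol_spreads * (ask - bid)
    return price < bid - slack or price > ask + slack


quote_ts = [10, 20]
quotes = [(100.00, 100.02), (100.01, 100.03)]
print(trade_outside_spread(15, 100.05, quote_ts, quotes))  # True
print(trade_outside_spread(25, 100.03, quote_ts, quotes))  # False
```

The timestamps are kept in a separate list so `bisect_right` can search plain integers. This also avoids relying on `bisect`'s `key=` parameter, which exists only in Python 3.10 and later.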

- **Impossible Trade Prices:** A trade executed at a price significantly outside the prevailing bid-ask spread, or at a value that is clearly an error (e.g., negative price).

- **Zero or Invalid Volume:** Trades reported with zero volume, or volumes that are clearly erroneous (e.g., extremely large volumes far beyond plausible market depth).

- **Duplicate Trades:** The same trade event appearing multiple times in the data feed, often due to retransmission or processing errors.

- **Timestamp Mismatches:** Trade timestamps that do not align logically with the surrounding bid-ask quotes, making it difficult to determine the true market context of the trade.
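The remaining trade checks (duplicates, invalid prices and sizes, out-of-order timestamps) can run as a single pass. Recording a reason for every rejection makes later review of discarded prints easier. This sketch assumes a hypothetical `Trade` record with an optional exchange-assigned `trade_id`. The `max_size` parameter stands in for whatever per-instrument volume ceiling you consider plausible.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Tuple


@dataclass(frozen=True)
class Trade:
    ts_ns: int
    price: float
    size: int
    trade_id: Optional[str] = None  # exchange-assigned ID, if the feed has one


def clean_trades(trades: Iterable[Trade], max_size: int
                 ) -> Tuple[List[Trade], List[Tuple[Trade, str]]]:
    """Split trades into (kept, rejected-with-reason) in a single pass."""
    kept: List[Trade] = []
    rejected: List[Tuple[Trade, str]] = []
    seen_ids = set()
    last_ts: Optional[int] = None
    for t in trades:
        if t.trade_id is not None and t.trade_id in seen_ids:
            reason = "duplicate_id"      # e.g. a retransmitted message
        elif not t.price > 0:            # also rejects NaN; adjust for instruments
            reason = "invalid_price"     # that can legitimately trade at <= 0
        elif t.size <= 0 or t.size > max_size:
            reason = "invalid_size"
        elif last_ts is not None and t.ts_ns < last_ts:
            reason = "out_of_order"
        else:
            reason = None
        if reason is not None:
            rejected.append((t, reason))
            continue
        if t.trade_id is not None:
            seen_ids.add(t.trade_id)
        last_ts = t.ts_ns
        kept.append(t)
    return kept, rejected


trades = [Trade(1, 100.00, 10, "a"), Trade(2, 100.00, 10, "a"),
          Trade(3, 0.0, 5, "b"), Trade(4, 100.10, 0, "c"),
          Trade(5, 100.10, 5, "d"), Trade(4, 100.10, 5, "e")]
kept, rejected = clean_trades(trades, max_size=1_000_000)
print([t.trade_id for t in kept])          # ['a', 'd']
print([reason for _, reason in rejected])  # ['duplicate_id', 'invalid_price', 'invalid_size', 'out_of_order']
```

Duplicates are detected only when the feed supplies an ID. A fallback that matches on (timestamp, price, size) would also discard genuinely distinct trades that happen to share those fields, so it is deliberately left out here.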

Outlier Detection and Filtering Strategies

Beyond explicit rule violations, effective data cleaning also involves identifying and handling statistical outliers. These aren't necessarily errors in the sense of being logically impossible. Rather, they are extreme values that might distort statistical analysis or trigger unintended strategy behavior. For instance, a single large trade in an otherwise quiet market might be legitimate but could skew volume-weighted average price calculations if not handled carefully. Techniques like Z-scores or Median Absolute Deviation (MAD) can be used to detect price or volume outliers based on recent history. The challenge lies in distinguishing real but extreme prints, such as fat-finger trades that actually executed or genuine market events, from erroneous data. Over-filtering can discard valuable information about market microstructure, while under-filtering leaves the system vulnerable to noise. It's an iterative process of refinement that often requires domain expertise to fine-tune the thresholds.
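As an example of the MAD approach, the sketch below computes a modified z-score, 0.6745 × (x − median) / MAD, over a rolling window and flags values above a cutoff. A cutoff of 3.5 is a commonly quoted choice for this score. The class name, window size, and `min_scale` floor are hypothetical. The floor matters for tick data, because the MAD is zero whenever more than half of the recent prices are identical.

```python
from collections import deque
from statistics import median
from typing import Optional


class MadOutlierDetector:
    """Rolling modified z-score: 0.6745 * (x - median) / MAD."""

    def __init__(self, window: int = 100, threshold: float = 3.5,
                 min_history: int = 20, min_scale: float = 0.0):
        self.values = deque(maxlen=window)  # recent non-outlier observations
        self.threshold = threshold
        self.min_history = min_history
        self.min_scale = min_scale          # e.g. one tick, so MAD == 0 stays usable

    def score(self, x: float) -> Optional[float]:
        if len(self.values) < self.min_history:
            return None  # not enough history to judge
        med = median(self.values)
        mad = median(abs(v - med) for v in self.values)
        scale = max(mad, self.min_scale)
        if scale == 0:
            return None  # flat history and no floor: score undefined
        return 0.6745 * (x - med) / scale

    def update(self, x: float) -> bool:
        """Return True if x is an outlier; only non-outliers enter the window."""
        z = self.score(x)
        is_outlier = z is not None and abs(z) > self.threshold
        if not is_outlier:
            self.values.append(x)
        return is_outlier


det = MadOutlierDetector(window=50, min_history=5, min_scale=0.01)
prices = [100.00, 100.01, 100.02, 100.01, 100.00, 100.02, 105.00, 100.01]
flags = [det.update(p) for p in prices]
print(flags)  # [False, False, False, False, False, False, True, False]
```

Flagged values are kept out of the window, so a single bad print does not inflate the scale. However, a genuine and persistent level shift will keep being flagged indefinitely unless the window is reset. This is exactly the over-filtering risk described above.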

The Operational Impact of Data Discrepancies

The consequences of inadequate data cleaning rules for bid-ask and trade tick data extend far beyond backtesting inaccuracies. In a live trading environment, processing erroneous data can lead to real-money losses. A stale ask price might cause a market buy order to execute at a much higher price than anticipated. A crossed spread could trigger a buy signal when a sell is intended, or vice versa. These discrepancies erode confidence in the trading system and necessitate manual intervention, which defeats the purpose of automation. Furthermore, persistent data quality issues complicate post-trade analysis, making it difficult to accurately attribute P&L, evaluate strategy performance, and fulfill regulatory reporting requirements. A robust data cleaning pipeline acts as a critical line of defense, safeguarding capital and maintaining the integrity of the entire trading operation.

- **Inaccurate Backtesting:** Historical simulations generate unrealistic profits or losses, leading to misinformed strategy development.

- **Increased Slippage:** Live orders execute at prices significantly worse than expected due to stale or incorrect bid-ask quotes.

- **False Signals:** Strategy indicators compute erroneous values, triggering trades based on invalid market conditions.

- **Execution Failures:** Attempts to place orders at impossible prices or against invalid market depth can lead to API errors or rejected orders.

- **Misleading P&L Attribution:** Difficulty in precisely calculating profit and loss for specific trades or strategies due to corrupted execution data.