Developing profitable algorithmic trading strategies requires rigorous testing to ensure their robustness and ability to generalize. A critical, yet often misunderstood, component of this process is cross-validation. Traditional cross-validation methods, while effective for independent and identically distributed (i.i.d.) data, fall short when applied to time series because of inherent temporal dependencies. Directly applying techniques like K-fold cross-validation to financial time series can lead to severe look-ahead bias and an overestimation of a strategy’s performance, resulting in significant discrepancies between backtested results and live trading outcomes. For Algovantis users building complex trading systems, understanding and correctly implementing cross-validation methods tailored to time series backtesting is essential for validating strategies effectively and mitigating the risk of deploying overfit models.

The Pitfalls of Naive Cross-Validation in Time Series

Applying standard K-fold or shuffled cross-validation directly to time series data is a common pitfall that often yields misleadingly optimistic performance metrics. The fundamental issue stems from data leakage: these methods allow the model to be trained on data points that chronologically occur after the data points it is being tested on. For instance, if a model trains on market data from 2020-2021 and is then tested on 2019 data, or if it trains on a mixed bag of past and future data, it implicitly gains knowledge of future market states. This look-ahead bias artificially inflates Sharpe ratios and reduces perceived drawdowns during backtesting, creating a false sense of security about a strategy’s predictive power. In a live trading environment, where only historical data is available for training, such a model would predictably underperform, exposing the trading desk to unexpected risks and capital loss.
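To make the leakage concrete, the following minimal sketch builds standard K-fold splits over 1,000 chronologically ordered bars. It uses plain Python, with no backtesting library assumed, and checks whether each fold trains on at least one bar that postdates a test bar. The helper names `kfold_indices` and `uses_future_data` are hypothetical, chosen for illustration.

```python
import random


def kfold_indices(n_samples, n_folds, shuffle=True, seed=0):
    """Yield (train, test) index lists for a standard K-fold split."""
    idx = list(range(n_samples))  # index 0 is the oldest bar
    if shuffle:
        random.Random(seed).shuffle(idx)
    fold_size = n_samples // n_folds
    for k in range(n_folds):
        start = k * fold_size
        stop = n_samples if k == n_folds - 1 else start + fold_size
        test = sorted(idx[start:stop])
        test_set = set(test)
        train = [i for i in range(n_samples) if i not in test_set]
        yield train, test


def uses_future_data(train, test):
    """True if at least one training bar is later than some test bar."""
    return max(train) > min(test)


n_bars = 1000
shuffled = [uses_future_data(tr, te) for tr, te in kfold_indices(n_bars, 5)]
ordered = [uses_future_data(tr, te)
           for tr, te in kfold_indices(n_bars, 5, shuffle=False)]
print(shuffled)  # every fold trains on bars that postdate its test bars
print(ordered)   # [True, True, True, True, False]
```

Even without shuffling, every fold except the last trains on data from after its test block. Keeping the bars in order is therefore not enough; training data must also precede the test data.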

Walk-Forward Optimization: Simulating Live Deployment Realism

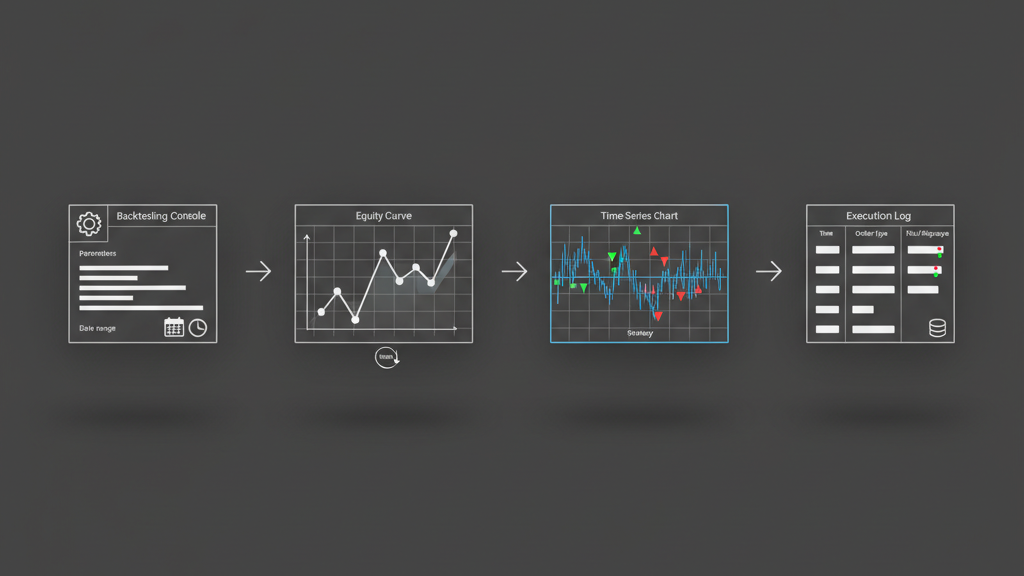

Walk-forward optimization is arguably the most common and practical cross-validation method for time series, as it closely mimics how an algorithmic strategy is developed and deployed in a production environment. The process involves defining an initial training window, optimizing the strategy parameters on this historical data, and then testing the optimized parameters on the subsequent, unseen walk-forward period. After this test period, the training window slides forward to incorporate the new data (or, in the anchored variant, expands to include it), and the process repeats: re-optimization, followed by testing on the next unseen block. This iterative approach helps assess a strategy’s robustness across different market regimes. It also keeps the model’s parameters regularly adapted to evolving market conditions, much like a real-world system that is periodically recalibrated. The lengths of the training and walk-forward periods, together with the re-optimization frequency, are critical parameters. They drive both the computational cost and the strategy’s responsiveness to market changes, and should be chosen carefully based on the strategy’s time horizon and trading frequency.

- Defining appropriate training and validation window sizes to balance data sufficiency and market responsiveness.

- Determining the optimal re-optimization frequency to adapt to new market regimes without overfitting to noise.

- Monitoring parameter stability across walk-forward segments to identify robust versus regime-specific strategies.

- Managing the computational overhead of repeated optimizations, often requiring distributed computing resources.
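The window mechanics described above can be sketched as a split generator. This is a minimal illustration that assumes integer bar indices and fixed-size windows. `walk_forward_splits` is a hypothetical helper, and the commented `optimize`/`evaluate` calls are placeholders for whatever optimization and backtest routines a given system uses.

```python
from typing import Iterator, Tuple


def walk_forward_splits(
    n_samples: int, train_size: int, test_size: int, anchored: bool = False
) -> Iterator[Tuple[range, range]]:
    """Yield (train, test) index ranges for walk-forward validation.

    Rolling mode keeps the training window at `train_size` bars and slides it
    forward by `test_size` bars per step; anchored mode pins the start at bar 0
    so the training window expands with each step.
    """
    train_end = train_size
    while train_end + test_size <= n_samples:
        train_start = 0 if anchored else train_end - train_size
        yield range(train_start, train_end), range(train_end, train_end + test_size)
        train_end += test_size  # advance by one out-of-sample block


# 1,000 bars: optimize on 500, trade the next 100, then re-optimize.
for train, test in walk_forward_splits(1000, train_size=500, test_size=100):
    # params = optimize(data[train.start:train.stop])     # placeholder
    # pnl = evaluate(params, data[test.start:test.stop])  # placeholder
    print(f"train {train.start}-{train.stop - 1} -> test {test.start}-{test.stop - 1}")
```

Setting `anchored=True` keeps the training start fixed at bar 0. This gives each re-optimization more data, at some cost in responsiveness to recent regimes.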

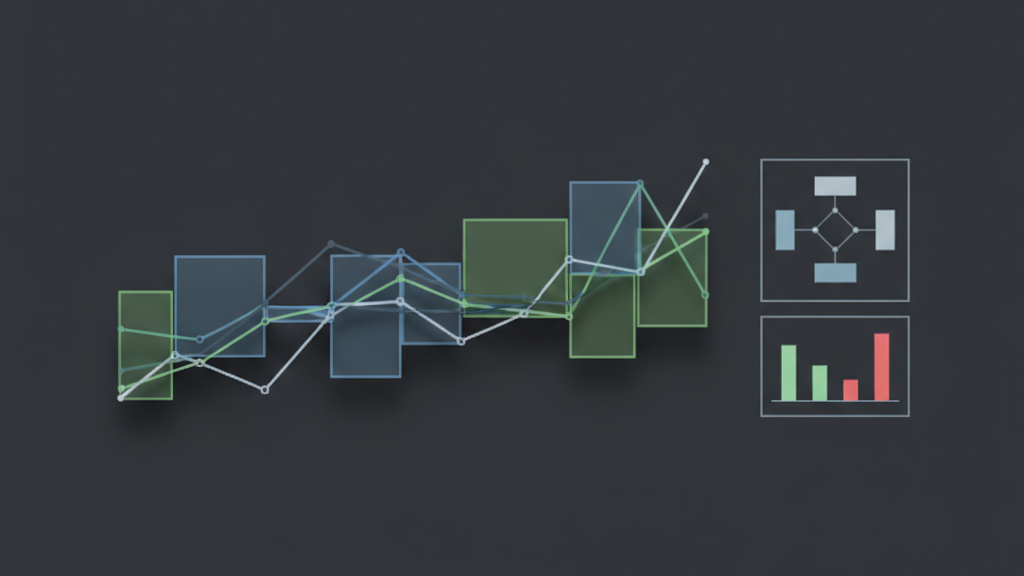

Blocked Cross-Validation: Preserving Temporal Continuity

Blocked cross-validation methods address the issue of temporal dependency by ensuring that the training and validation sets are contiguous blocks of time, preventing any future data from leaking into the past. Unlike random splits, blocked methods explicitly respect the chronological order of observations. A common approach divides the data into continuous blocks so that the training data for any given fold always precedes the validation data. With ‘N’ blocks, you train on blocks 1 through ‘i’ and test on block ‘i+1’. This methodology avoids the direct data leakage seen in naive K-fold CV. A related variant is ‘purged k-fold’. It allows training blocks on either side of the test block but explicitly removes (purges) observations around the boundaries of the training and test sets. This prevents subtler forms of leakage, especially when features are derived from rolling windows or depend on nearby data points. Purging is crucial for avoiding an optimistic bias when assessing the generalization capability of a model that uses time-dependent features.
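A minimal sketch of blocked, forward-chaining splits follows, assuming equal-sized contiguous blocks over integer bar indices. The hypothetical `gap` parameter drops the training bars immediately before each test block, a crude fixed-width stand-in for purging. It is not the full purged k-fold procedure, which also allows training blocks after the test block.

```python
def blocked_forward_chaining(n_samples: int, n_blocks: int, gap: int = 0):
    """Yield (train, test) ranges over contiguous, equal-sized blocks.

    For each block i >= 1 (0-based), train on blocks 0..i-1 and test on
    block i. `gap` removes the last `gap` training bars before each test
    block as a simple fixed-width boundary purge.
    """
    bounds = [round(k * n_samples / n_blocks) for k in range(n_blocks + 1)]
    for i in range(1, n_blocks):
        test = range(bounds[i], bounds[i + 1])
        train = range(0, max(0, bounds[i] - gap))
        yield train, test


for train, test in blocked_forward_chaining(1000, n_blocks=5, gap=20):
    print(f"train {train.start}-{train.stop - 1} -> test {test.start}-{test.stop - 1}")
```

For example, with 1,000 bars, five blocks and `gap=20`, the first split trains on bars 0 to 179 and tests on bars 200 to 399.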

Stationary Bootstrap for Statistical Significance and Model Stability

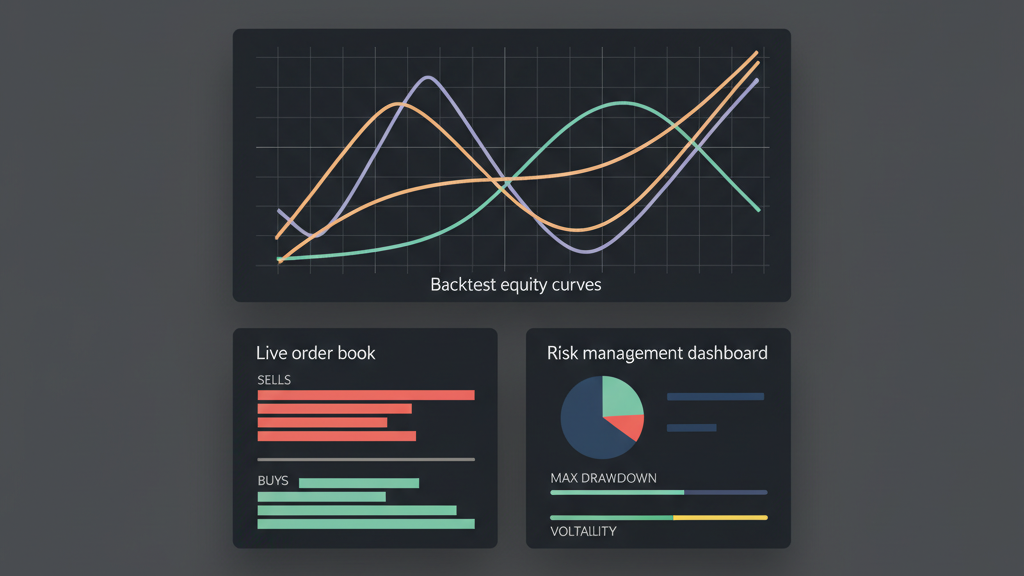

While walk-forward validation assesses robustness over time, the stationary bootstrap method offers a way to evaluate the statistical significance and stability of strategy performance by generating many simulated equity curves. Unlike the standard bootstrap, which assumes i.i.d. observations, the stationary bootstrap resamples blocks of consecutive data points with replacement. Each block’s length is drawn at random from a geometric distribution. This preserves some of the temporal dependence inherent in the original series while still generating a distribution of potential outcomes. By repeatedly resampling and re-evaluating the strategy on these bootstrapped series, we can construct confidence intervals around performance metrics such as the Sharpe ratio or maximum drawdown. This yields a more robust estimate of expected performance and quantifies the uncertainty of our backtest results. Combined with an appropriate null hypothesis (for example, resampling returns that have been demeaned to remove any edge), the same procedure indicates how likely the observed performance is to arise purely by chance or from inherent market structure rather than from a true strategy edge. It’s a computationally intensive method, but invaluable for robust statistical inference.

- Choosing the mean block length (the parameter of the geometric distribution) so that resampled blocks capture the relevant market dynamics.

- Interpreting the distribution of performance metrics (e.g., Sharpe, Sortino) across bootstrap samples.

- Balancing computational cost with the desired number of bootstrap replications for reliable statistics.

- Using bootstrap results to assess the statistical significance of a strategy’s alpha beyond mere historical observation.
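The resampling scheme can be sketched in a few lines, following the stationary bootstrap of Politis and Romano (1994). At each step it starts a new block at a random position with probability `p = 1 / mean_block_len`, which makes block lengths geometric with mean `mean_block_len`. Blocks wrap around the end of the series. As a simplifying assumption, the sketch resamples the strategy’s own per-period returns rather than re-running the strategy on resampled market data. It then computes a percentile interval for an annualized Sharpe ratio, assuming 252 periods per year and a zero risk-free rate. The function names, the default `mean_block_len=20` and the synthetic return series are illustrative choices, not recommendations.

```python
import math
import random


def stationary_bootstrap(returns, mean_block_len, rng):
    """Draw one stationary-bootstrap resample (Politis and Romano, 1994).

    With probability p = 1 / mean_block_len a new block starts at a uniformly
    random index; otherwise the current block continues with the next
    observation, wrapping around the end of the series. Block lengths are
    therefore geometric with mean `mean_block_len`.
    """
    n = len(returns)
    p = 1.0 / mean_block_len
    idx = rng.randrange(n)
    sample = []
    for _ in range(n):
        sample.append(returns[idx])
        if rng.random() < p:
            idx = rng.randrange(n)  # start a new block
        else:
            idx = (idx + 1) % n  # extend the current block circularly
    return sample


def sharpe(returns, periods_per_year=252):
    """Annualized Sharpe ratio, assuming a zero risk-free rate."""
    n = len(returns)
    mu = math.fsum(returns) / n
    var = math.fsum((r - mu) ** 2 for r in returns) / (n - 1)
    return math.sqrt(periods_per_year) * mu / math.sqrt(var) if var > 0 else 0.0


def bootstrap_sharpe_ci(returns, mean_block_len=20, n_boot=1000, alpha=0.05, seed=42):
    """Percentile confidence interval for the Sharpe ratio."""
    rng = random.Random(seed)
    stats = sorted(
        sharpe(stationary_bootstrap(returns, mean_block_len, rng)) for _ in range(n_boot)
    )
    k = int(n_boot * alpha / 2)
    return stats[k], stats[n_boot - 1 - k]


# Synthetic daily returns for illustration only (not market data).
gen = random.Random(0)
daily = [gen.gauss(0.0005, 0.01) for _ in range(1000)]
low, high = bootstrap_sharpe_ci(daily)
print(f"95% CI for annualized Sharpe: [{low:.2f}, {high:.2f}]")
```

Larger values of `mean_block_len` preserve longer-range dependence, at the cost of fewer effectively independent blocks per resample. If the interval straddles zero, the backtested Sharpe ratio is hard to distinguish from noise.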

Mitigating Overlapping Observations and Subtle Data Leakage

Beyond the obvious chronological splits, another subtle but critical source of data leakage in time series backtesting arises from overlapping observations. This is especially common when features are engineered using rolling windows or labels are computed over forward-looking horizons. For instance, if a 20-day moving average is a feature, the value for a test set observation at `t` is computed from data spanning `t-19` to `t`. Similarly, a training sample taken shortly before the test set whose label is a multi-day forward return depends on prices from inside the test period. If the training set contains observations whose look-back or label windows overlap the test period, or observations that are simply too close to it, information can implicitly leak. This issue is often addressed through ‘purging’ and ‘embargoing’. Purging removes training samples whose information windows overlap those of the test samples, so that no training sample shares data with the test period. Embargoing extends this by also removing training samples immediately following the test period, whose rolling-window features and labels may still reflect market reactions to events that occurred during the test period. These techniques are vital for truly independent validation, especially for high-frequency strategies or those relying on heavily smoothed indicators, where even small overlaps can lead to significant biases.
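Purging and embargoing can be sketched for a single contiguous test block. The sketch assumes each sample `i` is observed at bar `i` and labeled with the return over the next `horizon` bars. Training samples whose window `[i, i + horizon]` overlaps the test span (the test samples plus the bars their labels reach into) are purged. The `embargo` samples after that span are dropped as well. The function `purged_train_indices` and its parameters are hypothetical. Setting `embargo` to at least the feature look-back minus one bar (19 for a 20-day moving average) ensures that no retained post-test sample computes its features from bars in the test span.

```python
def purged_train_indices(n_samples, test, horizon, embargo):
    """Return training indices for one contiguous test block after purging and embargo.

    Sample i is observed at bar i and labeled with bars i+1 .. i+horizon, so its
    information window is [i, i + horizon]. The test span runs from the first
    test sample to the last bar any test label touches.
    """
    span_lo = test.start
    span_hi = test.stop - 1 + horizon
    train = []
    for i in range(n_samples):
        if i in test:
            continue
        overlaps = i <= span_hi and i + horizon >= span_lo  # purge
        embargoed = span_hi < i <= span_hi + embargo  # embargo after the span
        if not (overlaps or embargoed):
            train.append(i)
    return train


# Test block: samples 40-59; 5-bar labels; embargo sized for a 20-bar look-back.
train = purged_train_indices(100, range(40, 60), horizon=5, embargo=19)
print(len(train), train[34], train[35])  # 51 34 84
```

With these settings, samples 35 to 39 and 60 to 64 are purged and 65 to 83 are embargoed. That leaves 0 to 34 and 84 to 99 for training.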

Execution Constraints and Performance Evaluation

Implementing these advanced cross-validation methods within an Algovantis system requires careful consideration of execution constraints and a nuanced approach to performance evaluation. For example, during walk-forward optimization, parameter re-optimization must be quick enough to realistically fit within a trading system’s re-calibration cycle. Latency in data processing, slippage during simulated executions, and the frequency of re-training cycles can significantly impact the ‘real’ performance of a strategy compared to an idealized backtest. When evaluating performance across different cross-validation folds, simply averaging metrics can be misleading. Instead, it’s often more informative to look at the distribution of performance metrics (e.g., median Sharpe ratio, worst-case drawdown across all folds) and identify any folds where the strategy performed significantly worse. Such ‘stress test’ scenarios can highlight specific market regimes where the strategy might fail, informing more robust risk management logic and potentially prompting architecture decisions to include adaptive parameter tuning or regime-switching components. The goal is not just to find a profitable strategy but a resilient one that can withstand diverse market conditions without catastrophic failure.

- Integrating cross-validation routines into an automated backtesting and deployment pipeline for continuous strategy validation.

- Developing robust performance evaluation frameworks to interpret results across multiple folds, looking for consistency and outlier behavior.

- Considering computational resource allocation for intensive cross-validation runs, often leveraging parallel processing or cloud infrastructure.

- Using cross-validation results to inform risk limits, position sizing, and stop-loss mechanisms in the live trading system.
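Fold-level evaluation along these lines can be sketched as follows, assuming each fold yields a list of per-period out-of-sample returns. The sketch reports the mean next to the median and worst-case values, and flags folds whose Sharpe ratio falls below a threshold. `summarize_folds` and the `min_sharpe` cutoff are hypothetical illustrations. The Sharpe ratio again assumes 252 periods per year and a zero risk-free rate.

```python
import math
import statistics


def sharpe(returns, periods_per_year=252):
    """Annualized Sharpe ratio, assuming a zero risk-free rate."""
    n = len(returns)
    mu = math.fsum(returns) / n
    var = math.fsum((r - mu) ** 2 for r in returns) / (n - 1)
    return math.sqrt(periods_per_year) * mu / math.sqrt(var) if var > 0 else 0.0


def max_drawdown(returns):
    """Largest peak-to-trough loss of the compounded equity curve, as a fraction."""
    equity = peak = 1.0
    worst = 0.0
    for r in returns:
        equity *= 1.0 + r
        peak = max(peak, equity)
        worst = max(worst, 1.0 - equity / peak)
    return worst


def summarize_folds(fold_returns, min_sharpe=0.0):
    """Summarize out-of-sample results across folds instead of just averaging."""
    sharpes = [sharpe(r) for r in fold_returns]
    drawdowns = [max_drawdown(r) for r in fold_returns]
    return {
        "mean_sharpe": statistics.mean(sharpes),
        "median_sharpe": statistics.median(sharpes),
        "worst_sharpe": min(sharpes),
        "worst_drawdown": max(drawdowns),
        "weak_folds": [i for i, s in enumerate(sharpes) if s < min_sharpe],
    }


# Toy per-period returns for three folds (illustrative numbers, not results).
folds = [
    [0.01, 0.02, 0.01, 0.015],
    [-0.02, -0.01, -0.03, 0.005],
    [0.005, 0.01, -0.002, 0.004],
]
summary = summarize_folds(folds)
print(summary["weak_folds"])  # [1]
```

Two patterns deserve investigation before deployment: a large gap between the mean and median Sharpe, and weak folds concentrated in one period. Both point to regime-specific weakness.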